Alibaba’s latest language model, Qwen 3.5-397B-A17B, challenges assumptions about what open-weight AI can do. It has 397 billion total parameters but activates only 17 billion per token, and Alibaba reports that it outperforms the company’s own trillion-parameter Qwen3-Max on key benchmarks while cutting inference costs. For enterprises weighing proprietary models against self-hosted alternatives, the release signals a turning point: the most capable AI may no longer require the largest models.

The timing couldn’t be more strategic. Released during the Lunar New Year, Qwen 3.5 arrives as IT leaders finalize 2026 AI budgets, offering a direct challenge to the prevailing assumption that cutting-edge performance demands massive, cloud-dependent models. With a fraction of the computational overhead, Qwen 3.5 delivers competitive results against GPT-5.2 and Claude Opus 4.5—raising questions about whether enterprises need to rent or can now own their AI infrastructure.

Alibaba backs the efficiency claim with numbers. It says the model decodes 19 times faster than Qwen3-Max at 256K context lengths, at 60% lower operating cost. That matters for teams managing inference bills, especially compared with Google’s Gemini 3 Pro, which reportedly costs roughly 18 times as much to run.

Architecture: Why Fewer Active Parameters Mean More

Qwen 3.5 builds on Alibaba’s experimental Qwen3-Next but scales its sparse Mixture-of-Experts (MoE) architecture to 512 experts, up from 128 in prior versions. Only a small subset of those experts is activated for each token, so the compute per token is closer to that of a 17B dense model than a 397B one. The result is a large speedup without a matching loss in performance.
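To make the sparse-activation idea concrete, here is a minimal sketch of generic top-k expert routing in plain Python. It is not Alibaba's implementation: the expert count of 512 comes from the article, but `TOP_K`, `DIM`, the toy experts, and every function name are assumptions chosen only for illustration.

```python
import math
import random

NUM_EXPERTS = 512  # expert count cited in the article
TOP_K = 8          # illustrative assumption; the article does not say how many experts fire per token
DIM = 4            # toy hidden size, chosen only to keep the example small


def make_expert(seed):
    """Return a toy 'expert': a fixed random per-dimension scaling."""
    rng = random.Random(seed)
    weights = [rng.uniform(-1.0, 1.0) for _ in range(DIM)]
    return lambda x: [w * v for w, v in zip(weights, x)]


def route(token, router_weights, k=TOP_K):
    """Score every expert, keep the top k, and softmax over just those k."""
    scores = [sum(w * v for w, v in zip(row, token)) for row in router_weights]
    top = sorted(range(len(scores)), key=scores.__getitem__, reverse=True)[:k]
    peak = max(scores[i] for i in top)
    exps = [math.exp(scores[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


def moe_forward(token, experts, router_weights, k=TOP_K):
    """Run only the selected experts and mix their outputs by gate weight."""
    chosen = route(token, router_weights, k)
    out = [0.0] * len(token)
    for idx, gate in chosen:
        expert_out = experts[idx](token)
        out = [o + gate * v for o, v in zip(out, expert_out)]
    return out, [idx for idx, _ in chosen]


rng = random.Random(0)
experts = [make_expert(i) for i in range(NUM_EXPERTS)]
router_weights = [[rng.gauss(0.0, 1.0) for _ in range(DIM)] for _ in range(NUM_EXPERTS)]
token = [0.5, -1.0, 0.25, 2.0]

output, active = moe_forward(token, experts, router_weights)
print(f"experts run for this token: {len(active)} of {NUM_EXPERTS}")
print(f"active parameter share from the article: {17 / 397:.1%}")
```

The point of the sketch is that the other experts are never evaluated for this token, which is why per-token compute tracks the active parameter count (17B of 397B, about 4.3%) rather than the total.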

Two technical upgrades amplify these gains:

- Multi-token prediction: Accelerates pre-training and boosts throughput, a technique adopted by several proprietary models but rarely seen in open-weight releases.

- Enhanced attention mechanism: Inherited from Qwen3-Next, it optimizes memory usage at extreme context lengths (up to 1M tokens in the hosted Plus variant).

The open-weight version handles 256K tokens natively, enough to process entire long documents or extended reasoning chains in a single pass. The 1M-token window is available only in the cloud-hosted Qwen3.5-Plus.

Multimodal by Design, Not an Afterthought

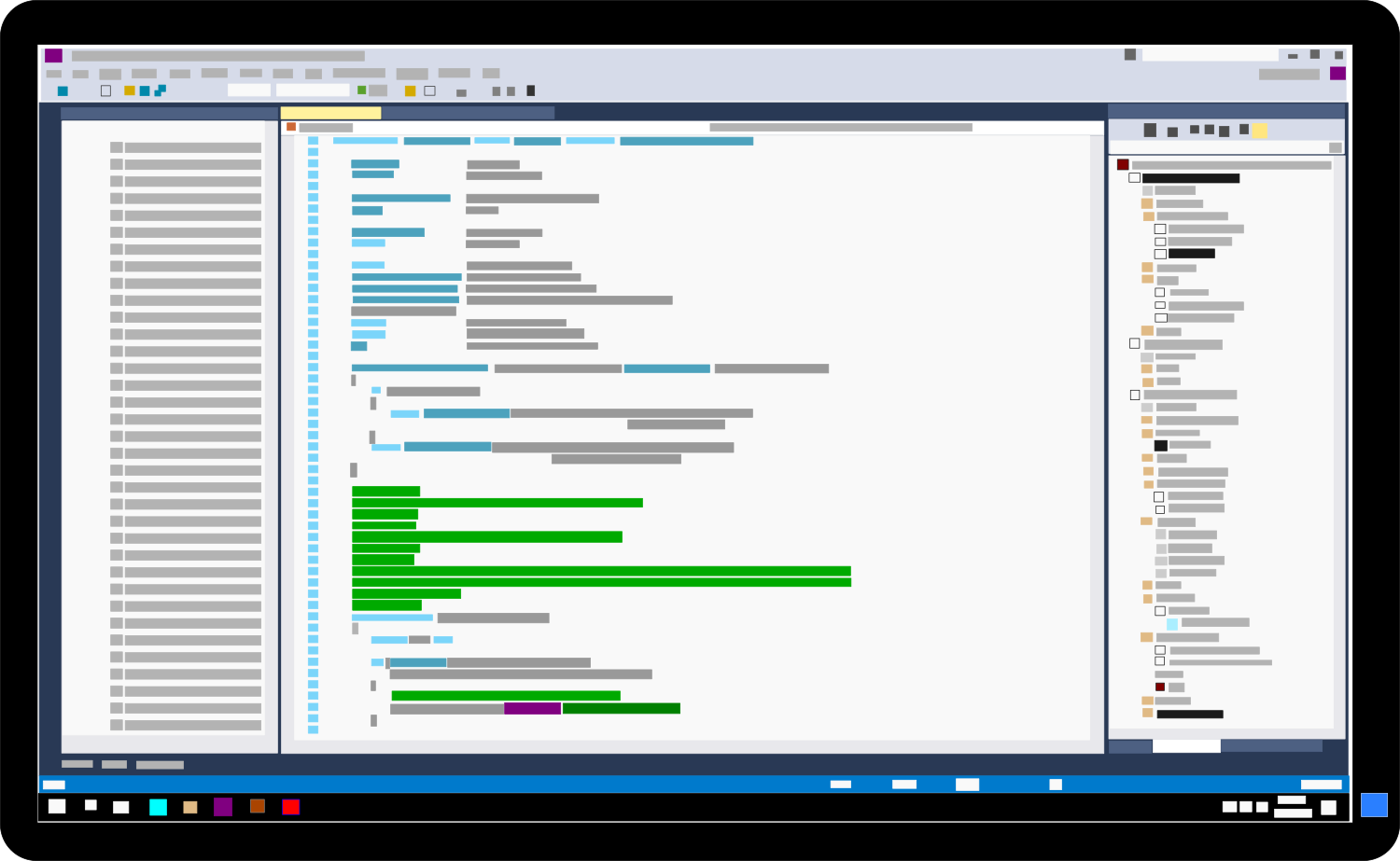

Most multimodal models bolt vision capabilities onto a language backbone. Qwen 3.5 trains from scratch on text, images, and video simultaneously, embedding visual reasoning into its core. This approach shines in tasks requiring tight text-image integration, such as extracting data from technical diagrams or parsing UI screenshots for automation.

Benchmark results reflect this advantage:

- MathVista: 90.3 (leading in visual math reasoning).

- MMMU: 85.0 (multimodal academic benchmark).

- Competitive with GPT-5.2 and Claude Opus 4.5 on coding and general reasoning, despite using far fewer parameters.

While it trails Gemini 3 on some vision-specific tests, Qwen 3.5’s native integration gives it an edge in complex, real-world scenarios where text and visuals must be processed together.

Global Reach and Cost Efficiency

Qwen 3.5 expands language support to 201 languages and dialects, up from 119 in Qwen 3, with a tokenizer vocabulary that now matches Google’s 256K. The larger vocabulary is about efficiency as well as coverage: non-Latin scripts such as Arabic, Japanese, and Hindi are encoded more compactly, reducing token counts by 15–40% depending on the language. For enterprises serving multilingual users, fewer tokens per request translates to faster responses and lower inference costs.
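As a rough illustration of what that range means in practice, the sketch below applies the 15% and 40% bounds to a workload size. The one-million-token baseline and the function name are hypothetical; only the percentage range comes from the article.

```python
def tokens_after_reduction(baseline_tokens: int, reduction_pct: int) -> int:
    """Tokens needed for the same text once the tokenizer is reduction_pct% more compact."""
    return baseline_tokens * (100 - reduction_pct) // 100


BASELINE_TOKENS = 1_000_000  # hypothetical workload under the older tokenizer

for pct in (15, 40):  # low and high ends of the range cited in the article
    remaining = tokens_after_reduction(BASELINE_TOKENS, pct)
    print(f"{pct}% reduction: {remaining:,} tokens for the same text")
```

Where inference is billed or bounded per token, cost and latency for that text would shrink in roughly the same proportion, although the actual saving depends on the language mix of the traffic.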

Agentic Workflows and Open-Source Flexibility

Alibaba positions Qwen 3.5 as an agentic model, designed to execute multi-step tasks autonomously and refined across 15,000 reinforcement-learning (RL) training environments. The open-source Qwen Code CLI lets developers delegate coding tasks in natural language, similar to Anthropic’s Claude Code. Compatibility with OpenClaw, an open-source agentic framework gaining traction, adds further practical value.

The hosted Qwen3.5-Plus variant offers adaptive inference modes: fast for real-time tasks, thinking for complex reasoning, and auto for dynamic optimization. This flexibility is critical for enterprises where a single model must handle everything from customer queries to deep analytics.

Deployment: Power Requirements and Licensing

Running Qwen 3.5 in-house demands serious hardware. The quantized version requires 256GB of RAM (512GB recommended), targeting GPU nodes rather than workstations. This aligns with enterprise inference setups but rules out casual deployment.
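A back-of-the-envelope calculation shows why the requirement lands in that range. The sketch below estimates memory for the weights alone at a few precisions. The bit widths are assumptions, since the article does not say which quantization the 256GB figure refers to, and the estimate ignores the KV cache, activations, and runtime overhead.

```python
TOTAL_PARAMS = 397_000_000_000  # total parameter count from the model name


def weight_memory_gb(params: int, bits_per_weight: int) -> float:
    """Memory for the weights alone, in decimal gigabytes."""
    return params * bits_per_weight / 8 / 1e9


def fits(params: int, bits_per_weight: int, ram_gb: int) -> bool:
    """True if the weights alone fit in ram_gb (no headroom for cache or overhead)."""
    return weight_memory_gb(params, bits_per_weight) <= ram_gb


for bits in (16, 8, 4):  # assumed precisions, for comparison only
    gb = weight_memory_gb(TOTAL_PARAMS, bits)
    print(f"{bits}-bit: {gb:.1f} GB, fits in 256 GB: {fits(TOTAL_PARAMS, bits, 256)}")
```

Under these assumptions, 4-bit weights come to 198.5 GB, which fits within 256GB with some room left for context, while 8-bit weights (397 GB) would need the larger 512GB configuration.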

Licensing simplifies procurement: all open-weight models use Apache 2.0, allowing commercial use, modification, and redistribution without royalties. For legal teams, this eliminates the friction of proprietary restrictions.

This is the first release in the Qwen 3.5 family, with smaller distilled models (likely including an 80B variant) expected soon. If the Qwen3 pattern holds, a tiered lineup—from 600M to 397B—will follow, offering scalability for different use cases.

For IT leaders, the message is that frontier-level AI now demands fewer compromises. Qwen 3.5 delivers multimodal reasoning, long-context processing (256K tokens self-hosted, 1M in the hosted Plus variant), and agentic capabilities in a model that can be self-hosted. The open question is infrastructure readiness.

Qwen 3.5-397B-A17B is available now on Hugging Face under Apache 2.0. The hosted Qwen3.5-Plus variant is accessible via Alibaba Cloud Model Studio, with free public evaluation at chat.qwen.ai.