Data center operators running AI workloads face a growing bottleneck: copper-based interconnects that slow down signal transfer between nodes. AMD is addressing this with a return to its former manufacturing partner, GlobalFoundries, to develop co-packaged optics (CPO) for the Instinct MI500 series of AI accelerators, set to launch in 2027.

CPO uses light instead of electrical signals to move data between chips and nodes, which raises bandwidth and can reduce latency. This isn’t just about speed; it’s also about efficiency. Copper wiring loses signal integrity over distance, forcing data center designers to cluster compute resources tightly. CPO could change that, enabling high-bandwidth communication across multiple racks, or even facilities, with far less of a performance penalty.

AMD’s collaboration with GlobalFoundries isn’t a return to its old silicon manufacturing days. AMD spun off its manufacturing operations as GlobalFoundries in 2009 and fully divested its stake by 2012. Since then, the foundry has focused on advanced packaging and photonics, while AMD shifted to TSMC for leading-edge nodes like 3 nm and 2 nm for its Zen-based CPUs. But with AI demand pushing the limits of copper, both companies see an opportunity in silicon photonics.

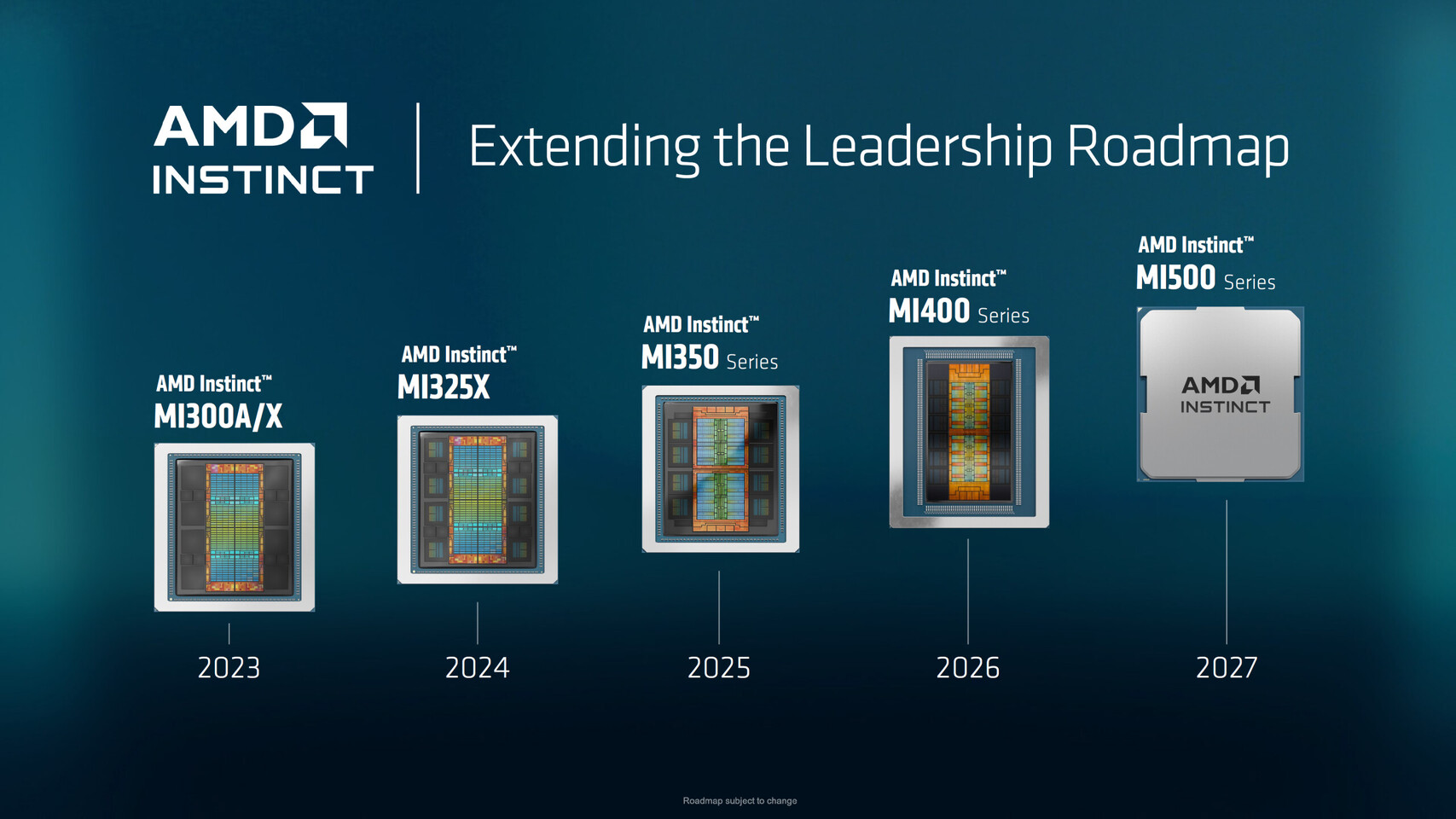

The Instinct MI500 series will build on the MI400 lineup, which targets AI and high-performance computing (HPC) workloads. The addition of CPO is expected to further improve bandwidth while cutting power consumption, a critical factor as data centers scale up. This isn’t AMD’s first foray into photonics: earlier generations like the Instinct MI300 series already incorporated some photonic elements, but the MI500 will take it a step further with full co-packaged optics.

The timing is strategic. NVIDIA has been quietly working on its own CPO solutions for platforms such as Rubin Ultra, a variant in its Vera Rubin generation, signaling that the industry is shifting toward photonics as a standard. AMD’s move suggests it is aiming to set a new benchmark in AI accelerator design rather than simply playing catch-up.

What remains unclear is how widely CPO will be adopted beyond high-end data centers. While the technology promises gains in latency and power efficiency, its cost and scalability could limit widespread use in the near term. For now, AMD’s focus is on delivering a solution in time for the planned 2027 launch, and on showing that even after 14 years apart, this partnership still has the potential to shape the future of AI hardware.