Artificial intelligence has long been framed as a solitary thinker, churning through data in isolation to arrive at answers. But Google’s latest research upends that assumption, demonstrating that the most advanced reasoning models don’t just analyze—they argue. By simulating internal debates among specialized ‘characters’ with opposing viewpoints, these systems achieve higher accuracy in complex tasks than traditional linear reasoning.

The phenomenon, dubbed ‘society of thought,’ emerges organically in models trained via reinforcement learning (RL), such as DeepSeek-R1 and QwQ-32B. Rather than relying on human-crafted prompts that force debate, these models learn to split into distinct personas, such as a ‘Critical Verifier’ or ‘Methodical Problem-Solver,’ without explicit instruction. The result? Fewer errors, deeper exploration of alternatives, and a marked reduction in biases that plague single-perspective reasoning.

For enterprises, the implications are profound. The study suggests that scrubbing training data to eliminate ‘messy’ debates—where incorrect paths are explored—may actually hinder model performance. Meanwhile, prompt engineers could unlock better results by designing interactions that mimic adversarial collaboration, and high-stakes applications may require interfaces that expose these internal deliberations for auditing.

How ‘Society of Thought’ Works

At its core, society of thought mirrors cognitive science theories that human reasoning evolved as a social process. Just as teams solve problems through structured dissent, AI models improve when they simulate conversations between diverse ‘agents’ embedded within a single system. These agents aren’t separate models but emergent roles—such as a ‘Planner’ proposing a solution and a ‘Critical Verifier’ challenging assumptions—operating in tandem.

The effect is clearest in specific examples. In a chemistry synthesis task, DeepSeek-R1 initially proposed a standard reaction pathway. But its internal ‘Critical Verifier,’ characterized by high conscientiousness and low agreeableness, intervened with a counterargument. This friction led to a corrected synthesis path. Similarly, in creative writing tasks, a ‘Semantic Fidelity Checker’ rejected stylistic changes that strayed from the original intent, forcing a compromise that preserved meaning while enhancing fluency.

Even in math puzzles like the ‘Countdown Game’, the model’s reasoning evolved from monologues to dynamic debates. Early attempts relied on linear problem-solving, but as training progressed, two personas emerged: a ‘Methodical Problem-Solver’ executing calculations and an ‘Exploratory Thinker’ interrupting failed attempts with suggestions like ‘Maybe we can try using negative numbers.’ This shift doubled accuracy on complex tasks.

Why Monologues Fail—and How to Fix Them

The study challenges the assumption that longer chains of thought guarantee better results. Instead, diverse behaviors such as verification, backtracking, and dissent drive the improvements. Models trained on monologues underperformed those that naturally developed multi-agent conversations through RL. Likewise, fine-tuning on debate-heavy data (even when the debates included wrong answers) outperformed traditional supervised fine-tuning on clean, linear solutions.

For developers, the takeaway is clear: prompt engineering must go beyond generic instructions. Instead of asking a model to ‘debate itself,’ prompts should assign opposing dispositions, such as a risk-averse auditor versus a growth-oriented strategist, so that the model has to weigh competing positions against each other. Even subtle cues, like steering the model to express ‘surprise,’ can trigger superior deliberation.
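To make the idea of opposing dispositions concrete, here is a minimal Python sketch of a prompt builder. Everything in it is an assumption made for illustration: the `Persona` class, the `build_debate_prompt` helper, the instruction wording, and the sample task are hypothetical and do not come from the study. It only assembles a prompt string; it does not call any model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Persona:
    """A hypothetical role whose disposition shapes how it argues."""
    name: str
    disposition: str


def build_debate_prompt(task: str, personas: list[Persona], rounds: int = 2) -> str:
    """Assemble one prompt asking a single model to argue a task between roles."""
    if len(personas) < 2:
        raise ValueError("a debate needs at least two personas")
    roles = "\n".join(f"- {p.name}: {p.disposition}" for p in personas)
    return (
        f"Task: {task}\n\n"
        "Reason about the task as a conversation between these roles:\n"
        f"{roles}\n\n"
        f"Run {rounds} rounds. In each round, every role must challenge at least "
        "one claim made by another role before adding its own.\n"
        "Finish with a line starting 'Final answer:' that states what the roles "
        "agreed on and what remained in dispute."
    )


# Illustrative task and personas (invented for this example).
prompt = build_debate_prompt(
    "Should we migrate the billing service to a new database this quarter?",
    [
        Persona("Risk-Averse Auditor", "skeptical, looks for failure modes and hidden costs"),
        Persona("Growth-Oriented Strategist", "optimistic, looks for upside and speed"),
    ],
)
print(prompt)
```

The design choice worth noting is that each role gets a disposition and an obligation to challenge another role, rather than a generic instruction to ‘debate.’ Whether this wording actually improves results for a given model would need to be tested.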

Enterprises may also need to rethink data curation. The study found that models trained on ‘messy’ conversational data—where problems were solved iteratively, including failed attempts—learned exploratory habits faster than those trained on sanitized ‘Golden Answers.’ Discarding engineering logs or Slack threads where debates unfolded could deprive models of the very cognitive diversity that enhances performance.
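One way to act on this during curation is to label messy transcripts instead of deleting them. The sketch below is a rough heuristic under stated assumptions: the cue phrases, the `exploration_score` and `tag_records` helpers, and the record layout are all hypothetical, and a real pipeline would need a far more careful signal than keyword matching.

```python
import re

# Hypothetical cue phrases that hint at backtracking or self-correction.
EXPLORATION_CUES = re.compile(
    r"\b(wait|actually|that didn't work|let's try|on second thought|maybe we can)\b",
    re.IGNORECASE,
)


def exploration_score(transcript: str) -> int:
    """Count cue phrases that suggest an attempt was questioned or retried."""
    return len(EXPLORATION_CUES.findall(transcript))


def tag_records(records: list[dict]) -> list[dict]:
    """Keep every record and add a 'style' label rather than dropping messy ones."""
    return [
        {**r, "style": "exploratory" if exploration_score(r["transcript"]) > 0 else "linear"}
        for r in records
    ]


# Invented sample records, standing in for engineering logs or chat threads.
records = [
    {"id": 1, "transcript": "Use 25 * 4 = 100. Wait, that overshoots. Let's try 25 * 3 + 4."},
    {"id": 2, "transcript": "25 * 3 = 75, plus 4 gives 79."},
    {"id": 3, "transcript": "Maybe we can divide first? Not sure."},
]
tagged = tag_records(records)
for r in tagged:
    print(r["id"], r["style"])
```

Labeling rather than filtering leaves the decision about how much exploratory data to include in a training mix to a later, explicit step.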

The Transparency Imperative

In high-stakes applications, trust hinges on visibility. The research argues that users should see the internal dissent behind AI decisions—not just the final answer. This could mean redesigning interfaces to display ‘debate logs’, allowing stakeholders to participate in calibrating outputs. For industries like finance or healthcare, where compliance is critical, open-weight models may offer an advantage over proprietary APIs that obscure reasoning processes.
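As a sketch of what a ‘debate log’ might look like to an interface, the Python below defines a simple record of turns that can be rendered for review. The `Turn` and `DebateLog` classes, the stance labels, and the sample content are all hypothetical; how turns would be extracted from a model’s reasoning trace is left out and would depend on the model and provider.

```python
from dataclasses import dataclass, field


@dataclass
class Turn:
    persona: str  # e.g. "Planner" or "Critical Verifier"
    stance: str   # hypothetical labels: "propose", "object", or "revise"
    text: str


@dataclass
class DebateLog:
    question: str
    turns: list[Turn] = field(default_factory=list)
    final_answer: str = ""

    def objections(self) -> list[Turn]:
        """Return only the turns where a persona pushed back."""
        return [t for t in self.turns if t.stance == "object"]

    def render(self) -> str:
        """Produce a plain-text view an auditor could read top to bottom."""
        lines = [f"Question: {self.question}"]
        lines += [f"[{t.persona} / {t.stance}] {t.text}" for t in self.turns]
        lines.append(f"Final answer: {self.final_answer}")
        return "\n".join(lines)


# Invented example content, loosely modeled on the chemistry scenario above.
log = DebateLog(question="Is pathway A a viable synthesis route?")
log.turns.append(Turn("Planner", "propose", "Use pathway A; it is the standard route."))
log.turns.append(Turn("Critical Verifier", "object", "Pathway A assumes a step that may not hold here."))
log.turns.append(Turn("Planner", "revise", "Switch to pathway B, which avoids that step."))
log.final_answer = "Pathway B"
print(log.render())
```

Keeping objections queryable on their own, as `objections()` does here, is one way a compliance reviewer could jump straight to the points of dissent instead of reading the full trace.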

Proprietary providers currently treat chain-of-thought as a trade secret, but the study suggests this approach may be unsustainable. As enterprises demand auditable reasoning, the value of exposing internal conflicts could prompt a shift toward transparency—either through licensing or open-source alternatives.

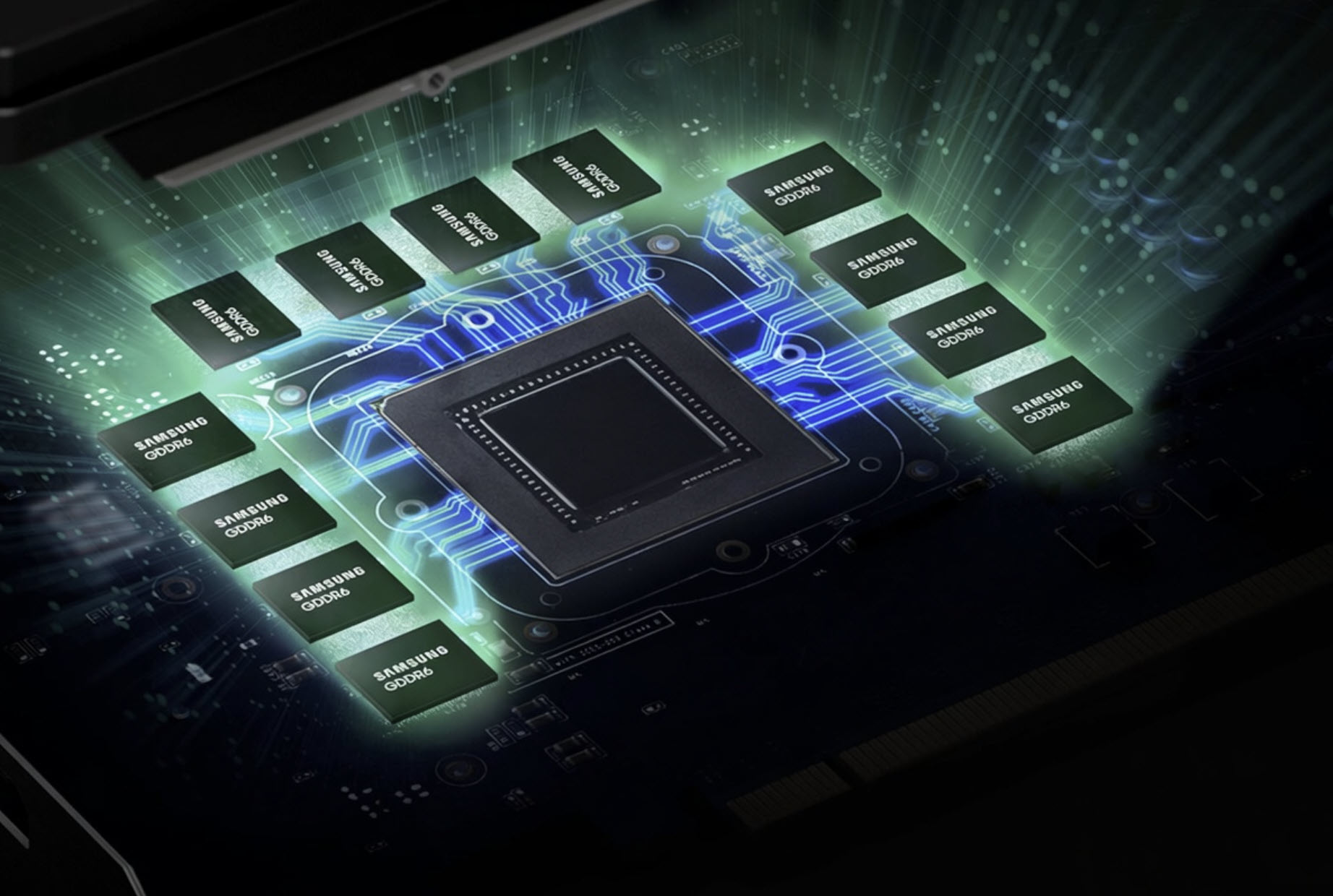

The broader implication? AI architecture is becoming less about raw computation and more about organizational design. Just as human teams thrive on structured dissent, the most capable AI systems may emerge from models that mimic small-group dynamics—debating, verifying, and refining ideas before committing to an answer.

For now, the lesson is simple: the best AI doesn’t just think alone. It argues.