NVIDIA has quietly closed the book on its once-ambitious push into ARM, selling its remaining stake in the chip designer for $140 million. The transaction, detailed in recent SEC filings, caps a strategic retreat from a venture that once promised to reshape the data center with custom ARM-based CPUs. Yet the divestiture also signals a pivot: NVIDIA is now betting heavily on x86 as the foundation for next-generation AI infrastructure.

The sale doesn’t mean ARM is irrelevant. Far from it. NVIDIA’s ARM-based Grace CPU, used in the Grace Hopper superchip, and the upcoming Vera CPU remain critical to its roadmap, particularly for cloud and edge deployments where power efficiency and scalability matter most. But the shift toward x86, evident in NVIDIA’s partnership with Intel, suggests a growing recognition that ARM alone may not deliver the raw single-threaded performance demanded by agentic AI systems.

The ARM Bet: What Went Right—and What Didn’t

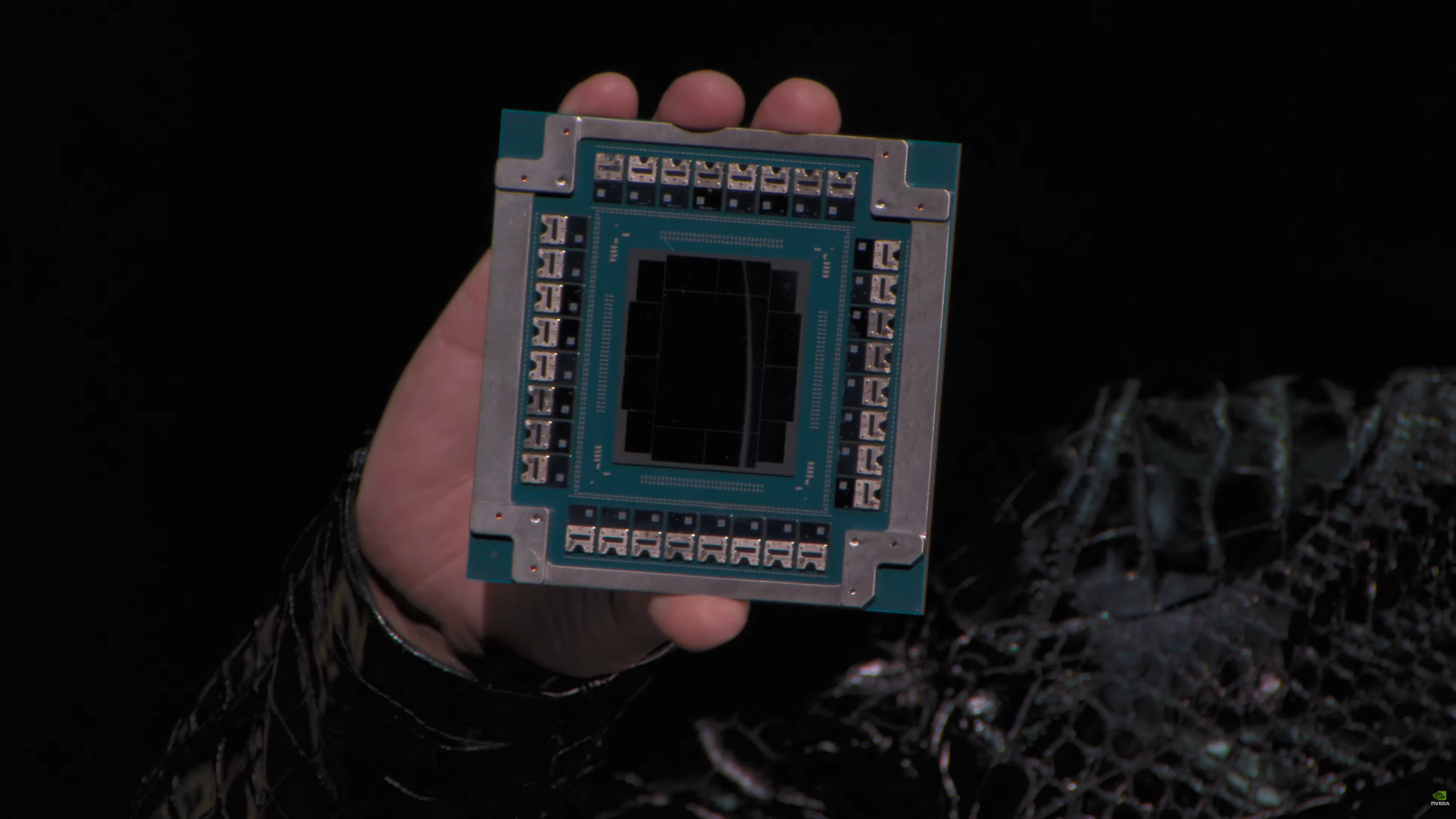

ARM’s architecture has long been the backbone of mobile and embedded devices, but its inroads into the data center have been slower than anticipated. NVIDIA’s investment in ARM was supposed to accelerate that transition, particularly with the Grace CPU and its pairing with the Blackwell GPU. The combo delivered impressive results in multi-threaded workloads, but analysts and hyperscalers have increasingly flagged a critical weakness: ARM’s struggle with single-threaded burst performance.

In agentic AI environments, where millions of microtasks compete for CPU time, even microsecond delays can cascade into bottlenecks. GPUs, designed for parallel workloads, often sit idle while waiting for CPU instructions. x86, with its optimized single-threaded execution, has emerged as the preferred choice for orchestrating these workflows. That’s why Intel and AMD are seeing record demand for their server CPUs: hyperscalers are upgrading racks with more CPU capacity, which expands the total addressable market for server processors.
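To make the idle-GPU argument concrete, here is a back-of-the-envelope sketch in Python. It assumes a deliberately simplified model in which every GPU task must wait for one serial CPU dispatch step, with no overlap or pipelining. The function names and all figures are hypothetical illustrations, not measurements from NVIDIA, Intel, or any hyperscaler.

```python
def gpu_utilization(cpu_dispatch_us: float, gpu_task_us: float) -> float:
    """Fraction of time the GPU is busy under the simplified serial model.

    Assumption: each GPU task (gpu_task_us) starts only after a CPU
    dispatch step (cpu_dispatch_us) finishes, and the two never overlap.
    """
    if cpu_dispatch_us < 0 or gpu_task_us <= 0:
        raise ValueError("dispatch time must be >= 0 and task time must be > 0")
    return gpu_task_us / (cpu_dispatch_us + gpu_task_us)


def total_gpu_idle_seconds(num_tasks: int, cpu_dispatch_us: float) -> float:
    """Total GPU idle time, in seconds, accumulated across num_tasks dispatches."""
    return num_tasks * cpu_dispatch_us / 1_000_000


if __name__ == "__main__":
    # Hypothetical figures: 20 microseconds of GPU work per microtask,
    # compared across two made-up CPU dispatch latencies.
    for dispatch_us in (5.0, 2.0):
        util = gpu_utilization(dispatch_us, 20.0)
        idle = total_gpu_idle_seconds(1_000_000, dispatch_us)
        print(f"dispatch={dispatch_us} us  utilization={util:.1%}  idle={idle:.1f} s")
```

Under these made-up numbers, shaving a few microseconds off each dispatch adds up to seconds of recovered GPU time per million microtasks, which is the cascade effect described above. Real systems overlap CPU and GPU work, so this overstates the penalty; it only shows the direction of the effect.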

Why x86 Is Winning the Agentic AI Game

The technical advantages of x86 in this space are well documented. Beyond raw speed, the ecosystem matters. Most enterprise data centers run on x86 firmware, virtualization stacks, and decades of compiled software. Migrating to ARM would require costly overhauls, something few hyperscalers are willing to undertake during a critical upgrade cycle.

NVIDIA’s partnership with Intel, announced earlier this year, aims to bridge that gap. By integrating an x86-equivalent solution into its NVLink-fused server racks, NVIDIA is effectively hedging its bets. The move doesn’t render ARM obsolete; it acknowledges that x86 may be the safer play for the foreseeable future. For now, Vera CPUs will stick with ARM, but future generations—like the rumored Feynman line—could introduce x86 options.

What This Means for the Future

The $140 million sale is framed as a financial decision, not a strategic reversal. But it underscores a broader industry trend: ARM’s dominance in AI is being challenged on multiple fronts. While NVIDIA continues to refine its ARM-based CPUs, the company’s embrace of x86 reflects a pragmatic acknowledgment of market realities.

For hyperscalers, the message is clear: if you’re building systems for agentic AI, x86 may be the more reliable path, at least for now. ARM still has a role to play, but the race for AI supremacy is no longer a one-horse race. NVIDIA’s bet on diversification could be its best move yet in an increasingly fragmented landscape.