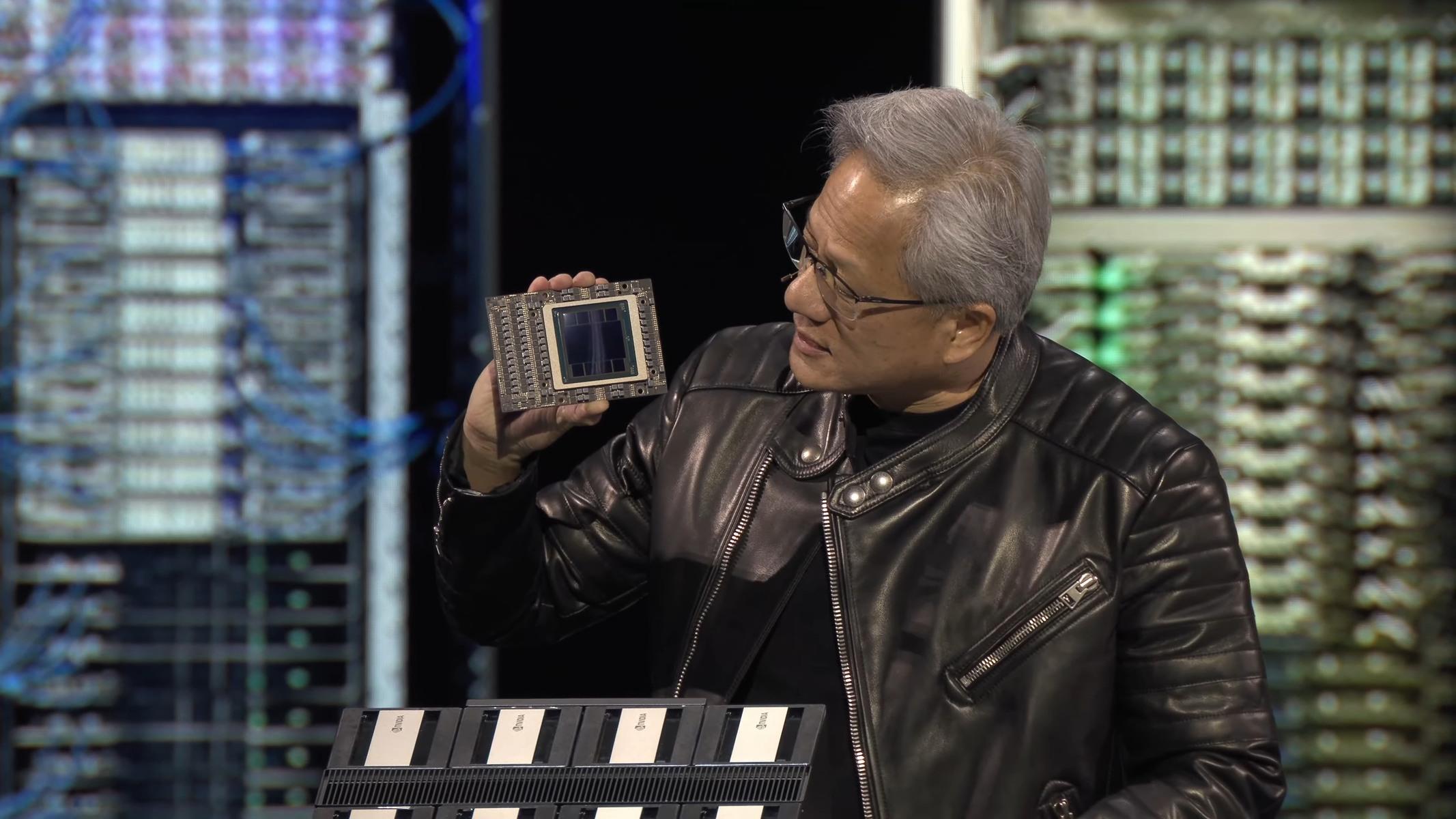

Imagine an AI system that not only processes vast amounts of data but does so with the efficiency of a finely tuned engine. That’s the promise of NVIDIA’s latest Blackwell Ultra architecture, now benchmarked in real-world conditions. The GB300 NVL72, a rack-scale system built around 72 GPUs, has emerged as a game-changer for hyperscalers, with NVIDIA citing a 50x improvement in tokens per watt over the older Hopper generation. This leap isn’t just about raw power; it’s about redefining how AI models handle complex, long-context tasks at a fraction of the cost.

The shift toward agentic AI, where models act as autonomous agents capable of reasoning, planning, and executing tasks, demands infrastructure that can keep pace. Traditional GPU setups struggle with the bandwidth and latency requirements of these workloads, but Blackwell Ultra addresses that with a bold redesign. At its core, the NVL72 configuration links 72 GPUs into a single NVLink fabric, delivering a staggering 130 terabytes per second of aggregate GPU-to-GPU bandwidth. That’s a far cry from Hopper’s 8-GPU NVLink setup, which, while powerful, was never designed for the scale of modern agentic frameworks.
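The 130 TB/s figure is a total across the whole fabric, so it helps to see what it implies per GPU. The short sketch below simply divides the quoted aggregate by the GPU count; the assumption that bandwidth is shared evenly across all 72 GPUs is ours, not something the benchmarks state.

```python
# Figures quoted in the article; the even per-GPU split is an assumption.
AGGREGATE_FABRIC_TBPS = 130.0  # total connectivity quoted for the NVL72 fabric
GPUS_IN_NVL72 = 72             # GPUs joined in one fabric
GPUS_IN_HOPPER_SETUP = 8       # size of the Hopper NVLink setup cited above

# Average share of the fabric available to each GPU.
per_gpu_tbps = AGGREGATE_FABRIC_TBPS / GPUS_IN_NVL72

# How many times more GPUs sit in one fabric compared with the 8-GPU setup.
domain_growth = GPUS_IN_NVL72 / GPUS_IN_HOPPER_SETUP

print(f"Per-GPU share of the fabric: {per_gpu_tbps:.2f} TB/s")
print(f"GPUs per fabric vs. the 8-GPU setup: {domain_growth:.0f}x")
```

Read this way, the headline number works out to roughly 1.8 TB/s per GPU, with nine times as many GPUs able to talk to each other directly.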

NVIDIA’s focus on efficiency is evident in the numbers. The company reports a 35x reduction in the cost per million tokens relative to Hopper when running Blackwell Ultra, making it the preferred choice for labs pushing the boundaries of AI. But the real breakthrough lies in how it handles long-context workloads, the kind of tasks that require agents to maintain state across thousands of tokens. Here, Blackwell Ultra delivers up to 1.5x lower cost per token and twice the speed in attention processing, a critical metric for models that need to reason over extensive inputs.
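To put the 35x figure in concrete terms, here is a minimal sketch that applies a reduction factor to a baseline price. The $7.00 baseline and the one-billion-token monthly volume are hypothetical values chosen for illustration; neither comes from NVIDIA or the benchmarks.

```python
def cost_after_reduction(baseline_usd_per_million: float, reduction_factor: float) -> float:
    """Return the cost per million tokens after an N-fold reduction."""
    if reduction_factor <= 0:
        raise ValueError("reduction_factor must be positive")
    return baseline_usd_per_million / reduction_factor


# Hypothetical baseline, for illustration only (not a published price).
HYPOTHETICAL_BASELINE_USD = 7.00
REPORTED_REDUCTION = 35.0  # the 35x figure cited in the article

new_cost = cost_after_reduction(HYPOTHETICAL_BASELINE_USD, REPORTED_REDUCTION)

# Hypothetical monthly volume: 1 billion tokens = 1,000 units of a million tokens.
millions_of_tokens = 1_000
monthly_saving = millions_of_tokens * (HYPOTHETICAL_BASELINE_USD - new_cost)

print(f"Cost per million tokens: ${new_cost:.2f}")
print(f"Saving on 1B tokens per month: ${monthly_saving:,.2f}")
```

The same function can be reused for the 1.5x long-context figure, provided the baseline is the one that figure was measured against.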

Key Specs: Blackwell Ultra GB300 NVL72

- Architecture: Blackwell Ultra (GB300 NVL72)

- GPU Count: 72 unified via NVLink

- NVLink Fabric Bandwidth: 130 TB/s aggregate (vs. 8-GPU NVLink setup in Hopper)

- Precision Format: NVFP4 (optimized for throughput)

- Token/Watt Efficiency: 50x improvement over Hopper

- Cost per Million Tokens: 35x reduction

- Long-Context Performance: 1.5x lower cost per token, 2x faster attention processing

- Target Workloads: Agentic AI, frontier model inference, hyperscale deployments
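The tokens-per-watt figure in the list above translates directly into energy per token. The sketch below treats "tokens per watt" as tokens per second per watt, which is the same as tokens per joule; that reading, and the baseline of 2 tokens per joule, are assumptions made for illustration rather than measured values.

```python
JOULES_PER_KWH = 3_600_000  # 1 kWh = 3.6 million joules


def kwh_per_million_tokens(tokens_per_joule: float) -> float:
    """Energy in kWh needed to generate one million tokens."""
    if tokens_per_joule <= 0:
        raise ValueError("tokens_per_joule must be positive")
    joules = 1_000_000 / tokens_per_joule
    return joules / JOULES_PER_KWH


# Hypothetical baseline efficiency, for illustration only.
HYPOTHETICAL_BASELINE_TOKENS_PER_JOULE = 2.0
EFFICIENCY_GAIN = 50.0  # the 50x tokens-per-watt figure from the spec list

baseline_kwh = kwh_per_million_tokens(HYPOTHETICAL_BASELINE_TOKENS_PER_JOULE)
improved_kwh = kwh_per_million_tokens(
    HYPOTHETICAL_BASELINE_TOKENS_PER_JOULE * EFFICIENCY_GAIN
)

print(f"Baseline: {baseline_kwh:.4f} kWh per million tokens")
print(f"After 50x gain: {improved_kwh:.4f} kWh per million tokens")
```

Whatever the real baseline is, a 50x gain in tokens per joule means one-fiftieth of the energy for the same output.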

The implications are clear: Blackwell Ultra isn’t just an incremental upgrade; it’s a fundamental rethinking of how AI infrastructure scales. For hyperscalers and research labs, this means lower operational costs, faster iteration cycles, and the ability to deploy models that were previously out of reach due to memory or latency constraints. NVIDIA’s extreme co-design approach, which integrates hardware, software, and algorithms from the ground up, has paid off in spades. And with Vera Rubin, the platform slated to succeed Blackwell, still on the horizon, the company is doubling down on its lead in the AI infrastructure race.

While Blackwell Ultra is already making waves in early hyperscaler deployments, the full impact will become clearer as more benchmarks and real-world use cases emerge. One thing is certain: the bar for AI efficiency has been raised, and NVIDIA’s latest architecture is setting a new standard for what’s possible.