NVIDIA has signaled a significant shift in its GPU roadmap, emphasizing performance per watt and thermals as critical factors for AI workloads. This strategic pivot comes at a time when data centers are increasingly prioritizing lower power consumption without compromising computational performance.

The company's latest announcements provide clarity on some aspects of its future GPU lineup while leaving other details shrouded in uncertainty. A key focus is the balance between raw performance and thermal management, particularly for AI-driven applications where efficiency can directly impact operational costs.

Performance and Thermals

The new generation of GPUs aims to redefine the relationship between power consumption and computational output. NVIDIA's engineers are pushing boundaries in both hardware design and software optimization to ensure that these advancements translate into tangible benefits for end-users, particularly those running large-scale AI models.

- Performance: Up to 10% improvement in single-precision (FP32) performance compared to the previous generation, with a focus on AI workloads such as matrix multiplication and other deep learning operations.

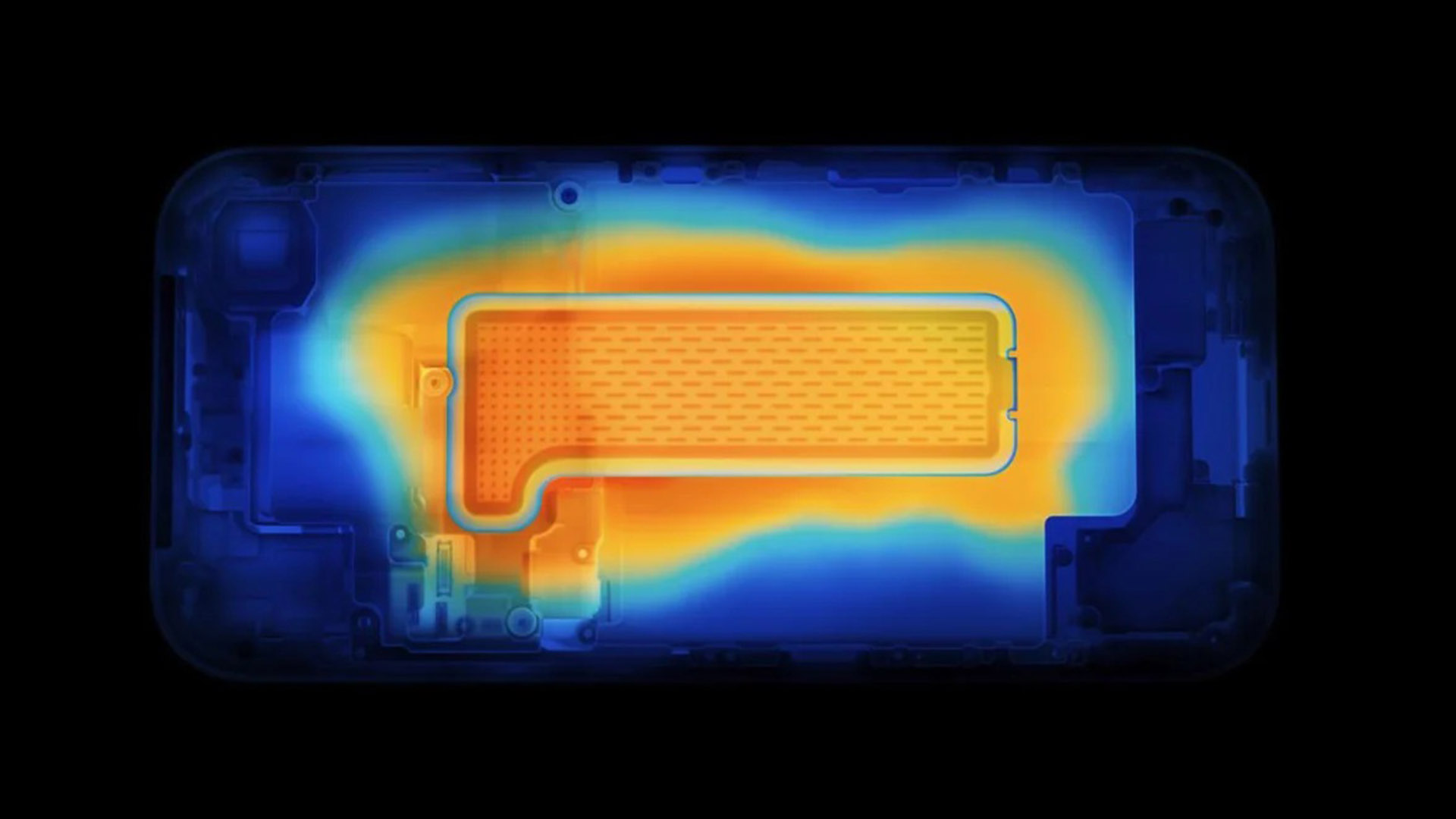

- Thermals: A new thermal design that reduces power consumption by up to 20% while maintaining high performance levels. This is achieved through advanced cooling solutions and more efficient chip layouts.

These improvements are not just about raw numbers; they reflect a broader industry trend toward sustainable computing. Data centers, which account for a meaningful and growing share of global electricity use, stand to benefit greatly from such innovations. For organizations running AI workloads, this means lower energy costs and reduced environmental impact without sacrificing performance.
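Taken together, the two headline figures imply a larger gain in performance per watt than either number suggests on its own. The sketch below works through that arithmetic, assuming both best-case claims (up to 10% more performance, up to 20% less power) hold at the same time, which the announcement does not confirm. The function name `perf_per_watt_gain` is illustrative only.

```python
def perf_per_watt_gain(perf_gain: float, power_reduction: float) -> float:
    """Relative change in performance per watt.

    perf_gain: fractional throughput increase (0.10 means +10%).
    power_reduction: fractional power decrease (0.20 means -20%).
    """
    if not 0 <= power_reduction < 1:
        raise ValueError("power_reduction must be in [0, 1)")
    # New perf/watt divided by old perf/watt, minus 1 to get the relative change.
    return (1 + perf_gain) / (1 - power_reduction) - 1


# Best-case figures quoted in the article; both are "up to" claims, not measurements.
gain = perf_per_watt_gain(perf_gain=0.10, power_reduction=0.20)
print(f"Performance per watt: {gain:+.1%}")
```

Under these assumptions the ratio is 1.10 / 0.80 = 1.375, or roughly a 37.5% improvement in performance per watt. Real-world results would depend on the workload and on whether both gains are achievable simultaneously.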

Key Specifications

- Architecture: New architecture codenamed 'Blackwell', which succeeds NVIDIA's Hopper and Ada Lovelace designs (themselves successors to Ampere) and adds transistors for AI acceleration.

- Memory: Support for up to 48GB of GDDR6X memory, enabling larger batch sizes and more complex models without significant slowdowns.

- Storage: Integrated high-speed NVMe storage options for faster data loading and reduced latency in AI training pipelines.

- Power Consumption: Targets a 200 W TDP, with dynamic power scaling that adjusts performance to workload demands.
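To put the 48GB memory figure in context, a rough upper bound on model size can be estimated from bytes per parameter. This is a back-of-the-envelope sketch only: it counts weights alone, treats 1 GB as 10^9 bytes, and ignores activations, optimizer state, and framework overhead, all of which reduce the usable capacity in practice. The helper name and the `weight_fraction` parameter are hypothetical.

```python
# Storage cost of one parameter in common numeric formats.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def max_params_billions(memory_gb: float, dtype: str,
                        weight_fraction: float = 1.0) -> float:
    """Upper bound on parameters (in billions) whose weights fit in memory.

    weight_fraction is the share of memory reserved for weights; the rest
    is left for activations and other buffers (an assumed knob).
    """
    usable_bytes = memory_gb * 1e9 * weight_fraction
    return usable_bytes / BYTES_PER_PARAM[dtype] / 1e9


for dtype in BYTES_PER_PARAM:
    print(f"{dtype}: ~{max_params_billions(48, dtype):.0f}B parameters")
```

With all 48GB devoted to weights, this gives ceilings of about 12 billion parameters in FP32, 24 billion in FP16, and 48 billion in INT8. Training workloads would fit far less, since gradients and optimizer state take several times the memory of the weights themselves.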

The Blackwell architecture is designed to be a bridge between today's high-performance GPUs and the next generation of AI-optimized hardware. While it builds on proven technologies, its focus on efficiency and thermals sets it apart from previous iterations. This approach could make it particularly attractive for edge devices and smaller data centers where power constraints are more stringent.

Future-Proofing and Buyer Considerations

For buyers, the question remains: is this the right time to invest in NVIDIA's new GPUs? The answer depends on several factors, including the specific use case, power infrastructure, and long-term cost savings. Organizations with existing AI workloads may find that the performance-per-watt improvements offer immediate benefits, while those planning future projects might need to weigh the trade-offs between current needs and future scalability.

Consider a mid-sized data center running natural language processing tasks. If the claimed figures hold, such a setup could reduce its GPU power consumption by up to 20% at comparable performance, which translates directly into lower electricity bills. This is a tangible benefit that could tip the scales for many decision-makers.
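The scale of those savings can be estimated with simple arithmetic. The sketch below uses the 200W TDP target and the best-case 20% reduction from the article; the fleet size (500 GPUs) and electricity price ($0.10 per kWh) are hypothetical placeholders, and the calculation treats TDP as continuous power draw, which overstates real consumption. Cooling and host-system overhead are not included.

```python
HOURS_PER_YEAR = 24 * 365


def annual_energy_cost(gpu_count: int, watts_per_gpu: float,
                       price_per_kwh: float, utilization: float = 1.0) -> float:
    """Yearly electricity cost for the GPUs alone (no cooling or host overhead)."""
    kwh = gpu_count * watts_per_gpu / 1000 * HOURS_PER_YEAR * utilization
    return kwh * price_per_kwh


# Hypothetical inputs, not from NVIDIA: fleet size and electricity price.
GPU_COUNT = 500
PRICE_PER_KWH = 0.10  # USD

NEW_WATTS = 200.0       # TDP target quoted in the article
POWER_REDUCTION = 0.20  # best-case "up to 20%" claim
# Baseline implied by the two figures above: 200 W / 0.8 = 250 W.
OLD_WATTS = NEW_WATTS / (1 - POWER_REDUCTION)

old_cost = annual_energy_cost(GPU_COUNT, OLD_WATTS, PRICE_PER_KWH)
new_cost = annual_energy_cost(GPU_COUNT, NEW_WATTS, PRICE_PER_KWH)
savings = old_cost - new_cost
print(f"Old: ${old_cost:,.0f}  New: ${new_cost:,.0f}  Saved: ${savings:,.0f}/year")
```

Under these assumptions the fleet's GPU electricity bill falls from about $109,500 to $87,600 a year, a saving of roughly $21,900. Substituting a site's own GPU count, tariff, and measured utilization gives a more realistic figure.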

What's Next?

The roadmap also hints at further innovations on the horizon, including advancements in memory bandwidth and thermal management technologies. However, some details remain unclear, particularly around software support and ecosystem integration. NVIDIA will need to address these areas to ensure seamless adoption across industries.

In summary, NVIDIA's latest moves signal a maturing of its GPU strategy, with a clear focus on efficiency and sustainability. While the full picture is still emerging, the potential for significant cost savings and performance gains in AI workloads makes this an exciting development for the industry. The next few months will be critical in determining how these innovations shape the future of high-performance computing.