NVIDIA’s next major hardware announcement is shaping up to be one of its boldest in years. The company’s CEO has hinted that the upcoming GTC 2026 will showcase chips that defy conventional expectations, possibly building on its recent Vera Rubin AI platform or introducing an entirely new architecture. The stakes are high: if these rumors hold, the reveal could mark a turning point in how AI workloads are processed, with potential ripple effects across data centers, cloud providers, and even edge computing.

The context is critical. NVIDIA has spent the last two years refining its AI infrastructure strategy, shifting from pre-training dominance (Hopper, Blackwell) to inference optimization (Grace Blackwell Ultra, Vera Rubin). Now, with SRAM-focused designs and possible 3D-stacking partnerships (such as one involving Groq’s LPUs), rumors suggest the company is preparing to push boundaries further. The question isn’t just *what* will be unveiled, but *how* it will redefine the limits of compute.

The Vera Rubin Foundation and Beyond

At CES 2026, NVIDIA unveiled the Vera Rubin AI lineup, a suite of six custom chips designed for real-time AI inference. The lineup included Vera CPUs and Rubin GPUs, optimized for low-latency, high-bandwidth workloads. While details on Rubin’s full capabilities remain under wraps, whispers in the industry suggest NVIDIA may expand this architecture with Rubin CPX—a chip explicitly engineered for AI-centric data centers. If true, this could mean a more integrated approach to acceleration, where CPU and GPU tasks are handled by a single, unified silicon solution.

But the bigger bet may lie elsewhere. The Feynman architecture, NVIDIA’s next-generation SRAM-centric design, has long been framed as a revolutionary leap. Unlike traditional GPUs, Feynman is rumored to prioritize on-chip memory bandwidth and 3D stacking, potentially integrating language processing units (LPUs) from partners like Groq. If NVIDIA unveils Feynman at GTC, it could signal a departure from conventional GPU scaling in favor of a more specialized, memory-optimized approach to AI inference.

Why This Matters: The Shift from Pre-Training to Inference

The AI market has evolved rapidly. A few years ago, the focus was on training massive models—hence the dominance of Hopper and Blackwell in supercomputing. Today, the bottleneck has shifted to inference: serving AI responses in real time with minimal latency. This is where Vera Rubin and, potentially, Feynman come into play.

- Latency reduction: Rubin’s architecture is said to cut processing delays by leveraging tighter CPU-GPU integration, crucial for applications like autonomous systems and real-time translation.

- SRAM innovation: Feynman’s rumored SRAM-heavy design could redefine how data is accessed, reducing the need for off-chip memory and improving efficiency.

- 3D-stacking partnerships: If NVIDIA integrates LPUs from Groq or other partners, it could create a new class of hybrid accelerators, blending GPU power with specialized logic.
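
To see why on-chip memory bandwidth matters for inference, a rough back-of-envelope sketch helps. It assumes token-by-token decoding is memory-bound, so each generated token requires reading every model weight once, and per-token latency cannot drop below weight size divided by memory bandwidth. The model size and both bandwidth figures are hypothetical placeholders chosen for illustration, not specifications of Rubin, Feynman, or any Groq part.

```python
def decode_time_per_token_ms(weight_bytes: float, bandwidth_bytes_per_s: float) -> float:
    """Lower bound on per-token decode latency, in milliseconds.

    Assumes decoding is memory-bound: every weight is read once per token,
    and compute time is ignored.
    """
    return weight_bytes / bandwidth_bytes_per_s * 1e3


# Hypothetical model: 8 billion parameters stored at 1 byte each.
WEIGHT_BYTES = 8e9

# Hypothetical bandwidths, for illustration only (not real product specs).
OFF_CHIP_BW = 4e12   # 4 TB/s, an off-chip memory tier
ON_CHIP_BW = 40e12   # 40 TB/s, an aggregate on-chip SRAM tier

off_chip_ms = decode_time_per_token_ms(WEIGHT_BYTES, OFF_CHIP_BW)
on_chip_ms = decode_time_per_token_ms(WEIGHT_BYTES, ON_CHIP_BW)
speedup = off_chip_ms / on_chip_ms

print(f"off-chip bound: {off_chip_ms:.2f} ms/token")
print(f"on-chip bound:  {on_chip_ms:.2f} ms/token")
print(f"ratio:          {speedup:.1f}x")
```

Under these assumed numbers, the latency floor falls in direct proportion to the bandwidth increase. Real systems also contend with limited SRAM capacity, batching, and compute limits, so this is only a first-order picture.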

For NVIDIA, this isn’t just about hardware—it’s about controlling the entire AI stack. The company has already secured partnerships with cloud providers, data center operators, and even edge device manufacturers. A Feynman or Rubin-derived reveal at GTC could solidify its position as the default choice for AI infrastructure, much like it did with CUDA for GPUs.

What to Expect at GTC 2026

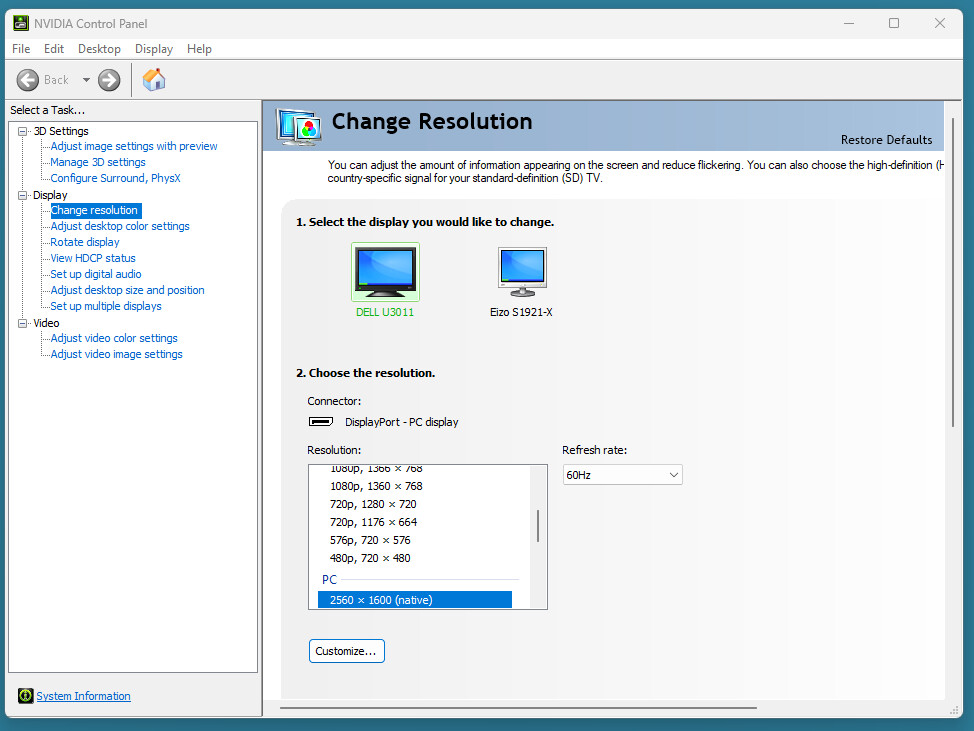

GTC 2026 kicks off on March 15th in San Jose, California, with CEO Jensen Huang’s keynote expected to set the tone. While NVIDIA has not confirmed specifics, industry insiders are speculating about a few possibilities:

- A next-gen Rubin variant (e.g., Rubin CPX) optimized for cloud-scale inference, with tighter CPU-GPU integration.

- The Feynman architecture, potentially featuring 3D-stacking and LPU partnerships, aimed at ultra-low-latency AI workloads.

- Expanded software ecosystem integrations, such as new versions of TensorRT or CUDA optimizations for Rubin/Feynman.

- Announcements around energy efficiency, given the focus on SRAM and on-chip memory bandwidth.

One thing is clear: NVIDIA is betting heavily on inference as the next frontier. If the rumors hold, GTC 2026 won’t just introduce new chips—it could redefine the entire landscape of AI acceleration.

The event will also likely highlight NVIDIA’s broader strategy in AI, which extends beyond chips. The company has framed AI as an industry, not just a technology, encompassing energy, semiconductors, and cloud infrastructure. By controlling the hardware layer, NVIDIA ensures dominance across the stack—from training to deployment. For competitors and partners alike, the implications are significant: those who adapt to Rubin or Feynman will shape the future of AI; those who don’t may find themselves playing catch-up.

As the countdown begins, whatever NVIDIA unveils in March is likely to influence the industry’s trajectory.