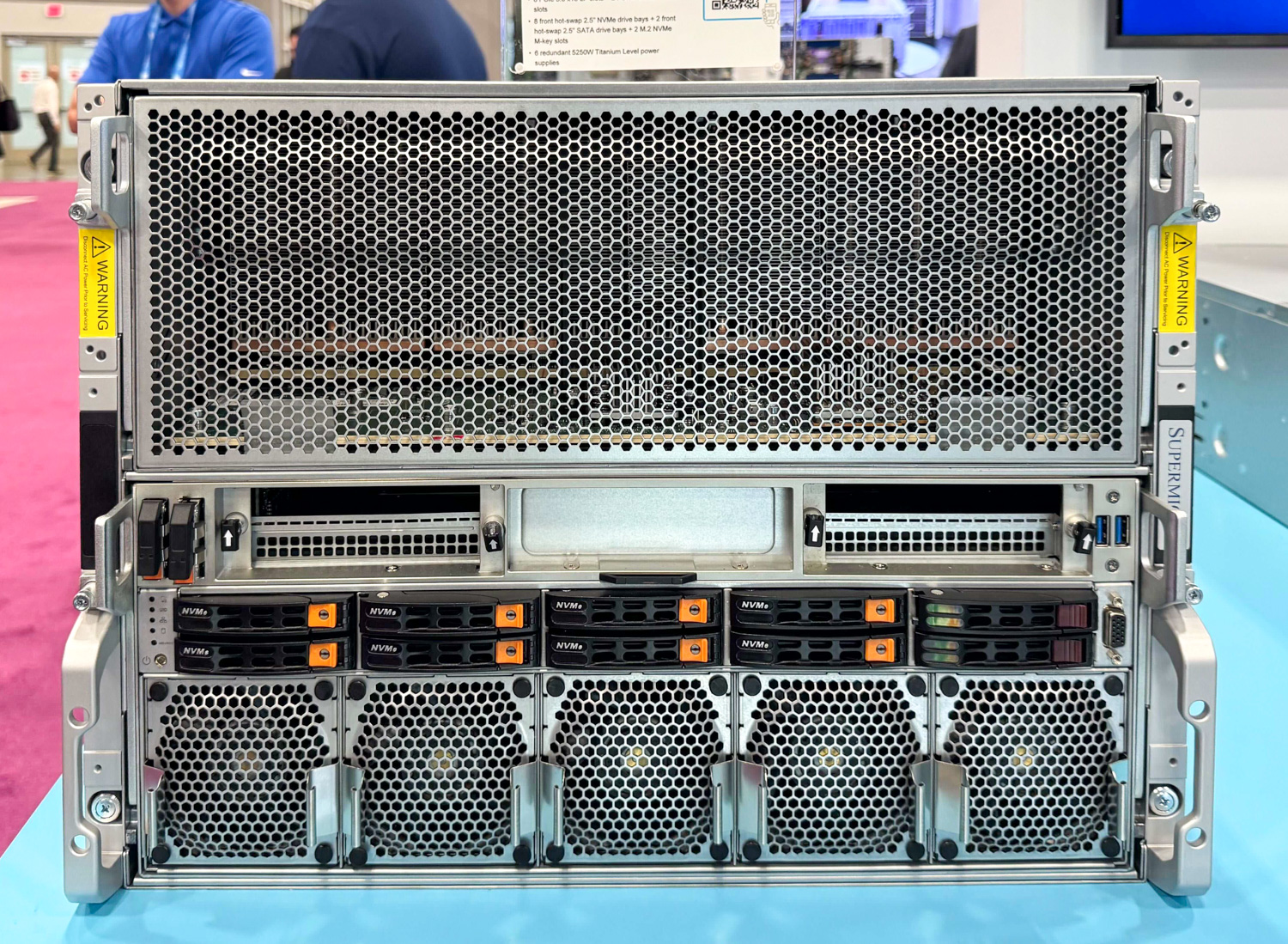

The demand for scalable AI workloads has pushed data center operators to seek hardware that can deliver both raw performance and efficiency without compromising on flexibility. The Supermicro H14 JumpStart platform, featuring AMD's Instinct MI350X GPU, addresses this need with a specialized architecture designed specifically for AI tasks. Unlike general-purpose GPUs, the MI350X is engineered to minimize bottlenecks in AI inference and training, offering a performance-per-watt advantage that traditional solutions struggle to match.

The Architecture of Efficiency

At the heart of the H14 JumpStart lies its dual-socket server platform, which supports up to four Instinct MI350X GPUs per node. Each GPU delivers 2 petaFLOPS of AI-optimized compute power, coupled with 128 GB of HBM2e memory—a significant leap from previous generations. This high memory capacity enables larger batch sizes in AI training and inference, reducing the overhead associated with data sharding and improving overall throughput.

Unified Memory Architecture: A Shift in Paradigm

A standout feature of the MI350X is AMD's unified memory architecture (UMA), which treats GPU and CPU memory as a single, coherent pool. This design choice eliminates latency spikes that occur when workloads alternate between compute-intensive and memory-bound phases, such as in hybrid AI models. While this approach offers performance benefits for specialized AI tasks, it introduces tradeoffs for users with mixed workloads who rely on traditional GPU-CPU separation.

- Dual-socket server platform supporting up to four Instinct MI350X GPUs per node

- 2 petaFLOPS of AI-optimized performance per GPU

- 128 GB HBM2e memory per GPU, enabling larger batch sizes and reduced data sharding overhead

- Unified memory architecture (UMA) for lower latency in hybrid workloads

- Supermicro's proprietary software stack for streamlined deployment and management

Performance Considerations and Tradeoffs

The MI350X's specialization comes with inherent limitations. While it excels in AI-centric tasks, its versatility is constrained compared to general-purpose GPUs like the NVIDIA H100 or AMD Radeon Instinct MI250X. Organizations with diverse workloads may find themselves limited to AI-focused use cases, which could pose challenges if their computational needs evolve over time. Additionally, the platform's high memory capacity, while beneficial for large-scale deployments, represents a premium cost that may not be justified in environments where cost per petaFLOP is a critical factor.

Data Center-Ready with Software Integration

Supermicro's H14 JumpStart is built with data center reliability in mind. The platform includes a proprietary software stack designed to simplify deployment, management, and maintenance—critical factors in environments where downtime is unacceptable. This integration extends beyond hardware configuration, offering tools for workload optimization and resource allocation, which can significantly improve operational efficiency.

Implications for AI Infrastructure

The H14 JumpStart platform is positioned as a high-performance solution for organizations focused on AI inference or training. Its combination of high memory bandwidth, unified architecture, and data center-ready form factor makes it a strong contender in the growing market for AI-optimized infrastructure. However, users must carefully weigh the benefits of specialization against the potential loss of flexibility.

Where Specialization Meets Strategy

The engineering tradeoffs in the H14 JumpStart—prioritizing memory efficiency and unified architecture—could redefine how organizations approach large-scale AI deployments. For those willing to commit to an AI-centric infrastructure, this platform offers a compelling alternative to traditional GPU-based solutions. The key lies in aligning the platform's strengths with specific use cases while being mindful of its limitations.

In summary, the Supermicro H14 JumpStart with AMD's Instinct MI350X represents a significant advancement in AI-optimized data center solutions. Its focus on performance, memory efficiency, and software integration positions it as a viable option for organizations seeking to push the boundaries of large-scale AI workloads. As the demand for specialized AI infrastructure continues to grow, platforms like this will play an increasingly important role in shaping the future of high-performance computing.