The promise of AI-powered document understanding has been one of the most hyped narratives in enterprise tech over the past year. Yet in fields where precision isn’t just preferred but mandatory—engineering, aerospace, or pharmaceuticals—Retrieval-Augmented Generation (RAG) systems have delivered underwhelming results. The core problem isn’t a lack of model sophistication, but a fundamental oversight: these systems treat technical documents as undifferentiated text, ignoring the very structure that makes them usable.

When RAG was first introduced, its ability to pull relevant passages from vast document repositories seemed revolutionary. But in practice, the technology has stumbled when faced with the complexity of real-world technical manuals. A query about a safety specification in a 300-page PDF might return a header without its corresponding data, or worse, a fabricated response that bears no relation to the original text. The issue isn’t the AI’s intelligence—it’s the brute-force approach to document processing that treats tables, diagrams, and hierarchical relationships as afterthoughts.

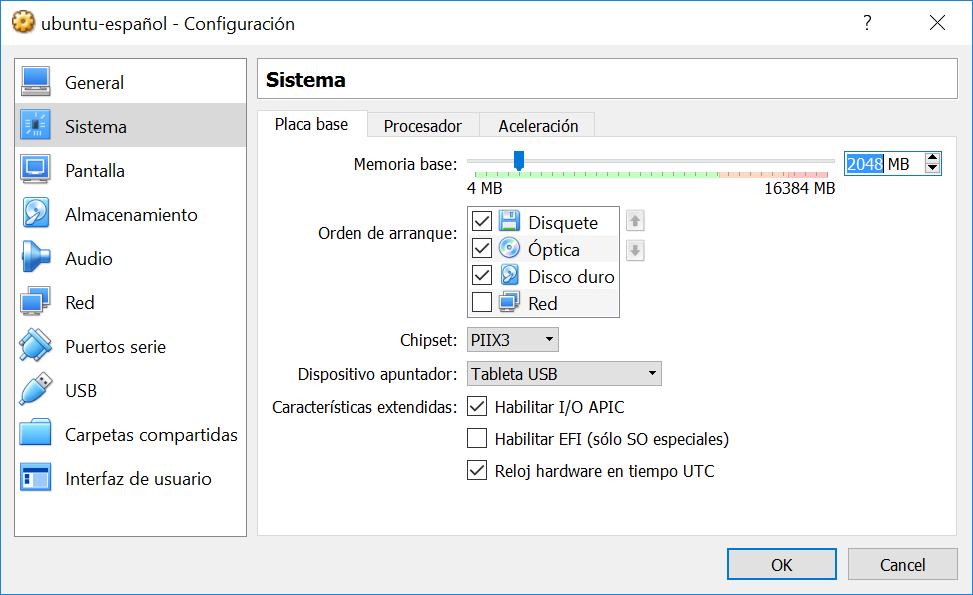

Why standard RAG systems fail with technical documents:

- Documents are split into fixed-size chunks (e.g., 500 characters), breaking apart tables, severing headers from data rows, and flattening hierarchical relationships.
- Visual data (flowcharts, schematics, and diagrams) is ignored entirely, leaving 30-50% of critical information inaccessible.
- Query results lack traceability, forcing users to manually verify answers in source documents, a process that defeats the purpose of automation.
- Even when answers are technically correct, the lack of contextual presentation erodes trust in high-stakes environments.
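The first failure mode is easy to reproduce. The snippet below is a minimal sketch, not taken from any particular RAG library: the table contents and the 40-character chunk size are made up for illustration (the size is kept small so the effect shows on a three-line table). It checks whether any single fixed-size chunk still holds both the table header and the complete voltage row.

```python
def fixed_size_chunks(text: str, size: int) -> list[str]:
    """Split text into consecutive chunks of at most `size` characters."""
    return [text[i:i + size] for i in range(0, len(text), size)]


# Hypothetical spec table, flattened to plain text as a naive extractor might emit it.
table_text = (
    "Parameter | Min | Max | Unit\n"
    "Operating voltage | 3.0 | 5.5 | V\n"
    "Operating temperature | -40 | 85 | C\n"
)

chunks = fixed_size_chunks(table_text, size=40)

header = "Parameter | Min | Max | Unit"
voltage_row = "Operating voltage | 3.0 | 5.5 | V"

# Is there any chunk in which the header and the full voltage row appear together?
intact = any(header in c and voltage_row in c for c in chunks)
print(intact)  # False: the chunk boundary falls in the middle of the voltage row
```

With these inputs the row is cut after "Operating v", so a retriever that matches the first chunk hands the model a header and half a row label, with no values attached.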

The solution lies in two interconnected advancements: semantic chunking and multimodal processing. Semantic chunking replaces arbitrary text segmentation with structure-aware extraction, so that a query about a system’s maximum operating voltage retrieves the complete table row together with its header, not a fragment of either. Early adopters report retrieval accuracy improvements of up to 40% for tabular data when using these methods, which removes much of the ‘hallucination trigger’ that fragmented context creates.
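One way to picture structure-aware extraction is shown below. This is a sketch under stated assumptions: it presumes an upstream layout parser has already produced typed blocks, and the block dictionary format, the `Chunk` class, and `semantic_chunks` are hypothetical names invented for this example. The idea illustrated is that each table row becomes its own chunk with the column headers repeated inside it, and every chunk carries its nearest section heading.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    kind: str     # "paragraph" or "table_row"
    text: str     # the text that would be embedded
    section: str  # nearest preceding heading, kept to preserve hierarchy


def semantic_chunks(blocks: list[dict]) -> list[Chunk]:
    """Turn parsed layout blocks into chunks that follow document structure."""
    chunks: list[Chunk] = []
    section = ""
    for block in blocks:
        if block["type"] == "heading":
            section = block["text"]
        elif block["type"] == "paragraph":
            chunks.append(Chunk("paragraph", block["text"], section))
        elif block["type"] == "table":
            header = block["header"]
            for row in block["rows"]:
                # Pair every cell with its column name so the row is self-describing.
                text = ", ".join(f"{h}: {v}" for h, v in zip(header, row))
                chunks.append(Chunk("table_row", text, section))
    return chunks


# Hypothetical parser output for one section of a manual.
blocks = [
    {"type": "heading", "text": "4.2 Electrical limits"},
    {"type": "paragraph", "text": "Limits apply at ambient temperature."},
    {"type": "table",
     "header": ["Parameter", "Min", "Max", "Unit"],
     "rows": [["Operating voltage", "3.0", "5.5", "V"],
              ["Operating temperature", "-40", "85", "C"]]},
]

chunks = semantic_chunks(blocks)
for chunk in chunks:
    print(chunk)
```

Repeating the header in every row costs some tokens, but it means a retrieved row can be read without the rest of the table.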

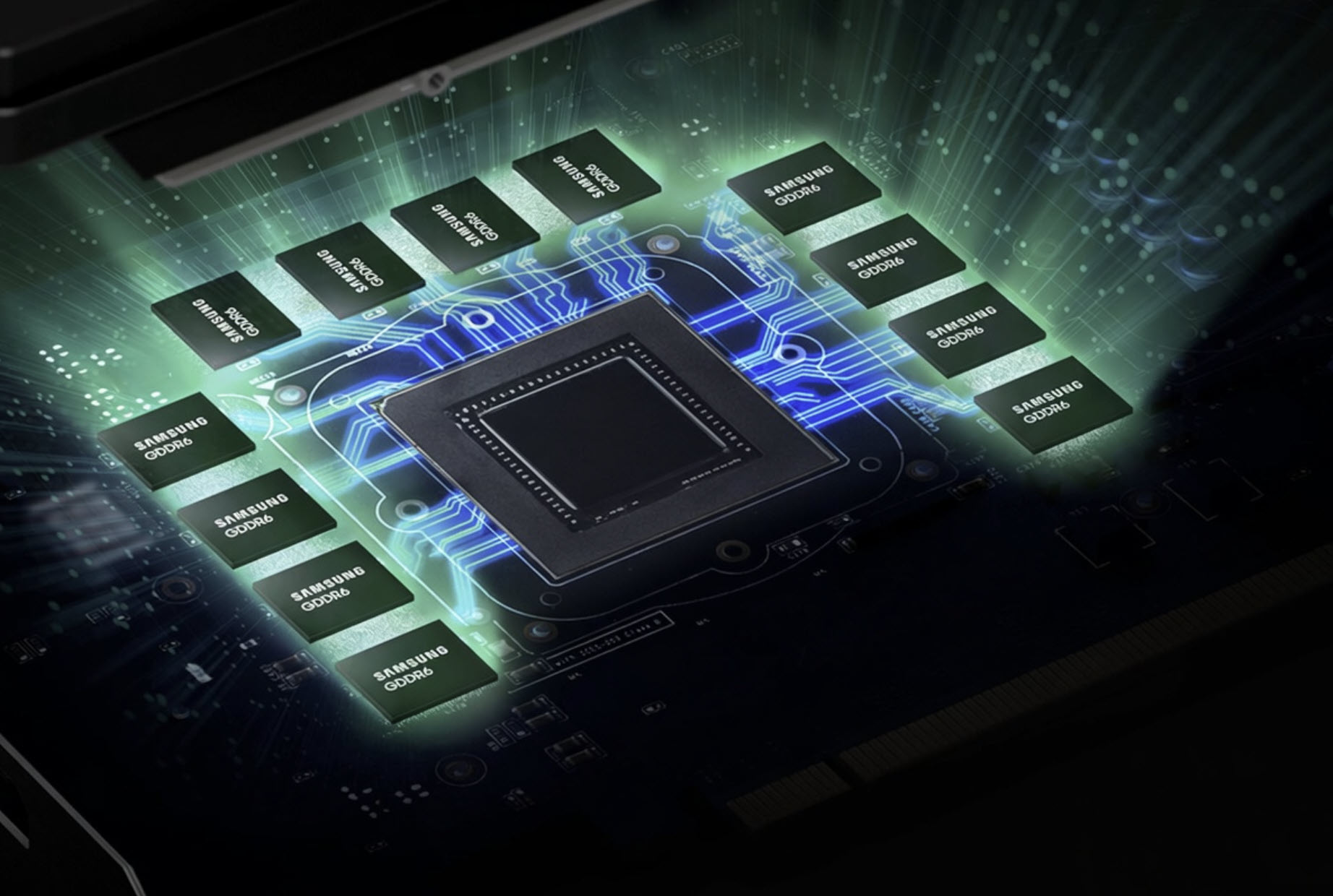

But the real breakthrough comes when RAG systems begin to process visual data. Most technical documents contain critical information in diagrams, flowcharts, or schematics—yet traditional RAG pipelines treat these as binary blobs. New preprocessing techniques combine optical character recognition (OCR) with vision-language models to extract metadata from images. For example, a temperature vs. pressure chart might be analyzed to generate a searchable description: ‘Process C requires 80°C minimum to maintain stability.’ This metadata is then embedded alongside the original image, enabling vector search to match queries even when the answer is visual.
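The indexing step described above might look roughly like the following. Everything here is a stand-in: `describe_image` returns canned text where a real pipeline would call OCR and a vision-language model, the file paths are invented, and `embed` is a toy bag-of-words vector, not a learned embedding. The sketch only shows the shape of the idea: the generated description is what gets searched, and the hit points back to the image.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class ImageRecord:
    image_path: str   # original image, returned to the user on a match
    description: str  # generated text that is actually indexed


def describe_image(image_path: str) -> str:
    """Placeholder for OCR + vision-language captioning (assumption: canned output)."""
    canned = {
        "figures/temp_pressure.png": (
            "Temperature vs pressure chart. "
            "Process C requires 80°C minimum to maintain stability."
        ),
        "figures/pump_wiring.png": (
            "Wiring schematic for the pump controller showing relay K1 and fuse F2."
        ),
    }
    return canned[image_path]


def embed(text: str) -> Counter:
    """Toy embedding: lowercase token counts. A real system would use a learned model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def search(query: str, records: list[ImageRecord]) -> ImageRecord:
    """Return the image whose description is most similar to the query."""
    q = embed(query)
    return max(records, key=lambda r: cosine(q, embed(r.description)))


paths = ["figures/temp_pressure.png", "figures/pump_wiring.png"]
records = [ImageRecord(p, describe_image(p)) for p in paths]

best = search("minimum temperature for process C", records)
print(best.image_path)
```

In a production setup the description and the image reference would be stored in a vector database, and the quality of the match would depend almost entirely on how faithful the generated description is.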

Trust is the final hurdle. Current RAG interfaces often cite a source filename without context, leaving users to manually hunt through documents to verify answers. The fix is an evidence-based user interface that highlights the exact table cell or diagram used to generate a response. If the system cites a voltage limit, the user sees the corresponding table cell rendered in context. This transparency turns skepticism into operational confidence—a prerequisite for deployments in industries where mistakes can have catastrophic consequences.
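For such an interface to work, each answer has to carry a pointer that is more precise than a filename. The sketch below assumes a hypothetical `Evidence` record with page, table, row, and column fields, plus a plain-text renderer that marks the cited cell with double brackets. The field names, the file name, and the bracket convention are all illustrative choices, not an established format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Evidence:
    document: str  # source file
    page: int      # page on which the table appears
    table_id: str  # identifier assigned during preprocessing
    row: int       # zero-based index into the table's data rows
    column: str    # column header of the cited cell


def render_with_highlight(header: list[str], rows: list[list[str]],
                          ev: Evidence) -> str:
    """Render the table as text, wrapping the cited cell in [[...]]."""
    col_idx = header.index(ev.column)
    lines = [" | ".join(header)]
    for r, row in enumerate(rows):
        cells = [
            f"[[{cell}]]" if (r == ev.row and c == col_idx) else cell
            for c, cell in enumerate(row)
        ]
        lines.append(" | ".join(cells))
    return "\n".join(lines)


# Hypothetical table and citation for a voltage-limit answer.
header = ["Parameter", "Min", "Max", "Unit"]
rows = [["Operating voltage", "3.0", "5.5", "V"],
        ["Operating temperature", "-40", "85", "C"]]
ev = Evidence("pump_manual.pdf", page=112, table_id="table-4.2", row=0, column="Max")

rendered = render_with_highlight(header, rows, ev)
print(f"Source: {ev.document}, p. {ev.page}, {ev.table_id}")
print(rendered)
```

A graphical interface would replace the brackets with a visual highlight on the rendered page, but the underlying record is the same: the answer is only as verifiable as the coordinates stored with it.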

The architecture is evolving rapidly. Native multimodal embeddings, now offered by some embedding models, reduce the need for intermediate captioning by mapping text and images directly into a shared vector space. Meanwhile, advancements in long-context language models may soon render chunking obsolete, allowing entire technical manuals to be processed as single inputs. Yet today’s bottleneck remains preprocessing: without semantic and multimodal foundations, even the most advanced models will struggle to extract meaningful insights from technical documents.

The gap between RAG demos and real-world performance isn’t about model size—it’s about whether the system respects the data’s inherent structure. By moving beyond flat text processing and unlocking the logic embedded in technical documents, enterprises can transform their PDF archives into true knowledge assets. The question isn’t whether RAG will work for technical fields—it’s whether organizations will adopt the preprocessing methods that make it reliable. The time to act is now, before the limitations of current implementations become a competitive liability.