Most discussions about AI agents focus on the wrong thing. The debate rages over model architectures, GPU acceleration, and cloud scaling—yet the critical infrastructure enabling these agents to function remains conspicuously overlooked.

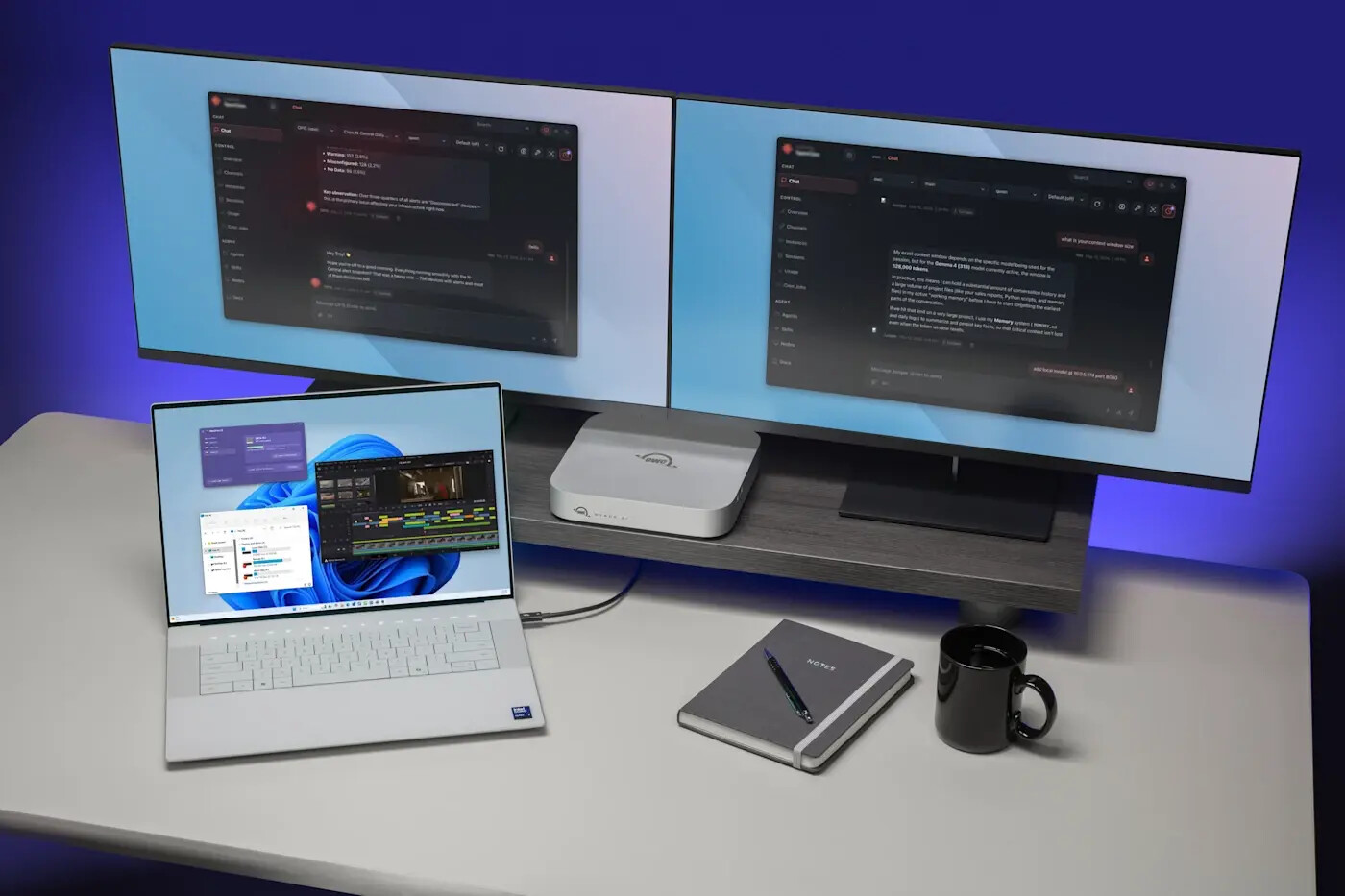

Agents, like any intelligent system, require more than computational power. They need **operational senses**—the ability to perceive, interpret, and act on real-world data in real time. Without them, even the most advanced AI becomes a high-performance black box, making decisions based on outdated, fragmented, or incomplete information.

This isn’t a theoretical concern. It’s a design flaw with immediate consequences. Organizations deploying agents without robust data infrastructure risk:

- Automated systems that oscillate between paralysis and overreaction due to contradictory data streams.

- Critical workflows that stall mid-execution because agents lack visibility into system dependencies.

- Post-incident investigations that devolve into data archaeology, as teams scramble to reconstruct events from fragmented logs.

The problem isn’t just technical—it’s strategic. The velocity of agentic AI doesn’t mask data gaps; it exposes them at machine speed. What once were minor inefficiencies in analytics become existential risks when agents operate autonomously.

Three Senses Agents Can’t Do Without

For AI agents to function in enterprise environments, they require three core sensory capabilities, each more critical than the last:

- Real-time operational awareness: Continuous, high-velocity streams of telemetry, logs, and metrics across applications, infrastructure, and security tools. An agent detecting a security anomaly must see the event unfolding now, not hours later.

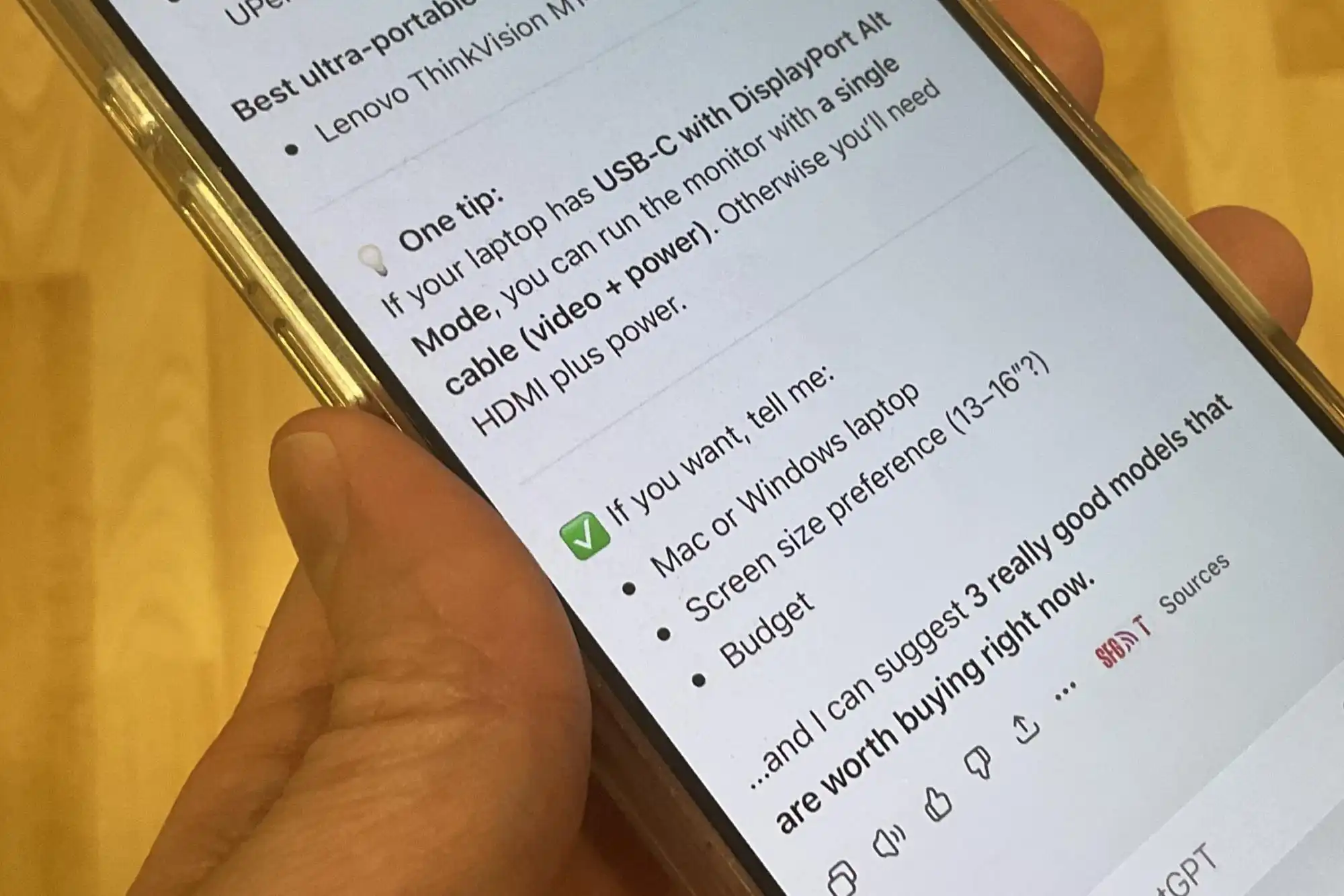

- Contextual understanding: The ability to correlate disparate data points instantly. A login failure is meaningless in isolation, but paired with unusual network traffic and a recent configuration change, it becomes strong evidence of a breach.

- Historical memory: Access to baselines, patterns, and anomalies over time. Agents must recognize what ‘normal’ looks like to distinguish between routine fluctuations and genuine incidents requiring intervention.
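The last two senses can be illustrated with very little machinery. The following is a minimal Python sketch, not a prescribed design: it assumes a hypothetical `Event` record, hypothetical signal names (`login_failure`, `network_spike`, `config_change`), and an arbitrarily chosen five-minute window. `is_anomalous` stands in for historical memory using a simple z-score against a baseline (one common technique among many), and `correlate` stands in for contextual understanding by flagging a host only when all three signals from the login-failure example co-occur within the window.

```python
from dataclasses import dataclass
from statistics import mean, stdev


@dataclass(frozen=True)
class Event:
    """Hypothetical telemetry event; field names are illustrative."""
    kind: str         # e.g. "login_failure", "network_spike", "config_change"
    host: str
    timestamp: float  # seconds since epoch


def is_anomalous(history: list[float], value: float, threshold: float = 3.0) -> bool:
    """Historical memory: flag values more than `threshold` standard deviations
    away from the baseline formed by `history` (a simple z-score test)."""
    if len(history) < 2:
        return False  # not enough history to define "normal"
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return value != mu  # flat baseline: any deviation is unusual
    return abs(value - mu) / sigma > threshold


# Assumption: this combination of signals warrants escalation when seen together.
SUSPICIOUS_COMBO = {"login_failure", "network_spike", "config_change"}


def correlate(events: list[Event], window_seconds: float = 300.0) -> set[str]:
    """Contextual understanding: return hosts where every signal in
    SUSPICIOUS_COMBO occurs within a single time window."""
    by_host: dict[str, list[Event]] = {}
    for e in events:
        by_host.setdefault(e.host, []).append(e)

    flagged: set[str] = set()
    for host, host_events in by_host.items():
        host_events.sort(key=lambda e: e.timestamp)
        # Slide a window starting at each event and collect the signal kinds inside it.
        for i, start in enumerate(host_events):
            kinds = {
                e.kind
                for e in host_events[i:]
                if e.timestamp - start.timestamp <= window_seconds
            }
            if SUSPICIOUS_COMBO <= kinds:
                flagged.add(host)
                break
    return flagged


if __name__ == "__main__":
    events = [
        Event("login_failure", "db-01", 1000.0),
        Event("network_spike", "db-01", 1100.0),
        Event("config_change", "db-01", 1200.0),
        Event("login_failure", "web-02", 1000.0),  # isolated signal
    ]
    print(correlate(events))                               # {'db-01'}
    print(is_anomalous([100, 102, 98, 101, 99], 150))      # True
    print(is_anomalous([100, 102, 98, 101, 99], 101))      # False
```

A lone signal never trips `correlate`, which mirrors the point that a login failure means little in isolation. A real system would tune thresholds and windows per signal rather than hard-code them.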

Without these, agents are flying blind. The analogy to self-driving cars is apt: even the most advanced AI can’t navigate without sensors. Enterprise agents face the same constraint, and the consequences of poor data visibility range from failed automation to systemic outages.

The Hidden Cost of Data Debt

Most organizations already have this infrastructure problem. Data silos, inconsistent formats, and legacy monitoring tools create a ‘data debt’ that has festered for years. In traditional analytics, these issues slow down insights. In agentic environments, they paralyze operations.

Consider the ripple effects:

- Inconsistent decisions: Agents receive conflicting signals from fragmented data sources, leading to erratic behavior—sometimes doing nothing, other times triggering unnecessary failovers.

- Stalled automation: Workflows break when agents lack visibility into system dependencies or ownership, leaving critical gaps in execution.

- Manual recovery: When failures occur, teams spend days reconstructing events because there’s no clear lineage to explain the agent’s actions.

The issue isn’t just technical—it’s cultural. Organizations have treated data as a byproduct of operations for too long. But in the agentic era, data becomes the raw material of decision-making. The cost of neglect isn’t just slower insights—it’s the collapse of autonomous systems under their own weight.

How Leaders Are Building for the Agentic Future

The organizations succeeding in this transition aren’t the ones with the most agents or the fanciest models. They’re the ones treating **operational data as infrastructure**, as critical as power, networking, or security. Their approach centers on four pillars:

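- Unified data at scale: Consolidating disparate monitoring tools into a single operational data platform capable of handling petabyte-level datasets without proportional cost increases. Efficiency comes from tiering (keeping recent, frequently queried data on fast storage and moving older data to cheaper tiers), federation (querying data where it lives instead of copying it), and AI-driven automation.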

- Built-in context: Data must arrive pre-correlated, with embedded relationships between systems, dependencies, and business impact. This reduces the cognitive load on agents and accelerates decision-making.

- Traceable lineage: Every agent decision must be auditable, with a clear record of the data that informed it. This isn’t just for debugging—it’s for compliance, trust, and accountability in autonomous systems.

- Open interoperability: Agents won’t operate in walled gardens. They need to sense across multi-cloud, hybrid, and on-premises environments, which depends on open standards and API-based integrations.
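Traceable lineage is the most concrete of these pillars. As a minimal sketch, assuming an in-memory store and JSON-serializable inputs, the hypothetical `DecisionLog` below records each agent action together with a snapshot of the data that informed it, and links records into a SHA-256 hash chain so that editing any earlier entry is detectable at verification time. The class, field, and action names are illustrative, not any product’s API.

```python
import copy
import hashlib
import json
import time
from dataclasses import dataclass, field


@dataclass
class DecisionRecord:
    """Hypothetical audit entry linking one agent action to the data behind it."""
    agent_id: str
    action: str
    inputs: list       # snapshot of the data points the agent consulted
    prev_digest: str   # digest of the previous record ("" for the first one)
    timestamp: float = field(default_factory=time.time)
    digest: str = ""


def compute_digest(rec: DecisionRecord) -> str:
    """SHA-256 over the record's content plus the previous digest (a hash chain)."""
    payload = json.dumps(
        {"agent_id": rec.agent_id, "action": rec.action, "inputs": rec.inputs,
         "prev": rec.prev_digest, "ts": rec.timestamp},
        sort_keys=True, separators=(",", ":"),  # canonical form for stable hashing
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class DecisionLog:
    """Append-only, in-memory lineage log; a real system would persist it durably."""

    def __init__(self) -> None:
        self.records: list[DecisionRecord] = []

    def record(self, agent_id: str, action: str, inputs: list) -> DecisionRecord:
        prev = self.records[-1].digest if self.records else ""
        # Deep-copy so later changes to the caller's data don't alter the audit trail.
        rec = DecisionRecord(agent_id, action, copy.deepcopy(inputs), prev)
        rec.digest = compute_digest(rec)
        self.records.append(rec)
        return rec

    def verify(self) -> bool:
        """Recompute every digest; editing any stored record breaks the chain."""
        prev = ""
        for rec in self.records:
            if rec.prev_digest != prev or compute_digest(rec) != rec.digest:
                return False
            prev = rec.digest
        return True

    def explain(self, action: str) -> list:
        """Return the inputs behind each occurrence of an action (e.g. post-incident)."""
        return [rec.inputs for rec in self.records if rec.action == action]


if __name__ == "__main__":
    log = DecisionLog()
    log.record("remediator-1", "failover:db-01", [{"metric": "error_rate", "value": 0.42}])
    log.record("remediator-1", "page_oncall", [{"alert": "disk_full", "host": "web-02"}])
    print(log.verify())                   # True
    print(log.explain("failover:db-01"))  # [[{'metric': 'error_rate', 'value': 0.42}]]
    log.records[0].inputs[0]["value"] = 0.01  # simulate after-the-fact tampering
    print(log.verify())                   # False
```

The hash chain only makes edits detectable; someone with write access could still rebuild the entire chain, so a production design would also anchor digests in separate, access-controlled storage.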

These capabilities aren’t just useful—they’re necessary. The competitive advantage in 2026 won’t belong to the organization with the most advanced AI models. It will belong to those whose agents have the **operational senses** to act on reality.

Notably, this infrastructure benefits more than just AI. It immediately improves human operations, traditional automation, and business intelligence. Organizations that invest in it today won’t just future-proof their agents; they’ll transform how they operate entirely.

For now, the question isn’t whether your agents can reason or scale. It’s whether they can see.