A fundamental shift in how online gaming servers are managed is underway. Artificial intelligence is being integrated at the infrastructure level, promising not just faster response times but also smarter resource allocation that could redefine the player experience.

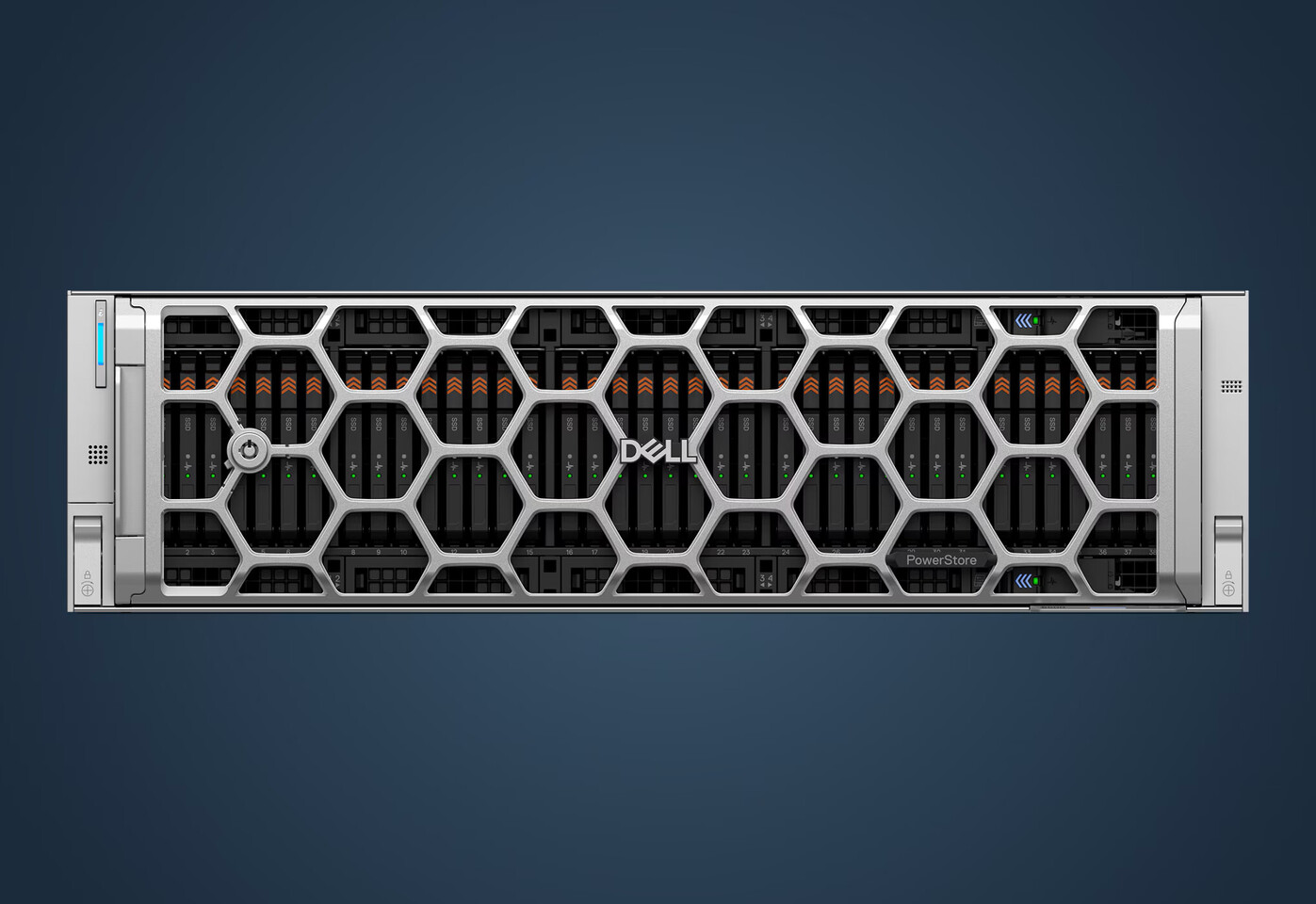

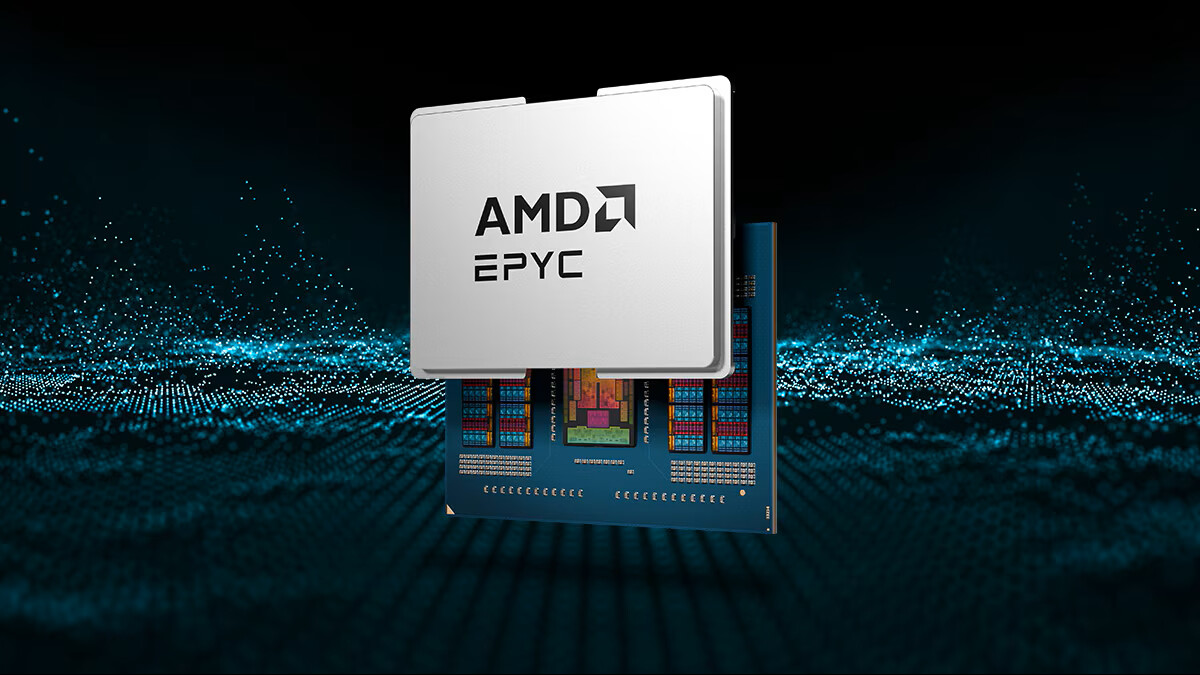

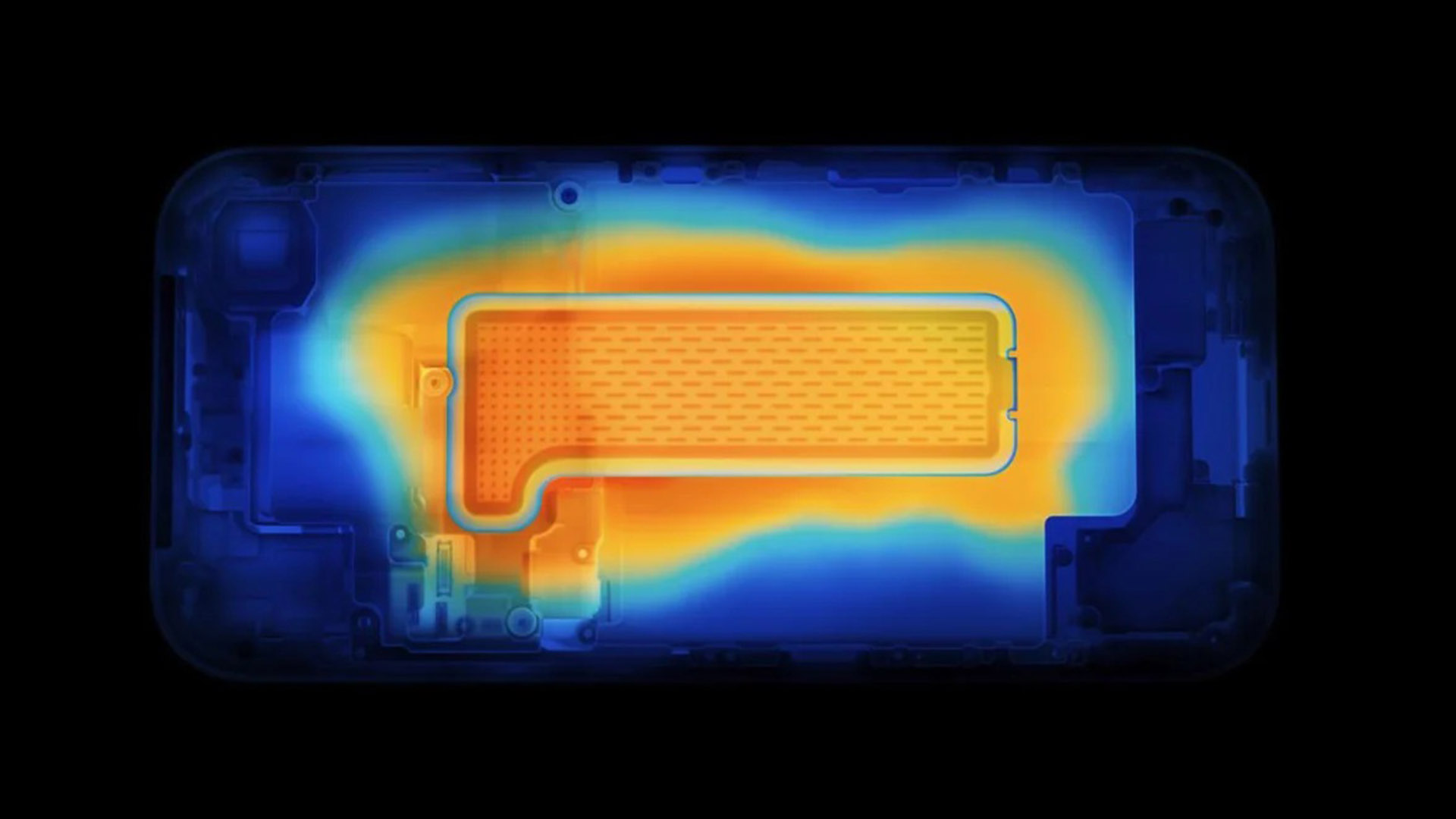

At the heart of this change is a new platform designed to run AI workloads alongside traditional gaming traffic on the same hardware. Unlike previous attempts to overlay AI functions on gaming servers, this approach is built from the ground up with performance-per-watt in mind—a critical factor as data centers face increasing pressure to balance power consumption and cooling demands.

Performance reimagined

The platform combines dedicated AI accelerators with high-bandwidth memory (HBM) to process real-time analytics without compromising latency. Early benchmarks suggest it can handle up to 10,000 simultaneous players with sub-50 ms response times, even under heavy AI workloads. This is achieved through a tightly coupled architecture that minimizes data movement between components, reducing thermal output while maintaining peak performance.

Key technical details

- AI accelerator: 16-core tensor processor (4.2 TOPS)

- Memory: 32 GB HBM2e (256-bit bus)

- Bandwidth: 800 GB/s

- Thermal design power (TDP): 200 W
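
As a rough illustration, the listed figures can be turned into per-watt and per-player ratios. The sketch below makes two assumptions the source does not confirm: that TDP is a reasonable stand-in for actual power draw, and that the 10,000-player benchmark figure applies to a single unit with these specifications. All variable names are hypothetical.

```python
# Figures quoted in the specification list.
AI_TOPS = 4.2             # tensor processor throughput, in TOPS
BANDWIDTH_GB_S = 800.0    # memory bandwidth, in GB/s
TDP_WATTS = 200.0         # thermal design power, in W

# Assumption: the early-benchmark player count applies to one such unit.
MAX_PLAYERS = 10_000

# Performance per watt, using TDP as an upper-bound proxy for power draw.
tops_per_watt = AI_TOPS / TDP_WATTS
bandwidth_per_watt = BANDWIDTH_GB_S / TDP_WATTS

# Per-player share of the power and bandwidth budget at full load.
watts_per_player = TDP_WATTS / MAX_PLAYERS
bandwidth_per_player_mb = BANDWIDTH_GB_S * 1000 / MAX_PLAYERS

print(f"{tops_per_watt:.3f} TOPS/W")                    # 0.021 TOPS/W
print(f"{bandwidth_per_watt:.1f} GB/s per W")           # 4.0 GB/s per W
print(f"{watts_per_player:.3f} W per player")           # 0.020 W per player
print(f"{bandwidth_per_player_mb:.1f} MB/s per player") # 80.0 MB/s per player
```

Because TDP describes a thermal ceiling and not measured consumption, these ratios are best read as conservative estimates.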

The thermal efficiency is a standout feature. Traditional gaming servers often require additional cooling solutions when pushed to capacity, but this platform maintains stable operation at ambient temperatures up to 45°C without active liquid cooling. This could lead to significant cost savings for data center operators, who are increasingly prioritizing power-efficient hardware.

Broader industry implications

For game developers and server providers, the introduction of AI-driven optimization marks a departure from reactive scaling strategies. Instead of adding more machines to handle peak loads, AI can dynamically allocate resources based on player behavior patterns, reducing waste and improving uptime. This is particularly relevant for multiplayer titles where latency and stability are non-negotiable.
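
The difference between reactive and predictive scaling can be sketched in a few lines. The article does not describe the platform's actual allocation method, so the example below swaps in a standard forecasting technique, Holt's double exponential smoothing, purely for illustration. The function names, the sample player counts, the 20% headroom, and the assumption that one server handles 10,000 players are all hypothetical.

```python
import math
from typing import Sequence

# Assumption: one server handles 10,000 players, per the benchmark figure.
PLAYERS_PER_SERVER = 10_000


def forecast_next(history: Sequence[float], alpha: float = 0.5, beta: float = 0.5) -> float:
    """One-step-ahead forecast using Holt's double exponential smoothing."""
    if len(history) < 2:
        raise ValueError("need at least two observations")
    level = float(history[0])
    trend = float(history[1] - history[0])
    for observed in history[1:]:
        previous_level = level
        # Blend the new observation with the previous level-plus-trend estimate.
        level = alpha * observed + (1 - alpha) * (level + trend)
        # Update the trend from the change in level.
        trend = beta * (level - previous_level) + (1 - beta) * trend
    return level + trend


def servers_needed(players: float, headroom: float = 0.0) -> int:
    """Servers required for a player count, with optional fractional headroom."""
    return max(1, math.ceil(players * (1 + headroom) / PLAYERS_PER_SERVER))


# Hypothetical concurrent-player counts sampled at fixed intervals.
history = [12_000, 16_000, 20_000, 24_000]

# Reactive: size the fleet for the load observed right now.
reactive = servers_needed(history[-1])

# Predictive: size the fleet for the forecast load plus 20% headroom.
predictive = servers_needed(forecast_next(history), headroom=0.2)

print(reactive, predictive)  # prints "3 4"
```

With a steadily rising player count, the predictive path provisions the fourth server before the load arrives, whereas the reactive path would only add it once the existing three were already saturated.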

While the immediate impact will be felt in high-traffic environments like MMOs or competitive shooters, the underlying technology could eventually trickle down to smaller-scale servers used by indie studios or cloud-based gaming services. The focus on thermals also aligns with broader industry trends toward sustainable data center operations.

The most significant change, however, may be that AI becomes an invisible but indispensable part of gaming infrastructure: no longer a separate layer, but an integral component that shapes how servers operate at their core.