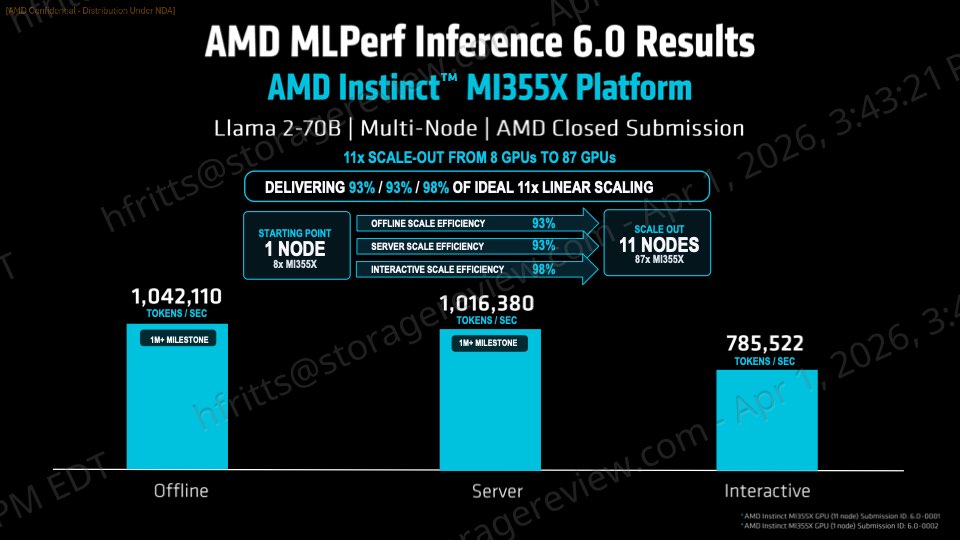

The AMD Instinct MI355X accelerator has established itself as a leading choice for AI inference workloads, as its results in the MLPerf Inference v6.0 benchmarks show. Its throughput of over 1 million tokens per second marks a significant step up in performance, reflecting both raw computational power and optimized software integration. The result is also part of a broader trend in which accelerators are increasingly judged on how efficiently they handle complex, real-world AI tasks.

One of the MI355X's standout features is its support for a scalable ROCm stack, which allows developers to deploy it in configurations ranging from single-node setups to large-scale clusters. This flexibility addresses a critical need in modern AI infrastructure, where workloads can vary dramatically in size and complexity. For system administrators, this means the ability to scale resources dynamically without being locked into a rigid architecture. The ROCm stack's maturity also plays a role here, as it provides a stable foundation for both current and future AI applications.

However, performance gains come with tradeoffs that developers must evaluate carefully. The MI355X excels at inference, but its memory bandwidth and power consumption characteristics can affect deployment decisions. In environments where resource allocation is tightly managed, such as cloud data centers or large-scale enterprise deployments, these factors can determine whether the accelerator is the best fit for a given workload. For example, applications that depend on high memory throughput may find the MI355X's performance compelling, while those with more demanding multi-modal requirements might need to explore other options.
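One way to reason about the bandwidth and power tradeoff is a back-of-the-envelope estimate. The sketch below assumes a memory-bound, single-stream decode in which every generated token reads all model weights once, so throughput is capped at bandwidth divided by model size. The function names and all numeric inputs are hypothetical placeholders, not MI355X specifications or measured results.

```python
def decode_tokens_per_second_bound(
    bandwidth_gb_s: float, params_billion: float, bytes_per_param: float
) -> float:
    """Rough upper bound on single-stream decode rate for a memory-bound model.

    Assumes each token requires one full read of the weights and ignores
    KV-cache traffic, batching, and compute limits.
    """
    model_gb = params_billion * bytes_per_param  # 1e9 params * bytes/param = GB
    return bandwidth_gb_s / model_gb


def tokens_per_joule(tokens_per_s: float, watts: float) -> float:
    """Energy efficiency: tokens generated per joule of board power."""
    return tokens_per_s / watts


# Placeholder inputs chosen only to illustrate the arithmetic.
bound = decode_tokens_per_second_bound(
    bandwidth_gb_s=4000.0, params_billion=70.0, bytes_per_param=1.0
)
efficiency = tokens_per_joule(tokens_per_s=bound, watts=1000.0)
print(f"decode bound: {bound:.1f} tokens/s, efficiency: {efficiency:.4f} tokens/J")
```

Substituting vendor-published bandwidth and measured power draw turns this into a first filter for whether a workload is likely to be limited by memory or by the power budget. Batched serving can exceed the single-stream bound, so treat it as a sanity check rather than a prediction.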

Looking ahead, the MI355X's role in more complex AI scenarios remains an open question. It delivers strong results in inference tasks, but its performance in multi-modal workloads, which push accelerators to their limits, has yet to be fully demonstrated. These workloads often combine data types and processing requirements in ways that can strain even the most capable hardware. Developers should account for this when planning their AI infrastructure, as the MI355X's strengths may not translate directly to every use case.

For those invested in future-proofing their AI systems, the MI355X offers a compelling balance of performance and scalability. Its ability to integrate seamlessly into existing ROCm-based environments means that enterprises can adopt it without overhauling their current setups. However, the decision to deploy the MI355X should be based on a thorough evaluation of its fit within specific workloads, rather than relying solely on benchmark numbers. The accelerator's true value will be realized in how well it adapts to the evolving demands of AI, particularly as multi-modal and more complex tasks become the norm.
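Evaluating fit on a specific workload, rather than on published benchmark numbers, can start with a small timing harness. The sketch below is a minimal example under stated assumptions: `measure_throughput` and `fake_generate` are hypothetical names, and `fake_generate` is a stand-in that would be replaced by a call into whatever inference runtime is actually deployed.

```python
import time
from typing import Callable, Sequence


def measure_throughput(
    generate: Callable[[str], int],
    prompts: Sequence[str],
    clock: Callable[[], float] = time.perf_counter,
) -> float:
    """Return tokens per second for `generate` over a set of prompts.

    `generate` must return the number of tokens it produced for one prompt.
    """
    start = clock()
    total_tokens = sum(generate(prompt) for prompt in prompts)
    elapsed = clock() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return total_tokens / elapsed


def fake_generate(prompt: str) -> int:
    """Stand-in for a real model call; sleeps briefly and counts words."""
    time.sleep(0.01)
    return len(prompt.split())


# Use prompts sampled from the real workload, not synthetic ones like these.
sample_prompts = ["summarize this report", "translate the following text"]
rate = measure_throughput(fake_generate, sample_prompts)
print(f"measured throughput: {rate:.1f} tokens/s")
```

Running the same harness with representative prompts on each candidate configuration gives numbers that are directly comparable for that workload, which published benchmark results may not be.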

In conclusion, the AMD Instinct MI355X is a notable advancement in AI inference hardware, but its full potential hinges on how well it performs beyond traditional benchmarks. Developers and administrators should weigh its strengths against their unique requirements to determine if it aligns with long-term goals. As AI workloads continue to diversify, the MI355X's scalability and performance will be key indicators of its lasting impact in the field.