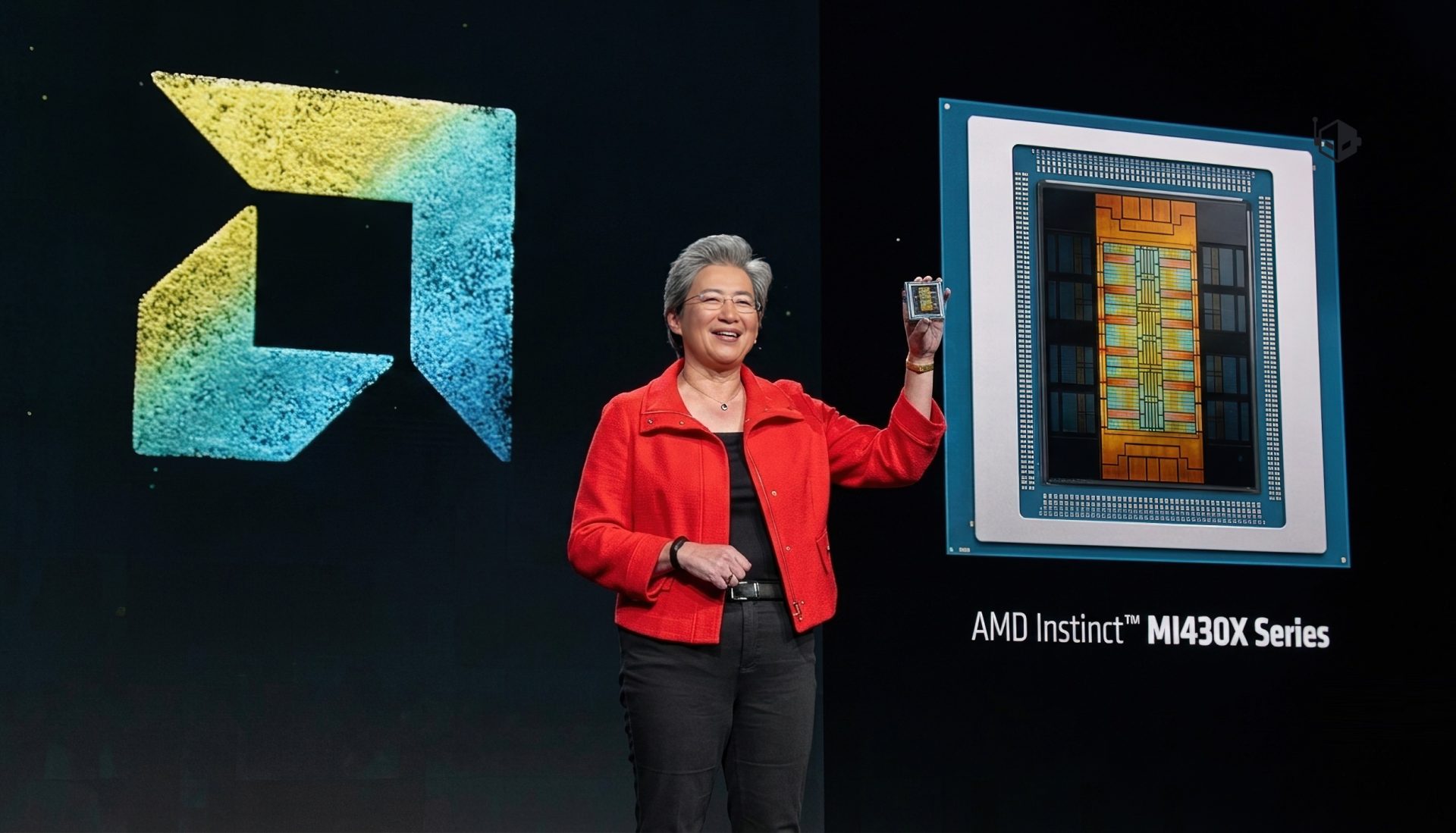

Enterprises chasing AI performance often face a stark choice: cloud-based solutions that can introduce privacy risks and unpredictable costs, or on-prem accelerator platforms that demand significant power and cooling upgrades. AMD’s latest Instinct MI350P PCIe GPUs aim to carve out a third option—one that drops into existing server racks without the need for dedicated infrastructure changes.

Designed as dual-slot cards for standard air-cooled servers, the MI350P series is built to handle inference workloads and RAG pipelines while leveraging the data center power and cooling already in place. This approach avoids the capital expenditure required for GPU accelerator platforms, making it an attractive proposition for organizations that need more compute than CPUs can provide but aren’t yet ready to overhaul their infrastructure.

Performance That Fits the Rack

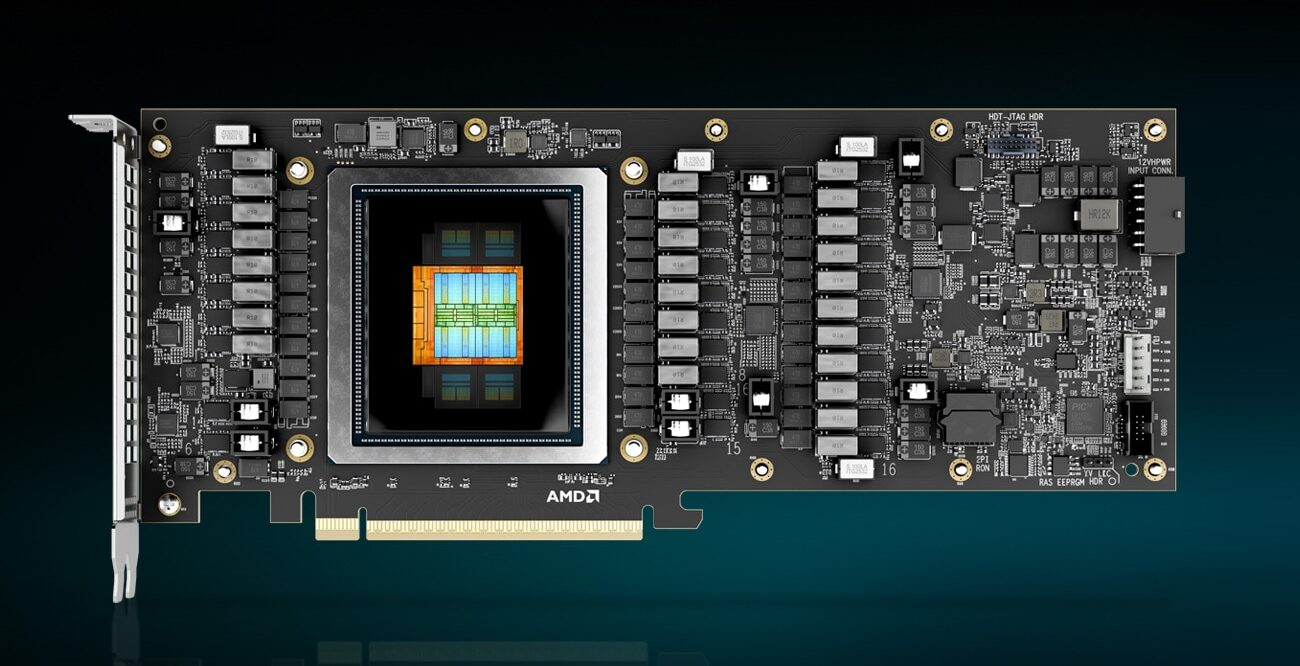

The MI350P is positioned as a high-performance PCIe card with estimated 2,299 TeraFLOPS of compute at MXFP6 precision, scaling up to 4,600 peak TFLOPS when running in MXFP4 mode—the highest performance claimed for an enterprise PCIe card. This is paired with 144 GB of HBM3E memory, delivering up to 4 TB/s bandwidth, which AMD says is critical for handling larger AI models without bottlenecking.

- Native support for lower-precision MXFP6 and MXFP4, optimizing throughput for pure TFLOPS performance.

- Sparsity acceleration for mainstream 8-bit and 16-bit precisions, improving efficiency in memory usage.

- Open ecosystem with low-cost development stacks to simplify deployment and reduce operating expenses.

The cards also support higher precision levels like INT8 and BF16, where sparsity features further enhance performance. AMD emphasizes that the MI350P is designed to minimize power and cooling demands while maintaining GPU throughput, making it suitable for standard air-cooled data centers—including support for FP8, MXFP8, and MXFP4 formats.

Enterprise AI Without Per-Token Costs

AMD frames the MI350P as part of a broader strategy to provide an open, cost-effective foundation for enterprise AI. The company offers its open-source AMD enterprise AI reference stack at no licensing cost, integrating with frameworks like PyTorch and Kubernetes GPU Operator for lifecycle management. This stack is intended to accelerate deployment without per-token charges, reducing reliance on cloud-based inference services.

For organizations already running on-prem AI workloads, the MI350P promises minimal code changes during migration, seamless integration with existing pipelines, and scalability as demands evolve. The goal is clear: avoid the need to rebuild infrastructure from scratch while still gaining the performance edge required for agentic AI applications.

Caveats and Considerations

The MI350P isn’t without tradeoffs. While it avoids the power and cooling overhead of dedicated accelerator platforms, its PCIe form factor may limit scalability in high-density clusters compared to solutions like NVIDIA’s H100 or AMD’s own Instinct MI300X. Enterprises with workloads pushing beyond eight cards per system might find themselves constrained by rack space or bandwidth limitations.

Additionally, while the open ecosystem is a selling point, its success hinges on adoption from AI software partners and frameworks. If the reference stack lacks broad compatibility, enterprises may still face integration hurdles that could offset the cost savings.

The MI350P’s 2 nm Zen-based architecture (with 3 nm I/O die) positions it at the leading edge of process technology, but real-world performance will depend on how well AMD optimizes its AI stack for these precision levels. Competitors like NVIDIA continue to dominate discrete GPU market share, and AMD’s challenge will be proving that PCIe-based solutions can deliver comparable ROI without sacrificing flexibility.

For now, the MI350P appears tailored to mid-sized enterprises or those testing on-prem AI without immediate plans for massive scale. Smaller deployments—where power constraints and per-token costs are key concerns—may find this a compelling alternative to cloud inference. Larger organizations may still lean toward accelerator platforms, but AMD’s move could pressure competitors to rethink the balance between performance, cost, and infrastructure requirements.