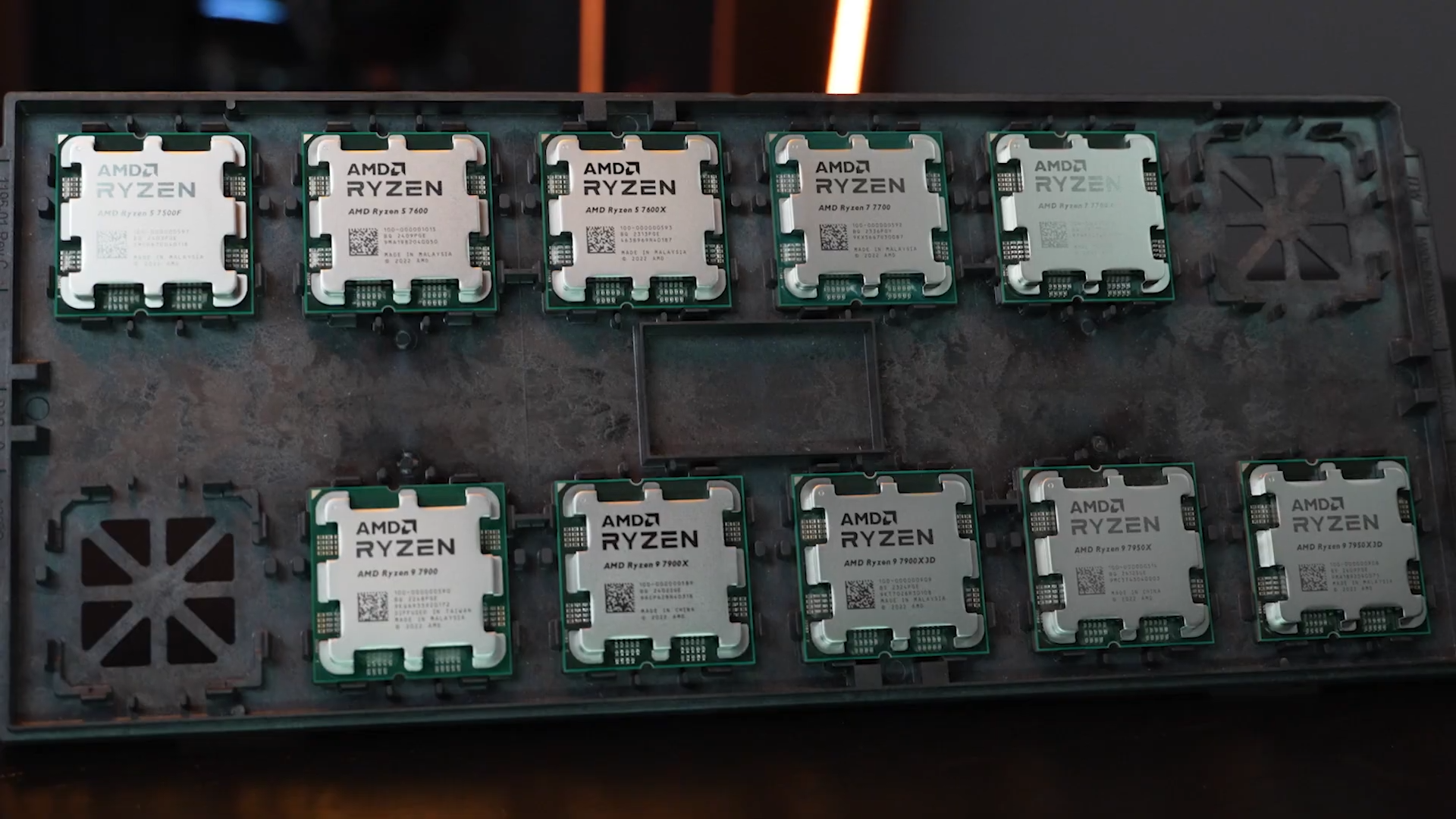

A classroom in Poland is about to become a testing ground for one of computing’s most contentious practices: overclocking. On April 10, a high school teacher will oversee 25 student teams as they attempt to coax maximum performance from identical hardware kits—each equipped with 16 GB of dual-channel DDR4 memory and a 2 TB NVMe SSD. The event isn’t just about hitting the highest benchmark scores; it’s an experiment in whether raw speed can be sustained without sacrificing stability, longevity, or reliability.

Participants might expect overclocking to be purely a matter of dialing up clock speeds for instant performance gains. In reality, the challenge will reveal a more nuanced landscape in which thermal management, power efficiency, and hardware degradation become critical factors. The teams’ choices, whether to rely on air cooling’s simplicity or liquid cooling’s more aggressive heat dissipation, will determine not just their scores but also how long each system remains functional.

The competition’s standardized setup ensures fairness while exposing a fundamental truth: overclocking is less about raw potential and more about managing trade-offs. Air cooling, for instance, avoids the risk of leaks or pump failure but often falls short in dissipating heat from high-performance components. Liquid cooling, on the other hand, can deliver superior thermal performance—if it doesn’t fail under the stress of sustained overclocking. The margin between a system that runs flawlessly and one that crashes under load will be razor-thin.

This isn’t just an academic exercise; it mirrors real-world scenarios where professionals balance immediate gains against long-term costs. In fields like rendering, scientific simulations, or AI training, the difference between stock and overclocked performance can be significant—but so are the consequences: increased power draw, reduced component lifespan, and voided warranties. The competition may force students to confront these limitations head-on, challenging the notion that pushing hardware harder always yields better results.
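To put a rough number on the power-draw side of that trade-off, here is a minimal sketch based on the standard first-order approximation that a chip's dynamic power scales with voltage squared times frequency (P ≈ C·V²·f). The function name and the 10% figures are hypothetical illustrations, not values from the competition, and the model ignores leakage power and assumes performance scales linearly with clock speed, which is optimistic.

```python
def relative_dynamic_power(freq_scale: float, voltage_scale: float) -> float:
    """Dynamic power relative to stock, using P ~ C * V^2 * f.

    Assumes switched capacitance is unchanged and ignores static (leakage) power.
    """
    return voltage_scale ** 2 * freq_scale


# Hypothetical tuning: a 10% clock increase that needs 10% more core voltage.
freq_scale = 1.10
voltage_scale = 1.10

power_ratio = relative_dynamic_power(freq_scale, voltage_scale)
# Best case: performance rises in step with the clock.
perf_per_watt = freq_scale / power_ratio

print(f"Dynamic power vs. stock:    {power_ratio:.3f}x")
print(f"Performance per watt ratio: {perf_per_watt:.3f}x")
```

Under these assumptions, a 10% speedup costs roughly a third more dynamic power, so efficiency falls even as the benchmark score rises.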

What stands out is how this event blurs the line between education and practical engineering. Students won’t just learn about clock speeds; they’ll grapple with the operational realities of extreme tuning—thermal throttling, voltage instability, and the physical wear on components under prolonged stress. The lessons extend beyond benchmarks to include power efficiency, system longevity, and even ethical considerations around hardware usage.

The competition’s outcome will likely highlight a paradox: overclocking can deliver impressive short-term performance, but its practical application in professional environments remains limited by these very constraints. For students, this is more than a technical challenge; it’s an introduction to the complexities of real-world computing, where performance is not just about speed but also about sustainability.

The most significant shift here is the integration of advanced hardware tuning into an educational context. It offers students a hands-on way to explore computational limits while facing the consequences of those choices—something that traditional curricula often overlook. Whether this approach catches on remains to be seen, but it represents a bold step toward bridging theory and practice in tech education.