Graphics processing has long been measured by raw power: teraFLOPS, clock speeds, hardware specifications. But NVIDIA’s DLSS 5 signals a shift: performance is now also defined by algorithmic intelligence.

The latest iteration of the company’s AI-driven rendering technology promises up to twice the speed of its predecessor while maintaining high-quality output. Yet beneath that promise lies a growing concern about the visual artifacts it introduces, particularly in fast-moving or complex scenes. For IT teams, this raises critical questions about cost, infrastructure, and long-term adoption.

Performance vs. Perception

DLSS 5’s technical achievements are substantial:

- Up to 2x performance improvement over DLSS 3.5 in internal benchmarks.

- Enhanced ray tracing acceleration, intended to reduce render times without compromising visual fidelity.

- Improved temporal stability, aimed at the motion artifacts that affected earlier versions.
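To put the "up to 2x" figure in context, the short sketch below converts a speedup factor into frame rate and frame time. It is a back-of-the-envelope illustration, not NVIDIA's benchmark method: it applies Amdahl's law under the assumption that only part of each frame's time benefits from the upscaler. The 60 fps baseline and the accelerated fractions are hypothetical inputs chosen for illustration.

```python
def effective_speedup(accelerated_fraction: float, speedup: float) -> float:
    """Amdahl's law: overall speedup when only a fraction of the work is accelerated."""
    if not 0.0 <= accelerated_fraction <= 1.0:
        raise ValueError("accelerated_fraction must be between 0 and 1")
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return 1.0 / ((1.0 - accelerated_fraction) + accelerated_fraction / speedup)


def frame_time_ms(fps: float) -> float:
    """Convert frames per second to milliseconds per frame."""
    return 1000.0 / fps


if __name__ == "__main__":
    baseline_fps = 60.0   # hypothetical baseline, not a measured value
    claimed_speedup = 2.0  # the "up to 2x" upper bound quoted above

    # Hypothetical shares of frame time that benefit from the speedup.
    for fraction in (1.0, 0.75, 0.5):
        overall = effective_speedup(fraction, claimed_speedup)
        new_fps = baseline_fps * overall
        print(
            f"{fraction:.0%} of frame accelerated: "
            f"{overall:.2f}x overall, {new_fps:.0f} fps, "
            f"{frame_time_ms(new_fps):.1f} ms per frame"
        )
```

The point of the exercise is that a headline 2x applies only when the whole frame is accelerated. If half of the frame time sits in work the upscaler does not touch, the overall gain under these assumptions drops to roughly 1.33x.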

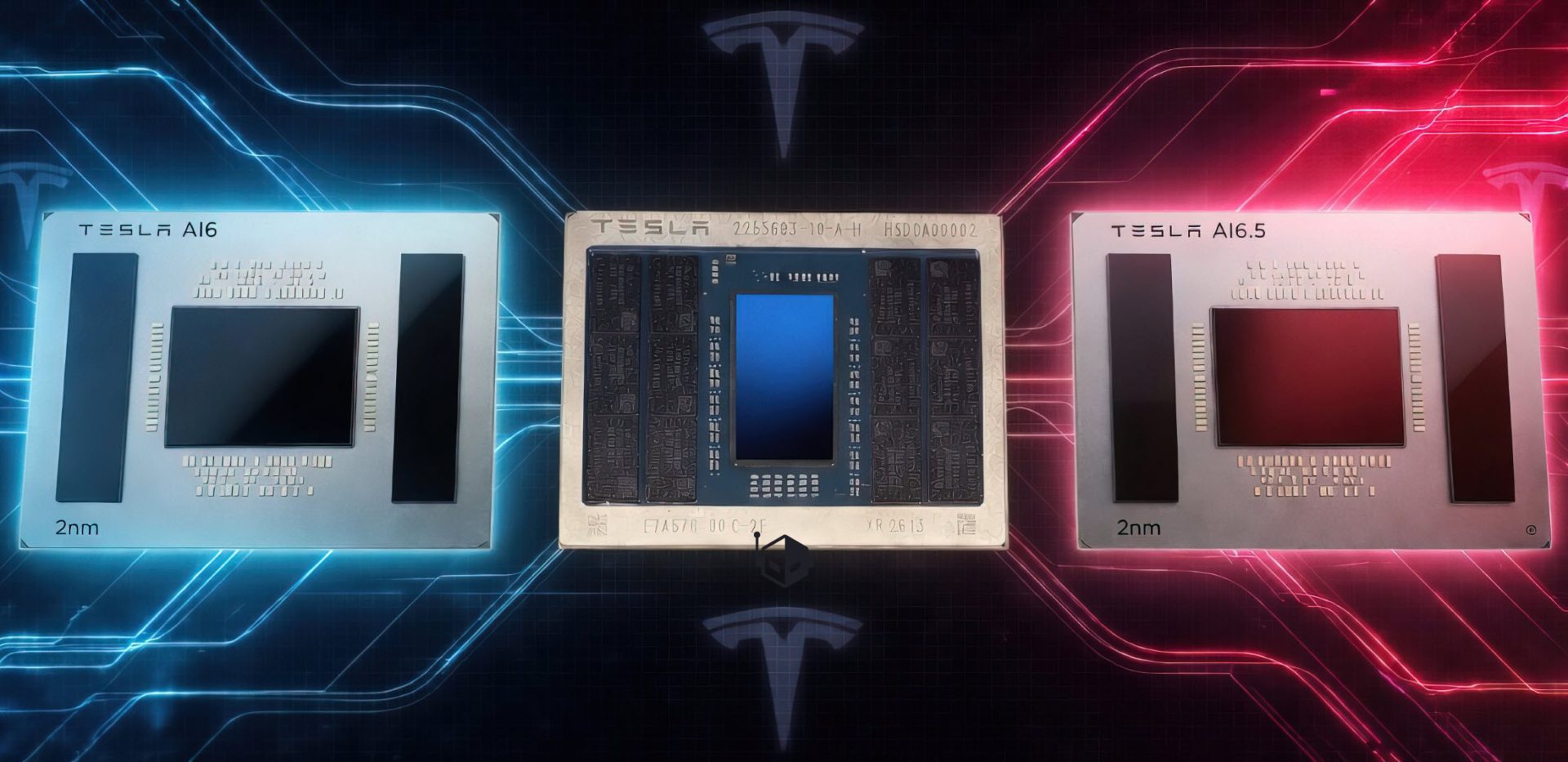

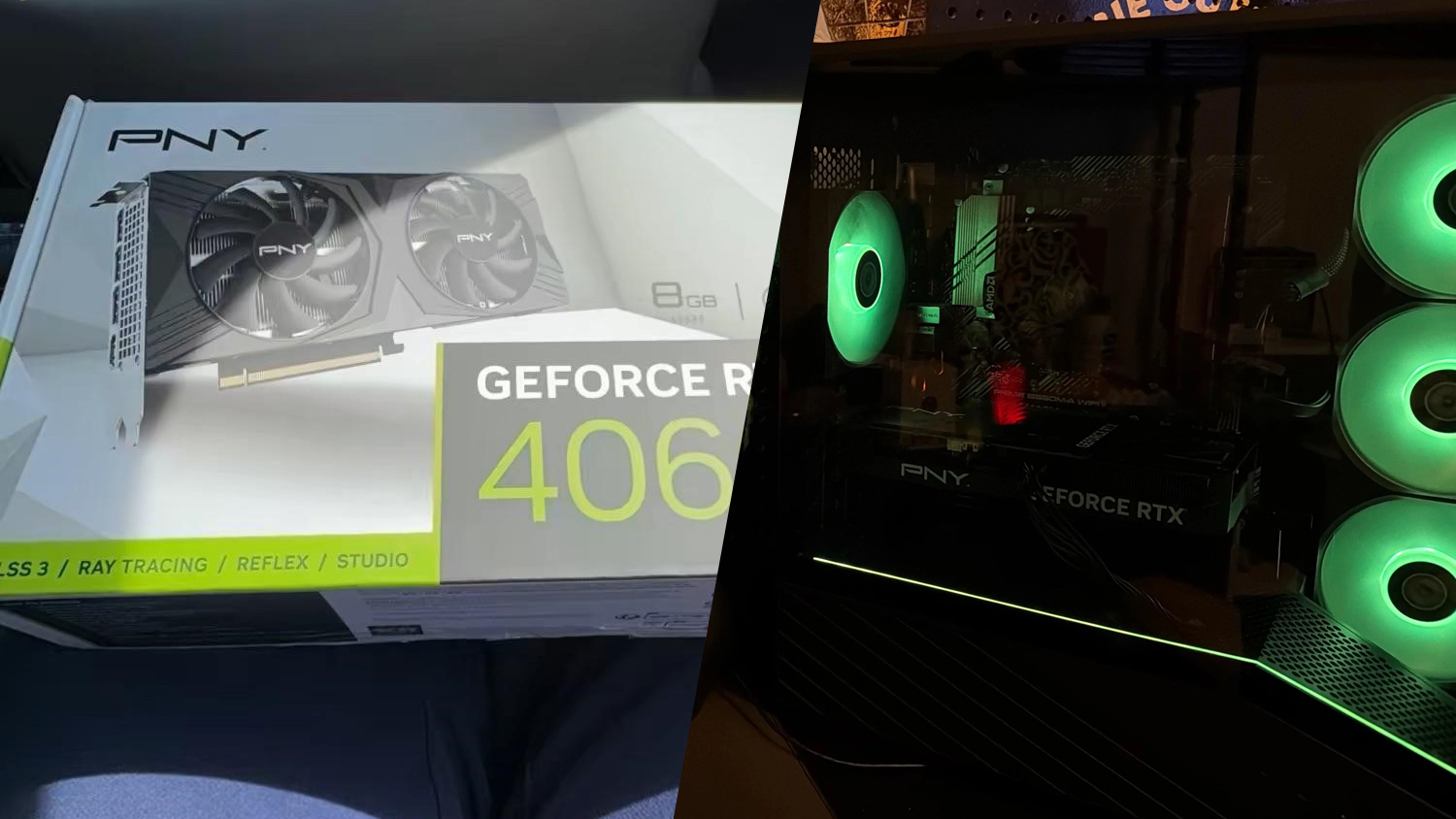

The technology is optimized for NVIDIA’s RTX 40 series GPUs, and support for older hardware remains limited. Pricing details for DLSS 5-enabled titles have yet to be released, leaving IT planners in a holding pattern as they assess budget and infrastructure needs.

AI Artifacts Spark Debate

The debate over AI-generated distortions is not new, but DLSS 5’s more aggressive approach has amplified it. Earlier versions were praised for delivering near-native resolution with minimal quality loss. Now, developers report noticeable artifacts in certain scenes, particularly those involving fast motion or intricate environments.

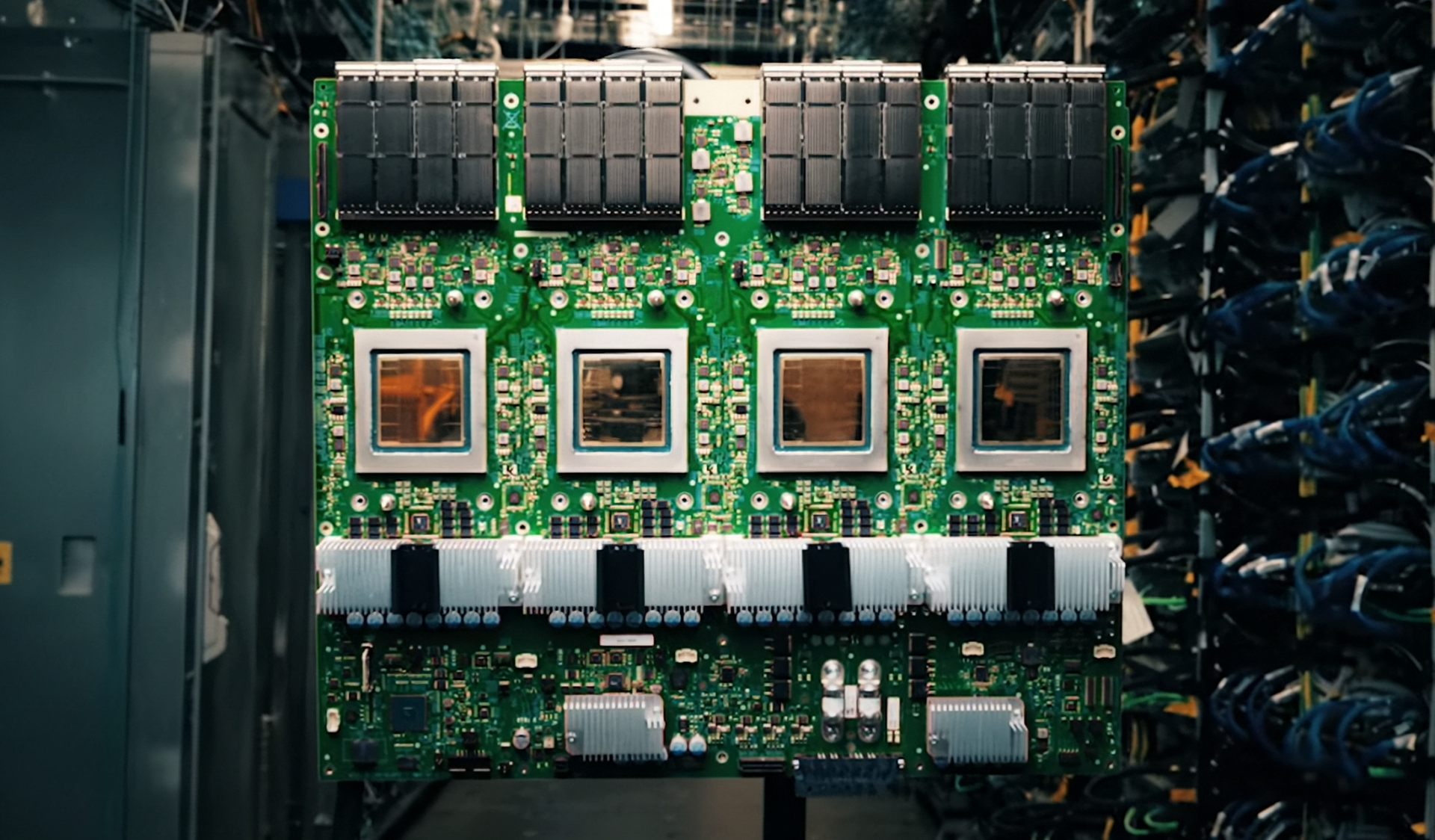

The debate reflects differing priorities: developers prioritize visual polish, while IT teams focus on operational efficiency. AI-assisted rendering introduces new variables, including higher bandwidth usage, increased server load, and potential latency spikes in cloud setups. The reliance on specialized hardware like Tensor Cores adds another layer of complexity to infrastructure planning.

IT Teams Face a Dilemma

The benefits of DLSS 5 are clear: faster render times, lower power consumption, and higher resolutions without major hardware upgrades. But the trade-offs are significant.

For large-scale rendering farms or cloud-based setups, AI processing complicates workload management. Bandwidth demands rise as more data flows through AI algorithms, and server loads can spike during peak periods. Additionally, the need for Tensor Cores complicates upgrade paths, forcing IT teams to balance immediate gains against long-term costs.
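One way to frame the "immediate gains against long-term costs" question is a simple break-even estimate. The sketch below is a rough planning aid under stated assumptions, not a costing model: it assumes a speedup translates directly into fewer GPU-hours for the same output, and every figure in the example (upgrade cost, monthly GPU-hours, hourly rate, speedup) is a hypothetical placeholder to be replaced with an organization's own numbers. It also ignores the bandwidth and server-load overheads mentioned above.

```python
import math


def render_hours_saved(baseline_hours: float, speedup: float) -> float:
    """GPU-hours saved per period if the same work runs `speedup` times faster."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return baseline_hours * (1.0 - 1.0 / speedup)


def breakeven_months(
    upgrade_cost: float,
    baseline_hours_per_month: float,
    speedup: float,
    cost_per_gpu_hour: float,
) -> float:
    """Months until the savings from faster rendering cover the upgrade cost."""
    monthly_saving = (
        render_hours_saved(baseline_hours_per_month, speedup) * cost_per_gpu_hour
    )
    if monthly_saving <= 0:
        return math.inf  # no speedup means the upgrade never pays for itself
    return upgrade_cost / monthly_saving


if __name__ == "__main__":
    # All inputs below are hypothetical and for illustration only.
    months = breakeven_months(
        upgrade_cost=50_000.0,
        baseline_hours_per_month=10_000.0,
        speedup=1.6,
        cost_per_gpu_hour=1.5,
    )
    print(f"Estimated break-even: {months:.1f} months")
```

Running the same calculation across a range of speedups, rather than only the best case, shows how sensitive the payback period is to the performance actually achieved on a given workload.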

Developers are also divided. Some studios have adopted DLSS 5 for its performance advantages, while others remain cautious, awaiting refinements or alternative solutions. This hesitation could delay widespread adoption, leaving IT teams in limbo between legacy systems and next-generation technologies.

A Milestone with Caveats

DLSS 5 is undeniably a milestone—a significant leap in performance that pushes the boundaries of what’s possible in real-time rendering. But like all advancements, it comes with trade-offs.

For IT teams, the question isn’t just whether to adopt this technology, but how. The balance between speed and visual quality, cost and efficiency, will determine its long-term success. What is confirmed: DLSS 5 delivers on performance, with measurable improvements in ray tracing and stability. What remains uncertain: the lasting impact of AI artifacts on workloads and the timeline for broader industry adoption.