Developers chasing more performance per watt have a new target: a GPU that aims to raise efficiency without compromising raw output. The chip, built on a 5-nanometer process, packs 32 compute units, double the density of its predecessor, and draws 175 watts under full load, a combination that could reshape data-center and mobile designs.

The shift isn’t just about smaller numbers; it’s about rethinking how silicon handles heat, power, and instruction throughput. Previous generations added transistors to squeeze out extra throughput, but this iteration tightens the loop: fewer cores per cluster, wider lanes, and a memory system that prefetches data before the pipeline asks for it. The result is a card that can sustain 12 teraflops of single-precision compute while drawing less than half the power of comparable models.

Why efficiency matters now

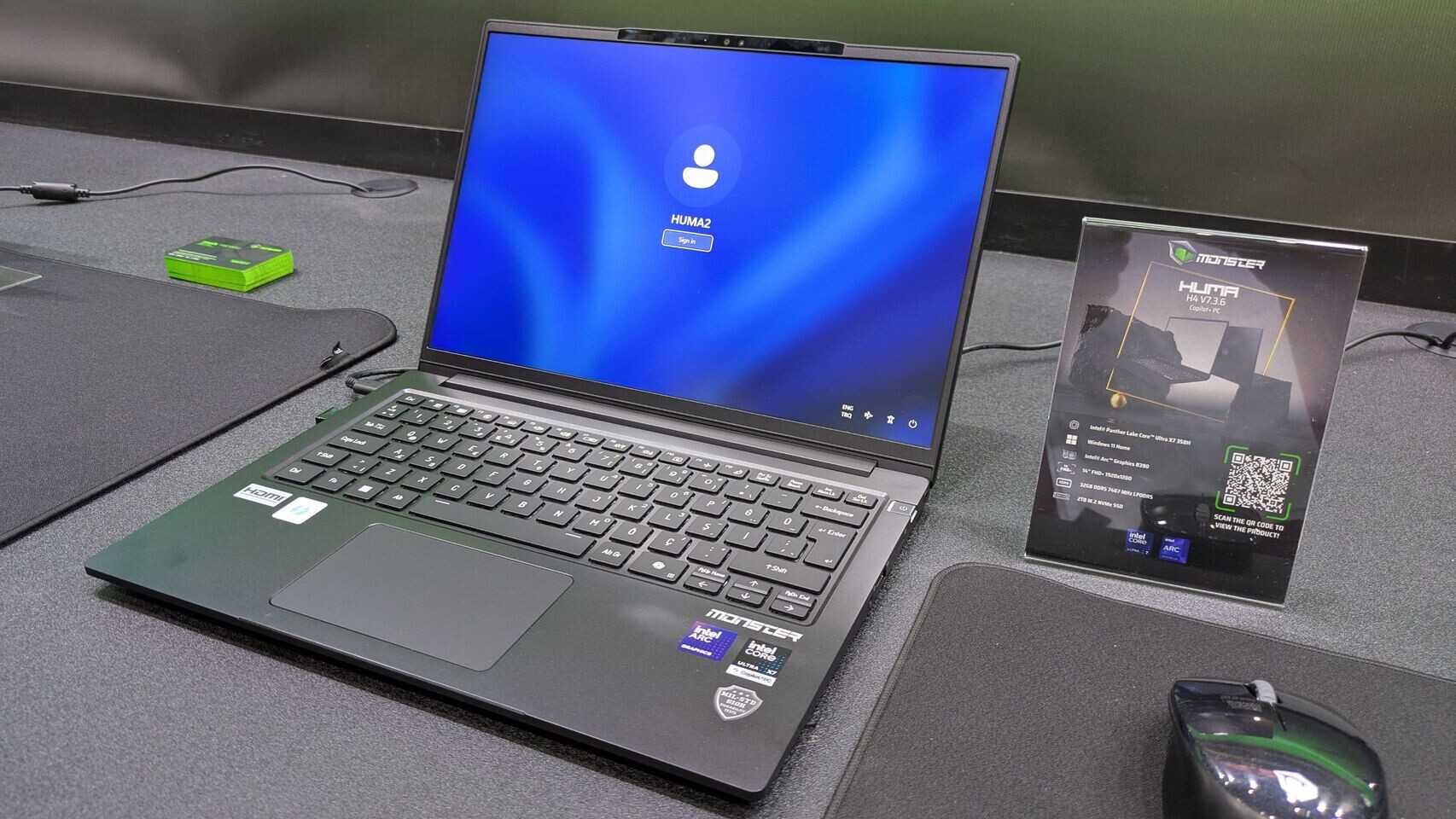

The push toward lower power envelopes isn’t new, but the economics behind it have sharpened. Data-center operators are under pressure to cut cooling costs and meet sustainability targets, while mobile platforms demand more compute in thinner, lighter packages. The new GPU answers both by collapsing the traditional tradeoff: you get more floating-point performance per watt, yet the thermal design power (TDP) remains low enough for laptops that don’t require external cooling. That’s a double win for developers building edge devices or training small-scale neural networks.

Key specs

- Process: 5 nm (TSMC)

- Compute units: 32 (640 shaders, 20 per unit)

- Memory: 8 GB GDDR6, 256-bit bus

- Clock speed: 1.2 GHz base, up to 2.3 GHz boost

- TDP: 175 W (sustained)

- Performance: 12 TFLOPS single-precision

- API support: Vulkan 1.3, OpenGL 4.6, DirectX 12 Ultimate
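
To put the headline figures in perspective, the short Python sketch below divides the quoted 12 TFLOPS by the quoted 175 W TDP. The function name `gflops_per_watt` is our own, and the result is a ratio of the listed peak figures, not a measured efficiency.

```python
def gflops_per_watt(tflops: float, watts: float) -> float:
    """Return throughput per watt in GFLOPS/W."""
    if watts <= 0:
        raise ValueError("watts must be positive")
    # 1 TFLOPS = 1000 GFLOPS
    return tflops * 1000.0 / watts


# Figures taken from the spec list: 12 TFLOPS single-precision, 175 W TDP.
new_gpu = gflops_per_watt(tflops=12.0, watts=175.0)
print(f"{new_gpu:.1f} GFLOPS/W")  # prints "68.6 GFLOPS/W"
```

The same function can be pointed at any other card's published numbers to compare like with like, as long as both figures refer to the same precision and a sustained power level.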

The memory configuration is notable for mobile use: 8 GB of GDDR6 on a 256-bit interface provides enough bandwidth for texture-heavy workloads while keeping the package small. Previous models often traded capacity for footprint, but here both are improved without increasing power draw.
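
The spec list does not give the memory's per-pin data rate, so peak bandwidth can only be estimated. The sketch below assumes 14 Gbps per pin, a common GDDR6 speed grade; that figure is an assumption for illustration, not a confirmed spec, and the function name is our own.

```python
def peak_bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Theoretical peak bandwidth in GB/s.

    bus_width_bits: memory interface width in bits (256 per the spec list).
    data_rate_gbps: per-pin data rate in Gbit/s (assumed, not from the source).
    """
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * data_rate_gbps


ASSUMED_DATA_RATE_GBPS = 14.0  # assumption: not stated by the manufacturer

print(peak_bandwidth_gb_s(256, ASSUMED_DATA_RATE_GBPS))  # prints 448.0
```

If the shipping part uses a faster or slower speed grade, the estimate scales linearly with the data rate.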

What’s confirmed—and what isn’t

The manufacturer has not yet announced a launch date or price tier, but early benchmarks suggest the chip will slot into mid-range workstations and high-end laptops. A development kit is expected in Q4 of this year, with mass production following in early 2025. Supply remains tight for 5 nm wafers, so initial volumes may be limited, but the roadmap hints at a ramp-up by mid-2025.

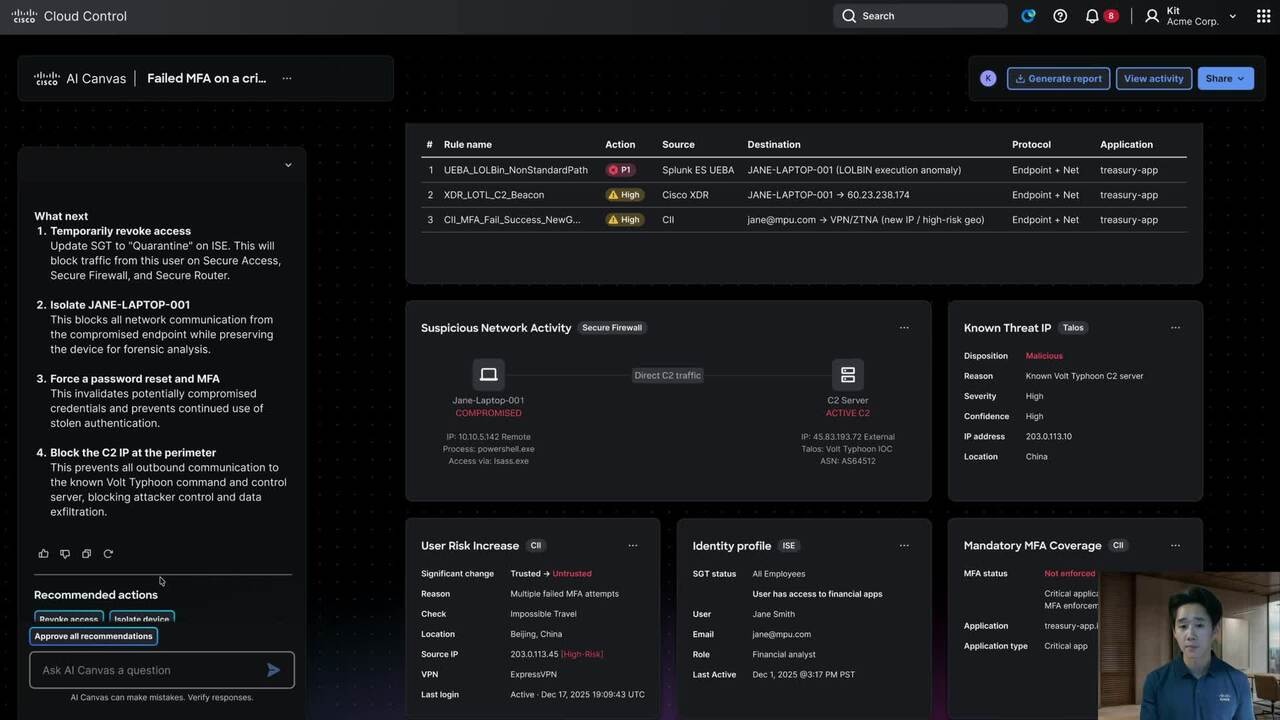

One open question is software optimization: while the driver stack supports the latest APIs, fine-tuning for ray tracing and AI acceleration will take time. Developers should expect a learning curve if they plan to take advantage of the new memory prefetching or shader optimizations, though early samples indicate the compiler is already more aggressive than previous versions.

What to watch

The real story isn’t just the numbers on paper; it’s how quickly this efficiency translates to real-world workloads. If cooling costs become a bigger factor than raw performance, we’ll see data centers adopt these designs faster than expected. For mobile, the question is whether battery life improves proportionally to the power savings; early thermal tests are promising but not yet conclusive.
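
One reason battery life may not track GPU power savings one-for-one is that the rest of the laptop keeps drawing power. The Python sketch below uses a deliberately simple model, runtime equals battery capacity divided by total draw, and every number in it is hypothetical rather than taken from any test.

```python
def runtime_hours(battery_wh: float, gpu_watts: float, rest_watts: float) -> float:
    """Estimate runtime as battery capacity divided by total system draw."""
    total = gpu_watts + rest_watts
    if total <= 0:
        raise ValueError("total power draw must be positive")
    return battery_wh / total


# Hypothetical laptop: 90 Wh battery, 30 W for display, CPU, and everything else.
before = runtime_hours(battery_wh=90.0, gpu_watts=60.0, rest_watts=30.0)
after = runtime_hours(battery_wh=90.0, gpu_watts=30.0, rest_watts=30.0)

print(before)          # prints 1.0
print(after)           # prints 1.5
print(after / before)  # prints 1.5
```

In this toy case, halving GPU power extends runtime by 50 percent rather than doubling it, which is why measured battery results from real devices matter more than the TDP figure alone.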

Keep an eye on the developer kit release for benchmarks that go beyond synthetic scores. That’s where this GPU will either live up to its promise or reveal hidden bottlenecks in the new architecture.