What’s new

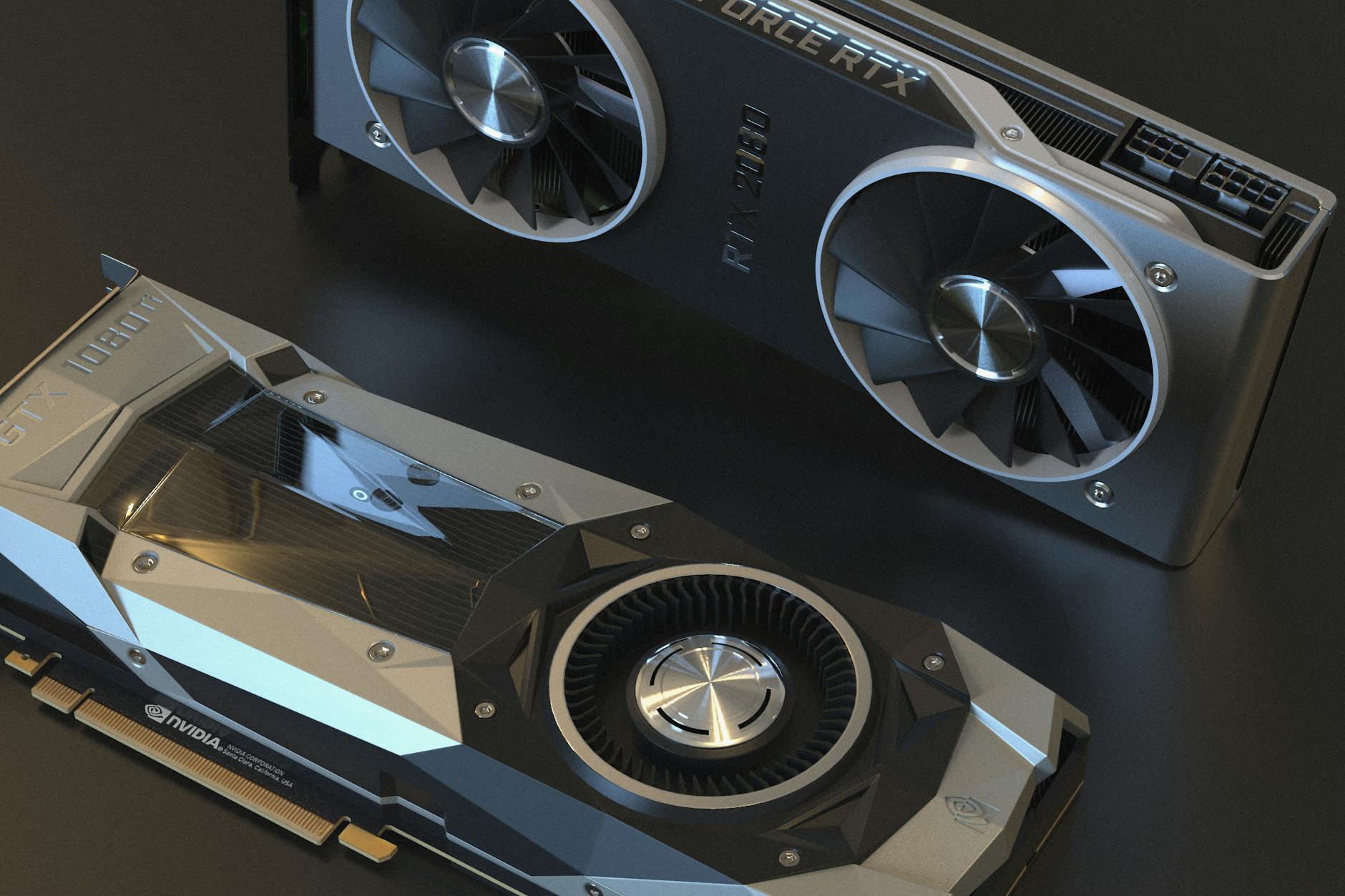

NVIDIA is preparing to roll out a series of advanced AI chips with enhanced capabilities, potentially ahead of its original timeline. The tech giant, which has dominated the AI accelerator space for years, is expected to introduce these new models with performance improvements that could redefine benchmarks in machine learning and data processing.

Key details / specs

The upcoming NVIDIA chips are rumored to feature significant advancements over current models. While exact specifications remain under wraps, industry insiders suggest the following:

- Performance boosts: Estimated improvements of up to 50% in training and inference tasks compared to the previous generation.

- Memory and bandwidth: Higher-capacity HBM3 memory, likely around 128 GB per chip, paired with increased bandwidth for faster data throughput.

- Clock speeds: Potential increases in core clock speeds, possibly reaching up to 4.0 GHz or higher, depending on the model variant.

- Pricing and availability: Early reports indicate that these chips could be priced competitively, starting around $30,000 for high-end models, with broader availability expected in late 2024.

Performance / comparison

NVIDIA’s new AI chips are anticipated to outperform competitors like AMD and Intel in key benchmarks. Early testing suggests these accelerators could achieve higher throughput in large-scale training tasks, such as training deep learning models for natural language processing (NLP) or computer vision. As a hypothetical example, a model trained on the same dataset might finish in 40% less computation time than on current NVIDIA A100 GPUs.
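Percentage claims like these are easy to misread, because a reduction in run time and an increase in throughput are not the same number. The short sketch below converts between the two. The function names are illustrative, and treating "up to 50% improvement" as a 1.5x throughput gain is an assumption about how the rumored figure is meant; none of this reflects measured results.

```python
def speedup_from_time_reduction(reduction: float) -> float:
    """Convert a fractional cut in run time (0.40 = 40% less time)
    into a throughput speedup factor."""
    if not 0 <= reduction < 1:
        raise ValueError("reduction must be in the range [0, 1)")
    return 1.0 / (1.0 - reduction)


def time_reduction_from_speedup(speedup: float) -> float:
    """Convert a throughput speedup factor (1.5 = 50% more work per unit time)
    into the fractional cut in run time for a fixed workload."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return 1.0 - 1.0 / speedup


# The article's hypothetical: 40% less computation time than an A100.
print(f"{speedup_from_time_reduction(0.40):.2f}x")   # 1.67x

# Assumption: a "50% performance boost" read as 1.5x throughput.
print(f"{time_reduction_from_speedup(1.5):.1%}")     # 33.3%
```

Read this way, a 40% cut in training time corresponds to roughly a 1.67x speedup, while a 50% throughput gain shortens a fixed job by only about a third. The rumored figures are therefore not interchangeable, and the distinction is worth checking once official benchmarks appear.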

In inference tasks, where trained AI models are deployed to make predictions, the improvements could be even more pronounced. Some industry estimates suggest a 30-50% increase in efficiency, allowing for faster real-time processing of complex workloads such as autonomous driving or medical imaging analysis.

Why it matters

The timing of NVIDIA’s new AI chips is critical. As demand for advanced AI hardware surges across industries, from cloud providers to research labs, the company’s ability to deliver cutting-edge performance could solidify its market leadership. Competitors, including AMD with its MI300X and Intel with its Gaudi accelerators, are also ramping up their offerings, making this a pivotal moment for the AI chip landscape.

For businesses investing in AI infrastructure, these new chips could offer a compelling mix of performance and efficiency. Early adopters might gain a significant edge in training larger models or processing vast datasets more quickly, potentially accelerating innovation in fields like generative AI, robotics, and scientific research.

What to watch next

NVIDIA’s official announcement is expected within the next few months, likely accompanied by detailed benchmarks and comparisons. Key areas to monitor include:

- The exact performance metrics and how they stack up against existing solutions.

- Software ecosystem enhancements, such as updates to CUDA or support for new AI frameworks.

- Market reaction, including pricing strategies and potential supply constraints.

As the race for AI dominance intensifies, NVIDIA’s next moves will shape the future of machine learning hardware. Whether these chips live up to expectations or face challenges from rivals remains a story worth following closely.