The NVIDIA Groq 3 LPX chip marks a shift in how AI workloads are processed, balancing performance and power efficiency in ways that challenge traditional GPU architectures. While it delivers substantial speedups—up to 10x faster than the Groq 2—this gain comes with tradeoffs that developers must weigh carefully.

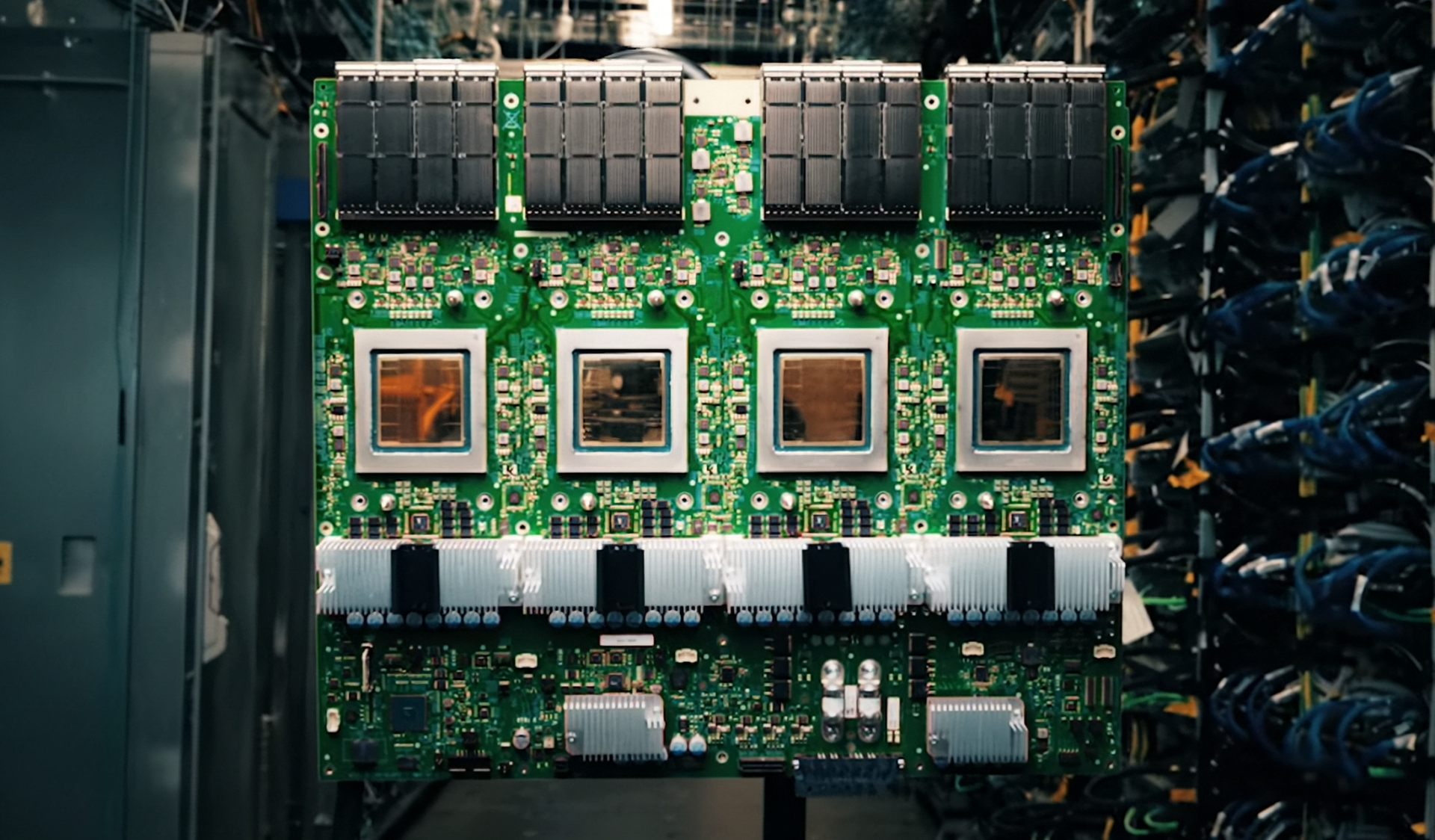

At its core, the Groq 3 LPX is built around a architecture optimized for AI inference, featuring 45 TOPS (tera operations per second) of performance. This leap forward is notable not just for raw speed but for how it redefines efficiency in data center environments. The chip operates at a lower power envelope than comparable solutions, making it an attractive option for workloads where thermal constraints are a concern.

One key tradeoff is platform lock-in. Unlike traditional GPUs that support a broad ecosystem of frameworks and libraries, the Groq 3 LPX is tightly integrated with NVIDIA’s AI software stack. Developers who rely on established tools like TensorFlow or PyTorch may find themselves constrained by this design, even as they benefit from optimized performance for specific workloads.

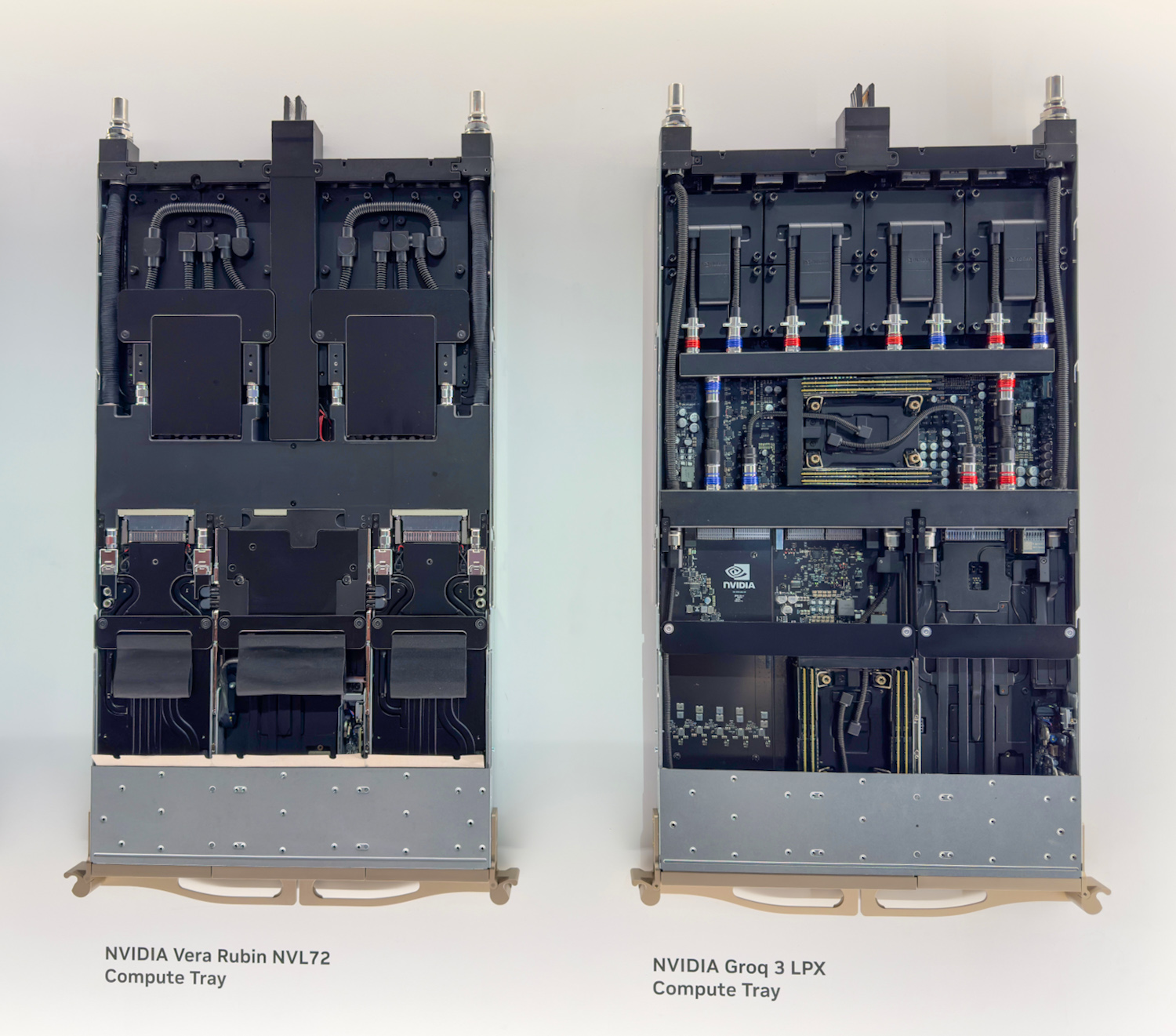

For PC builders and data center operators, the Groq 3 LPX introduces a new variable in system design. Its 96GB HBM2e memory capacity and support for up to 100W TDP mean it can slot into existing infrastructure with minimal adjustments, though compatibility will depend on the broader software landscape.

The chip’s clock speed of 3.5GHz is a departure from NVIDIA’s usual high-frequency approaches, favoring sustained throughput over peak performance. This aligns with AI workloads that prioritize efficiency over raw speed, but it may not suit applications requiring burst processing.

Looking ahead, the Groq 3 LPX could reshape enterprise AI deployments, particularly in edge computing and cloud services where power efficiency is critical. However, its success hinges on whether NVIDIA can expand its software ecosystem to reduce lock-in risks without sacrificing performance gains.