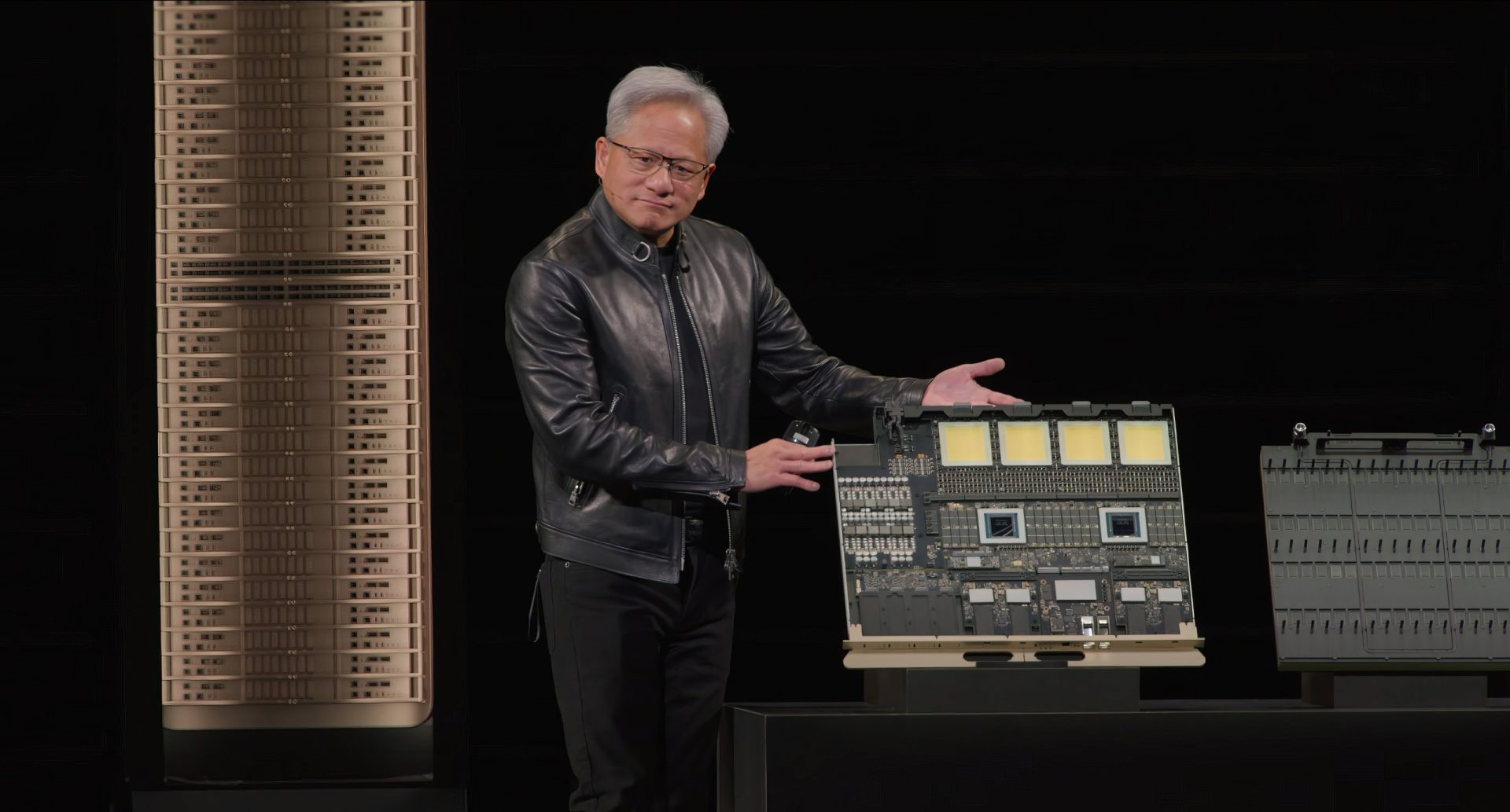

NVIDIA's AI chip strategy is taking a sharp turn through Thailand, creating a supply chain dynamic that power users now need to monitor. This detour from direct U.S. shipments comes as global trade relations, and hardware distribution in particular, face intense scrutiny. The chips in question, likely A100 or H100 variants, still deliver strong performance: the A100 pairs 40GB of HBM2 (or 80GB of HBM2e) with a PCIe 4.0 interface and boosts to about 1.41 GHz, while the H100 moves to PCIe 5.0. What has changed is the path these components take to reach data centers and research labs, especially in China, where demand for AI acceleration is surging.

Power User Considerations: Stability vs. Innovation

- Advanced users should brace for potential lead time fluctuations due to indirect routing.

- Software compatibility (CUDA, TensorRT) remains intact, but hardware availability may become less predictable.

- Long-term performance tuning could suffer if supply chain disruptions persist, for example when delayed deliveries leave a cluster running a mix of hardware generations.
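One way to make "lead time fluctuations" concrete is to pad the average delivery time by a multiple of its observed variability. The sketch below is illustrative only: the function name `lead_time_buffer`, the multiplier `z`, and the delivery history are assumptions, not figures from any vendor or distributor.

```python
from statistics import mean, stdev


def lead_time_buffer(lead_times_days, z=1.65):
    """Return (average, padded) lead time in days.

    padded = average + z * sample standard deviation. The default z=1.65
    is an illustrative choice: roughly a 95% one-sided bound if lead times
    were normally distributed, which real delivery data may not be.
    """
    if len(lead_times_days) < 2:
        raise ValueError("need at least two observations")
    avg = mean(lead_times_days)
    return avg, avg + z * stdev(lead_times_days)


# Hypothetical delivery history in days, for illustration only.
history = [30, 34, 45, 38, 60, 41]
avg, padded = lead_time_buffer(history)
print(f"average {avg:.1f} days, plan for about {padded:.1f} days")
```

The wider the spread in past deliveries, the larger the padding, which is the practical effect of less predictable routing on procurement planning.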

The Supermicro case, if it holds up under investigation, could set a precedent for how U.S. authorities examine hardware exports, potentially affecting even established manufacturers like NVIDIA. While no direct connection to NVIDIA has been confirmed, the broader implications suggest that supply chains will face stricter oversight moving forward.

Key Technical Specifications

- 40GB HBM2 memory configuration on the A100 (the 80GB model uses HBM2e); unchanged from original designs

- PCIe 4.0 (A100) or PCIe 5.0 (H100) interface support, depending on model

- Clock speed ranges: roughly 765 MHz base (PCIe card), 1.41 GHz boost for A100 series

The real challenge for power users isn't the hardware itself, but the ecosystem surrounding it. Indirect distribution introduces variables that can affect everything from procurement timing to quality control standards. For AI workloads requiring precise resource allocation, this adds a layer of complexity that wasn't present with traditional supply chains.
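For hardware that arrives through an indirect channel, a basic acceptance check is to compare what the driver reports against what was ordered. The following is a minimal sketch, assuming the NVIDIA driver and the `nvidia-smi` tool are installed on the host. The `EXPECTED` thresholds and the sample row are hypothetical and should be replaced with the specifications of the card actually purchased.

```python
import subprocess

# Fields requested from nvidia-smi, in this order.
QUERY = "name,memory.total,pcie.link.gen.max,clocks.max.sm"

# Hypothetical acceptance thresholds for an A100 40GB PCIe card.
EXPECTED = {"name_contains": "A100", "min_memory_mib": 40000, "min_pcie_gen": 4}


def parse_gpu_line(line):
    """Parse one CSV row (no header, no units) into a dict.

    Assumes every field is populated; a value such as "[N/A]" would
    raise ValueError here and should be handled in production use.
    """
    name, mem_mib, pcie_gen, max_sm_mhz = [f.strip() for f in line.split(",")]
    return {
        "name": name,
        "memory_mib": int(mem_mib),
        "pcie_gen": int(pcie_gen),
        "max_sm_mhz": int(max_sm_mhz),
    }


def check_gpu(info, expected):
    """Return a list of mismatch descriptions (empty if everything matches)."""
    problems = []
    if expected["name_contains"] not in info["name"]:
        problems.append(f"unexpected model: {info['name']}")
    if info["memory_mib"] < expected["min_memory_mib"]:
        problems.append(f"memory too small: {info['memory_mib']} MiB")
    if info["pcie_gen"] < expected["min_pcie_gen"]:
        problems.append(f"PCIe generation too low: {info['pcie_gen']}")
    return problems


def query_gpus():
    """Query every GPU on this host; requires nvidia-smi on the PATH."""
    out = subprocess.run(
        ["nvidia-smi", f"--query-gpu={QUERY}", "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    ).stdout
    return [parse_gpu_line(row) for row in out.splitlines() if row.strip()]


# Illustrative sample row, so the check can be tried without a GPU.
sample = parse_gpu_line("NVIDIA A100-PCIE-40GB, 40960, 4, 1410")
print(check_gpu(sample, EXPECTED))
```

On a machine with the cards installed, `query_gpus()` returns one dict per GPU that can be passed to `check_gpu` in the same way. A check like this only confirms what the driver reports; it does not replace vendor warranty or provenance verification.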

Looking Ahead: The Future of AI Hardware Distribution

The next 18-24 months will likely see significant changes in how AI hardware moves globally. NVIDIA's current approach—while technically sound—may force the company to adapt its distribution model more rapidly than originally planned. Developers should keep a close eye on both regulatory developments and supply chain stability, as these factors could redefine the landscape for high-performance computing.

For now, the chips themselves remain at the forefront of AI acceleration technology. The journey to get them is what's becoming increasingly complex—and that's a challenge power users can't afford to ignore.