NVIDIA's latest advancements in AI inference are poised to deliver a substantial performance leap, with benchmarks suggesting up to a 35x improvement. If that figure holds outside the benchmark setting, this is more than an incremental update: it could change how developers approach large-scale model deployment.

The focus here is on the practical implications of this acceleration. For developers, the promise is faster processing and more efficient use of hardware. The real-world benefits, such as lower latency and better scalability, are still being tested in production environments. Raw benchmark numbers are only part of the picture; what matters is how much of that gain reaches end users and existing workflows.
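One way to reason about how a headline speedup translates into end-to-end gains is Amdahl's law: if model inference accounts for only part of a request's total time, accelerating that part alone yields a smaller overall improvement. The sketch below is illustrative only. The 35x value is the headline figure quoted above, while the function name and the inference-time fractions are hypothetical assumptions, not measurements from NVIDIA or any benchmark.

```python
def end_to_end_speedup(accelerated_fraction: float, component_speedup: float) -> float:
    """Amdahl's law: overall speedup when only a fraction of total time is accelerated.

    accelerated_fraction: share of request time spent in the accelerated part (0..1).
    component_speedup: speedup of that part alone (e.g. 35.0 for "35x").
    """
    if not 0.0 <= accelerated_fraction <= 1.0:
        raise ValueError("accelerated_fraction must be between 0 and 1")
    if component_speedup <= 0.0:
        raise ValueError("component_speedup must be positive")
    remaining = 1.0 - accelerated_fraction          # time that is not accelerated
    accelerated = accelerated_fraction / component_speedup  # accelerated time after speedup
    return 1.0 / (remaining + accelerated)


if __name__ == "__main__":
    headline_speedup = 35.0  # figure quoted in the article
    # Hypothetical shares of request time spent in model inference.
    for fraction in (0.5, 0.8, 0.95, 1.0):
        overall = end_to_end_speedup(fraction, headline_speedup)
        print(f"inference share {fraction:.0%}: overall speedup {overall:.2f}x")
```

Under these assumed fractions, a workload that spends half its time outside inference (networking, preprocessing, queuing) would see less than a 2x end-to-end improvement even with a 35x faster inference step, which is why production measurements matter more than the headline number.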

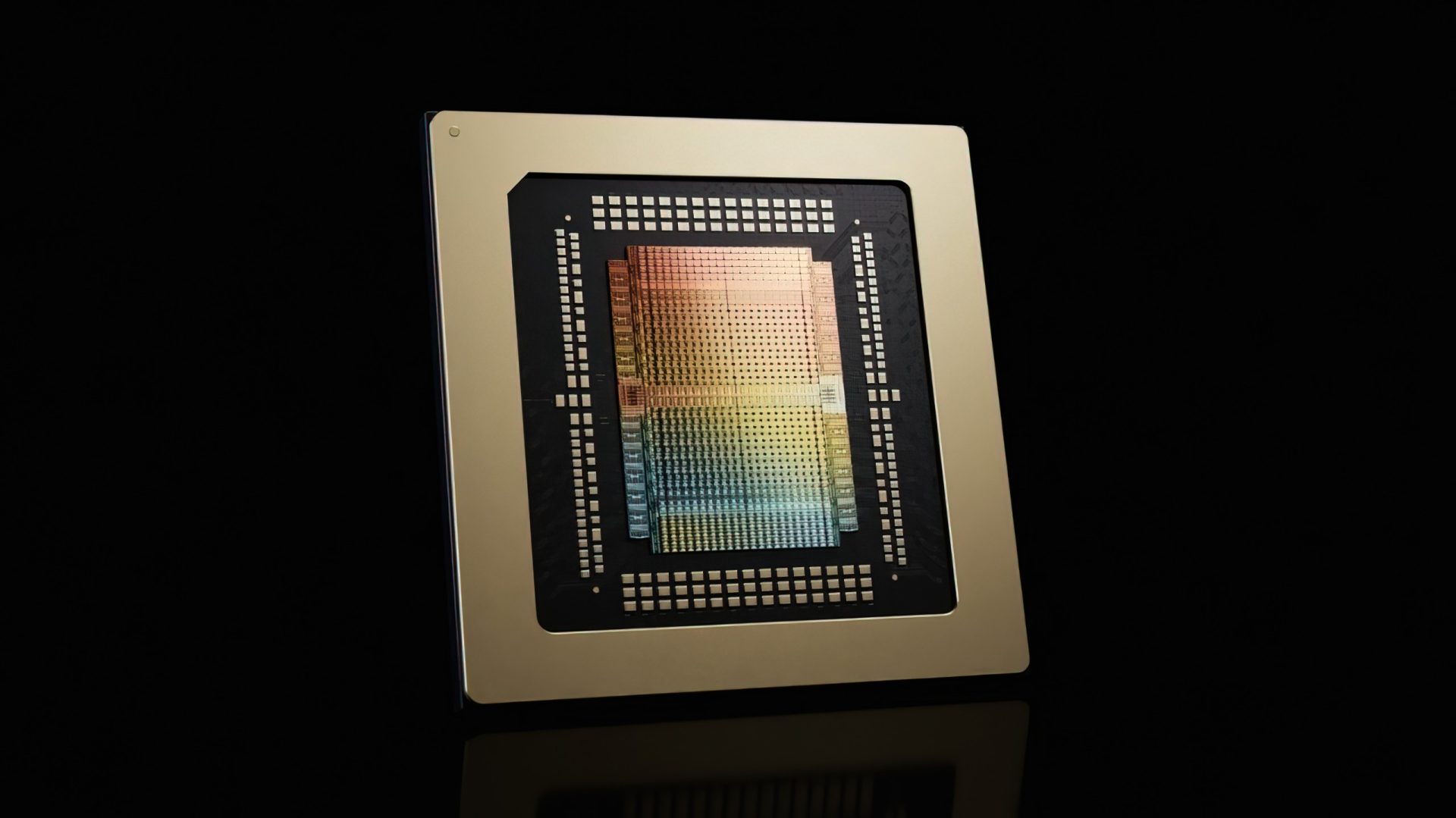

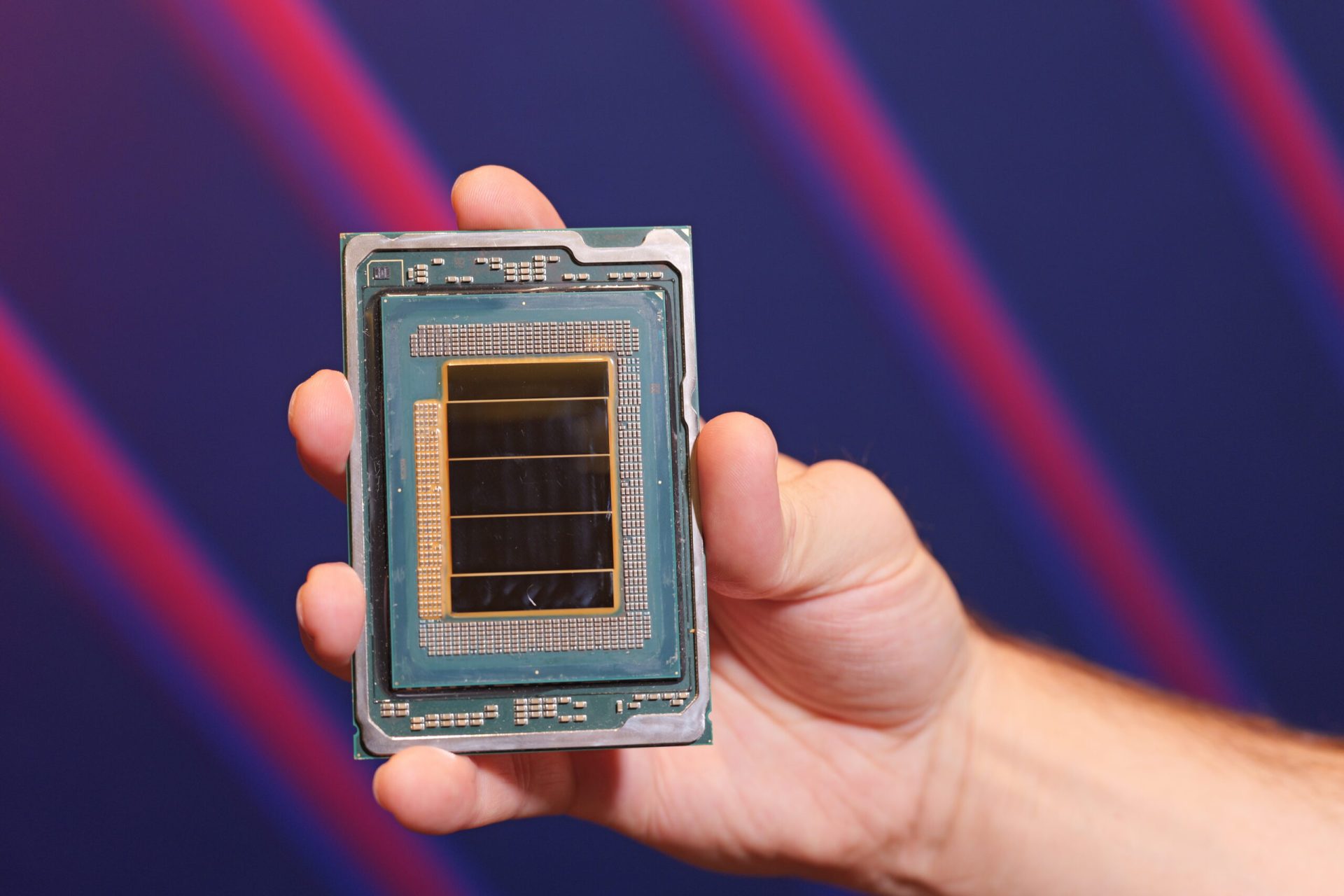

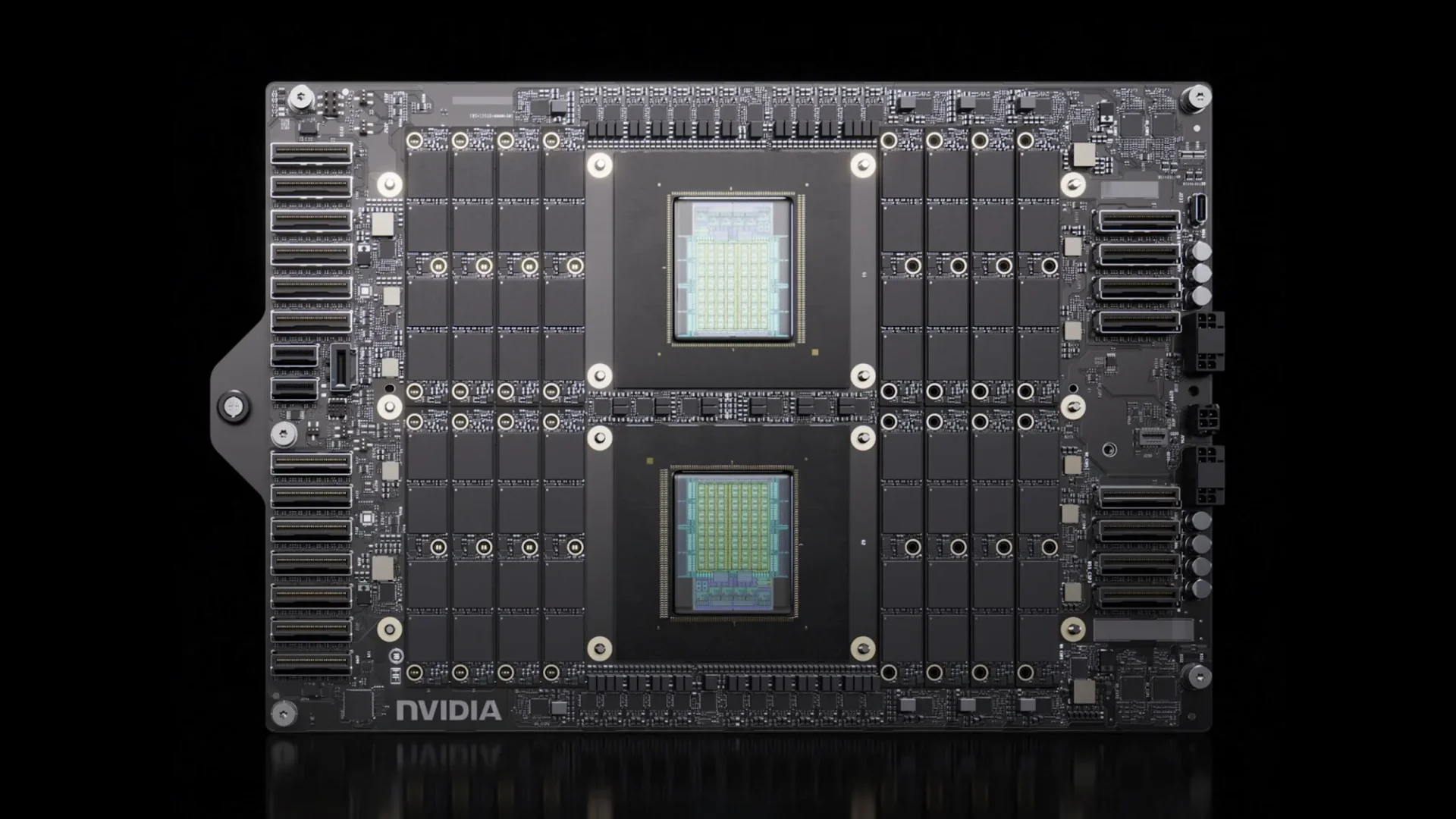

This development rests on two elements: the hardware itself and the software ecosystem that supports it. NVIDIA's new platforms are designed to run trillion-parameter models more efficiently than earlier generations. The open questions are how these systems integrate into existing infrastructure and whether they deliver consistent performance without introducing new bottlenecks.

One of the standout features is the ability to process large-scale models with minimal overhead. This is particularly relevant for enterprises and research institutions that rely on massive datasets and complex architectures. The risk is that this acceleration comes at the cost of platform lock-in, a common pitfall in the AI hardware space. Developers need tools that offer flexibility without sacrificing performance.

Looking ahead, the outlook is promising but calls for caution. On one hand, accelerating AI workloads by such a margin could unlock new real-time applications, from autonomous systems to advanced analytics. On the other, the competitive landscape is already heating up, with players like Groq making strides in similar territory. NVIDIA's move could set a new benchmark, but it will need to prove itself in head-to-head comparisons.

For developers working on high-performance AI systems, the practical takeaway is that this advancement could matter a great deal. The focus should remain on how these improvements fit into existing workflows and whether they deliver consistent, reliable performance without introducing new dependencies or limitations. The race to dominate AI inference is on, and NVIDIA's latest move is a significant step forward, though only an early one.