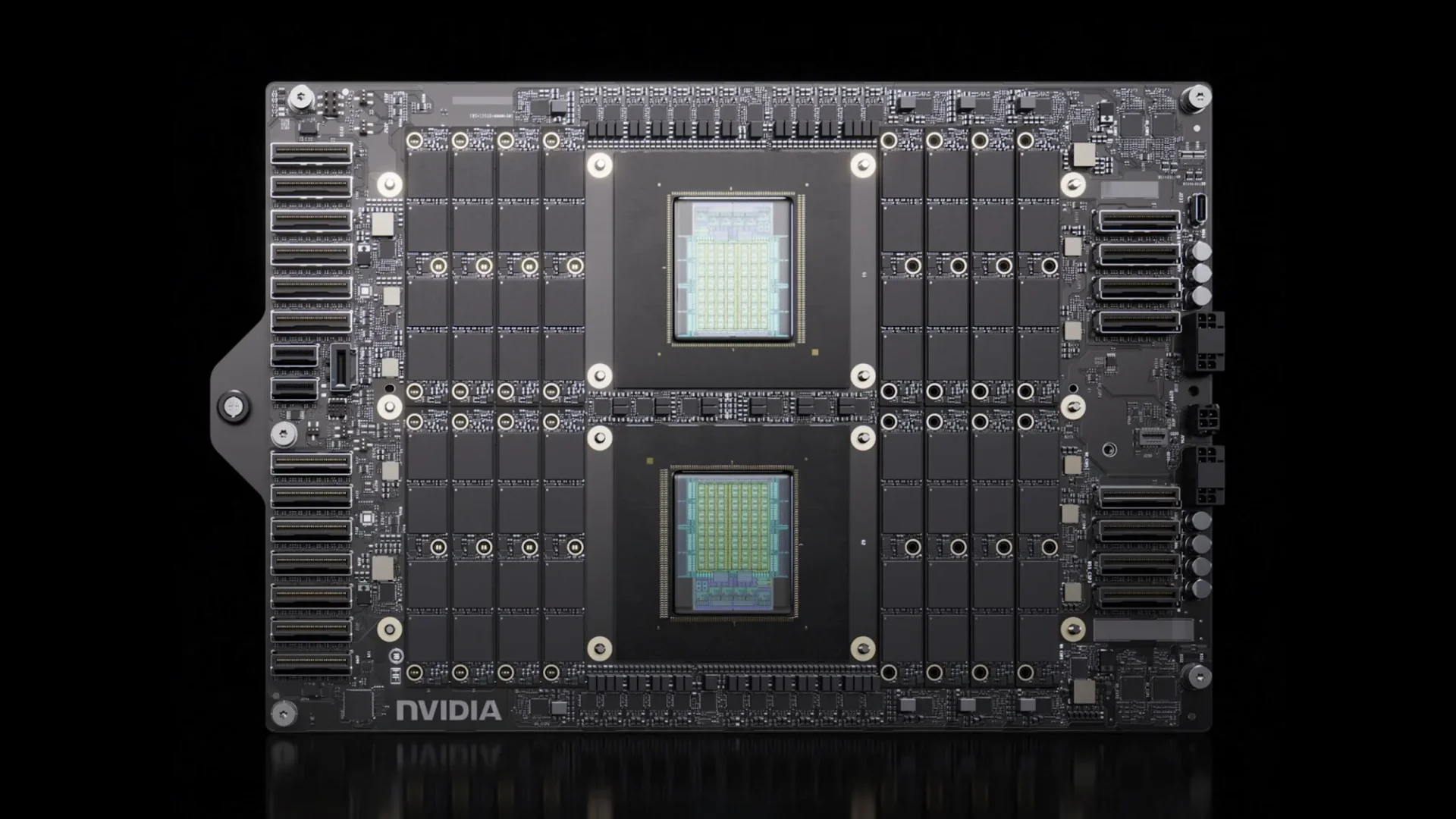

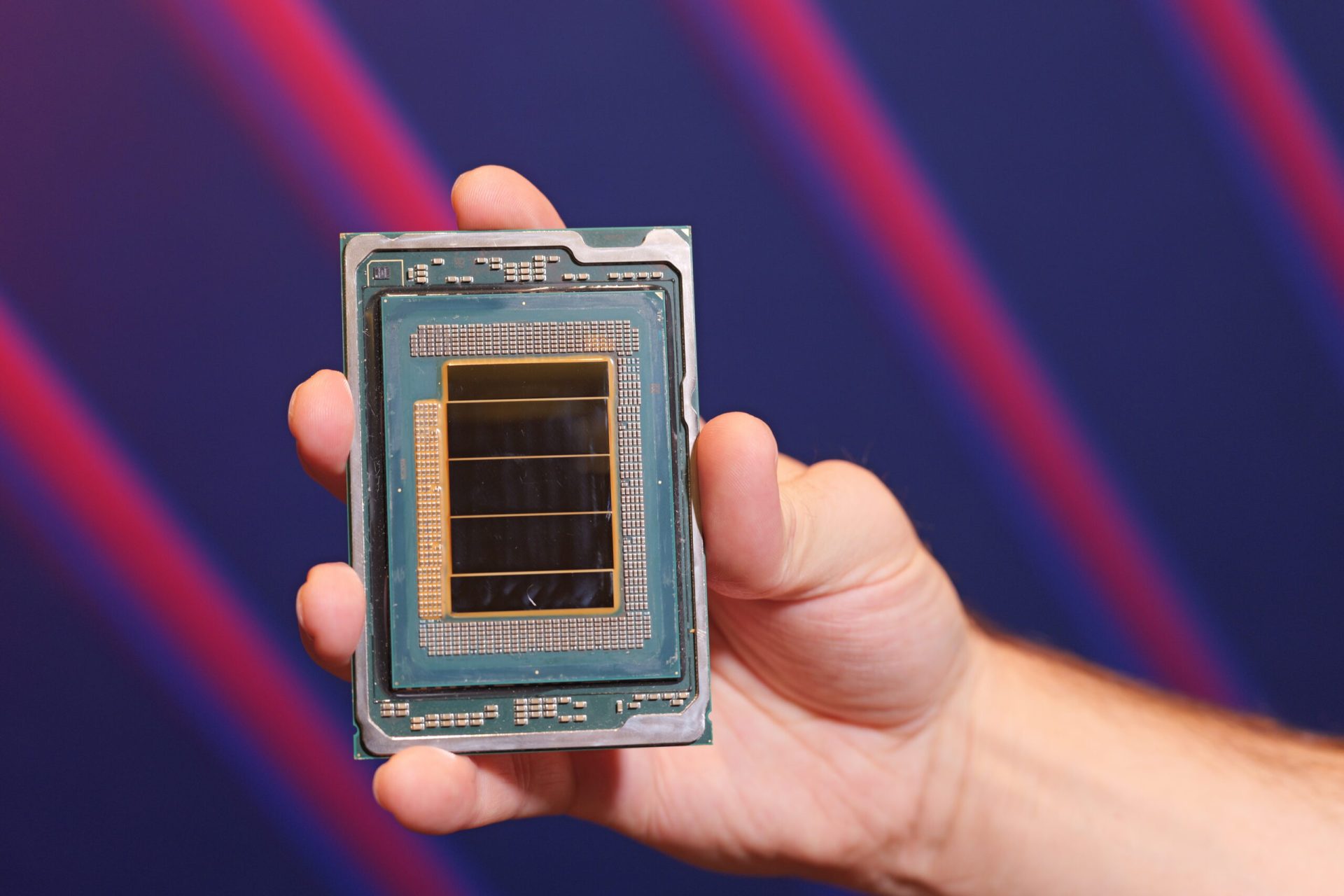

NVIDIA Taps Taiwanese Nanya Technology’s LPDDR5X Memory For Vera Rubin Platform, Offering 3x Capacity & Over 50% Bandwidth Boost

Hassan Mujtaba

A Taiwan-based manufacturer is reportedly the country's first to offer LPDDR5X memory for NVIDIA Vera Rubin platforms, as the green team diversifies its supply chain to power its Agentic AI powerhouse.

NVIDIA Diversifies Its Supply Chain, Adding Taiwan-Based Memory Maker For Vera Rubin's LPDDR5X Solution

NVIDIA will require a lot of memory, both low-power and high-bandwidth, to fuel the growing needs of Agentic AI with its Vera Rubin platforms.

NVIDIA's Vera Rubin uses two types of memory: the Vera CPUs use LPDDR5X DRAM, while the Rubin GPUs use HBM4 DRAM. The two serve different purposes. HBM4 is compact and offers much higher bandwidth, while LPDDR5X is power efficient and offers higher densities. HBM4 is also harder to produce, and only a few major firms (Samsung, SK Hynix, and Micron) can make it, whereas LPDDR memory is widely deployed. This allows NVIDIA to diversify its supply chain partners.

Micron and SK Hynix have already developed SOCAMM2 memory for Vera Rubin platforms using their LPDDR5X solutions, but NVIDIA is searching for more supply chain partners. Based on a report from UDN, it looks like NVIDIA has found one in Taiwan. Nanya Technology is a Taiwan-based memory manufacturer that produces LPDDR5/LPDDR5X memory, and it has been selected as a supply chain partner for NVIDIA's Vera Rubin.

In UDN's words: "Nanya Technology has become the first Taiwanese manufacturer to enter NVIDIA's AI server main memory system, breaking the previous dominance of Korean and American companies and marking a new milestone for Taiwan's memory industry."

This is a big win for Nanya Technology, as most Taiwanese memory manufacturers involved in the PC memory business were unable to meet the specifications for AI platforms. To address this, TSMC guided local companies on manufacturing and process optimizations. The result is visible today, with Nanya Technology being selected to produce memory for the fastest next-gen AI system on the planet.

NVIDIA Vera Rubin platforms with LPDDR5X will deliver a major boost over Grace Blackwell (GB300) servers. Each Vera Rubin Superchip will pack 1.5 TB of memory operating at 1.2 TB/s, a 3x increase in capacity and a 50% increase in bandwidth versus the previous generation. The Vera CPUs will also be deployed for rack-scale AI, offering 256 Vera chips per rack, up to 400 TB of memory, and up to 315 TB/s of bandwidth.

Vera is just as crucial as Rubin as Agentic AI shifts the core focus from GPUs to CPUs. With CPU demand growing and workloads requiring more memory, even though KV cache is being compressed by 90% in newer models, having multiple global supply chain partners is crucial. NVIDIA has made the right moves to meet its demand by selecting Taiwan-based memory makers in addition to its global partners.

| | NVIDIA Vera CPU Rack | NVIDIA Vera CPU |
| --- | --- | --- |
| Configuration | 256 Vera CPUs | 1 Vera CPU |
| Cores / Threads | 22,528 NVIDIA Olympus cores / 45,056 threads | 88 NVIDIA Olympus cores / 176 threads |
| Memory Capacity | Up to 400 TB | Up to 1.5 TB |
| Aggregate Bandwidth | Up to 315 TB/s | Up to 1.2 TB/s |
| N/S Networking | NVIDIA BlueField-4 DPU | N/A |
| Cooling | Liquid Cooled | N/A |
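
The rack-level numbers quoted above can be sanity-checked against the per-CPU figures with simple multiplication. The sketch below is only an illustration: the constant and function names are hypothetical, and it assumes the rack totals are plain multiples of the per-CPU "up to" values, which the source does not state.

```python
# Figures quoted in the article; all names here are illustrative only.
CPUS_PER_RACK = 256
CORES_PER_CPU = 88
THREADS_PER_CORE = 2           # 176 threads / 88 cores per Vera CPU
MEMORY_PER_CPU_TB = 1.5        # "up to" figure per Vera CPU
BANDWIDTH_PER_CPU_TBS = 1.2    # "up to" figure per Vera CPU


def rack_totals(cpus: int = CPUS_PER_RACK) -> dict:
    """Scale the per-CPU figures linearly to a rack of `cpus` Vera CPUs."""
    cores = cpus * CORES_PER_CPU
    return {
        "cores": cores,
        "threads": cores * THREADS_PER_CORE,
        "memory_tb": cpus * MEMORY_PER_CPU_TB,
        "bandwidth_tbs": cpus * BANDWIDTH_PER_CPU_TBS,
    }


if __name__ == "__main__":
    for name, value in rack_totals().items():
        print(f"{name}: {value}")
```

Under this assumption the core and thread counts match the quoted 22,528 and 45,056 exactly, while memory and bandwidth come out at 384 TB and 307.2 TB/s, slightly below the quoted "up to 400 TB" and "up to 315 TB/s". The source does not explain the difference; rounding of the per-CPU figures is one possible reason.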

27 Apr 2026, 09:41 AM

