NVIDIA’s Blackwell Ultra platform is redefining AI inference efficiency, delivering up to 50 times higher throughput per megawatt than its predecessor, the Hopper architecture. The gain does not come from raw speed alone. It comes from a full-stack redesign of hardware, software, and system architecture that sharply reduces costs for AI workloads, particularly low-latency and long-context applications such as agentic coding assistants.

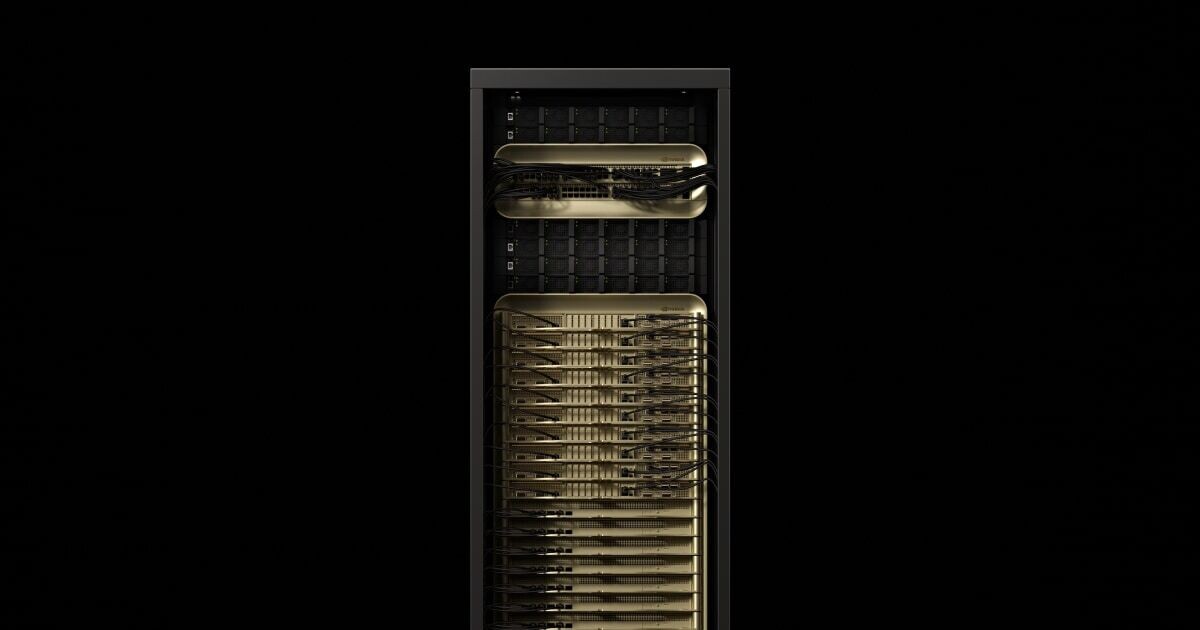

The GB300 NVL72 system, powered by Blackwell Ultra GPUs, achieves these gains through several architectural innovations, including 1.5x higher NVFP4 compute performance and 2x faster attention-layer processing than the original Blackwell GPU. These improvements let AI agents reason across entire codebases in real time while keeping response latency at the millisecond level, a critical requirement for interactive workflows.
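NVFP4 is NVIDIA’s 4-bit floating-point format: each value is stored as FP4 (E2M1), every block of 16 values shares a scale factor stored in FP8 (E4M3), and a per-tensor FP32 scale sits on top. The sketch below shows the core idea of block scaling in plain Python. It deliberately simplifies the format, keeping each block scale as an ordinary float and dropping the per-tensor scale, so it illustrates the technique rather than NVIDIA’s bit-exact encoding.

```python
# Simplified NVFP4-style block quantization (illustrative only).
# Assumptions: 16-element blocks and FP4 (E2M1) element values as described
# for NVFP4. The per-block scale is kept as a Python float instead of being
# rounded to FP8 (E4M3), and the per-tensor FP32 scale is omitted.

E2M1_MAGNITUDES = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)  # non-negative FP4 E2M1 values
BLOCK_SIZE = 16


def quantize_block(block):
    """Quantize up to BLOCK_SIZE floats to (scale, signed E2M1 codes)."""
    amax = max(abs(x) for x in block)
    scale = amax / 6.0 if amax > 0 else 1.0  # largest magnitude maps to E2M1 max (6.0)
    codes = []
    for x in block:
        mag = abs(x) / scale
        # Round to the nearest representable magnitude (ties go to the smaller one).
        nearest = min(E2M1_MAGNITUDES, key=lambda v: abs(v - mag))
        codes.append(nearest if x >= 0 else -nearest)
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from one quantized block."""
    return [c * scale for c in codes]


def quantize(values):
    """Split a flat list into 16-element blocks and quantize each one."""
    return [quantize_block(values[i:i + BLOCK_SIZE])
            for i in range(0, len(values), BLOCK_SIZE)]


if __name__ == "__main__":
    data = [0.37 * i - 5.0 for i in range(40)]  # arbitrary example values
    for idx, (scale, codes) in enumerate(quantize(data)):
        original = data[idx * BLOCK_SIZE:(idx + 1) * BLOCK_SIZE]
        restored = dequantize_block(scale, codes)
        worst = max(abs(a - b) for a, b in zip(original, restored))
        print(f"block {idx}: scale={scale:.4f}, max abs error={worst:.4f}")
```

Because every block carries its own scale, a single outlier only coarsens the values in its own 16-element block instead of the whole tensor; that locality is the main motivation for small block sizes in formats like NVFP4.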

At a glance

- Up to 50x higher throughput per megawatt: Blackwell Ultra outperforms Hopper on efficiency, with the largest gains in low-latency workloads.

- Up to 35x lower cost per million tokens than Hopper: Real-world savings for AI inference providers, especially in agentic and coding-assistant applications.

- 1.5x cost advantage for long-context workloads: GB300 NVL72 delivers better economics for 128,000-token inputs compared to GB200.

- Software-hardware codesign: NVIDIA’s TensorRT-LLM, Dynamo, and NVLink optimizations unlock continuous performance improvements.

- Production adoption: Cloud providers like Microsoft, CoreWeave, and OCI are already deploying GB300 NVL72 for agentic AI workloads.

- Next generation: The Rubin platform, set to follow Blackwell Ultra, promises another 10x leap in throughput per megawatt for mixture-of-experts inference.
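Throughput per megawatt and cost per million tokens are linked by simple arithmetic: throughput per megawatt sets how many tokens a fixed power budget produces, and cost per million tokens divides everything spent per hour by that output. The sketch below uses made-up inputs (electricity price, a hypothetical amortized hardware and facility cost per MW-hour, and throughput), not NVIDIA’s figures or methodology, to show that a throughput-per-megawatt gain carries over one-for-one to cost per token only when spending per MW-hour stays the same.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    """Hypothetical inference deployment, normalized to 1 MW of power."""
    tokens_per_sec_per_mw: float  # sustained output throughput per megawatt
    energy_usd_per_kwh: float     # electricity price (hypothetical)
    other_usd_per_mw_hour: float  # amortized hardware, facility, etc. (hypothetical)


def cost_per_million_tokens(d: Deployment) -> float:
    """Total USD spent per MW-hour divided by tokens produced per MW-hour."""
    tokens_per_mw_hour = d.tokens_per_sec_per_mw * 3600
    energy_usd_per_mw_hour = 1000 * d.energy_usd_per_kwh  # 1 MW for 1 h = 1,000 kWh
    usd_per_mw_hour = energy_usd_per_mw_hour + d.other_usd_per_mw_hour
    return usd_per_mw_hour / tokens_per_mw_hour * 1_000_000


if __name__ == "__main__":
    # Illustrative numbers only; not measured or published figures.
    baseline = Deployment(tokens_per_sec_per_mw=1_000, energy_usd_per_kwh=0.10,
                          other_usd_per_mw_hour=400)
    newer = Deployment(tokens_per_sec_per_mw=50_000, energy_usd_per_kwh=0.10,
                       other_usd_per_mw_hour=2_000)
    ratio = cost_per_million_tokens(baseline) / cost_per_million_tokens(newer)
    print(f"50x throughput per MW -> {ratio:.1f}x lower cost per million tokens")
```

With these made-up inputs, a 50x throughput gain yields roughly an 11.9x cost reduction, because the hypothetical newer deployment spends more per MW-hour; with equal spending per MW-hour, the two ratios would match exactly.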

Why this matters

The shift toward agentic AI, where systems autonomously carry out multi-step reasoning tasks, demands both low latency and high throughput. Traditional inference architectures struggle with the computational overhead of long-context processing, which often leads to prohibitive costs or slow response times. Blackwell Ultra addresses this by rethinking memory access, kernel optimization, and inter-GPU communication. For example, NVLink Symmetric Memory lets GPUs access each other’s memory directly, while programmatic dependent launch lets a kernel begin its setup before the preceding kernel has finished, shrinking the idle gaps between consecutive kernels.
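Long-context processing is expensive largely because attention work grows with the square of the input length, while the weight-multiplying layers grow only linearly. The sketch below makes that concrete with a rough prefill FLOP count for a hypothetical dense transformer (32 layers, `d_model` = 4096). It assumes the common approximation of about 12·`d_model`² weights per layer and ignores grouped-query attention, mixture-of-experts routing, and kernel efficiency, so treat the output as an order-of-magnitude illustration, not a model of any NVIDIA system.

```python
def prefill_flops(seq_len: int, d_model: int, n_layers: int,
                  causal: bool = True) -> tuple[int, int]:
    """Rough prefill FLOPs for a hypothetical dense decoder-only transformer.

    Returns (linear_flops, attention_flops). Assumes ~12 * d_model**2 weights
    per layer (attention projections + MLP) and 2 FLOPs per weight per token.
    """
    # Weight-multiplying layers: cost grows linearly with sequence length.
    linear = 2 * seq_len * 12 * d_model**2 * n_layers
    # Q @ K^T and softmax(scores) @ V: each ~2 * seq_len**2 * d_model FLOPs per layer.
    attention = 4 * seq_len**2 * d_model * n_layers
    if causal:
        attention //= 2  # a causal mask needs only about half of the score matrix
    return linear, attention


if __name__ == "__main__":
    for n in (8_000, 128_000):
        lin, att = prefill_flops(n, d_model=4096, n_layers=32)
        print(f"{n:>7,} tokens: attention/linear FLOP ratio = {att / lin:.2f}")
    # prints:
    #   8,000 tokens: attention/linear FLOP ratio = 0.16
    # 128,000 tokens: attention/linear FLOP ratio = 2.60
```

Under these assumptions, going from 8,000 to 128,000 tokens multiplies linear-layer work by 16 but attention work by 256, so attention moves from a minor share of prefill to the dominant one. That is the regime where faster attention processing pays off most.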

These advancements translate directly into business outcomes. AI platforms such as Baseten, DeepInfra, and Together AI have already cut their cost per token by up to 10x with Blackwell. GB300 NVL72 widens that efficiency gap further, enabling providers to offer real-time interactive experiences at scale. Its ability to process 128,000-token inputs at 1.5x lower cost than GB200 makes it particularly compelling for coding assistants that must analyze entire codebases, a task that would otherwise be prohibitively expensive to serve interactively.
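Serving a 128,000-token input also means holding its key-value (KV) cache in GPU memory and reading it for every generated token. A back-of-the-envelope sizing, assuming a hypothetical model with 32 layers, 8 KV heads (grouped-query attention), and 128-dimensional heads, shows why long contexts stress memory capacity and bandwidth. The configuration is illustrative and not tied to any specific model or to GB300 NVL72.

```python
def kv_cache_bytes(seq_len: int, n_layers: int, n_kv_heads: int,
                   head_dim: int, bytes_per_elem: int) -> int:
    """KV-cache size for one sequence of a hypothetical transformer."""
    # Factor of 2: one key vector and one value vector per KV head, per layer, per token.
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_elem * seq_len


GIB = 1024**3

if __name__ == "__main__":
    for name, nbytes in (("FP16", 2), ("FP8", 1)):
        size = kv_cache_bytes(128_000, n_layers=32, n_kv_heads=8,
                              head_dim=128, bytes_per_elem=nbytes)
        print(f"{name}: {size / GIB:.4f} GiB per 128,000-token sequence")
    # prints:
    # FP16: 15.6250 GiB per 128,000-token sequence
    # FP8: 7.8125 GiB per 128,000-token sequence
```

Halving the bytes per element halves the cache, which is one reason low-precision formats matter for long-context serving. Real deployments may also page, shard, or compress the cache, which this sketch ignores.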

Looking ahead

While Blackwell Ultra is already making waves, NVIDIA’s roadmap doesn’t stop here. The upcoming Rubin platform, which combines six new chips into a single AI supercomputer, is poised to deliver another major leap. For mixture-of-experts inference, Rubin aims to achieve 10x higher throughput per megawatt, cutting token costs by another order of magnitude. This could reshape the economics of frontier AI models, which currently require massive GPU clusters to train and serve.

The adoption of Blackwell Ultra by industry leaders signals a broader trend: AI infrastructure is evolving to meet the demands of next-generation applications. As agentic AI becomes more prevalent, driven by a surge in software-programming queries (now about 50% of the AI queries tracked by OpenRouter), the need for efficient, low-latency inference will only grow. Blackwell Ultra isn’t just an incremental upgrade; it’s a blueprint for how AI systems will scale in the coming years.