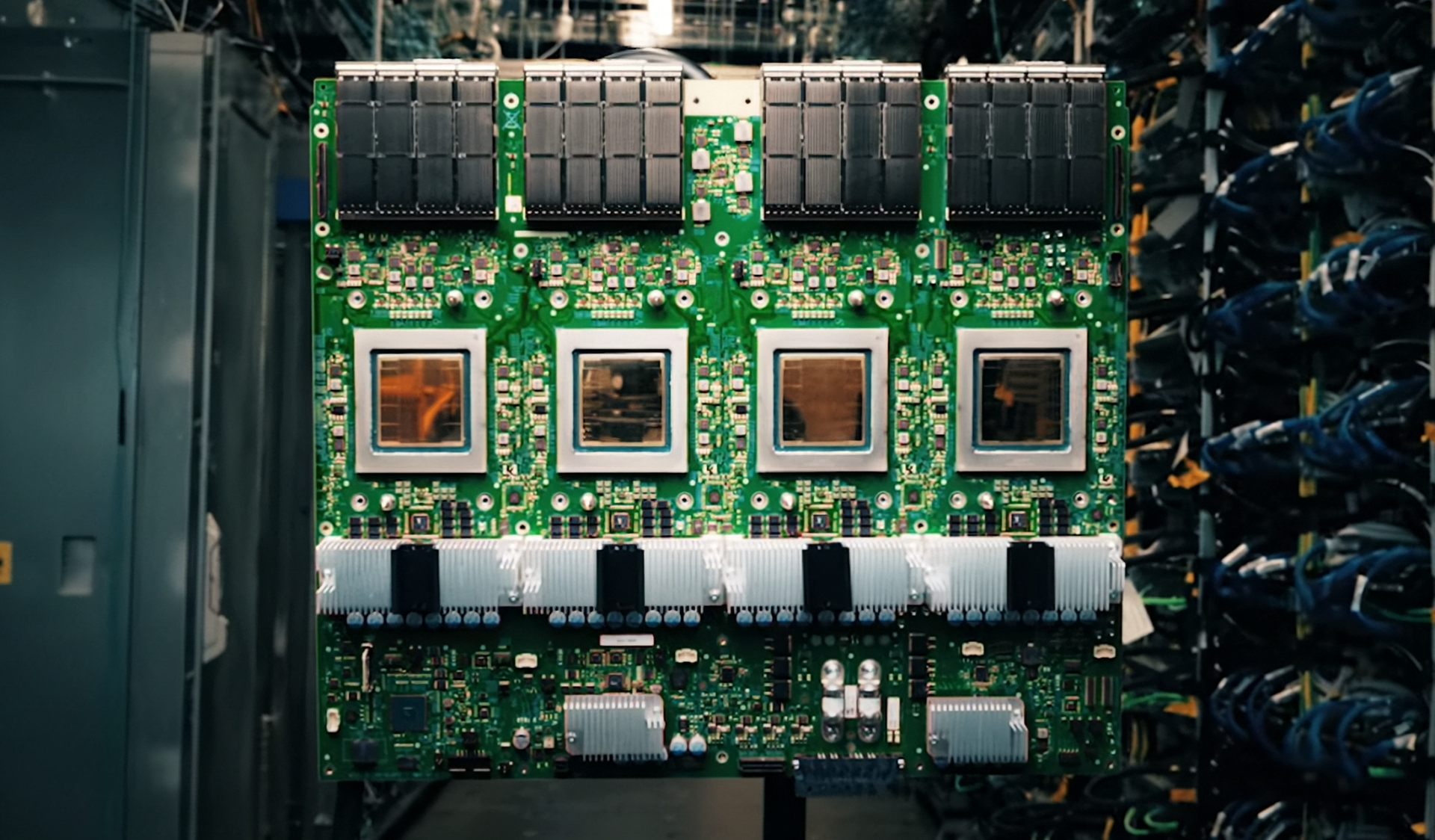

The H200 GPU, once sidelined by geopolitical constraints, is back in production, a development that could reshape NVIDIA's supply chain. It arrives alongside a new Groq-based solution, which hints at a strategic realignment for data center workloads.

For power users, this shift could bring operational benefits such as lower inference latency and better efficiency. The H200's return means access to its 141 GB of HBM3e memory (4.8 TB/s of bandwidth) and roughly 1,979 TFLOPS of FP16 Tensor Core throughput (with sparsity) without the supply uncertainty that previously surrounded it. Meanwhile, the Groq partnership opens a new path for AI inference, potentially reducing reliance on NVIDIA's own GPU accelerators for that workload.

That's the upside; here's the catch. Groq's hardware is designed for inference rather than training, so while it promises cost savings, it is not a substitute for the H200's raw throughput in training workloads. Users committed to end-to-end NVIDIA workflows should weigh whether it is a complementary tool or a compromise on performance.

Looking ahead, NVIDIA’s ability to stabilize its China operations will determine how quickly these gains materialize. If production scales smoothly, the H200 could become a cornerstone for both AI training and inference. But without clarity on long-term supply chains, some operators may remain cautious about committing to large-scale deployments.

The Groq collaboration, though still in its early stages, suggests NVIDIA is hedging against potential bottlenecks. Whether it becomes a standard alternative or remains a niche offering depends on how quickly it integrates with the existing CUDA ecosystem. That integration has not been confirmed, although work on it is reportedly under way.