Dubbed the NVLink 6 spine, this interconnect acts as the nervous system of Rubin, enabling direct, high-speed communication between GPUs and CPUs within the rack. The result is a system that NVIDIA rates at 260 terabytes per second of aggregate NVLink bandwidth per rack, a figure that dwarfs what PCIe-based interconnects can deliver. That throughput matters in practice: it determines how quickly GPUs can exchange data during training and inference, a critical factor for applications like autonomous systems, generative AI, and large-scale simulations.

The spine’s design matters just as much. Rather than sending GPU-to-GPU traffic through PCIe switches or host bridges, it carries that traffic over a dedicated backplane to NVLink switch chips, which NVIDIA says reduces latency while increasing reliability. For hyperscalers running 24/7 operations, this means fewer interruptions and more predictable performance. NVLink 6 provides 3.6 TB/s of bandwidth per GPU, allowing multiple GPUs to work in unison as if they were a single, massive processing unit.
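As a rough sanity check on the rack-level figure, the sketch below multiplies a per-GPU NVLink bandwidth by a GPU count and compares one GPU's NVLink bandwidth with a PCIe 6.0 x16 slot. The 72-GPU rack size, the 3.6 TB/s per-GPU figure, and the approximate PCIe number are assumptions drawn from public announcements and specifications rather than from this article, and the function name is purely illustrative.

```python
# Back-of-the-envelope check of the rack-level NVLink figure.
# All constants are assumptions taken from public announcements and specs,
# not measurements.
GPUS_PER_RACK = 72            # assumed NVL72-style rack
NVLINK6_TBPS_PER_GPU = 3.6    # assumed per-GPU NVLink 6 bandwidth, TB/s
PCIE6_X16_TBPS = 0.256        # approx. PCIe 6.0 x16, both directions, TB/s


def aggregate_bandwidth_tbps(gpu_count: int, tbps_per_gpu: float) -> float:
    """Sum of per-GPU link bandwidth across the rack, in TB/s."""
    return gpu_count * tbps_per_gpu


rack_tbps = aggregate_bandwidth_tbps(GPUS_PER_RACK, NVLINK6_TBPS_PER_GPU)
per_gpu_ratio = NVLINK6_TBPS_PER_GPU / PCIE6_X16_TBPS

print(f"Aggregate NVLink bandwidth: {rack_tbps:.1f} TB/s")
print(f"Per-GPU NVLink vs. PCIe x16: {per_gpu_ratio:.1f}x")
```

Under these assumptions the product comes to a little under 260 TB/s, which is consistent with the headline number being a rounded sum of per-GPU bandwidth rather than a measured sustained rate.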

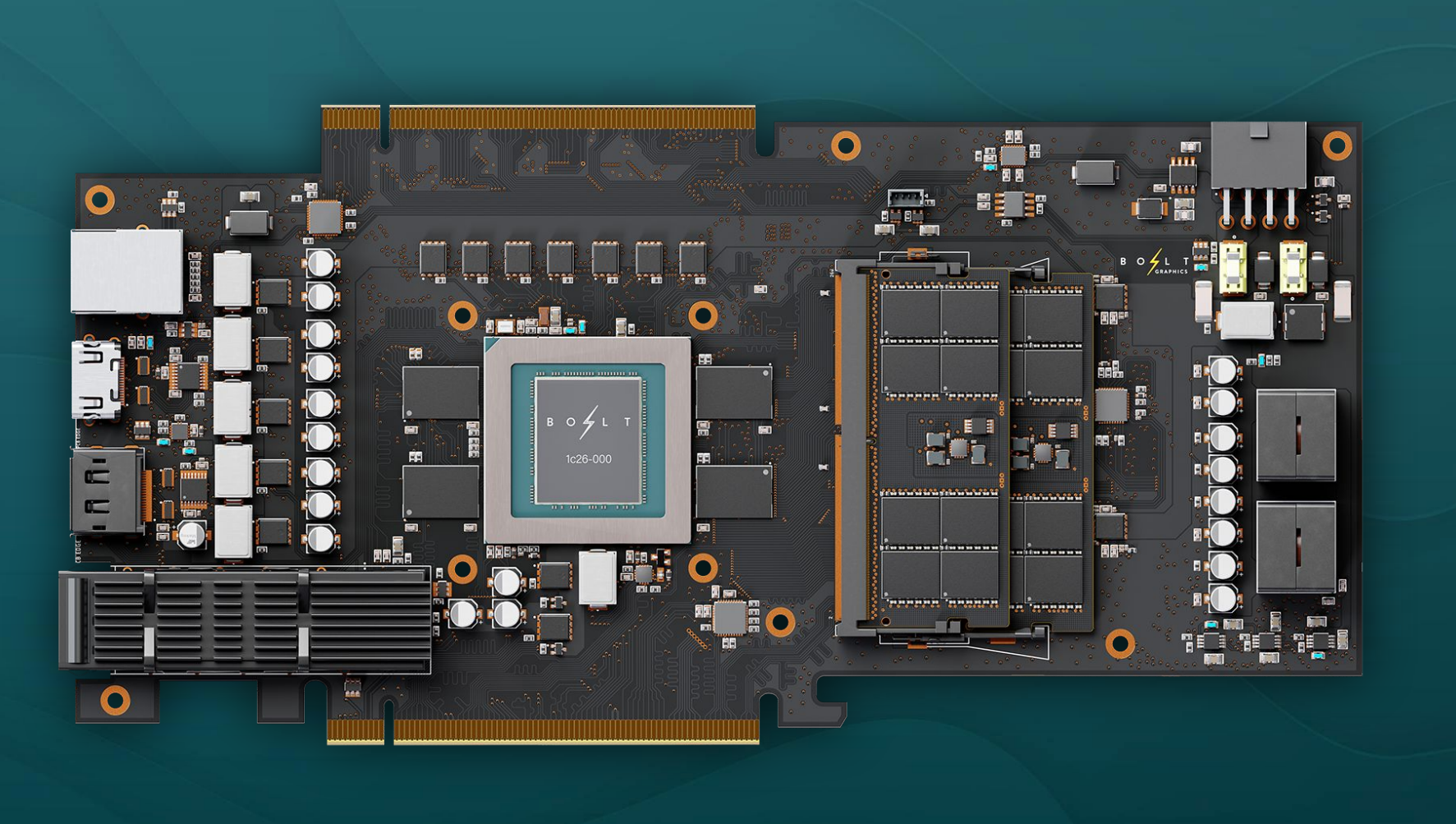

But the true innovation lies in how Rubin balances these components. The platform is built from six custom chips, including the Rubin GPU and the Vera CPU, that are designed together and integrated into a single rack. This isn’t just about packing more power into a smaller space; it’s about tightly coupling compute and memory. Traditional AI clusters often struggle with memory bottlenecks, where moving data between memory and the GPU’s compute units becomes the limiting factor. Rubin’s HBM4 memory, which NVIDIA rates at up to 22 TB/s of bandwidth per GPU, mitigates this by keeping data closer to where it’s needed.
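To see why memory bandwidth can be the limiting factor, consider a simplified model of token-by-token inference in which every generated token requires reading all model weights from memory once. The sketch below computes the resulting lower bound on time per token. The model size, the one-byte-per-parameter precision, and the bandwidth values are illustrative assumptions, not Rubin specifications, and the model ignores batching, caching, and compute time.

```python
def min_seconds_per_token(param_count: float,
                          bytes_per_param: float,
                          bandwidth_bytes_per_s: float) -> float:
    """Lower bound on per-token latency when weight reads dominate.

    Assumes every parameter is read from memory exactly once per token.
    """
    weight_bytes = param_count * bytes_per_param
    return weight_bytes / bandwidth_bytes_per_s


# Hypothetical 70-billion-parameter model stored at 1 byte per parameter.
PARAMS = 70e9
BYTES_PER_PARAM = 1.0

# Illustrative memory bandwidths in TB/s (not product specifications).
for tbps in (1, 5, 20):
    seconds = min_seconds_per_token(PARAMS, BYTES_PER_PARAM, tbps * 1e12)
    print(f"{tbps:>2} TB/s -> at least {seconds * 1e3:.1f} ms per token")
```

In this simplified model the bound scales inversely with bandwidth, which is why a large jump in memory bandwidth can matter as much as a jump in raw compute for memory-bound workloads.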

For hyperscalers, this translates to more efficient training cycles. Training a large language model, for example, means repeatedly shuttling weights, activations, and training data between memory and compute units. Rubin’s architecture reduces this overhead, potentially cutting training times by as much as 30% for certain workloads. The impact on inference could be even more pronounced: by minimizing latency between memory and compute, Rubin could enable near-instantaneous responses for real-time applications like fraud detection or dynamic pricing engines.
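One way to reason about a claim like this is an Amdahl's-law style estimate: if a fraction of each training step is spent on data movement and only that part gets faster, the overall gain is capped by the remaining work. The sketch below is a minimal illustration. The 40% data-movement share and the 4x speedup are hypothetical inputs chosen for the example, not measured Rubin figures.

```python
def remaining_time_fraction(movement_fraction: float,
                            movement_speedup: float) -> float:
    """Fraction of the original step time left after speeding up data movement.

    movement_fraction: share of step time spent moving data (0 to 1).
    movement_speedup:  factor by which data movement gets faster (> 0).
    """
    if not 0.0 <= movement_fraction <= 1.0:
        raise ValueError("movement_fraction must be between 0 and 1")
    if movement_speedup <= 0.0:
        raise ValueError("movement_speedup must be positive")
    return (1.0 - movement_fraction) + movement_fraction / movement_speedup


# Hypothetical workload: 40% of each step is data movement, made 4x faster.
remaining = remaining_time_fraction(0.4, 4.0)
print(f"Step time falls to {remaining:.0%} of the original")
print(f"Training time reduction: {1.0 - remaining:.0%}")
```

With these inputs the reduction comes to about 30%. The same formula also shows the ceiling: even if data movement became free, this hypothetical workload could not improve by more than 40%.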

The implications for data center design are profound. Rubin’s modular liquid cooling system, which cools both GPUs and CPUs through cold plates, is as much a statement on sustainability as it is a cooling solution. Traditional air-cooled setups consume vast amounts of energy and require extensive infrastructure to manage heat. Rubin’s approach reportedly reduces water usage by up to 40% while maintaining peak performance, a critical advantage as AI workloads demand more power. The system’s zero-downtime maintenance capability adds to its appeal: hyperscalers can replace components without interrupting operations, a feature that could save millions in lost productivity.

Yet, Rubin isn’t just about raw performance metrics. It represents a shift in how AI infrastructure is architected. By integrating memory, compute, and cooling into a single, cohesive unit, NVIDIA is challenging the industry to rethink the boundaries of what’s possible. The system’s custom chips are built on a 3nm-class process node for efficiency at the transistor level, while SOCAMM modules (Small Outline Compression Attached Memory Modules) give the CPU low-power memory in a compact, replaceable form factor.

The real-world impact of Rubin could be felt most acutely in industries where AI is mission-critical. For example, in autonomous driving, where real-time processing is non-negotiable, Rubin’s low-latency memory and compute integration could enable more sophisticated decision-making in self-driving systems. Similarly, in healthcare, where AI models analyze vast datasets for diagnostics, Rubin’s efficiency could accelerate breakthroughs in personalized medicine. Even in cloud services, where cost per inference is a major concern, NVIDIA claims Rubin can cut the cost per inference token by up to 10x compared with the previous generation, which could make advanced AI more accessible to smaller enterprises.

Of course, no system of this complexity is without challenges. Rubin’s custom design means it won’t be a drop-in replacement for existing AI clusters. Hyperscalers will need to adapt their data centers to accommodate its unique requirements, from liquid cooling infrastructure to NVLink 6 connectivity. But for those willing to make the investment, the payoff could be transformative. Rubin isn’t just another AI accelerator—it’s a blueprint for the next generation of hyperscale computing.

As NVIDIA prepares to roll out Rubin, the question isn’t whether it will deliver on its promises, but how quickly the industry will embrace this new paradigm. With AI workloads growing exponentially, the need for systems like Rubin has never been more urgent. Whether in training massive models or deploying real-time inference at scale, Rubin could redefine the limits of what’s achievable—one rack at a time.