Phison Electronics has unveiled a new approach to managing AI workloads at the edge, using flash memory to extend the effective memory capacity of GPU-driven systems. The aiDAPTIV technology, demonstrated at an industry event, allocates and retains data across three memory tiers (GPU VRAM, system RAM, and high-endurance flash), allowing organizations to run larger models and longer inference tasks on local hardware without upgrading GPUs.

The approach targets a growing bottleneck in AI deployment. As demand for on-premises AI systems rises, the memory these workloads require often exceeds what available hardware configurations provide. aiDAPTIV mitigates this by treating flash storage as an active tier for sustained paging and context retention, expanding usable memory while keeping data on local infrastructure.

How It Works

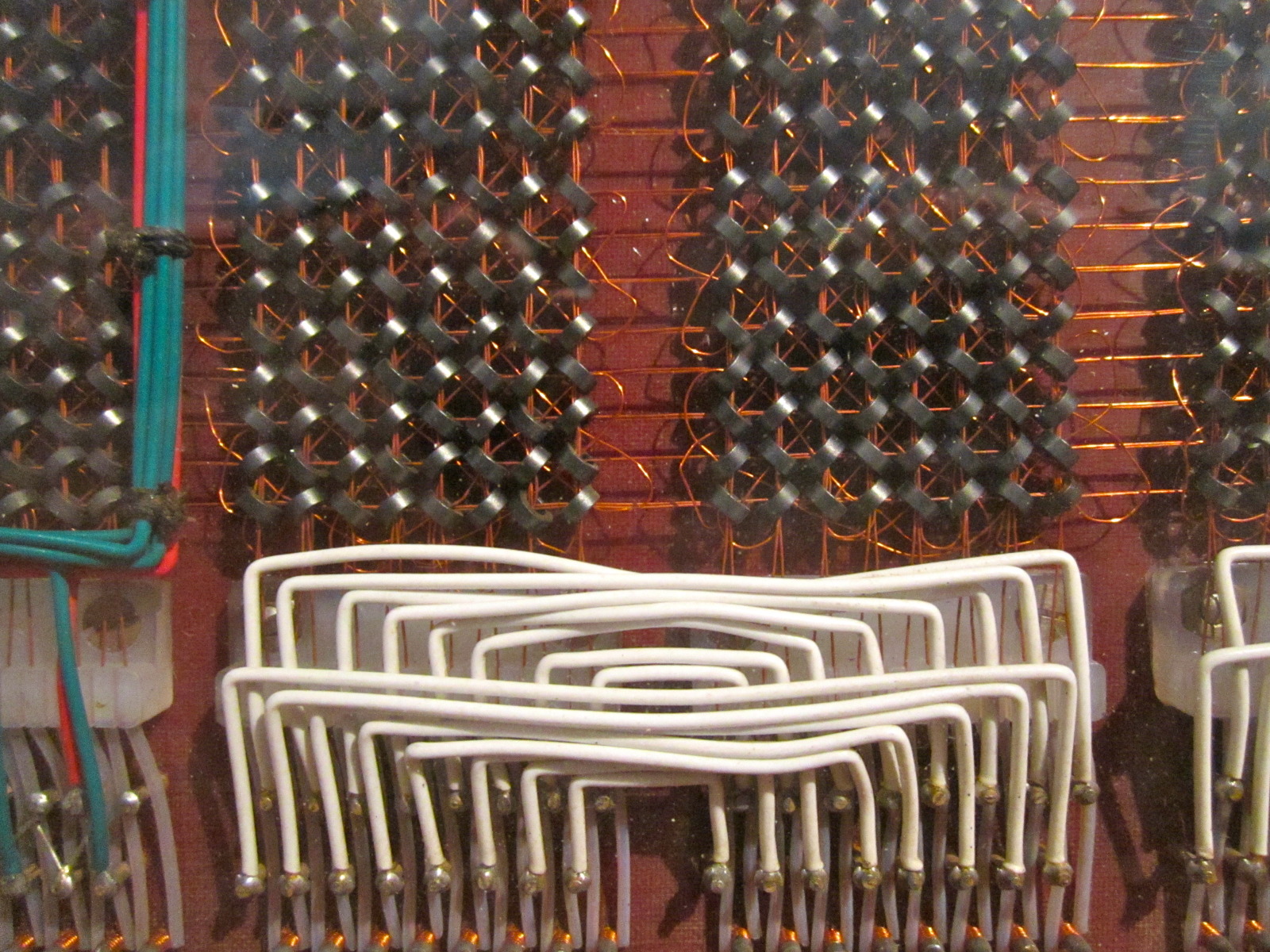

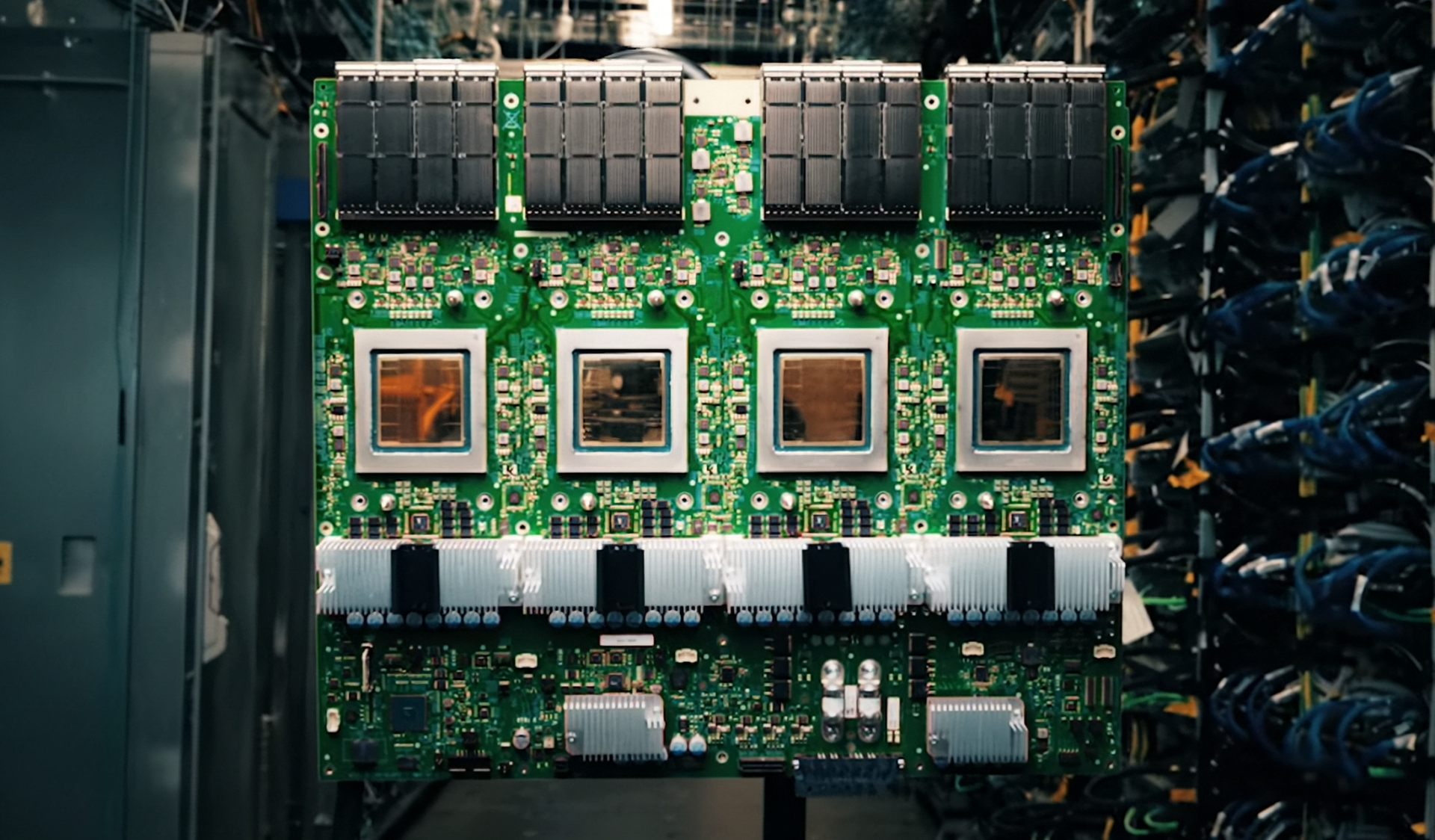

The technology integrates with Phison’s Pascari SSDs, which are optimized for prolonged endurance and rapid data access. When paired with NVIDIA’s latest Blackwell-based GPUs, such as the RTX 5090, aiDAPTIV dynamically manages memory-intensive tasks like fine-tuning and long-context inference. Demonstrations at the event showed systems handling workloads that would typically exceed native GPU memory limits, without requiring additional GPUs.
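The tiering idea described above can be illustrated with a toy model. The following is a minimal sketch, not Phison's implementation: the `TieredStore` class, its least-recently-used eviction policy, and the tier sizes are all assumptions chosen for illustration. Blocks enter the fastest tier, and when a tier fills, its coldest block is demoted to the next slower one.

```python
from collections import OrderedDict


class TieredStore:
    """Toy multi-tier store (hypothetical): fastest tier first, slowest last."""

    def __init__(self, capacities):
        # capacities: list of (tier_name, max_items), ordered fastest to slowest.
        self.names = [name for name, _ in capacities]
        self.limits = [limit for _, limit in capacities]
        self.tiers = [OrderedDict() for _ in capacities]

    def put(self, key, value):
        # Drop any stale copy, then place the block in the fastest tier.
        for tier in self.tiers:
            tier.pop(key, None)
        self._insert(0, key, value)

    def _insert(self, level, key, value):
        tier = self.tiers[level]
        tier[key] = value
        tier.move_to_end(key)  # mark as most recently used
        if len(tier) > self.limits[level]:
            # Demote the least recently used block to the next slower tier.
            old_key, old_value = tier.popitem(last=False)
            if level + 1 < len(self.tiers):
                self._insert(level + 1, old_key, old_value)
            # Otherwise the block falls off the slowest tier and is discarded.

    def get(self, key):
        # Search fastest to slowest; promote a hit back to the fastest tier.
        for tier in self.tiers:
            if key in tier:
                value = tier.pop(key)
                self._insert(0, key, value)
                return value
        raise KeyError(key)

    def location(self, key):
        # Report which tier currently holds the block, or None if absent.
        for name, tier in zip(self.names, self.tiers):
            if key in tier:
                return name
        return None


# Assumed tier sizes, counted in blocks rather than bytes for simplicity.
store = TieredStore([("vram", 2), ("ram", 2), ("flash", 4)])
for i in range(6):
    store.put(f"block{i}", i)

print(store.location("block0"))  # oldest block has been demoted
store.get("block0")              # reading it promotes it again
print(store.location("block0"))
```

A real system would move tensors by size and access pattern rather than by item count, but the cascade of demotions and promotions is the same basic mechanism.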

Practical Impact

For small businesses and enterprises, this shift could reduce dependency on cloud-based AI services, lowering latency and data exposure risks. Instead of relying solely on GPU VRAM or system RAM, aiDAPTIV allows local systems to tap into flash storage for extended memory capacity, enabling more complex models to run on existing hardware. It also simplifies infrastructure planning, since growing workloads no longer force an immediate GPU upgrade.
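To make the planning question concrete, here is a rough back-of-the-envelope sketch. The figure of 16 bytes per parameter is a commonly cited rule of thumb for mixed-precision training with the Adam optimizer (weights, gradients, and optimizer state) and ignores activations; the model size and hardware capacities are hypothetical, and none of these numbers come from Phison.

```python
def training_footprint_gb(params_billions, bytes_per_param=16):
    """Rough memory for weights, gradients and optimizer state, in GB (1e9 bytes).

    bytes_per_param=16 is an assumed rule of thumb, not a measured value.
    """
    return params_billions * bytes_per_param


def overflow_gb(required_gb, vram_gb, ram_gb):
    """Memory left over once VRAM and system RAM are full, in GB."""
    return max(0.0, required_gb - vram_gb - ram_gb)


# Hypothetical workstation: 32 GB of VRAM and 64 GB of system RAM.
required = training_footprint_gb(8)          # an assumed 8-billion-parameter model
spill = overflow_gb(required, vram_gb=32, ram_gb=64)
print(f"needs ~{required} GB, {spill} GB would have to live on a slower tier")
```

Under these assumptions the workload does not fit in VRAM and RAM combined, which is the gap a flash tier is meant to cover.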

The technology is being showcased alongside NVIDIA’s Blackwell processors and RTX 50 Series GPUs, which are designed for high-performance inference. While the focus remains on local AI deployment, the underlying principle of multi-tier memory management could influence how future GPU-driven systems are architected to support even larger models.

The immediate benefit is that organizations can deploy more capable AI workflows without first investing in next-generation hardware. Whether for agentic AI tasks or fine-tuning large-scale models, aiDAPTIV offers a way to balance performance and cost, making it a notable development in the ongoing push for local AI computing.