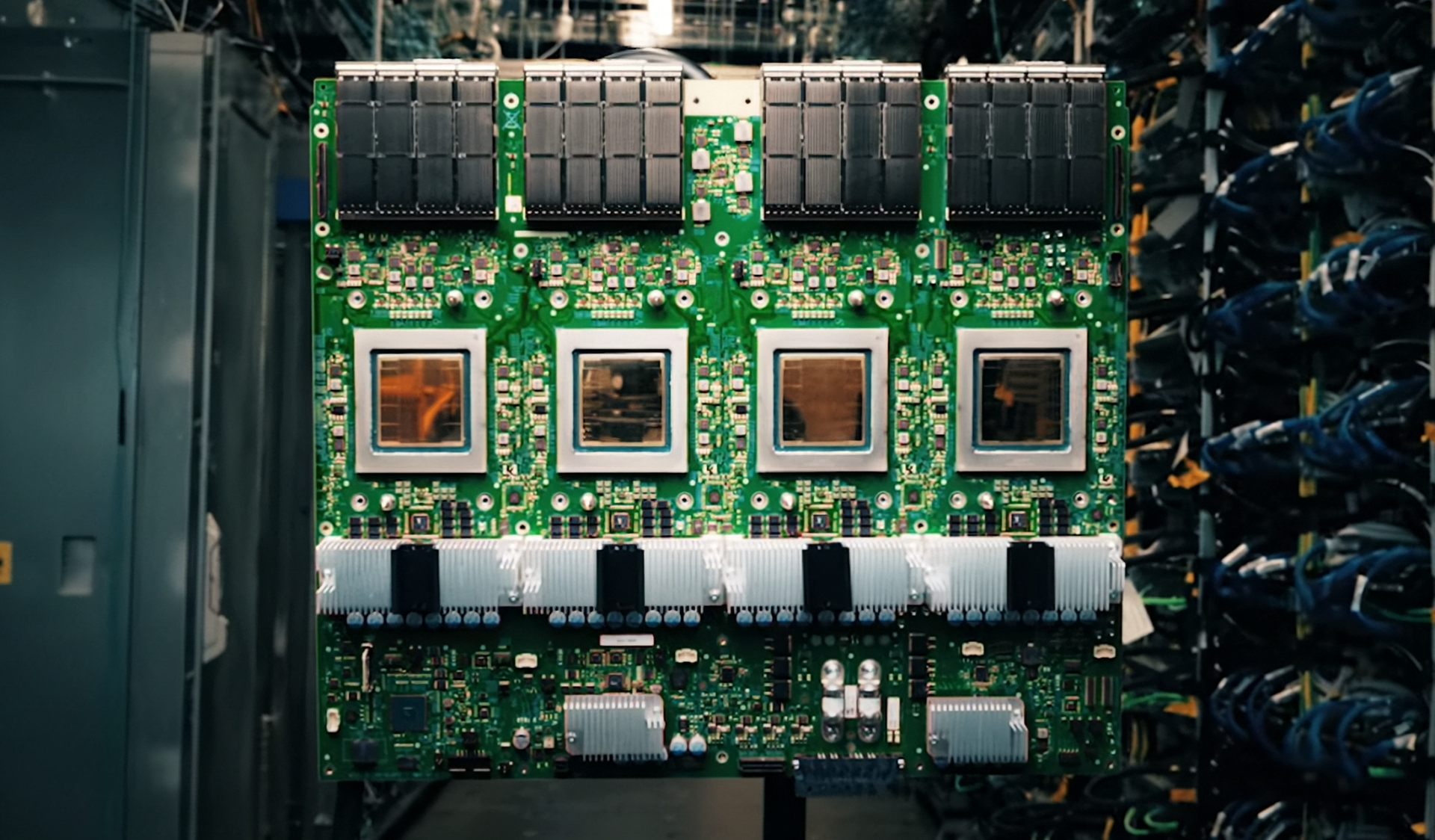

The limits of traditional memory architectures are becoming clear as AI inference workloads grow more demanding. Even the most advanced GPUs run below capacity when 70% of tasks are bound by memory bandwidth rather than compute. Penguin Solutions is addressing this imbalance with a CXL-based cache server that puts up to 11 TB of memory into a single, production-ready system. The approach aims to cut redundant computation and raise throughput for enterprise AI applications.

However, the technology’s effectiveness will depend on how well it integrates with established GPU clusters. It is a real gain in memory efficiency, but existing infrastructure may limit how much of that gain an organization can capture. The open question is whether the solution scales across different deployment scenarios, including organizations whose current infrastructure is not yet compatible.

Architectural Innovation: A New Layer for Memory

The server introduces a distinct layer of disaggregated memory that sits alongside HBM and system DRAM. It combines 3 TB of DDR5 main memory with up to eight 1 TB CXL Add-in Cards (AICs), for up to 11 TB in total, and is described as ten times faster than NVMe-based alternatives. That performance matters most for real-time applications such as financial data processing or retrieval-augmented generation (RAG) over large datasets, where added latency is not acceptable.
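To make the capacity figures concrete, the following is a minimal sketch of the arithmetic behind the 11 TB total and of how much KV cache that could hold. The memory figures come from the article. The model shape (80 layers, 8 KV heads, head dimension 128, 2 bytes per element), the function names, and the use of decimal terabytes are assumptions chosen for illustration, not details of Penguin's system.

```python
# Memory figures from the article; everything about the model is hypothetical.
DDR5_TB = 3        # DDR5 main memory
CXL_CARDS = 8      # maximum number of CXL add-in cards
CXL_CARD_TB = 1    # capacity per card


def total_capacity_tb(ddr5_tb=DDR5_TB, cards=CXL_CARDS, card_tb=CXL_CARD_TB):
    """Total memory of the fully populated configuration, in TB."""
    return ddr5_tb + cards * card_tb


def kv_bytes_per_token(layers, kv_heads, head_dim, bytes_per_elem):
    """Bytes of KV cache per token: keys and values (factor 2) per layer and KV head."""
    return 2 * layers * kv_heads * head_dim * bytes_per_elem


def tokens_that_fit(capacity_tb, per_token_bytes):
    """Whole tokens of KV cache that fit, assuming decimal TB (10**12 bytes)."""
    return (capacity_tb * 10**12) // per_token_bytes


# Hypothetical model shape, used only to show the calculation.
per_token = kv_bytes_per_token(layers=80, kv_heads=8, head_dim=128, bytes_per_elem=2)
capacity = total_capacity_tb()

print(f"capacity: {capacity} TB")
print(f"KV cache per token: {per_token} bytes")
print(f"tokens of KV cache that fit: {tokens_that_fit(capacity, per_token)}")
```

Under these assumptions each token needs 327,680 bytes of KV cache, so the full configuration could hold on the order of 33 million tokens. Real deployments would have less usable space, since some memory is reserved for the operating system and other data.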

Hardware Advancements: CXL as the Catalyst

The key innovation lies in how GPUs access this expanded memory. Larger context windows and higher-precision models inflate the key-value (KV) cache, and when it no longer fits in GPU memory, traditional systems evict entries and later recompute them. Penguin’s solution offloads the KV cache to the CXL tier instead, reducing that bottleneck while maintaining performance. It is designed to work with NVIDIA Dynamo.

- Memory Configuration: 3 TB DDR5 main memory + up to eight 1 TB CXL AICs (up to 11 TB total)

- Performance Optimization: Compatible with NVIDIA Dynamo for streamlined KV cache offloading

- Latency Reduction: Sub-millisecond access times, essential for agentic AI and real-time workloads

- Scalability: Supports larger context windows and higher concurrency without GPU memory constraints
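The idea of offloading KV cache entries rather than discarding them can be sketched as a toy two-tier cache. This is an illustration only: it is not NVIDIA Dynamo's API or Penguin's implementation, and the class name, slot counts, and `compute_fn` callback are all hypothetical. The small tier stands in for GPU memory, the large tier for CXL memory, and a miss in both triggers a recomputation.

```python
from collections import OrderedDict


class TieredKVCache:
    """Toy two-tier LRU cache: a small fast tier backed by a larger capacity tier."""

    def __init__(self, fast_slots, capacity_slots, compute_fn):
        self.fast = OrderedDict()       # stands in for GPU memory (HBM)
        self.capacity = OrderedDict()   # stands in for the CXL memory tier
        self.fast_slots = fast_slots
        self.capacity_slots = capacity_slots
        self.compute_fn = compute_fn    # hypothetical expensive recomputation
        self.recomputes = 0

    def _put_capacity(self, key, value):
        self.capacity[key] = value
        self.capacity.move_to_end(key)
        while len(self.capacity) > self.capacity_slots:
            self.capacity.popitem(last=False)   # oldest entry is lost for good

    def _put_fast(self, key, value):
        self.fast[key] = value
        self.fast.move_to_end(key)
        while len(self.fast) > self.fast_slots:
            old_key, old_value = self.fast.popitem(last=False)
            self._put_capacity(old_key, old_value)  # offload instead of discard

    def get(self, key):
        if key in self.fast:                 # hit in the fast tier
            self.fast.move_to_end(key)
            return self.fast[key]
        if key in self.capacity:             # hit in the capacity tier: promote
            value = self.capacity.pop(key)
            self._put_fast(key, value)
            return value
        self.recomputes += 1                 # miss in both tiers: recompute
        value = self.compute_fn(key)
        self._put_fast(key, value)
        return value


def count_recomputes(capacity_slots, requests):
    """Replay a request sequence and report how many recomputations occurred."""
    cache = TieredKVCache(2, capacity_slots, compute_fn=lambda p: f"kv({p})")
    for prefix in requests:
        cache.get(prefix)
    return cache.recomputes


requests = ["a", "b", "c", "a", "b", "c"]
print("without capacity tier:", count_recomputes(0, requests))
print("with capacity tier:", count_recomputes(8, requests))
```

In this toy run, three prefixes cycle through a fast tier with only two slots. Without the capacity tier every request is recomputed (6 recomputations); with it, each prefix is computed once (3 recomputations) and later served from the larger tier.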

While the server maximizes GPU efficiency by allowing clusters to be right-sized, it also requires a fundamental shift in how enterprises approach infrastructure design. Existing setups may need adjustments to fully realize the benefits of CXL technology. Despite these challenges, Penguin Solutions asserts that the cost and power savings could justify the transition.

Application and Limitations

This server is designed not for training but for inference-heavy workloads where memory bandwidth is the primary constraint. Financial institutions, compliance teams, and organizations implementing RAG over large datasets are likely to see immediate improvements in throughput and token generation speed. However, smaller deployments or those without NVIDIA Dynamo compatibility may encounter significant hurdles. The performance also comes with a substantial upfront cost, which could limit adoption among organizations with lighter inference workloads.

The technology is making its debut at the NVIDIA GTC conference, where it is generating considerable interest. The next phase will determine whether it can move beyond early adopters and become a standard component of AI inference setups. If successful, it could redefine the efficiency of AI inference, but only if the broader ecosystem adapts to support it.