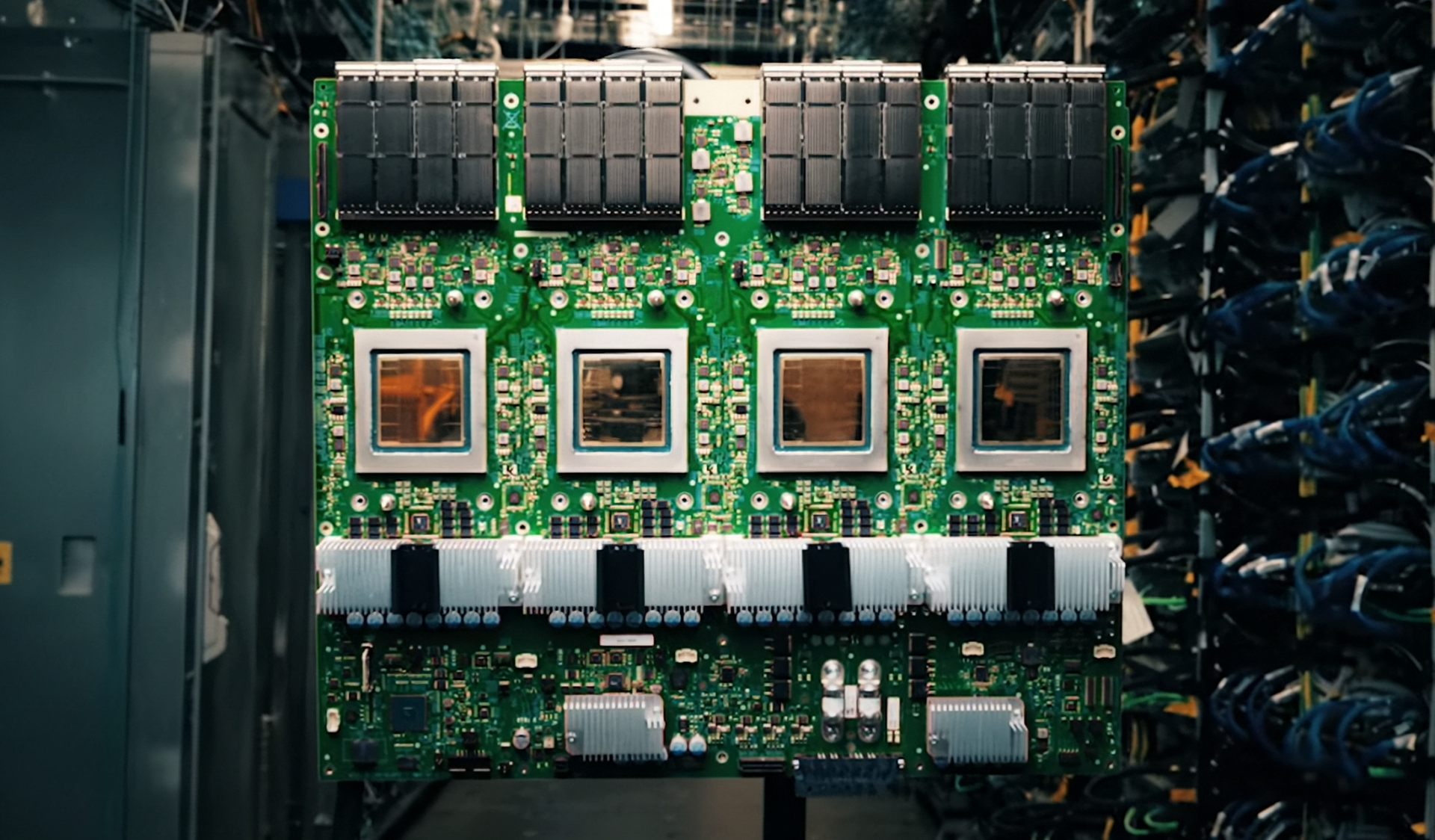

Samsung has unveiled its first High Bandwidth Memory 4 Enhanced (HBM4E) solutions at an industry event, marking a significant leap in memory density and performance for AI-accelerated systems.

The new HBM4E stacks come in two configurations: an eight-layer stack with 8 GB of capacity and a twelve-layer stack that doubles that to 16 GB. Each 12-high stack is rated at 7.6 TB/s of bandwidth, close to the peak memory bandwidth of NVIDIA’s latest GPUs. Samsung is pitching HBM4E as an enabler for platforms like NVIDIA’s Hopper and Blackwell architectures, where memory bandwidth is often the bottleneck for high-performance computing workloads.

Why the Shift Matters

The introduction of HBM4E stacks reflects a broader industry trend toward higher-density, higher-bandwidth memory. For AI workloads, particularly those involving large language models or generative AI, the ability to move data quickly between an accelerator and its on-package memory is critical. However, the transition from HBM3 to HBM4E isn’t seamless. Systems built around HBM3 will require hardware upgrades, creating a compatibility challenge for data centers and cloud providers.

Key Specifications

- Capacity: 8 GB (8-high) or 16 GB (12-high)

- Bandwidth: Up to 7.6 TB/s per stack

- Power Efficiency: Claimed 40% improvement over HBM3, targeting data center deployments

- Compatibility: Designed for NVIDIA’s Hopper and Blackwell platforms, with potential for broader adoption in future AI chips
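As a rough sanity check on the figures above, the following Python sketch derives the capacity per layer for each configuration and the totals for a multi-stack package. The stack count of eight is a hypothetical value chosen purely for illustration, not something Samsung or NVIDIA has announced, and the function names are made up for this example.

```python
def capacity_per_layer_gb(capacity_gb: float, layers: int) -> float:
    """Capacity contributed by each layer, assuming all layers in a stack are equal."""
    if layers <= 0:
        raise ValueError("layers must be positive")
    return capacity_gb / layers


def package_totals(capacity_gb: float, bandwidth_tb_s: float, stacks: int) -> tuple[float, float]:
    """Total capacity (GB) and peak bandwidth (TB/s) for `stacks` identical stacks."""
    if stacks <= 0:
        raise ValueError("stacks must be positive")
    return capacity_gb * stacks, bandwidth_tb_s * stacks


# Figures quoted in the article.
per_layer_8_high = capacity_per_layer_gb(8, 8)
per_layer_12_high = capacity_per_layer_gb(16, 12)

# Hypothetical package: eight 12-high stacks (an assumption, not an announced design).
HYPOTHETICAL_STACKS = 8
total_gb, total_tb_s = package_totals(16, 7.6, HYPOTHETICAL_STACKS)

print(f"8-high:  {per_layer_8_high:.2f} GB per layer")
print(f"12-high: {per_layer_12_high:.2f} GB per layer")
print(f"{HYPOTHETICAL_STACKS} stacks: {total_gb:.0f} GB, {total_tb_s:.1f} TB/s peak")
```

The 12-high stack works out to roughly a third more capacity per layer than the 8-high stack, which would imply denser dies in the taller configuration if both quoted figures are accurate.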

A practical example of the impact: a user running a large-scale AI training job on a server equipped with HBM4E stacks would likely see faster iteration times during model development, provided the job is limited by memory bandwidth rather than compute. The higher bandwidth between memory and accelerator could shave minutes off each epoch, accelerating research cycles, although the actual gain depends on the model and the rest of the system.
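To put the per-stack bandwidth in perspective, the sketch below computes a lower bound on the time needed to read a given amount of data once at peak bandwidth. It assumes decimal units (1 GB = 10^9 bytes, 1 TB/s = 10^12 bytes per second) and ignores compute time, latency, and access-pattern effects, so real workloads will take longer. The function name is hypothetical.

```python
def min_stream_time_ms(gigabytes: float, bandwidth_tb_s: float) -> float:
    """Lower bound, in milliseconds, on reading `gigabytes` once at peak bandwidth.

    Assumes decimal units: 1 GB = 1e9 bytes, 1 TB/s = 1e12 bytes per second.
    """
    if gigabytes < 0:
        raise ValueError("gigabytes must be non-negative")
    if bandwidth_tb_s <= 0:
        raise ValueError("bandwidth_tb_s must be positive")
    seconds = (gigabytes * 1e9) / (bandwidth_tb_s * 1e12)
    return seconds * 1e3


# Reading the full contents of one 16 GB stack at the quoted 7.6 TB/s.
full_stack_ms = min_stream_time_ms(16, 7.6)
print(f"One pass over a 16 GB stack: at least {full_stack_ms:.2f} ms")
```

At the quoted figures, a single pass over a full 12-high stack takes at least about 2.1 ms. How that translates into time saved per epoch depends on how often the workload revisits memory and how much of the run is compute-bound.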

Looking Ahead

The real question for buyers isn’t just whether to adopt HBM4E but when: now, or after the next generation arrives. Samsung’s focus on power efficiency (claiming a 40% improvement over HBM3) suggests these stacks are built with data center economics in mind, but the cost of upgrading existing infrastructure remains an open question.

For creators and researchers working at the bleeding edge, the choice is clear: HBM4E represents the next step in AI performance. But for those operating on tighter budgets or legacy systems, the risk of compatibility issues may outweigh the benefits. The industry’s ability to standardize around this new memory form factor will determine whether it becomes a must-have upgrade—or just another speed bump on the path to AI scalability.