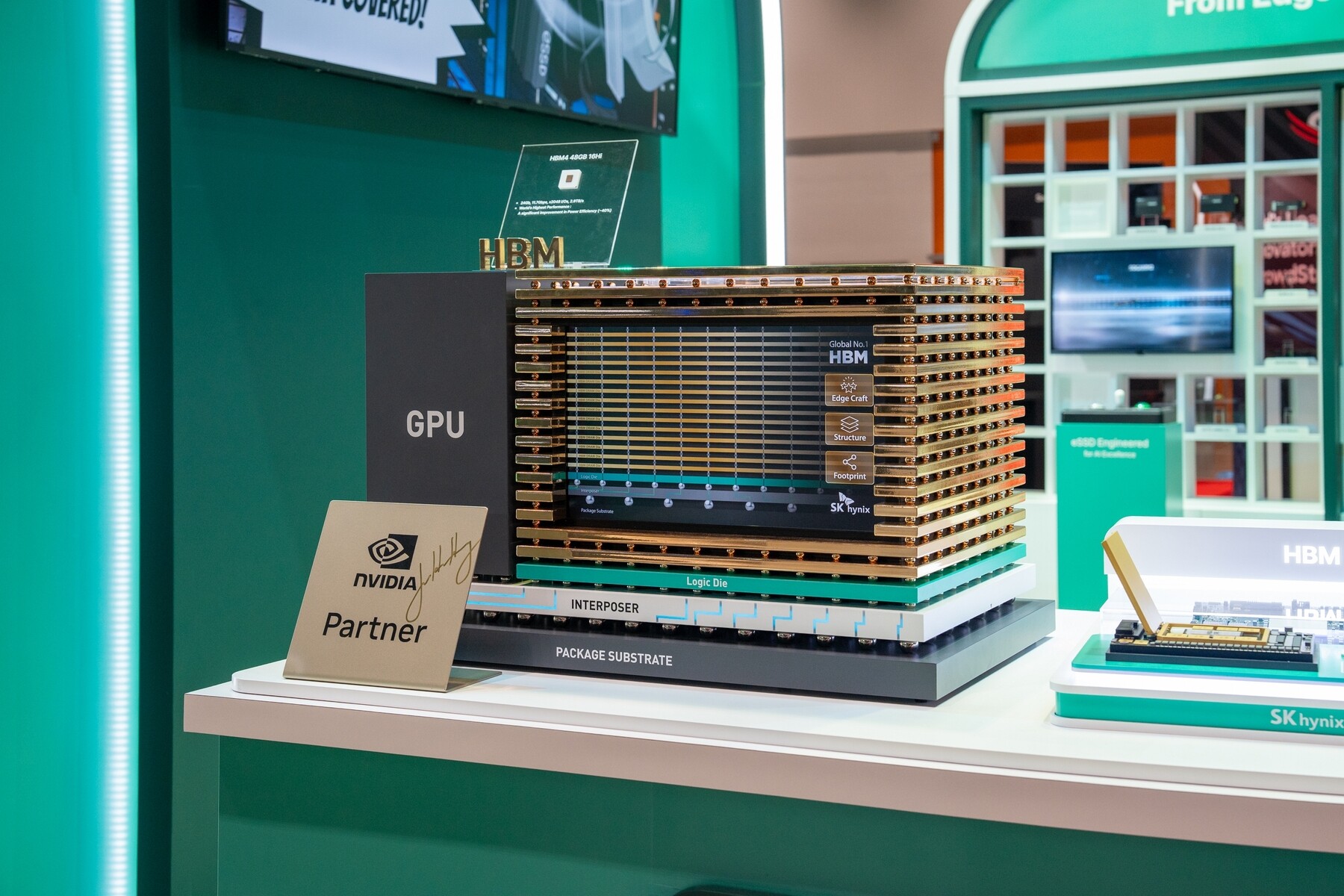

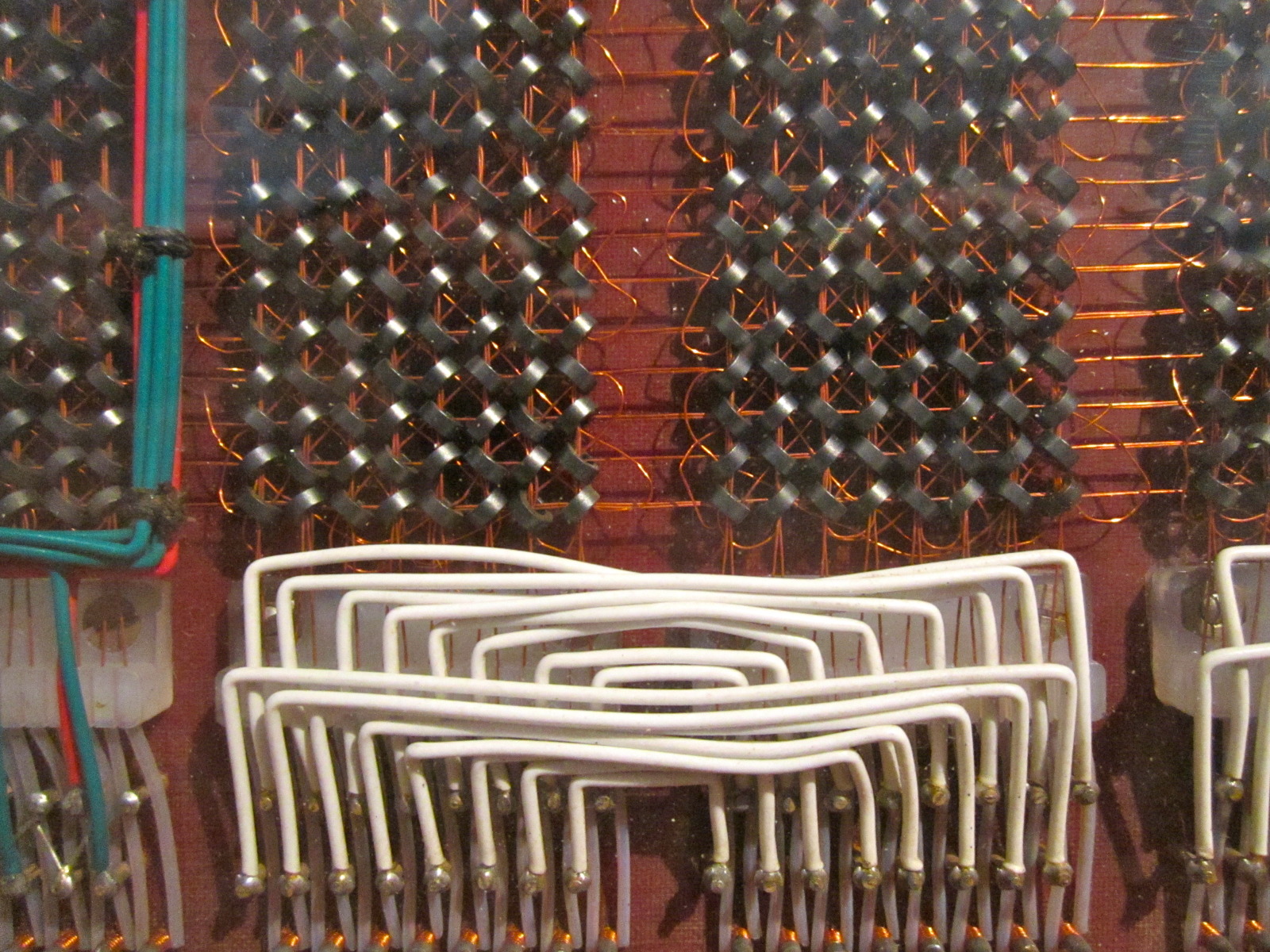

AI infrastructure is entering a phase where memory performance is becoming the bottleneck that defines system architecture. To address this, SK Hynix is using GTC 2026 to showcase its deepening collaboration with NVIDIA, focusing on memory technologies that directly affect AI training and inference efficiency.

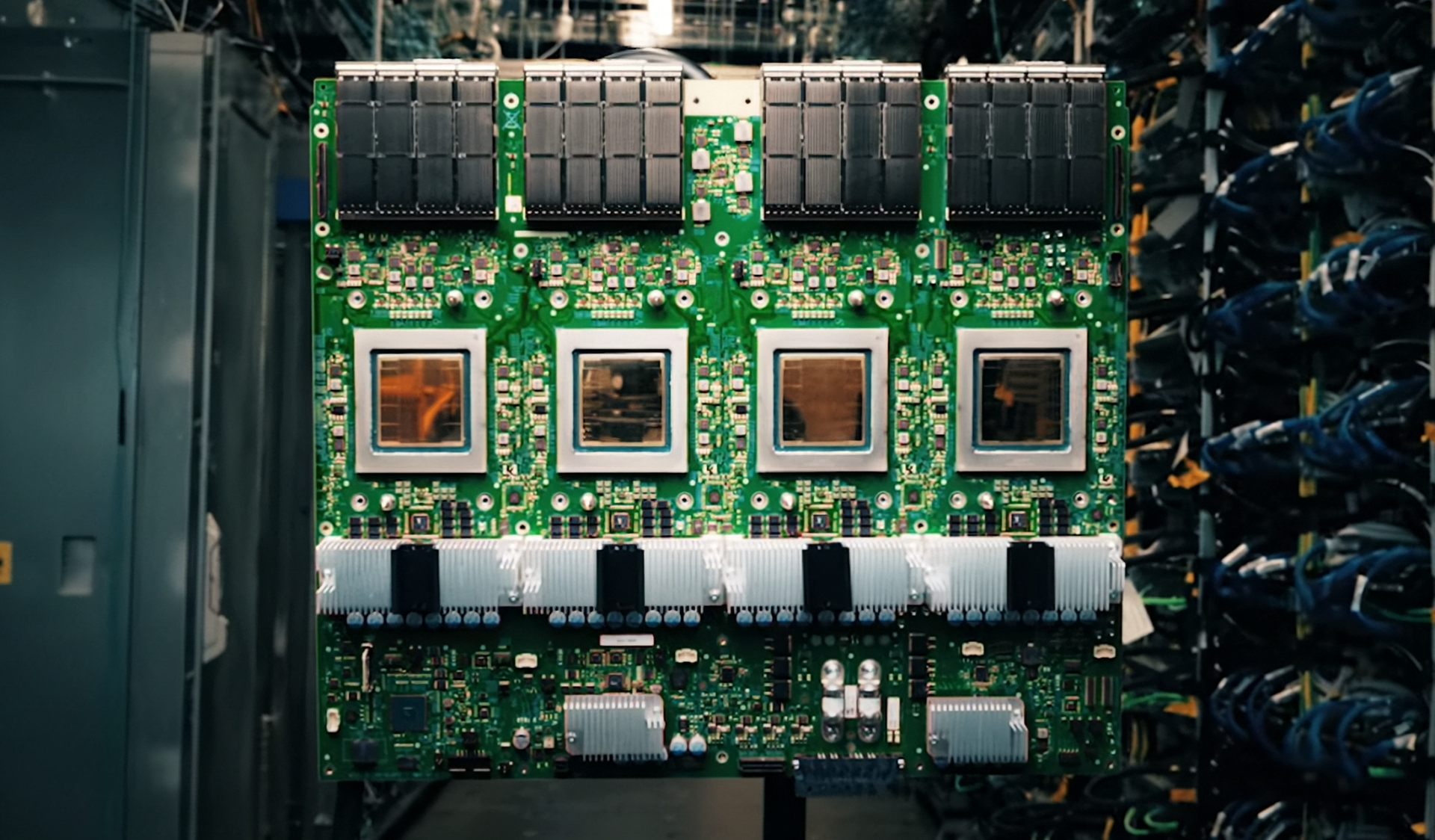

The partnership will highlight how SK Hynix's memory solutions, including HBM4, HBM3E, LPDDR5X, and GDDR7, integrate into NVIDIA's GPU-based accelerators. This is not just about raw capacity. It is about moving data fast enough to keep GPUs fully utilized, in systems where memory bandwidth rather than compute often limits throughput.

- HBM4 and HBM3E for high-bandwidth AI workloads

- LPDDR5X for desktop AI supercomputers like the DGX Spark

- GDDR7 for graphics and compute applications

The exhibit will also feature a liquid-cooled eSSD, showing how storage and memory can be co-optimized in next-generation systems. For enterprise buyers, this means tighter integration between NVIDIA's software stack and SK Hynix's hardware, which could shorten upgrade cycles for AI infrastructure.

However, the practical impact depends on two key constraints: supply chain stability and thermal management. While SK Hynix is showcasing high-stack TSV technology, real-world deployment will require proof that these memory stacks can sustain prolonged workloads without thermal throttling—a concern for data center operators running 24/7 AI training.

The partnership also raises questions about the timing of NVIDIA's next products. The GeForce RTX 5090 and RTX 5080 already ship with GDDR7, but whether HBM4-equipped accelerators will reach enterprise buyers before mid-2026 remains unclear. If supply tightens further, upgrade cycles could stretch beyond initial projections.

For now, SK Hynix is positioning itself as the memory layer that will shape NVIDIA's AI ecosystem. Whether this translates into faster, more efficient systems—or just another layer of complexity in procurement—will depend on how quickly these technologies move from demonstration to deployment.