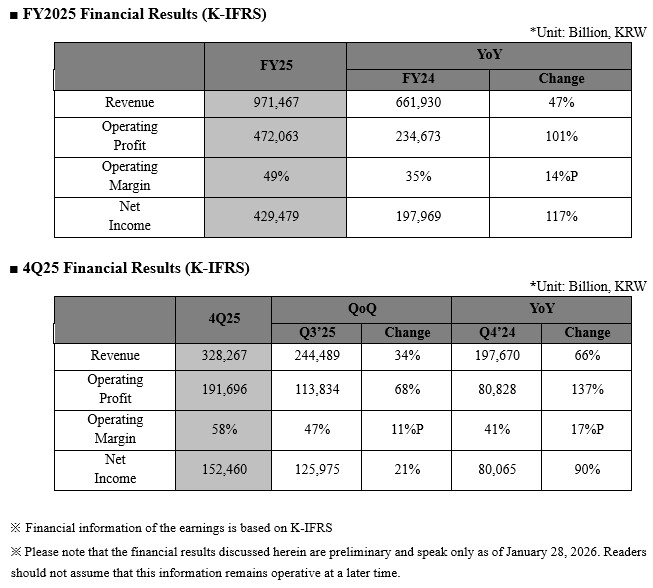

SK hynix has turned its technological edge into record financial results, posting revenue of 97.1467 trillion won in 2025, the highest annual figure in its history. Operating profit roughly doubled year-over-year to 47.2063 trillion won, while net profit reached 42.9479 trillion won, underscoring the company’s ability to capitalize on surging demand for AI-driven memory.

The fourth quarter alone delivered revenue of 32.8267 trillion won, up 34% from the previous quarter, with the operating margin reaching 58%, a result of SK hynix’s early scaling of high-value products during the AI boom. The company has ramped up production of HBM3E and HBM4 at the same time, positioning itself as the only supplier able to serve both generations as the needs of data centers and high-performance computing (HPC) workloads evolve.
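To put the headline figures in proportion, the short Python sketch below derives full-year margins from the numbers quoted above. The variable names and the `ratio` helper are illustrative assumptions, and the derived percentages are simple arithmetic on the reported figures rather than numbers published by SK hynix.

```python
# Figures in trillions of won, copied from the article text above.
ANNUAL_REVENUE = 97.1467
ANNUAL_OPERATING_PROFIT = 47.2063
ANNUAL_NET_PROFIT = 42.9479
Q4_REVENUE = 32.8267


def ratio(part: float, whole: float) -> float:
    """Return part / whole, rejecting a zero denominator (illustrative helper)."""
    if whole == 0:
        raise ValueError("whole must be non-zero")
    return part / whole


# Derived values: not reported by the company, only computed from the figures above.
operating_margin = ratio(ANNUAL_OPERATING_PROFIT, ANNUAL_REVENUE)
net_margin = ratio(ANNUAL_NET_PROFIT, ANNUAL_REVENUE)
q4_revenue_share = ratio(Q4_REVENUE, ANNUAL_REVENUE)

print(f"Full-year operating margin: {operating_margin:.1%}")  # 48.6%
print(f"Full-year net margin: {net_margin:.1%}")  # 44.2%
print(f"Q4 share of full-year revenue: {q4_revenue_share:.1%}")  # 33.8%
```

The full-year operating margin works out to roughly 48.6%, below the 58% reported for the fourth quarter, which implies that margins in the first three quarters were, on average, lower than in the fourth.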

Why This Matters: A Memory Supplier Transformed into an AI Infrastructure Partner

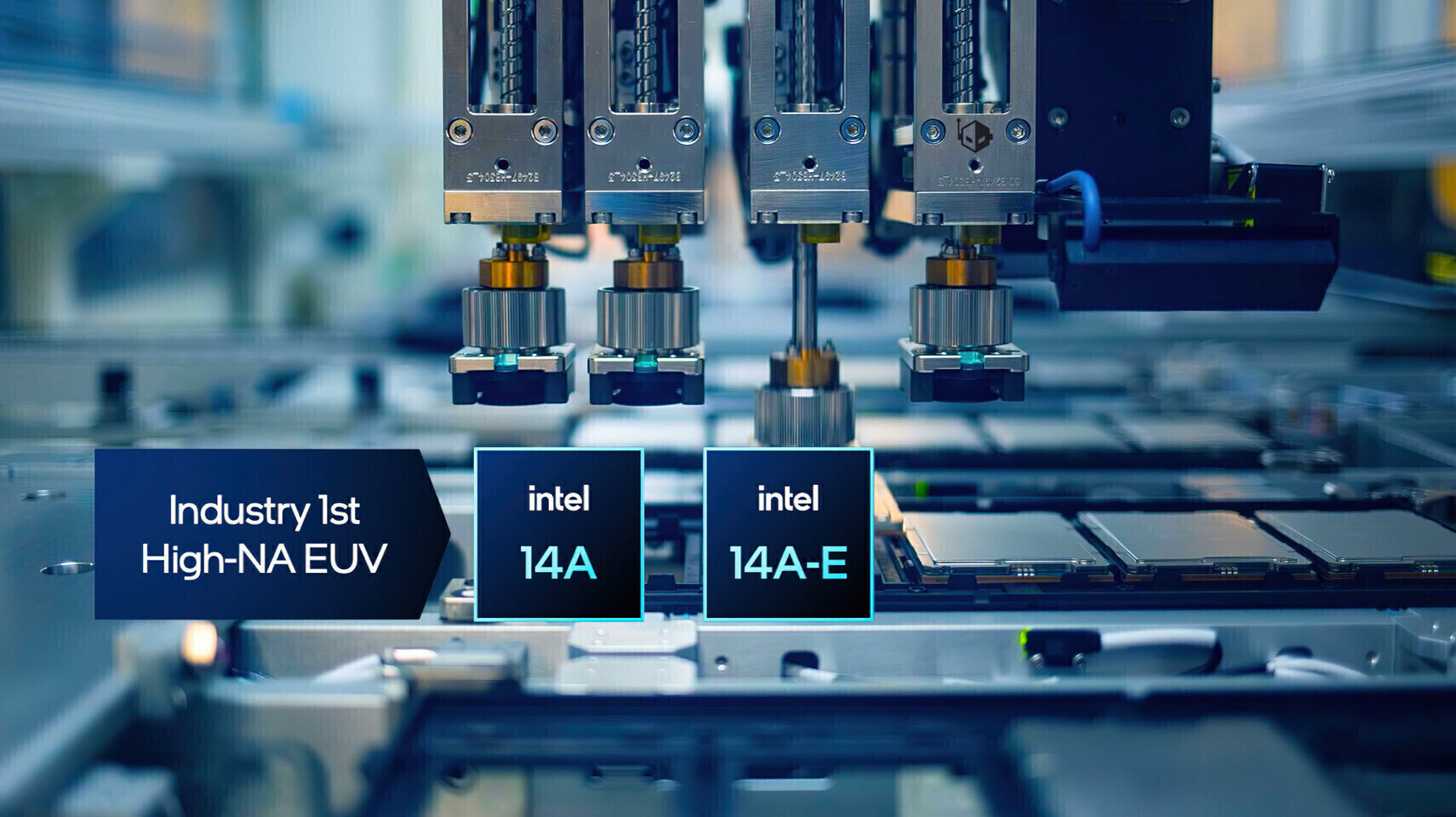

SK hynix’s strategy hinges on three pillars: leadership in next-gen memory, expanded server-grade DRAM, and scalable NAND solutions. The company’s decision to mass-produce HBM4—a first in the industry—aligns with the shift from AI training to inference, where memory bandwidth and capacity become critical. Meanwhile, its 256 GB DDR5 RDIMM, built on fifth-generation 10 nm-class (1b) DRAM, addresses the growing appetite for high-capacity server modules. For NAND, the rollout of 321-layer QLC products and eSSD solutions targets the burgeoning data storage demands of AI workloads.

How It Stacks Up: Outpacing Rivals in AI Memory

As competitors scramble to catch up, SK hynix’s financials and product roadmap point to a clear advantage. The company’s 1cnm DRAM process, its sixth generation of 10 nm-class technology, enables denser, more efficient memory chips, a critical factor in supplying high-end GPUs such as the GeForce RTX 5090, which is rumored to command a $5,000 price tag amid AI-driven demand. While Micron has recently pulled back from consumer markets, SK hynix is doubling down on partnerships, including collaborations to optimize custom HBM and GDDR7 for graphics and AI acceleration.

Key Moves: What’s Next for SK hynix

- HBM4 Mass Production: Having completed preparations for mass production in September 2025, SK hynix is now scaling up HBM4 output to meet customer requests, reinforcing its dominance in high-bandwidth memory.

- DDR5 and GDDR7 Expansion: The company plans to accelerate adoption of its 1cnm process and to introduce SOCAMM2 and GDDR7 solutions tailored for AI and graphics applications.

- NAND Leadership: With 321-layer QLC and eSSD products, SK hynix is addressing AI data center storage needs, leveraging Solidigm’s expertise to deliver high-performance, cost-efficient solutions.

- Global Manufacturing Push: Construction of new fabs in Cheongju and Yongin, along with an advanced packaging facility in Indiana, U.S., is intended to give the company a flexible, scalable supply chain that can adapt to demand fluctuations.

- Shareholder Returns: A dividend of 3,000 won per share, the highest in the company’s history, combined with a 15.3 million share buyback worth 12.2 trillion won, signals confidence in sustained growth.

President Song Hyun Jong framed the results as a shift from mere product supplier to AI infrastructure partner, emphasizing the company’s role in enabling customers to meet performance demands. With memory shortages projected to persist through 2028, SK hynix’s ability to balance investment, profitability, and shareholder value positions it as a cornerstone of the AI economy.