Snap’s approach to feature development hinges on rapid experimentation: it tests thousands of variables across millions of users before any update reaches the main app. This process generates more than 10 petabytes of data every day, all of which must be processed within tight deadlines. To meet this demand, Snap has migrated its core data infrastructure from CPU-based systems to NVIDIA GPU-accelerated computing, significantly cutting costs and runtime while maintaining scalability.

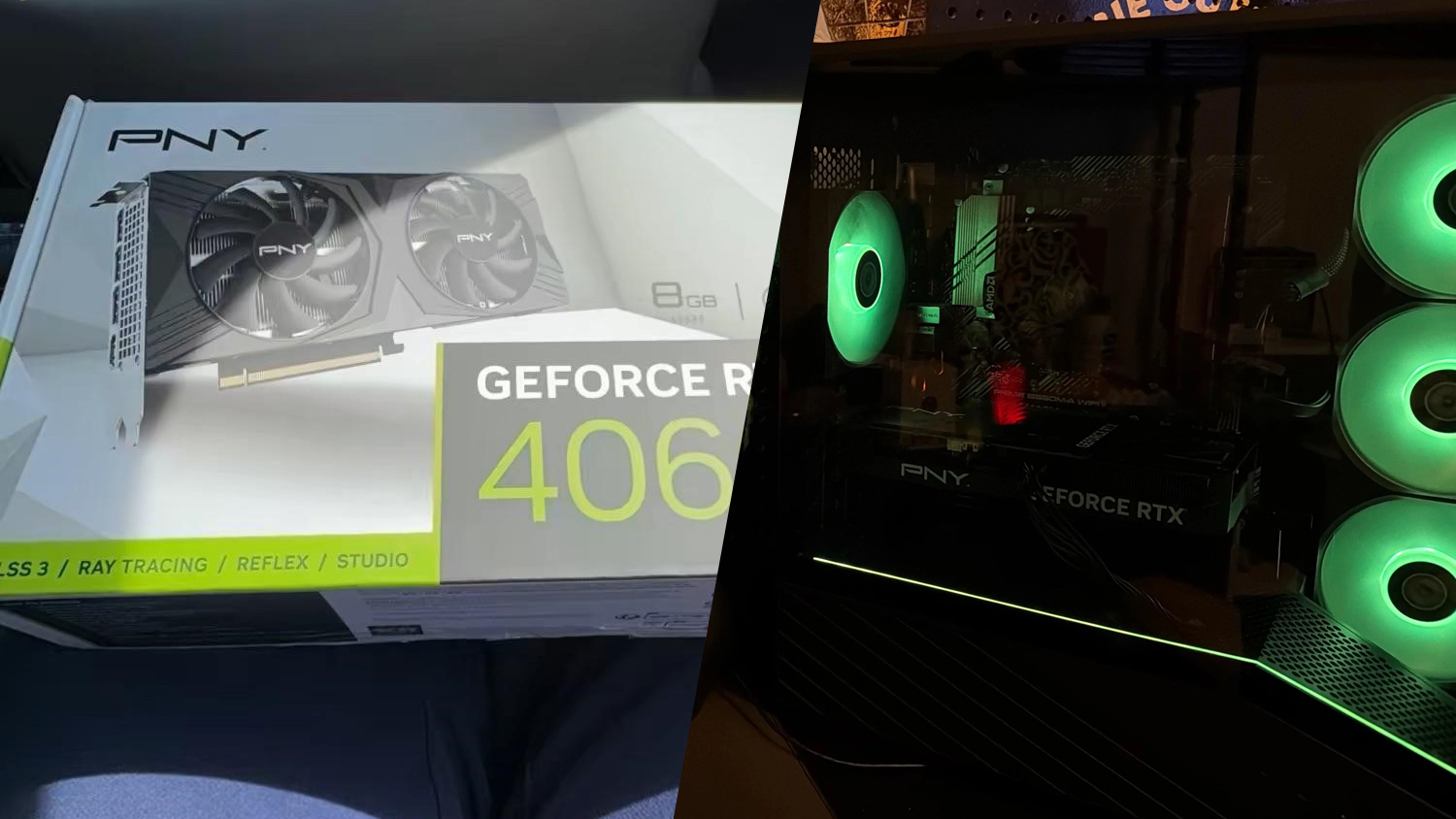

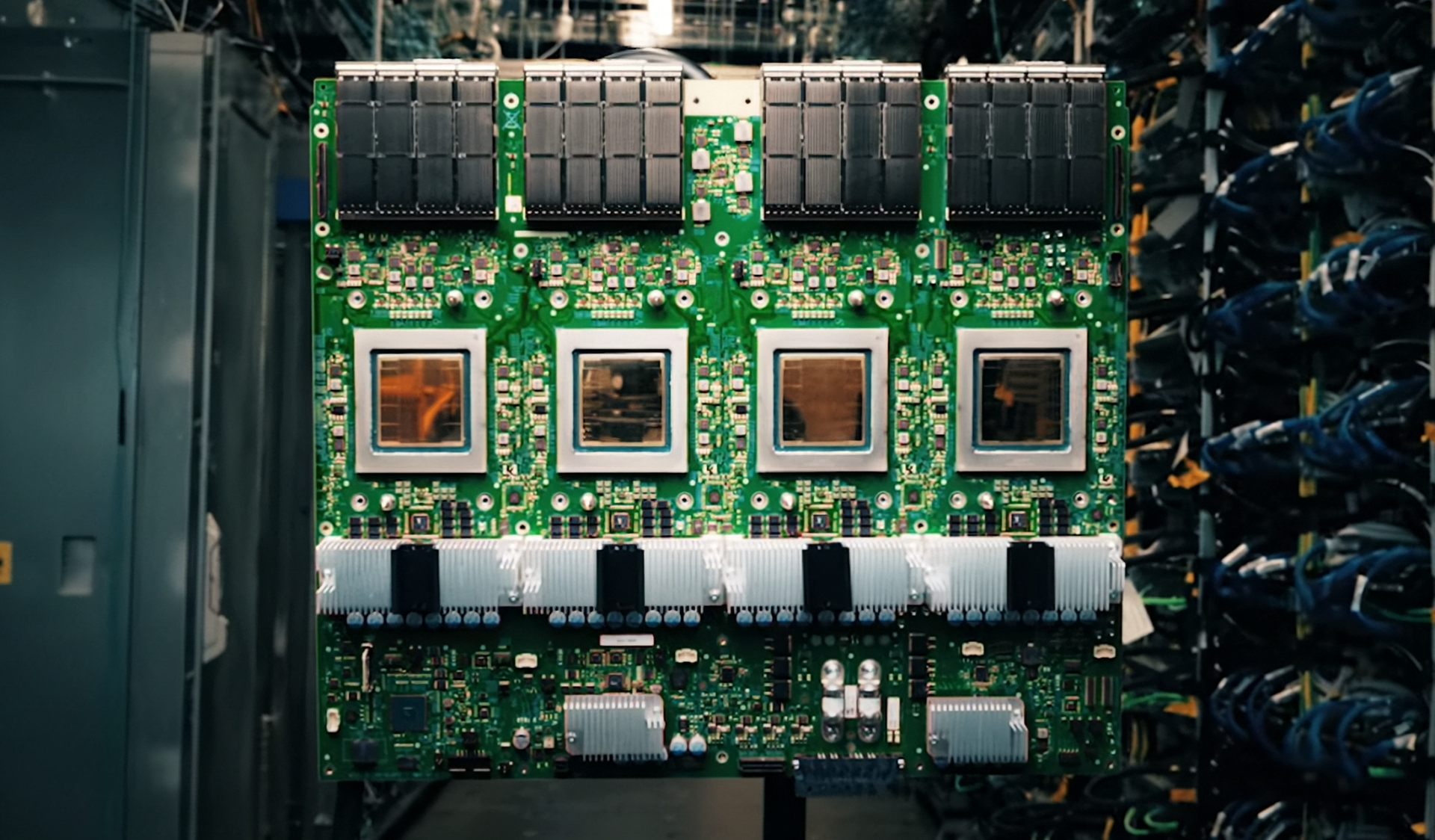

The shift relies on NVIDIA’s cuDF library, which integrates with Apache Spark to run distributed workloads on GPUs. Compared with CPU-based processing, this setup handles the same volume of data with 4x faster runtime and cuts Snap’s daily compute costs by up to 76%, while requiring fewer machines. The change is part of a broader move toward GPU-accelerated pipelines, which also includes NVIDIA’s CUDA-X libraries and Google Kubernetes Engine (GKE) for infrastructure management.
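The article does not describe Snap’s actual job configuration. As a rough sketch of what enabling GPU acceleration for a Spark job can look like, the snippet below assembles a `spark-submit` command using settings from the RAPIDS Accelerator for Apache Spark (the plugin that brings cuDF to Spark) and Spark’s own GPU resource scheduling. The jar name, the script name, and the choice of four tasks per GPU are assumptions made for illustration, not details from Snap.

```python
import shlex


def rapids_spark_conf(gpus_per_executor: int = 1, tasks_per_gpu: int = 4) -> dict:
    """Spark settings that load the RAPIDS Accelerator and schedule tasks on GPUs."""
    return {
        # Loads the RAPIDS Accelerator SQL plugin into the driver and executors.
        "spark.plugins": "com.nvidia.spark.SQLPlugin",
        # Lets supported SQL/DataFrame operators run on the GPU.
        "spark.rapids.sql.enabled": "true",
        # Standard Spark resource scheduling: GPUs requested per executor.
        "spark.executor.resource.gpu.amount": str(gpus_per_executor),
        # Fraction of a GPU each task claims, so several tasks can share one GPU.
        "spark.task.resource.gpu.amount": str(1 / tasks_per_gpu),
    }


def build_spark_submit(app_path: str, plugin_jar: str, conf: dict) -> list:
    """Assemble a spark-submit argument list; nothing is executed here."""
    args = ["spark-submit", "--jars", plugin_jar]
    for key, value in conf.items():
        args += ["--conf", f"{key}={value}"]
    return args + [app_path]


if __name__ == "__main__":
    # Hypothetical file names, for illustration only.
    command = build_spark_submit(
        "experiment_metrics.py", "rapids-4-spark.jar", rapids_spark_conf()
    )
    print(shlex.join(command))
```

Because the plugin works at the configuration level, existing Spark SQL and DataFrame code can usually stay unchanged; operators the plugin does not support fall back to the CPU.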

Performance and Cost Efficiency

The transition has delivered measurable improvements. Snap’s internal data shows that workloads now run on just 2,100 concurrent GPUs instead of the originally projected 5,500, a reduction of roughly 62%, thanks to optimized pipelines built on Google Cloud’s G2 virtual machines powered by NVIDIA L4 GPUs. The gain is particularly notable given the scale of Snap’s operations: thousands of experiments are processed every month, each analyzing metrics such as engagement and monetization.
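To put the reported figures side by side, the short calculation below converts them into fractions of their baselines. It uses only the numbers quoted in this article; treating the 4x speedup and the 76% saving as simple ratios against the CPU baseline is a simplifying assumption.

```python
def fraction_saved(actual: float, baseline: float) -> float:
    """Share of the baseline that is no longer needed."""
    return 1 - actual / baseline


# Figures quoted in the article.
PROJECTED_GPUS = 5_500
ACTUAL_GPUS = 2_100
SPEEDUP = 4.0          # "4x faster runtime"
COST_SAVING = 0.76     # "up to 76%" lower daily compute cost

gpu_reduction = fraction_saved(ACTUAL_GPUS, PROJECTED_GPUS)
relative_runtime = 1 / SPEEDUP       # runtime as a fraction of the CPU baseline
relative_cost = 1 - COST_SAVING      # cost as a fraction of the CPU baseline

if __name__ == "__main__":
    print(f"GPUs avoided vs. projection: {gpu_reduction:.0%}")
    print(f"Runtime vs. CPU baseline:    {relative_runtime:.0%}")
    print(f"Cost vs. CPU baseline:       {relative_cost:.0%}")
```

In other words, the optimized pipelines need a little over a third of the projected GPUs, and at best the job runs in about a quarter of the time for about a quarter of the cost.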

Looking Ahead

With the immediate benefits established, Snap’s team is exploring GPU acceleration for additional production workloads. Broader adoption could make this shift a model for other companies with high-volume data processing needs. Challenges remain, however, in balancing cost savings against long-term scalability, especially as user growth and feature complexity continue to rise.

The move underscores how open-source acceleration libraries are reshaping enterprise computing, offering a path to performance gains without proportional hardware investments. For Snap, the results so far are promising—but the full impact will depend on whether these optimizations can scale beyond its current pipelines.