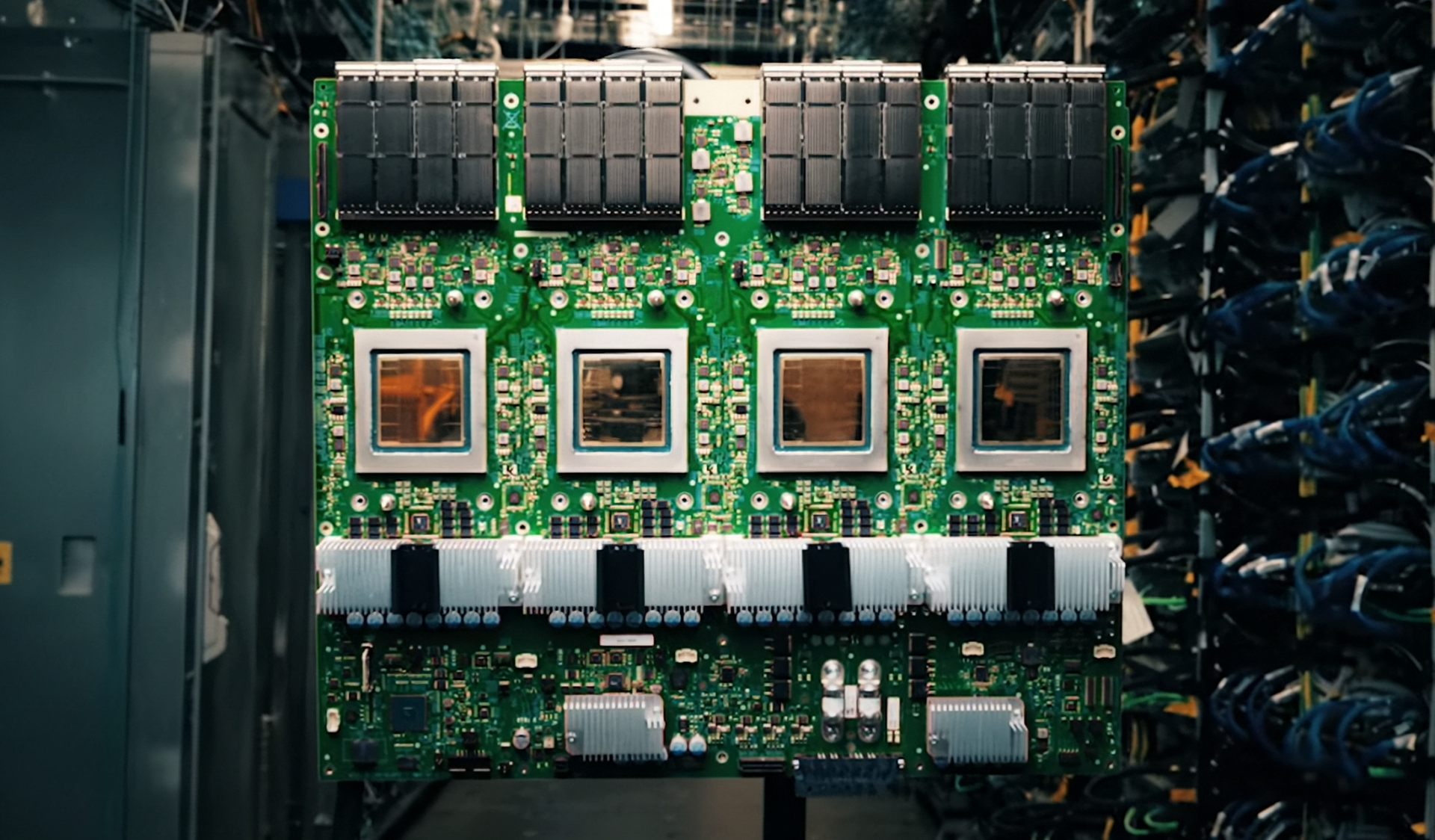

The shift toward AI-driven architectures is redefining storage requirements. NAND flash, once relegated to secondary roles, is now stepping into a performance-critical layer that demands deterministic latency and quality-of-service guarantees, much like traditional memory tiers.

Silicon Motion’s latest enterprise SSD controllers aim to meet this evolving need by integrating advanced workload isolation, dynamic optimization, and support for both TLC and QLC NAND. These solutions are tailored for AI inference systems where storage must operate as an active tier within the memory hierarchy, ensuring sustained bandwidth without compromising data integrity.

What Changed: From Background to Critical

Previously, enterprise SSDs were optimized for capacity and endurance but rarely prioritized latency-sensitive operations. Today’s AI workloads, particularly those built around NVIDIA’s Inference Context Memory Storage (ICMS) initiative, require storage that can absorb dynamic KV cache extensions with predictable latency. Silicon Motion’s MonTitan controllers address this by dynamically adjusting workload behavior, enhancing isolation, and managing tail latency so that GPUs spend less time waiting on storage.
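To make the tail-latency point concrete, here is a minimal sketch of why a small fraction of slow reads matters once an inference step fans out many reads and waits for all of them. The function names and the sample figures (64 reads per step, 1% slow reads) are illustrative assumptions, not measurements or anything published by Silicon Motion or NVIDIA.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: smallest sample with at least pct% of values <= it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def step_latency(read_latencies_us):
    """A step that waits for every read finishes only when the slowest read does."""
    return max(read_latencies_us)


def prob_step_hits_tail(fanout, tail_fraction):
    """Chance that at least one of `fanout` independent reads lands in the slow tail."""
    return 1.0 - (1.0 - tail_fraction) ** fanout


if __name__ == "__main__":
    # Hypothetical workload: 64 reads per step, 1% of reads are slow.
    print(f"steps stalled by a slow read: {prob_step_hits_tail(64, 0.01):.1%}")
```

Under these assumed numbers, roughly 47% of steps would include at least one slow read, even though 99% of individual reads are fast. That is why p99 latency, rather than the average, tends to be the figure that governs GPU utilization.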

Key Specs

- Controller Models: SM8466, SM8366, SM8308

- NAND Support: TLC and QLC (for nearline and warm data)

- Form Factors: E1.S, E1.L, E3.S, E3.L, U.2 (AI server-optimized)

- Boot Solutions: PCIe NVMe BGA SSDs with SM8008 controller

The controllers feature patented PerformaShape technology, which optimizes performance under mixed AI workloads while maintaining balanced power and throughput. This is particularly relevant for near-GPU storage tiers where sustained IOPS and low latency are essential.

Implications: A New Storage Tier Emerges

The integration of high-performance NAND into AI memory hierarchies introduces a new layer of complexity. Unlike traditional SSD controllers, these parts are expected to approach DRAM-like responsiveness while maintaining endurance through long-running inference tasks. This shift is evident in Silicon Motion’s focus on deterministic quality of service and multi-tenancy support, features previously uncommon in enterprise storage.

For AI infrastructure providers like VAST Data, this means tighter integration between intelligent data management software and hardware. The result is a more scalable architecture where NAND-based storage can handle massive datasets without bottlenecks, directly impacting GPU efficiency.

The Road Ahead: What’s Confirmed vs. Unknown

Silicon Motion has confirmed the availability of these controllers for AI server deployments, with reference design kits (RDKs) supporting leading enterprise form factors. However, real-world adoption hinges on how quickly AI frameworks adapt to storage-aware caching and workload isolation, an area still in development.

The most significant change is the redefinition of NAND’s role: no longer just a capacity-driven medium, but an active tier that must deliver DRAM-like responsiveness. This will shape the next generation of AI infrastructure, where storage efficiency directly translates to computational gains.