Moonshot AI’s Kimi K2.5 is more than another milestone in open-source AI; it is a stress test for the entire ecosystem. At 595GB, the model is so large that most developers cannot run it, which has reopened the debate over what ‘open’ really means. The lab’s recent Reddit AMA exposed the unglamorous reality behind frontier AI: debugging failures, diminishing returns from scale alone, and growing demand for models that fit on consumer GPUs rather than supercomputers.

The session also offered a rare glimpse of how labs like Moonshot are rethinking progress. With returns from scaling alone diminishing, the team is betting on Agent Swarm, a system that coordinates up to 100 sub-agents working on a task together. The bet suggests that the next gains may come from distributed intelligence rather than ever-larger monolithic models. But for enterprises and hobbyists alike, the bigger question remains: when will ‘open’ stop being a theoretical promise and start delivering usable performance?

Why Kimi K2.5’s Size Is Both Its Strength and Its Achilles Heel

Kimi K2.5’s 595GB footprint is more than a spec-sheet number; it is a dividing line. The model outperforms competitors such as Anthropic’s Claude on benchmarks including HLE (Humanity’s Last Exam) and MMMU-Pro, yet deploying it requires hardware most developers don’t have. The AMA laid the frustration bare: users aren’t simply asking for ‘smaller’ models. They want versions that fit on a single high-end GPU or a modest multi-GPU setup, with parameter counts in the 8B–70B range. Models of that size balance capability with accessibility.

Moonshot acknowledged the gap, hinting at future models in the 200B–300B range as a compromise. But even models of that size would need aggressive quantization or multi-GPU inference, so they are hardly a drop-in option for hobbyists. The tension is clear: open-source AI’s promise of democratization risks becoming a luxury for those with data-center budgets.
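A back-of-the-envelope calculation shows why the 8B–70B range keeps coming up and why 200B–300B models still strain hobbyist hardware. Weight memory is roughly parameter count × bits per parameter ÷ 8. The sketch below is a rough estimate, not a deployment calculator. It counts weights only and ignores the KV cache, activations and runtime overhead, so real needs are higher. The 24GB card size is an illustrative assumption for a single high-end consumer GPU.

```python
import math

# Illustrative assumption: one high-end consumer GPU with 24 GB of VRAM.
GPU_VRAM_GB = 24


def weight_memory_gb(params_billions: float, bits_per_param: int) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes).

    Weights only: ignores the KV cache, activations, and runtime overhead,
    so actual memory requirements will be higher.
    """
    # (params * 1e9) * bits / 8 bytes, divided by 1e9 bytes per GB
    return params_billions * bits_per_param / 8


def gpus_needed(params_billions: float, bits_per_param: int) -> int:
    """Minimum number of GPU_VRAM_GB cards that could hold the weights alone."""
    return math.ceil(weight_memory_gb(params_billions, bits_per_param) / GPU_VRAM_GB)


if __name__ == "__main__":
    for params, bits in [(8, 16), (70, 16), (70, 4), (200, 4), (300, 4)]:
        gb = weight_memory_gb(params, bits)
        print(f"{params}B @ {bits}-bit: ~{gb:.0f} GB of weights, "
              f"at least {gpus_needed(params, bits)} x {GPU_VRAM_GB} GB GPU(s)")
```

At 4-bit precision, a 300B model still needs about 150GB for its weights alone. That is roughly seven 24GB cards before any context is processed. A 4-bit 70B model needs about 35GB.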

Key Specs and What They Mean for Real-World Use

- Model Size: 595GB (full weights). Smaller Moonshot models (e.g., mixture-of-experts releases) are already available on Hugging Face.

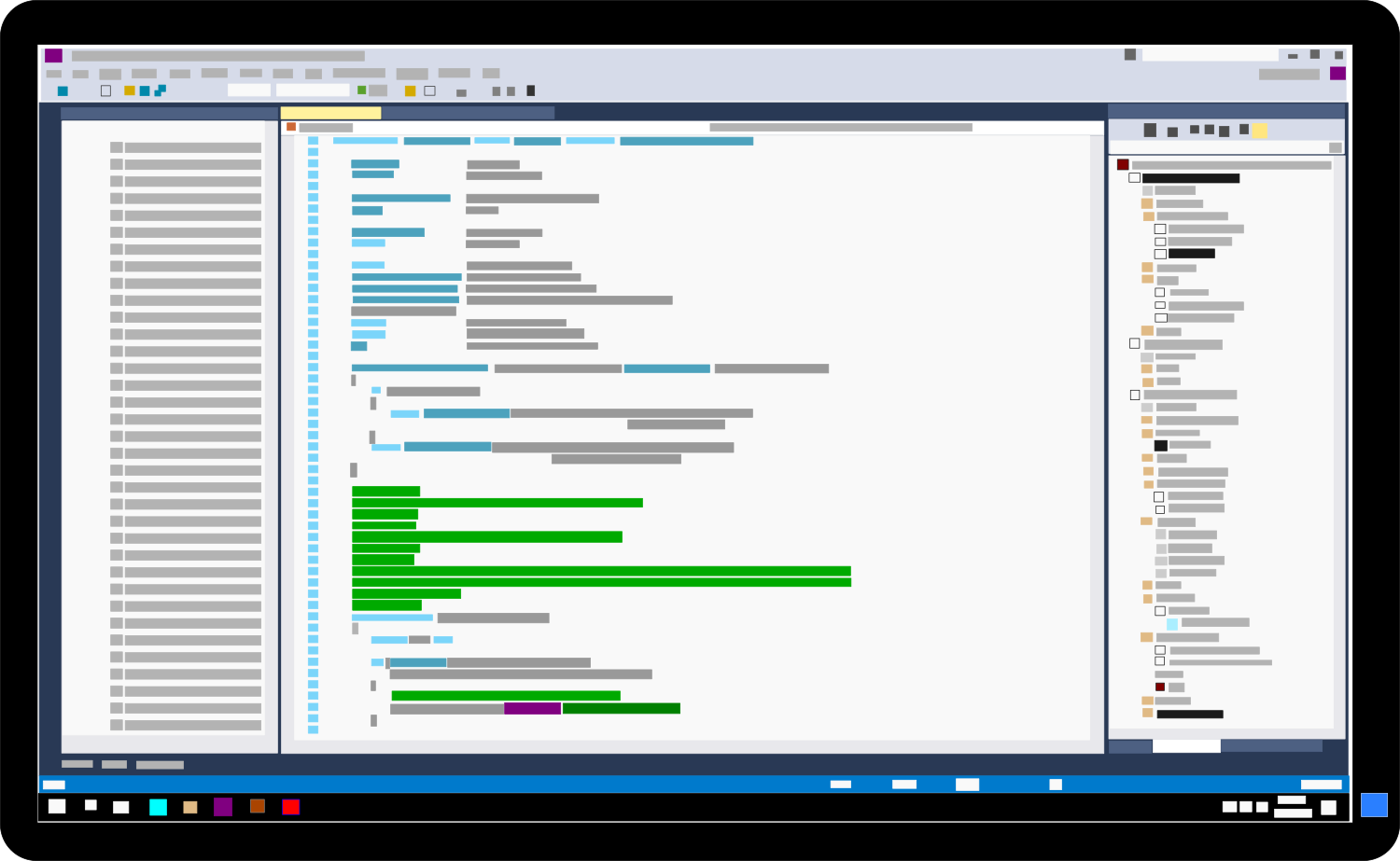

- Agent Swarm: Supports up to 100 parallel sub-agents with isolated memory per agent to prevent context degradation.

- Claimed Speedup: ~4.5x on parallelizable tasks. Actual gains depend on how well a task decomposes and how efficiently the orchestrator coordinates sub-agents.

- Training Focus: Shift from broad pretraining to reinforcement learning (RL) for agent-specific behaviors.

- Personality/Style: Drift observed between versions; the team cites the evolving reward model as the main obstacle to keeping style consistent.

- Next-Gen Hints: Kimi K3 may adopt a linear-attention architecture for more efficient long-context processing, plus continual learning to sustain agent performance over time.
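One way to read the ~4.5x figure is through Amdahl’s law, a standard model of parallel speedup. This is an illustrative framing, not one Moonshot cited. Suppose a fraction p of the work splits evenly across N sub-agents and the rest (planning, merging, orchestrator overhead) stays serial. Then the speedup is S = 1 / ((1 - p) + p / N). The sketch assumes ideal, evenly sized subtasks, which real agent workloads rarely are.

```python
def amdahl_speedup(parallel_fraction: float, n_agents: int) -> float:
    """Speedup when `parallel_fraction` of the work splits evenly across n_agents."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be in [0, 1]")
    if n_agents < 1:
        raise ValueError("n_agents must be at least 1")
    serial = 1.0 - parallel_fraction  # share that cannot be parallelized
    return 1.0 / (serial + parallel_fraction / n_agents)


def required_parallel_fraction(target_speedup: float, n_agents: int) -> float:
    """Invert Amdahl's law: the fraction p needed to reach target_speedup.

    From 1/S = (1 - p) + p/N it follows that p = (1 - 1/S) / (1 - 1/N).
    """
    if n_agents < 2:
        raise ValueError("need at least 2 agents for any parallel speedup")
    if not 1.0 <= target_speedup <= n_agents:
        raise ValueError("target_speedup must be between 1 and n_agents")
    return (1.0 - 1.0 / target_speedup) / (1.0 - 1.0 / n_agents)


if __name__ == "__main__":
    p = required_parallel_fraction(4.5, 100)
    print(f"parallel fraction needed for 4.5x with 100 agents: {p:.1%}")
    print(f"ceiling with unlimited agents at that fraction: {1 / (1 - p):.2f}x")
```

The AMA did not say how many sub-agents were active when the 4.5x was measured. If all 100 were, roughly 79% of the work would have to parallelize. At that fraction, even unlimited agents would top out near 4.7x. Under this model, task decomposition matters more than adding agents.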

The specs tell only part of the story. Agent Swarm’s design, in which each sub-agent works with its own independent memory, addresses a critical bottleneck in multi-agent systems: context rot. Conventional orchestration floods a central coordinator with logs and partial outputs until its context is cluttered and performance degrades. Moonshot’s approach mirrors enterprise-grade microservices: bounded outputs, clean control planes, and task-specific sub-agents. Its real-world impact, however, depends on whether the orchestration stays stable under production workloads. The team promises to open-source the orchestration framework ‘very soon,’ though it gave no timeline.
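The separation described above can be sketched with Python’s asyncio: private per-agent memory, bounded outputs, and a lean coordinator. Moonshot has not published its orchestration framework, so this illustrates the pattern under stated assumptions and is not its design. `SubAgent`, `orchestrate` and the 200-character report cap are hypothetical. The agents’ work is a placeholder loop rather than model or tool calls.

```python
import asyncio
from dataclasses import dataclass, field

MAX_AGENTS = 100        # concurrency cap, echoing the "up to 100 sub-agents" figure
MAX_REPORT_CHARS = 200  # hypothetical bound on what a sub-agent may return


@dataclass
class SubAgent:
    """Hypothetical sub-agent whose working memory is private to it."""
    task: str
    memory: list[str] = field(default_factory=list)

    async def run(self) -> str:
        # Placeholder for real work (model calls, tool use). Every
        # intermediate step lands in this agent's own memory only.
        for step in range(3):
            self.memory.append(f"step {step} on {self.task!r}")
            await asyncio.sleep(0)  # yield to the event loop, as real I/O would
        # Only a short, bounded report leaves the agent.
        report = f"{self.task}: done after {len(self.memory)} steps"
        return report[:MAX_REPORT_CHARS]


async def orchestrate(tasks: list[str]) -> list[str]:
    """Fan tasks out to isolated sub-agents and collect only bounded reports."""
    limit = asyncio.Semaphore(MAX_AGENTS)

    async def run_one(task: str) -> str:
        async with limit:  # no more than MAX_AGENTS run at the same time
            return await SubAgent(task).run()

    # The coordinator receives these short reports, not each agent's full
    # step log; that separation is what keeps its context from bloating.
    return await asyncio.gather(*(run_one(t) for t in tasks))


if __name__ == "__main__":
    for line in asyncio.run(orchestrate([f"subtask-{i}" for i in range(5)])):
        print(line)
```

Bounding each report keeps the coordinator’s context small as the number of sub-agents grows. In a real system, the truncation step would more plausibly be a summarization call or a structured-output schema.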

Who Should Care—and Why

Enterprises: Organizations evaluating AI for workflow automation may find Agent Swarm’s structured outputs and tool-use capabilities compelling. The shift toward RL-driven training suggests future models may prioritize task-specific reliability over raw benchmark scores, a critical factor for production deployment.

Developers: Hobbyists and small teams will be watching for smaller, quantized variants. The AMA’s focus on debugging and failure management underscores that frontier AI is less about flashy releases than about iterative, hardware-aware engineering. Moonshot’s mention of linear attention in K3 hints at more efficient long-context handling, but only if linear attention holds up in quality at scale.
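Moonshot did not say which form of linear attention K3 might use. The sketch below therefore shows only the generic idea behind kernelized linear attention (Katharopoulos et al., 2020), not Moonshot’s architecture. Replacing softmax with a positive feature map lets the sum over past tokens collapse into a fixed-size running state. Cost then grows linearly with sequence length instead of quadratically. The feature map (elu + 1) and all function names are illustrative choices.

```python
import numpy as np


def feature_map(x: np.ndarray) -> np.ndarray:
    """phi(x) = elu(x) + 1: keeps every feature positive so normalizers never hit zero."""
    return np.where(x > 0, x + 1.0, np.exp(np.minimum(x, 0.0)))


def causal_linear_attention(q: np.ndarray, k: np.ndarray, v: np.ndarray) -> np.ndarray:
    """O(n) causal attention: a fixed-size running state replaces the n x n score matrix."""
    phi_q, phi_k = feature_map(q), feature_map(k)
    state = np.zeros((phi_k.shape[1], v.shape[1]))  # running sum of phi(k_j) v_j^T
    norm = np.zeros(phi_k.shape[1])                  # running sum of phi(k_j)
    out = np.empty((q.shape[0], v.shape[1]))
    for i in range(q.shape[0]):
        state += np.outer(phi_k[i], v[i])
        norm += phi_k[i]
        out[i] = (phi_q[i] @ state) / (phi_q[i] @ norm)
    return out


def causal_quadratic_reference(q: np.ndarray, k: np.ndarray, v: np.ndarray) -> np.ndarray:
    """Same kernel computed the O(n^2) way, to check the linear-time version."""
    scores = np.tril(feature_map(q) @ feature_map(k).T)  # mask: token i sees j <= i
    return (scores @ v) / scores.sum(axis=1, keepdims=True)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    q, k, v = (rng.standard_normal((16, 8)) for _ in range(3))
    print(np.allclose(causal_linear_attention(q, k, v), causal_quadratic_reference(q, k, v)))
```

The two functions agree exactly, which is the point of the reformulation. The open question for long-context models lies elsewhere. This kernel is not softmax, and labs still have to show that linear variants match softmax attention’s quality at frontier scale.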

Researchers: The lab’s candid discussion of scaling limits and ‘soul’ (a metaphor for personality and reward alignment) reflects a broader industry reckoning. The supply of high-quality training data is failing to keep pace with compute growth. In response, labs are exploring alternative scaling paradigms, such as Agent Swarm’s test-time parallelism, to get around traditional pretraining bottlenecks.

The Hard Truth: Debugging Is the Real Frontier

The most revealing moment came when a Moonshot engineer described research as ‘mostly about managing failure.’ The team recounted spending months debugging an experimental architecture (Kimi Linear) before it passed validation. That process helps explain why so many promising AI ideas never ship. For enterprises, this means vendor reliability isn’t just about benchmarks. It’s about whether a lab can sustain the kind of rigorous, iterative testing that turns promising ideas into production-ready systems.

Kimi K2.5’s 595GB size reflects the same challenge. The model’s capabilities are impressive, but its accessibility is limited by the constraints that plague open-source AI more broadly: hardware dependency, debugging overhead, and the gap between theoretical openness and practical deployment. The AMA did more than market a product. It laid out the industry’s next battleground: turning ‘open’ into something that actually runs.

Availability and pricing have not been confirmed for smaller model variants or the Agent Swarm orchestration framework.