Enterprises chasing AI at scale now face a critical tradeoff: the ability to process vast datasets faster than ever comes with a price tag that pushes the boundaries of what most organizations can afford. MSI’s latest portfolio, built around NVIDIA’s Blackwell GPUs and DGX Station architecture, aims to bridge this gap by offering data center-class performance in both server and workstation form factors—though the question remains whether the value justifies the cost.

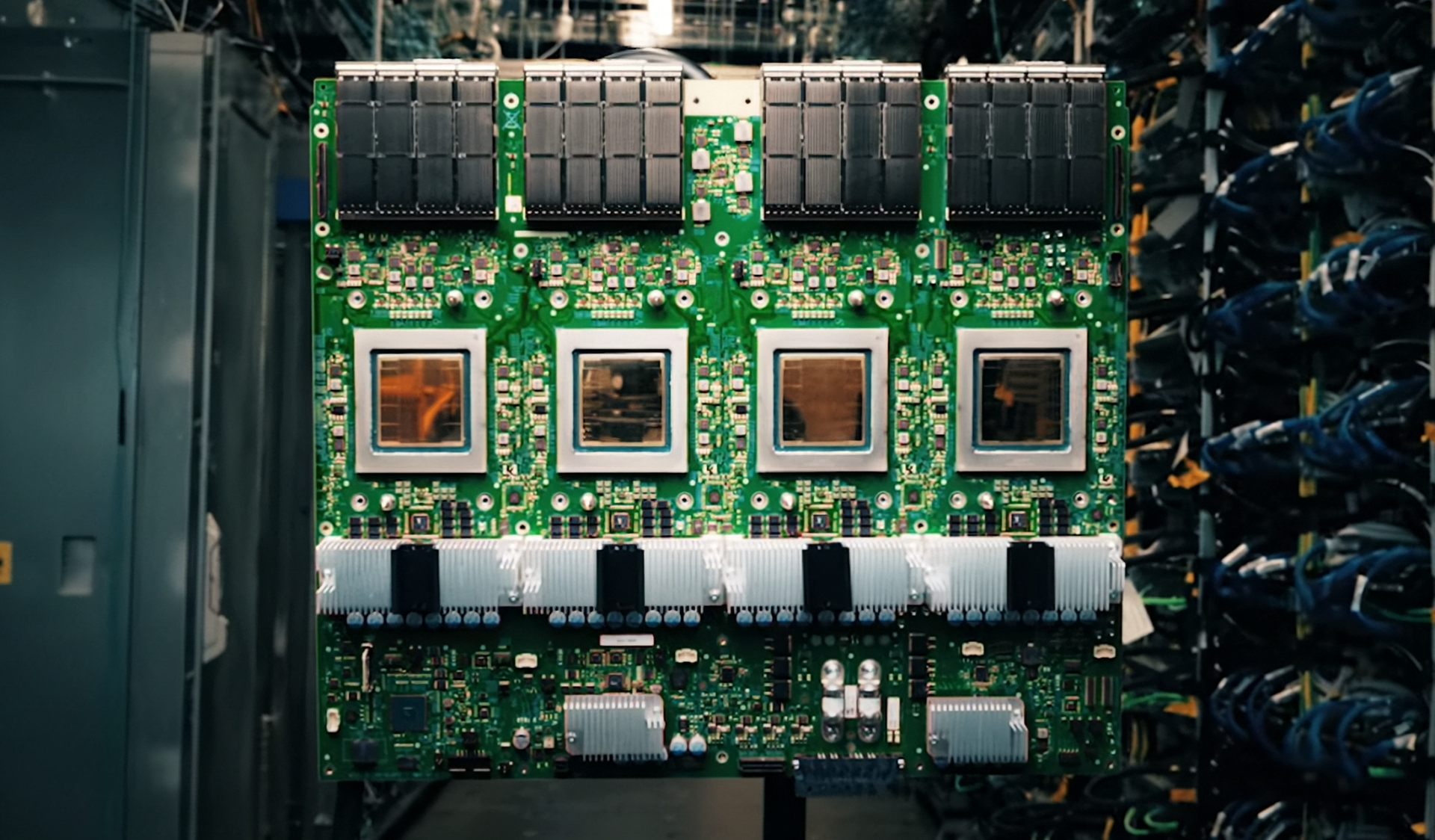

The foundation of MSI’s push is the NVIDIA MGX platform, a modular architecture designed for AI training, large-scale inference, and high-performance computing (HPC). Unlike traditional GPU servers, MGX-based systems allow flexible CPU choices, ranging from Intel Xeon to AMD EPYC 9005 series processors, and support dense GPU, memory, and networking configurations. For example, the CG481-S6053, a dual-socket AMD EPYC server, offers eight PCIe 5.0 GPU slots, 24 DDR5 DIMM slots (supporting up to 748 GB of memory), and eight 400G Ethernet ports via NVIDIA ConnectX-8 SuperNICs. The pitch goes beyond raw specs: advanced liquid-cooling designs are meant to keep the system within thermal limits under sustained AI workloads.
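To put the CG481-S6053 networking figures in perspective, here is a back-of-the-envelope sketch of the aggregate line rate. It uses only the port count and port speed quoted above. The even split across the eight GPU slots is an illustrative assumption, not a documented topology, and nominal line rate overstates real throughput.

```python
# Nominal figures quoted for the CG481-S6053; actual throughput will be
# lower once protocol overhead and topology are taken into account.
PORTS = 8          # 400G Ethernet ports via ConnectX-8 SuperNICs
PORT_GBPS = 400    # nominal line rate per port, in gigabits per second
GPU_SLOTS = 8      # PCIe 5.0 GPU slots


def aggregate_gbps(ports: int, gbps_per_port: float) -> float:
    """Return the combined nominal line rate in gigabits per second."""
    return ports * gbps_per_port


total_gbps = aggregate_gbps(PORTS, PORT_GBPS)
total_gbytes_per_s = total_gbps / 8        # 8 bits per byte
# Assumption for illustration only: bandwidth shared evenly across GPU slots.
per_gpu_gbps = total_gbps / GPU_SLOTS

print(f"Aggregate: {total_gbps:.0f} Gb/s ({total_gbps / 1000:.1f} Tb/s)")
print(f"Aggregate: {total_gbytes_per_s:.0f} GB/s")
print(f"Per GPU slot (even split): {per_gpu_gbps:.0f} Gb/s")
```

On paper, that works out to 3.2 Tb/s of network bandwidth for a single chassis, or roughly one 400G port per GPU slot if the ports are shared evenly.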

But while MSI touts ‘exceptional scalability and performance density,’ the real-world implications are worth examining. The CG481-S6053 is positioned as a powerhouse for compute-intensive AI clusters, yet its 748 GB of DDR5 memory, while impressive, comes at a significant premium. For organizations already stretching their budgets to deploy Blackwell-based GPUs (like the RTX PRO 6000 Server Edition), adding that much RAM may not be a straightforward upgrade. The same goes for storage: the system supports up to twenty PCIe Gen 5 NVMe bays, offering very high data throughput but at a cost that could make smaller enterprises hesitate.

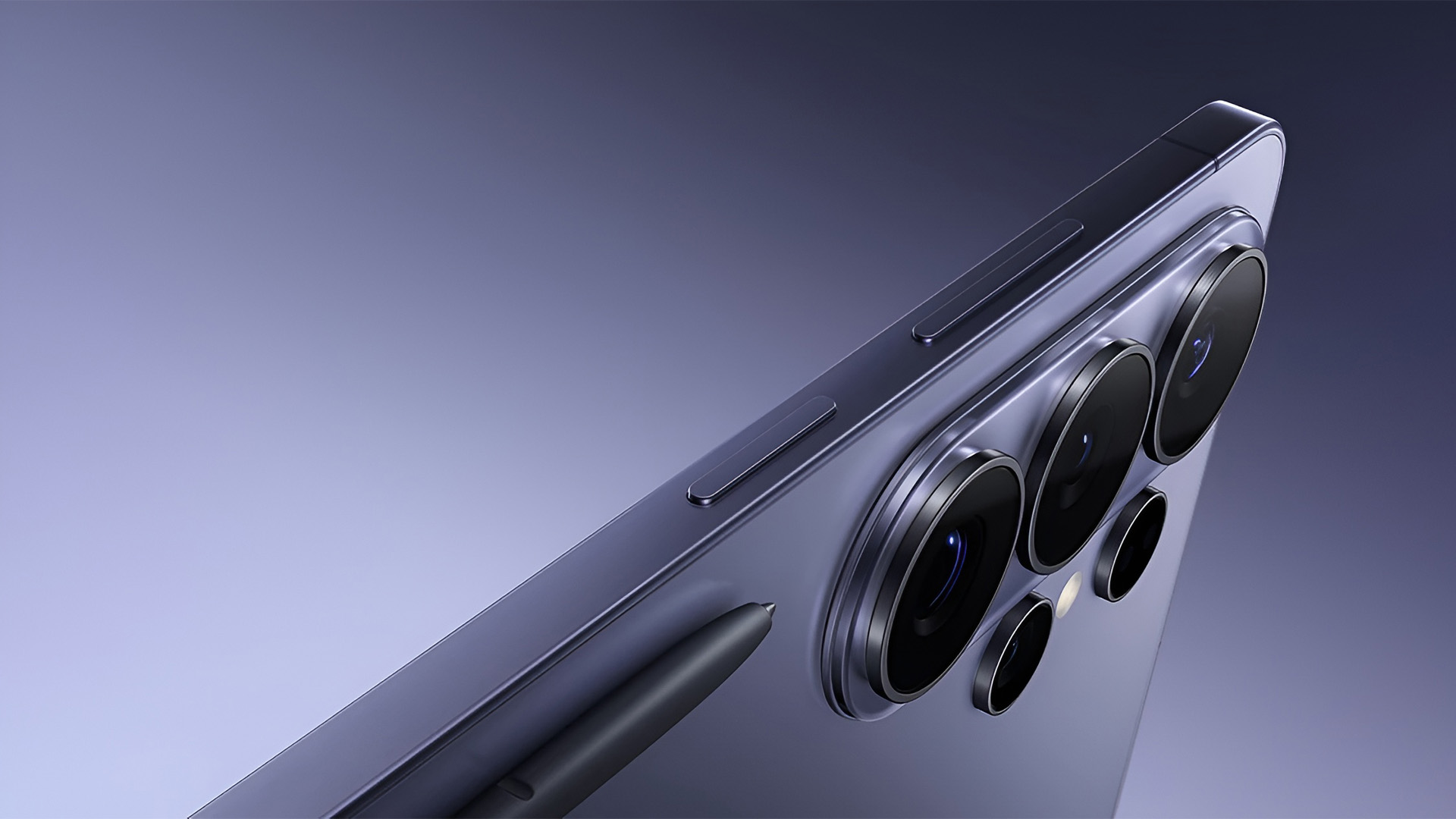

MSI isn’t stopping at servers. The XpertStation WS300, a workstation built on NVIDIA’s DGX Station architecture, brings data center-level acceleration to the desktop, or at least to the desks of AI researchers and developers. Packing a Grace Blackwell Ultra Desktop Superchip with 748 GB of coherent memory, dual 400GbE networking ports, and a 1600 W power supply, this system is essentially a plug-and-play supercomputer for AI model development. The appeal is clear: data scientists no longer need to rely solely on remote clusters or cloud instances to run complex simulations or fine-tune models. However, the WS300’s pricing, expected to be in line with NVIDIA’s premium DGX Station lineup, will likely limit its adoption to enterprises with deep pockets.

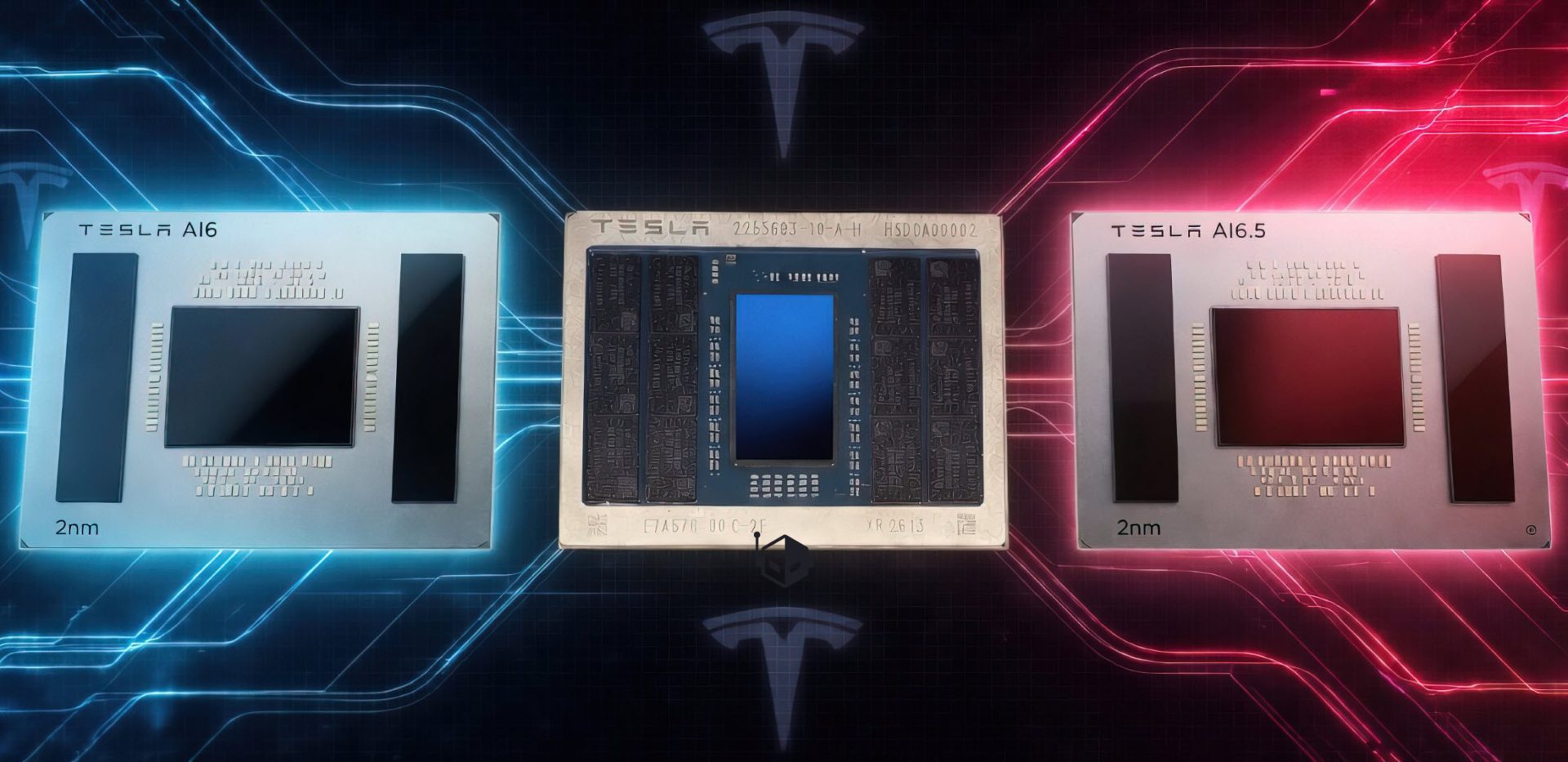

There’s also the question of long-term viability. NVIDIA’s Blackwell architecture is still relatively new, and while it promises efficiency gains (it is built on a custom TSMC 4 nm-class process), the real-world performance benefits remain unproven at scale. Competitors like AMD, with their RX 9070 XT and upcoming Zen 6 processors, are also pushing boundaries in AI-optimized hardware, but without the same level of ecosystem integration that NVIDIA offers. For now, MSI’s portfolio leans heavily on NVIDIA’s dominance. Whether that translates into sustained market share, or whether AMD can carve out a niche, remains to be seen.

For organizations willing to invest in these systems, the payoff could be substantial. Real-time multi-camera processing for smart cities, automated video analytics, and high-density AI training are all within reach with MSI’s new platforms. But the cost of entry is steep, and the question isn’t just about whether these systems deliver on their promises—it’s whether they represent a net positive for enterprise AI budgets, or if they’re merely another step in an already expensive race to the top.