Autonomy isn’t just about software anymore. It’s about physics—how robots perceive, navigate, and interact with a messy, unpredictable world. NVIDIA is betting big on Physical AI, a new paradigm where open-source models and simulation frameworks become the backbone of robotic intelligence. The move marks a shift from proprietary research to collaborative development, where engineers, researchers, and businesses can co-build the next generation of autonomous systems.

The Omniverse as a Robotic Sandbox

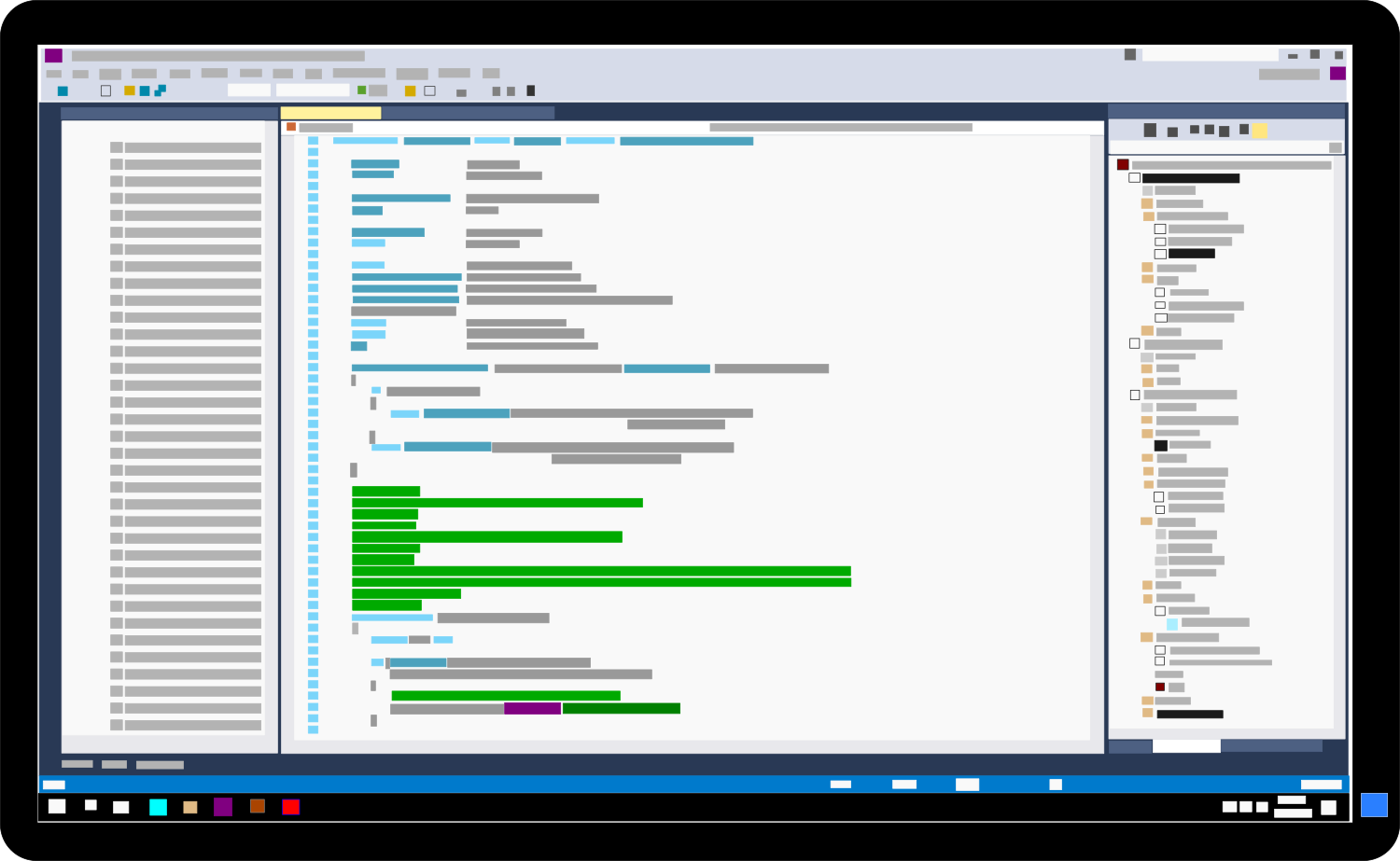

At the heart of this push is NVIDIA’s Omniverse platform, now expanded to include Isaac Sim, a physics-accurate simulation environment designed to train robots in virtual twins of real-world scenarios. Unlike traditional AI training, which often relies on static datasets, Physical AI thrives on dynamic, physics-driven interactions—where a robot must adapt to shifting weights, unpredictable surfaces, or even human interference.

Key to this ecosystem are two newly open-sourced tools:

- NVIDIA Isaac Sim – A high-fidelity simulator that replicates real-world physics for robotic training, now available for free under an open-source license.

- NVIDIA Physical AI Models – Pre-trained AI models optimized for tasks like grasping, manipulation, and navigation, ready for deployment across industries.

These tools aren’t just for research labs. They’re built to integrate with existing robotic hardware, from warehouse automation systems to surgical assistants, reducing the time and cost of bringing autonomous systems to market.

Why Open-Source? The Ecosystem Imperative

Historically, robotics development has been fragmented—each company or research group building its own simulation pipelines, datasets, and AI models. The result? High costs, slow innovation, and a lack of standardization. NVIDIA’s strategy flips this script by offering a shared foundation.

For autonomous vehicles, this means training self-driving systems in simulated cities before they hit the road. For industrial robots, it translates to faster deployment of AI-powered arms that can handle delicate tasks like assembling electronics or sorting recyclables. Even medical robotics could benefit—surgical assistants trained in virtual operating rooms before ever touching a patient.

The open-source approach also levels the playing field. Startups and academia no longer need NVIDIA’s hardware to experiment; they can prototype in the cloud or on their own workstations. This democratization could spawn breakthroughs in niche applications—like underwater drones or space robotics—where specialized hardware is scarce.

Who Stands to Gain?

The immediate beneficiaries are clear:

- Robotics Developers – Access to pre-built AI models and simulation tools can shorten development cycles, potentially by months.

- Autonomous Vehicle Makers – Virtual testing reduces the need for physical prototypes, lowering costs and potentially shortening the path to regulatory approval.

- Industrial Manufacturers – AI-powered robots can be fine-tuned for specific tasks without manual programming, improving efficiency in factories.

- Research Institutions – Universities and labs gain a standardized platform to collaborate on Physical AI advancements.

Even competitors in the AI and robotics space may find value in the ecosystem. While NVIDIA’s GPUs remain the preferred hardware for running these models, the open-source frameworks could encourage broader adoption—meaning more developers become familiar with NVIDIA’s tools, potentially driving future sales.

The long-term vision is bold: a world where autonomy isn’t just for tech giants or well-funded startups, but for anyone with a problem to solve. Whether it’s a farmer using AI-powered drones to monitor crops or a hospital deploying robotic assistants for surgery, the tools are now in place to make it happen—collaboratively.

One thing is certain: the race for robotic intelligence just got a lot more open.