The memory wars are heating up, but NVIDIA isn't rushing to the front lines. As high-bandwidth flash (HBF) modules inch closer to market, with some industry players already sampling, the company remains committed to its High Bandwidth Memory (HBM) roadmap. This isn't just about sticking with what works; it's a strategic bet that HBM will keep delivering the performance and reliability NVIDIA's customers demand, particularly in AI, graphics, and high-performance computing.

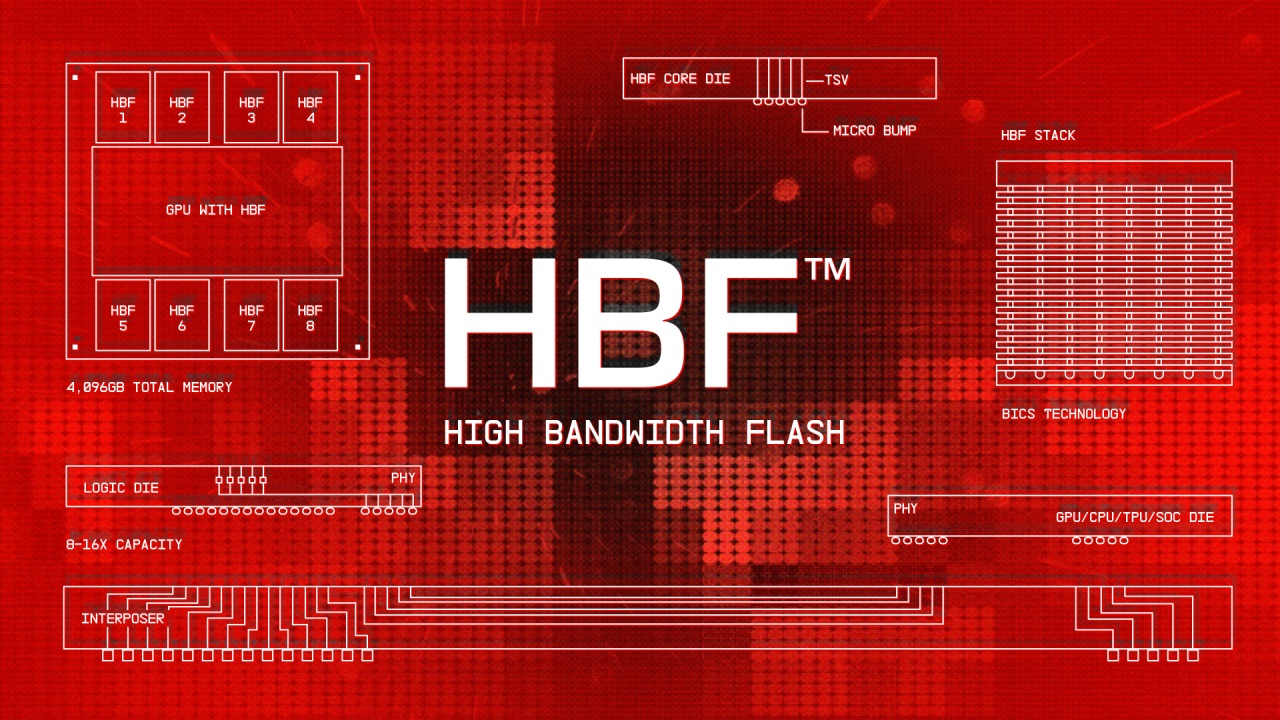

HBF takes a different path: it stacks NAND flash dies behind a wide, HBM-style interface, aiming to combine flash's capacity and scalability with bandwidth approaching that of DRAM-based HBM. Early proposals put as much as 4TB of HBF on a single GPU, far more capacity than today's largest HBM configurations, which could be a major advantage for memory-hungry data center workloads. But for NVIDIA, the appeal is tempered by practical concerns: power efficiency, thermal management, and compatibility with existing architectures remain unresolved. The company's decision to hold off isn't just about avoiding risk; it reflects confidence that HBM can evolve to meet future demands without a full-scale pivot.

For now, NVIDIA's ecosystem, including GPUs built on the Blackwell architecture, relies on HBM sourced from established memory makers such as SK hynix, Samsung, and Micron. HBM has been battle-tested in data centers and workstations, providing the stability that enterprise customers prioritize. But stability doesn't mean stagnation: NVIDIA is pushing its memory suppliers toward higher densities and better efficiency to keep pace with AI's relentless appetite for performance.

The potential downside? If HBF matures faster than expected, NVIDIA could cede ground to competitors who adopt it early. Still, the company's focus on HBM keeps its products optimized for today's workloads and avoids the pitfalls of unproven technology. The real question isn't whether HBF will succeed, but whether it will succeed quickly enough to leave NVIDIA behind.

For buyers, the immediate implications are clear: expect continuity. Next-generation GPUs from NVIDIA will continue leveraging HBM, delivering performance that’s been refined over years of development. But the long-term picture is less certain. If HBF proves itself in data centers and supercomputers, it could force a rethink across the industry. For now, though, NVIDIA’s strategy remains one of patience—waiting for HBM to evolve while keeping an eye on the horizon.

The memory landscape is shifting, but NVIDIA’s stance underscores a broader truth: technology adoption isn’t just about potential; it’s about timing, compatibility, and risk tolerance. HBF may be the future, but for now, HBM remains the present—and that’s where NVIDIA is doubling down.