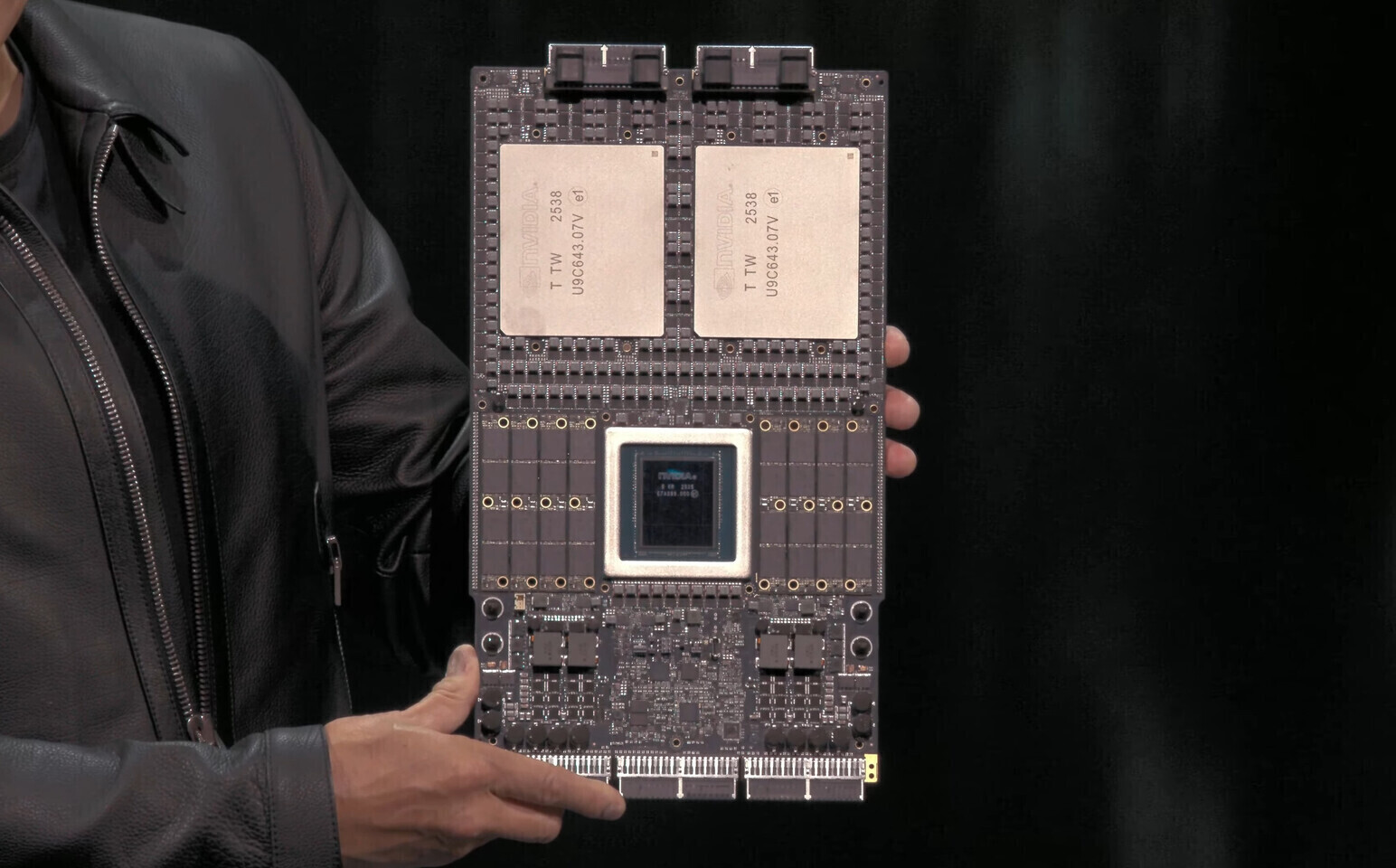

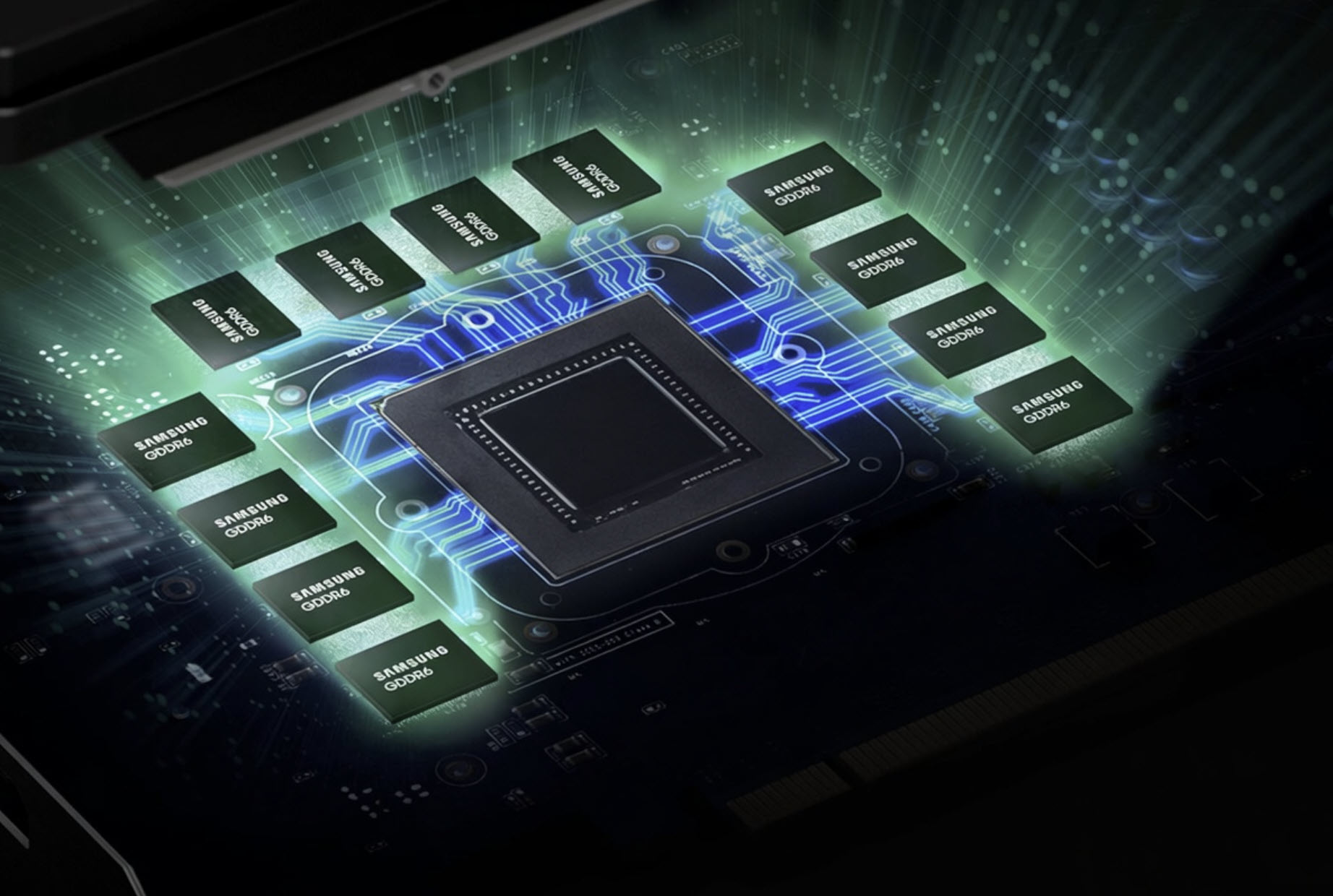

NVIDIA’s push into AI infrastructure is reshaping its supply chain, and the latest development signals a major realignment in high-bandwidth memory. The Vera Rubin platform, set to debut as VR200 NVL72 rack-scale systems later this year, will no longer include Micron as an HBM4 supplier, instead sourcing the critical memory exclusively from SK Hynix and Samsung.

The decision underscores the high stakes of NVIDIA’s demand for performance: the company raised its system bandwidth target from 13 TB/s (announced in March 2025) to a confirmed 22 TB/s at CES 2026. That is a jump of nearly 70% in memory throughput, achieved through aggressive scaling of HBM4 specifications. For SK Hynix and Samsung, the arrangement amounts to a duopoly: SK Hynix is expected to supply 70% of the HBM4 needs, while Samsung covers the remaining 30%. Micron, meanwhile, is being sidelined from this segment entirely.
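The percentage quoted above is easy to check. The following is a minimal sketch that uses only the figures reported in this article; the constant and function names are illustrative assumptions, not anything published by NVIDIA.

```python
# Figures as quoted in the article (TB/s); these are reported numbers, not measurements.
BANDWIDTH_MARCH_2025 = 13.0
BANDWIDTH_CES_2026 = 22.0

# Reported HBM4 supply split, in whole percent (expected shares, not confirmed contracts).
HBM4_SHARE = {"SK Hynix": 70, "Samsung": 30}


def percent_increase(old: float, new: float) -> float:
    """Return the relative increase from old to new, in percent."""
    return (new - old) / old * 100.0


if __name__ == "__main__":
    jump = percent_increase(BANDWIDTH_MARCH_2025, BANDWIDTH_CES_2026)
    print(f"Bandwidth increase: {jump:.1f}%")  # prints "Bandwidth increase: 69.2%"
    print(f"HBM4 shares sum to {sum(HBM4_SHARE.values())}%")  # prints "HBM4 shares sum to 100%"
```

The result, roughly 69.2%, matches the jump of nearly 70% described above, and the two reported shares account for the full HBM4 supply.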

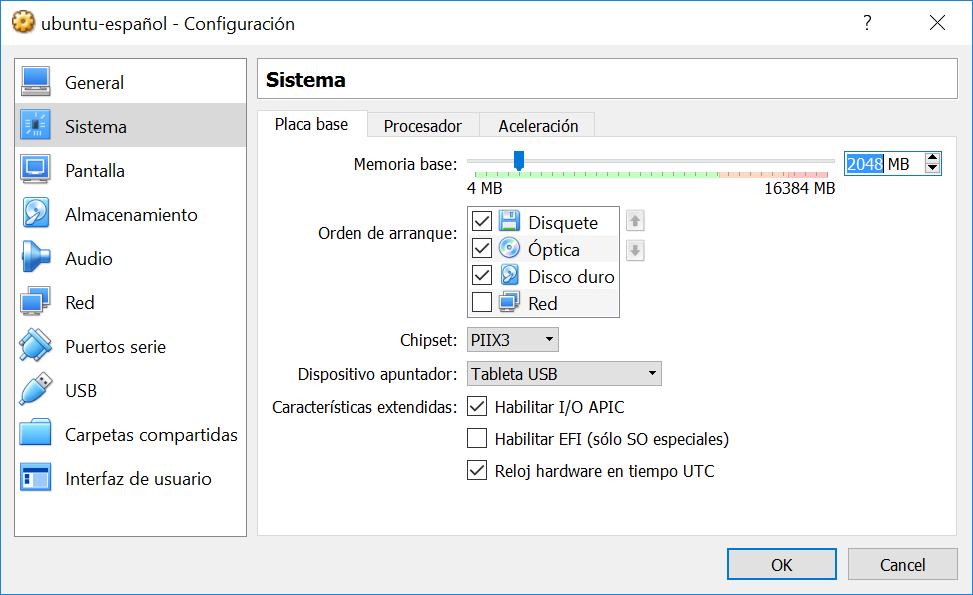

But Micron isn’t left out of the Vera Rubin ecosystem. The company will instead supply LPDDR5X memory for the platform’s CPUs, each of which can scale up to 1.5 TB. This shift aligns with NVIDIA’s broader strategy of modularizing its AI hardware, where Micron remains a key player in SOCAMM2, a form factor for high-density LPDDR5X memory that is critical for standalone Vera CPUs. These CPUs, designed to compete with Intel Xeon and AMD EPYC, will draw on Micron’s LPDDR5X expertise, so the company retains a foothold despite losing the HBM4 contract.

Key specs for the VR200 NVL72 and Vera Rubin platform

- HBM4 Memory: Exclusively sourced from SK Hynix (70%) and Samsung (30%). No Micron involvement.

- LPDDR5X Memory: Up to 1.5 TB per CPU, supplied by Micron for SOCAMM2 modules.

- System Bandwidth: 22 TB/s, confirmed at CES 2026 (up from the 13 TB/s announced in March 2025).

- Target Launch: Late summer 2026 for VR200 NVL72 rack systems.

- Memory Form Factors: HBM4 for AI accelerators, LPDDR5X (SOCAMM2) for CPUs.

The exclusion of Micron from HBM4 may reflect NVIDIA’s tightening performance requirements, as the company’s bandwidth targets outpaced even its initial projections. Micron’s absence from HBM4 is notable, but its role in LPDDR5X keeps it a critical supplier for the platform’s CPU-side memory, a segment where it may even hold an exclusive position. For SK Hynix and Samsung, the deal solidifies their dominance in NVIDIA’s high-end memory supply chain, particularly as demand for AI infrastructure continues to surge.

The Vera Rubin platform isn’t just a technical upgrade; it’s a strategic pivot. By locking in SK Hynix and Samsung for HBM4, NVIDIA is betting on scalability and reliability for its next wave of AI systems. Meanwhile, Micron’s pivot to LPDDR5X shows how NVIDIA is spreading different memory types across different suppliers to avoid single points of failure. With RTX 50-series GPUs (including an RTX 5090 rumored to reach $5,000) already straining memory supply chains, Vera Rubin’s memory strategy could set the tone for how NVIDIA balances performance and partnerships in the years ahead.