The race for AI-driven efficiency is heating up, and the latest hardware announcements signal a fundamental change in how data centers will operate. Modern cloud workloads are no longer just a matter of raw power: they demand smarter processing that balances performance against cost per operation. This shift isn’t just about faster clocks; it’s about rethinking how silicon handles complex tasks at scale.

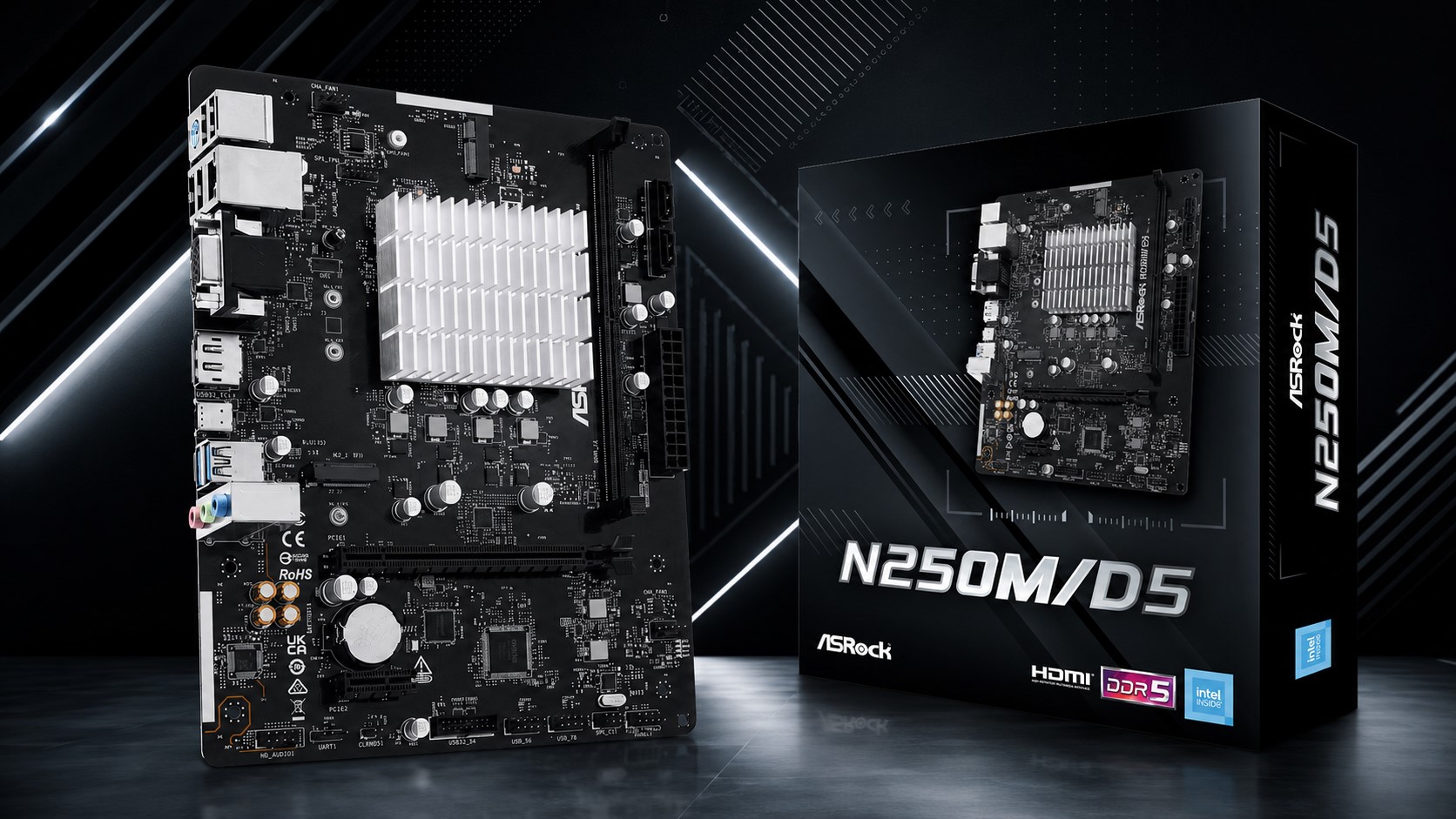

At the heart of this evolution is a new approach to memory and compute integration. Traditional architectures kept memory and compute apart, which made memory access a bottleneck, but today’s designs are blurring that line. A recent hardware update pairs a 24 GB HBM2e memory module with a custom AI accelerator, delivering up to 1.5x the throughput on mixed workloads compared to previous generations. The result? Faster inference times without proportional increases in power draw, a critical factor as data centers face mounting cooling costs.
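To make the efficiency argument concrete, here is a minimal sketch of how a throughput gain translates into performance per watt. Only the 1.5x figure comes from the announcement, and it is an "up to" number; the power ratios and the helper name `relative_perf_per_watt` are hypothetical, chosen purely for illustration, since no power figures have been published.

```python
def relative_perf_per_watt(throughput_ratio: float, power_ratio: float) -> float:
    """Return new-vs-old performance per watt as a ratio.

    Both arguments are new-generation values divided by old-generation values.
    """
    if throughput_ratio <= 0 or power_ratio <= 0:
        raise ValueError("ratios must be positive")
    return throughput_ratio / power_ratio


# 1.5x is the "up to" throughput figure quoted above.
THROUGHPUT_RATIO = 1.5

# Hypothetical power ratios (assumptions, not published numbers).
for power_ratio in (1.0, 1.2, 1.5):
    gain = relative_perf_per_watt(THROUGHPUT_RATIO, power_ratio)
    print(f"power x{power_ratio:.1f} -> perf/watt x{gain:.2f}")
```

Under these assumptions, the efficiency gain disappears entirely if power draw also rises by 1.5x, which is why "without proportional increases in power draw" is the part of the claim that matters.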

Key specs:

- 24 GB HBM2e memory
- Up to 1.5x throughput on mixed workloads compared to previous generations
- Custom AI accelerator for mixed compute tasks
- Targeted at cloud and edge deployments

The implications stretch beyond raw performance. For power users and enterprises, this hardware could unlock new levels of efficiency in training models or running large-scale simulations. But with every leap forward comes the risk of platform lock-in—something to watch as vendors double down on proprietary optimizations.

What’s still unclear is how widely these gains will translate to end-user applications. Early benchmarks show promise, but real-world adoption hinges on software stacks catching up. For now, the focus remains on data center efficiency—a trend that will likely shape the next wave of AI hardware.