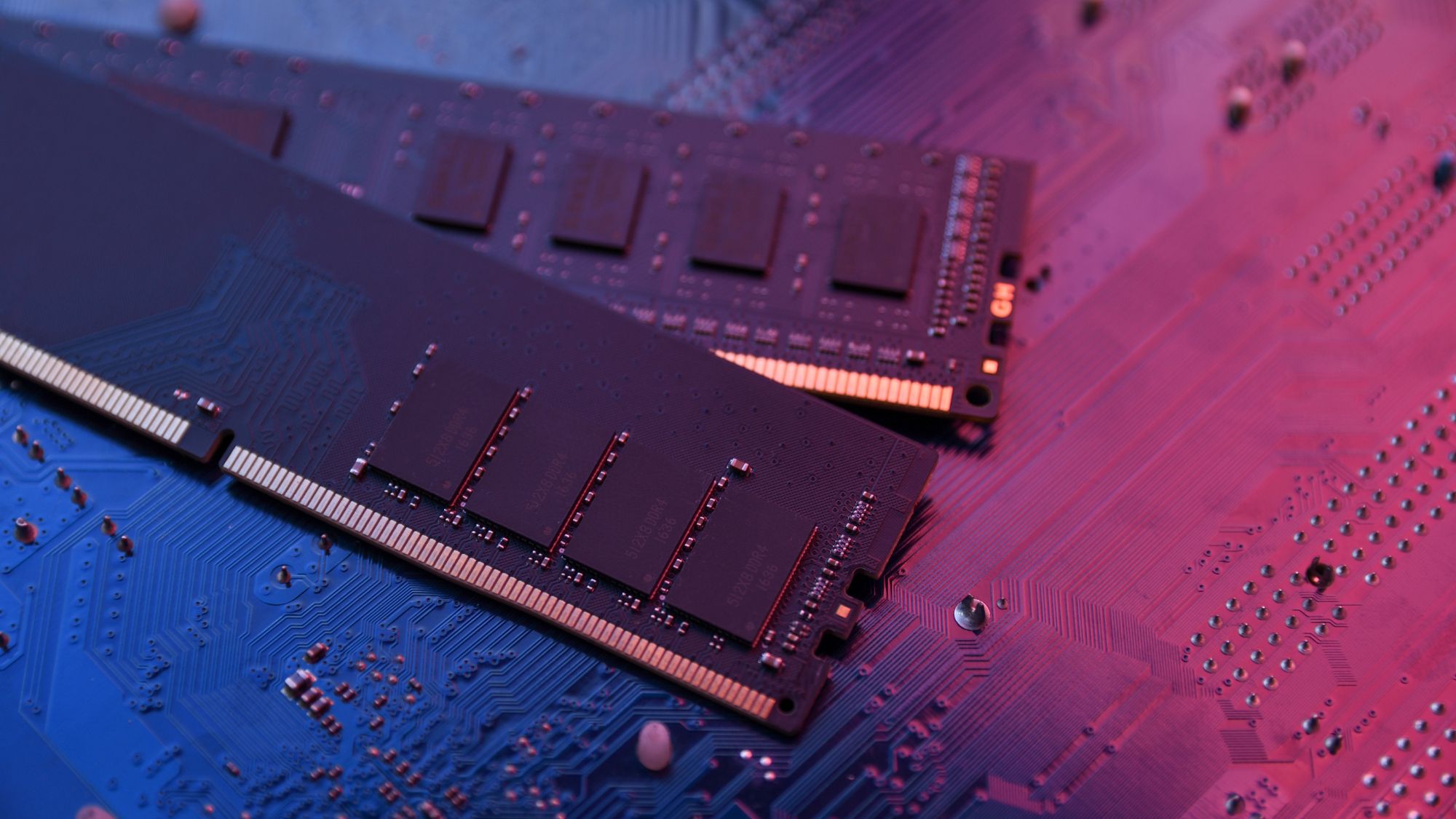

A desktop or laptop that suddenly feels faster—without a single component changed—is no longer science fiction.

It’s happening today, thanks to an evolution in how processors handle memory. The shift isn’t about raw speed; it’s about efficiency. By rethinking the path data takes between CPU and RAM, engineers have squeezed more real-world performance out of existing hardware, turning a modest specification bump into a noticeable difference in daily tasks.

This isn’t an upgrade cycle. It’s a quiet architectural reset, baked into chips already on shelves. And it signals what comes next: smaller gains, delivered smarter.

From Bandwidth to Balance

For years, the mantra was clear: more bandwidth equals faster performance. Faster memory clocks, wider data buses, larger caches—each step promised smoother multitasking and quicker load times. But the law of diminishing returns set in. Doubling bandwidth didn’t double speed; it shaved milliseconds off benchmarks while power draw crept up.
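To see why extra bandwidth stops paying off, it helps to split one memory access into a fixed latency plus a transfer time. The sketch below is a back-of-the-envelope model, not a measurement: the 80 ns latency, the 64-byte line, and the 25 GB/s and 50 GB/s bandwidth figures are illustrative assumptions, and `access_time_ns` is a hypothetical helper.

```python
def access_time_ns(latency_ns: float, line_bytes: int, bandwidth_gb_s: float) -> float:
    """Time to fetch one line: fixed latency plus transfer time.

    1 GB/s is 1 byte per nanosecond, so bytes / (GB/s) gives nanoseconds.
    """
    return latency_ns + line_bytes / bandwidth_gb_s


# Assumed, illustrative figures (not taken from any real chip).
LATENCY_NS = 80.0
LINE_BYTES = 64

baseline = access_time_ns(LATENCY_NS, LINE_BYTES, 25.0)
double_bandwidth = access_time_ns(LATENCY_NS, LINE_BYTES, 50.0)
lower_latency = access_time_ns(LATENCY_NS * 0.9, LINE_BYTES, 25.0)

bandwidth_speedup = baseline / double_bandwidth
latency_speedup = baseline / lower_latency

print(f"2x bandwidth:  {bandwidth_speedup:.3f}x per access")
print(f"-10% latency:  {latency_speedup:.3f}x per access")
```

Under these assumptions the transfer itself is only a few nanoseconds of the total, so doubling bandwidth barely moves the per-access time, while trimming latency affects the dominant term. Large streaming transfers behave differently; this model covers only small, latency-bound accesses.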

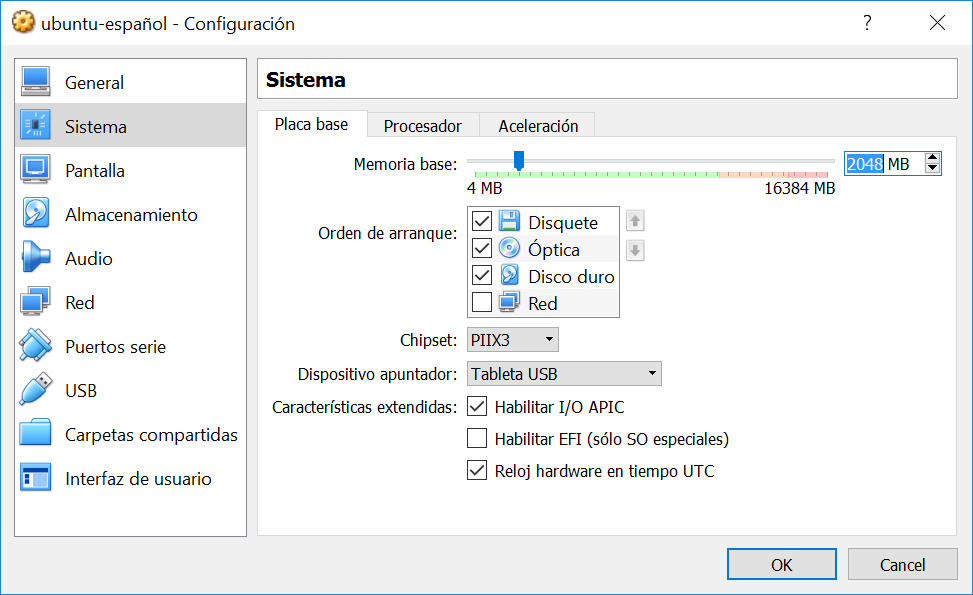

Enter a different approach: latency reduction through smarter memory management. Instead of throwing more pipes at the problem, engineers targeted how data is fetched and prefetched. A new memory controller, integrated directly on the CPU die, predicts which chunks of RAM will be needed next and stages them in advance. The result? Fewer stalls in the workloads that matter (spreadsheets, web browsing, light video editing), not just in synthetic benchmarks.
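The idea of predicting the next chunk and staging it early can be illustrated with a toy stride prefetcher. This is a minimal sketch of one classic prediction technique, not the controller's actual logic, which the article does not describe. The function name `count_stalls`, the 64-byte step, and the unbounded staging set are all assumptions made for illustration.

```python
def count_stalls(addresses, prefetch=True):
    """Count accesses that were not staged ahead of time.

    Toy stride predictor: once the same nonzero stride is seen twice
    in a row, the next address along that stride is staged in advance.
    """
    staged = set()       # addresses staged by the hypothetical controller
    last_addr = None
    last_stride = None
    stalls = 0

    for addr in addresses:
        if addr not in staged:
            stalls += 1              # data was not ready: the CPU waits
        staged.discard(addr)         # a staged entry is consumed on use

        if prefetch and last_addr is not None:
            stride = addr - last_addr
            if stride != 0 and stride == last_stride:
                staged.add(addr + stride)   # predict and stage the next one
            last_stride = stride
        last_addr = addr

    return stalls


# A sequential walk over ten 64-byte lines (assumed access pattern).
sequential = list(range(0, 640, 64))
print(count_stalls(sequential, prefetch=False))  # 10
print(count_stalls(sequential, prefetch=True))   # 3
```

On a regular pattern, the predictor needs three accesses to lock onto the stride, and every later access finds its data already staged. Irregular patterns benefit far less, which is one reason real-world gains vary by workload.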

What’s Different Now

- A 10% reduction in average memory access latency, measured across common workflows.

- Up to 8 GB of low-latency on-die buffer, used for frequently accessed data.

- Dynamic power gating that scales with workload intensity, not just clock speed.

The numbers are small by hardware standards. But they accumulate. A user opening a dozen browser tabs no longer sees a noticeable lag; the system feels snappier from the first click. The same applies to background tasks—indexing, file searches, even lightweight rendering—where latency once caused perceptible hiccups.
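How much a 10% latency cut helps depends on how much of a task is spent waiting on memory in the first place. The sketch below applies an Amdahl-style estimate. The 10% figure comes from the list above, while the memory-bound fractions are assumed values chosen for illustration, and `overall_speedup` is a hypothetical helper.

```python
def overall_speedup(memory_fraction: float, latency_reduction: float) -> float:
    """Amdahl-style estimate of whole-task speedup.

    memory_fraction: share of run time spent stalled on memory (0 to 1).
    latency_reduction: fractional cut in memory latency (0.10 for 10%).
    Only the memory-bound share of the run time shrinks.
    """
    new_time = (1.0 - memory_fraction) + memory_fraction * (1.0 - latency_reduction)
    return 1.0 / new_time


# Assumed memory-bound fractions; real workloads would need profiling.
for fraction in (0.1, 0.3, 0.6):
    speedup = overall_speedup(fraction, 0.10)
    print(f"{fraction:.0%} memory-bound: {speedup:.3f}x")
```

Under this model a task that spends 30% of its time waiting on memory finishes roughly 3% sooner, and a heavily memory-bound task gains about twice that. The per-task gain is modest, which fits the article's point that the benefit comes from many small delays shrinking at once.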

Why It Matters

This isn’t about breaking records. It’s about closing the gap between what hardware can do and what users notice. The tradeoff? Some peak theoretical performance is sacrificed for sustained efficiency. But in a world where most PCs sit idle 90% of the time, that trade makes sense.

For power users, the shift means less need to chase the latest gear. A mid-range system from two generations ago can now handle modern workloads more smoothly than ever, without touching the RAM or storage. For OEMs, it’s a cost-saving move: no need for faster modules or higher-TDP chips.

What to Watch

Availability is immediate on select platforms, with broader adoption rolling out in Q4. Pricing remains unchanged—this is an internal tweak, not a new SKU. But keep an eye on how OS updates leverage the new memory controller; future patches may unlock even more efficiency.