Security operations centers are drowning in alerts. On average, enterprise SOCs process **10,000 security signals daily**, each demanding **20 to 40 minutes** of manual review. At that rate, a full review would consume roughly 3,300 to 6,700 analyst-hours every day, so even fully staffed teams give proper scrutiny to fewer than one in five alerts, and the threats behind the rest go uninvestigated. The backlog isn’t just a productivity crisis; it’s a strategic one. Attackers now achieve **breakout times as fast as 51 seconds**, leveraging identity abuse and AI-driven evasion tactics that outpace traditional human response cycles.

Enter the automated SOC. Tier-1 analyst tasks (triage, enrichment, and escalation) are being handed to supervised AI agents. The goal? To free human expertise for high-stakes decisions while processing alerts at machine speed. Early adopters report **over 98% accuracy** in AI-driven triage, cutting manual workloads by **40+ hours per week**. Yet beneath the efficiency gains lies a critical risk: according to Gartner, **more than 40% of agentic AI projects will be canceled by the end of 2027**. The reasons it cites include unclear business value and inadequate risk controls, which is what governance boundaries are meant to address.

Why is this happening?

The problem starts with the legacy SOC architecture. Most environments are patchworks of siloed tools, each generating its own, often conflicting, alerts and none sharing context with the others. The result? Analyst burnout so severe that **senior SOC professionals are leaving the field in record numbers**. Worse, the talent pipeline can’t keep up. And when AI agents take over triage, they don’t just reduce workloads; they demand **new rules**. Without those rules, even the most advanced tools risk becoming **automated chaos agents**, misclassifying threats or overreacting to false positives.

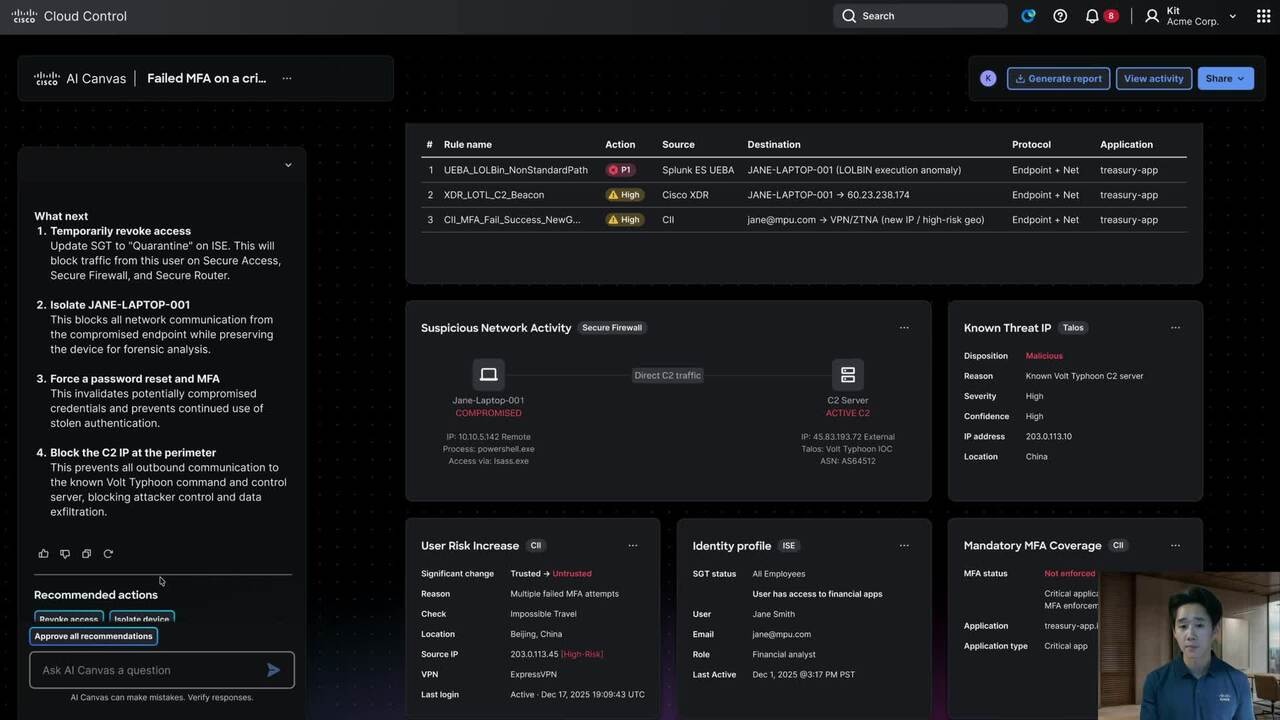

A better approach: bounded autonomy

The most effective SOCs aren’t fully autonomous—they’re **bounded**. AI handles triage and enrichment, but critical containment decisions remain human-approved. This hybrid model leverages **graph-based detection**, where AI traces attack paths across interconnected events rather than processing alerts in isolation. A suspicious login, for example, gains context when the system recognizes it’s two hops from a domain controller—a pattern that would be invisible in traditional SIEMs.
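To make the "two hops from a domain controller" idea concrete, here is a minimal sketch of hop-distance enrichment using a breadth-first search. The graph contents, host names, the `max_hops` cutoff, and the two-hop elevation rule are all hypothetical; a real deployment would build the graph from identity and network telemetry and would likely use a graph database rather than an in-memory dictionary.

```python
from collections import deque

def hops_to_target(graph, start, targets, max_hops=3):
    """Return the shortest hop count from start to any target, or None."""
    if start in targets:
        return 0
    seen = {start}
    queue = deque([(start, 0)])
    while queue:
        node, dist = queue.popleft()
        if dist == max_hops:
            continue  # do not expand beyond the search radius
        for neighbor in graph.get(node, ()):
            if neighbor in seen:
                continue
            if neighbor in targets:
                return dist + 1
            seen.add(neighbor)
            queue.append((neighbor, dist + 1))
    return None

# Hypothetical access graph: an edge means "can authenticate to or reach".
ACCESS_GRAPH = {
    "laptop-417": ["file-server-02"],
    "file-server-02": ["dc-01"],
    "kiosk-09": ["print-server-01"],
}
DOMAIN_CONTROLLERS = {"dc-01"}

def enrich_login_alert(host):
    """Attach graph context to a suspicious-login alert (illustrative only)."""
    hops = hops_to_target(ACCESS_GRAPH, host, DOMAIN_CONTROLLERS)
    return {
        "host": host,
        "hops_to_dc": hops,
        # Assumed rule: anything within two hops of a DC is elevated.
        "elevated": hops is not None and hops <= 2,
    }
```

With this sample graph, a login alert on `laptop-417` is marked as elevated because the host can reach a domain controller through one intermediate server, while the same alert on `kiosk-09` carries no such context.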

Speed matters, but accuracy matters more. Deployments like those at **ServiceNow**—which spent **$12 billion on security acquisitions in 2025 alone**—show how bounded autonomy scales. Ivanti, too, is extending the model to IT service desks, compressing a **three-year kernel-hardening roadmap into 18 months** after nation-state attacks exposed vulnerabilities faster than patches could be deployed. The goal? **24/7 coverage without proportional headcount growth**—a necessity in financial services, healthcare, and government sectors.

To succeed, bounded autonomy requires **three non-negotiable boundaries**:

- Autonomous action limits: Define which alert categories AI can act on without human review.

- Human-required thresholds: Specify incidents that demand oversight, regardless of confidence scores.

- Escalation protocols: Establish clear paths when AI certainty falls below acceptable levels.

High-severity incidents—ransomware, lateral movement, or credential theft—**must** trigger human approval before containment. The stakes are too high for automation to operate in a vacuum.
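The three boundaries can be expressed as a small routing policy. The sketch below is one possible encoding, not a reference implementation: the category names, the `MIN_CONFIDENCE` value of 0.90, and the `Alert` type are assumptions chosen for illustration, and each organization would set its own.

```python
from dataclasses import dataclass

# Hypothetical policy values; tune these to your own risk tolerance.
AUTONOMOUS_CATEGORIES = {"phishing", "password_reset", "known_bad_indicator"}
HUMAN_REQUIRED_CATEGORIES = {"ransomware", "lateral_movement", "credential_theft"}
MIN_CONFIDENCE = 0.90

@dataclass(frozen=True)
class Alert:
    category: str
    confidence: float  # model confidence in its own verdict, 0.0 to 1.0

def route(alert):
    """Decide who acts on an alert: the agent, or a human."""
    # Boundary 2: human-required thresholds win regardless of confidence.
    if alert.category in HUMAN_REQUIRED_CATEGORIES:
        return "human_approval"
    # Boundary 1: the agent may act only on explicitly allowed categories.
    if alert.category not in AUTONOMOUS_CATEGORIES:
        return "escalate"
    # Boundary 3: low certainty follows the escalation path.
    if alert.confidence < MIN_CONFIDENCE:
        return "escalate"
    return "auto_act"
```

Checking the human-required list first is deliberate: a ransomware alert with 99% confidence still returns `"human_approval"`, and any category missing from both lists escalates by default rather than being acted on.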

Security leaders should begin with **low-risk workflows**, those where failure is recoverable. Three processes account for **60% of analyst time** but yield minimal investigative value:

- Phishing triage (missed escalations can be caught in secondary review).

- Password reset automation (limited blast radius).

- Known-bad indicator matching (deterministic logic).

Automate these first, then validate AI decisions against human benchmarks for **30 days**. The goal isn’t to replace analysts—it’s to **redefine their roles**. In a world where adversaries weaponize AI at machine speed, **human judgment must focus on edge cases, not triage**. The alternative? A SOC that’s faster at failing than it is at stopping threats.
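The 30-day validation step can be sketched as a comparison of AI verdicts against analyst verdicts on the same alerts. The verdict labels, the 95% agreement bar, and the zero-tolerance rule for missed escalations are assumptions made for this example; the article does not prescribe specific pass criteria.

```python
def agreement_report(decisions, min_agreement=0.95):
    """Compare (ai_verdict, human_verdict) pairs from the validation window.

    Verdicts are assumed to be the strings "benign" or "malicious".
    """
    if not decisions:
        raise ValueError("no decisions to compare")
    total = len(decisions)
    agree = sum(1 for ai, human in decisions if ai == human)
    # A missed escalation: the agent closed what an analyst flagged.
    missed = sum(
        1 for ai, human in decisions
        if ai == "benign" and human == "malicious"
    )
    rate = agree / total
    return {
        "total": total,
        "agreement_rate": rate,
        "missed_escalations": missed,
        # Assumed pass rule: high agreement and no missed escalations.
        "passes": rate >= min_agreement and missed == 0,
    }

# Illustrative sample, not real data.
sample = (
    [("benign", "benign")] * 18
    + [("malicious", "malicious")]
    + [("benign", "malicious")]
)
report = agreement_report(sample)
```

Tracking missed escalations separately from the overall agreement rate matters because a high headline accuracy can hide the one error type that is costly: in the sample above the agent agrees with analysts on 19 of 20 alerts yet still fails validation.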