Samsung has unveiled its next-generation HBM4E memory, delivering up to 4 TB/s of bandwidth per stack at a per-pin data rate of 16 Gbps. This marks a significant leap forward in memory performance, but the question remains: will it be enough to justify the price tag for most IT teams?

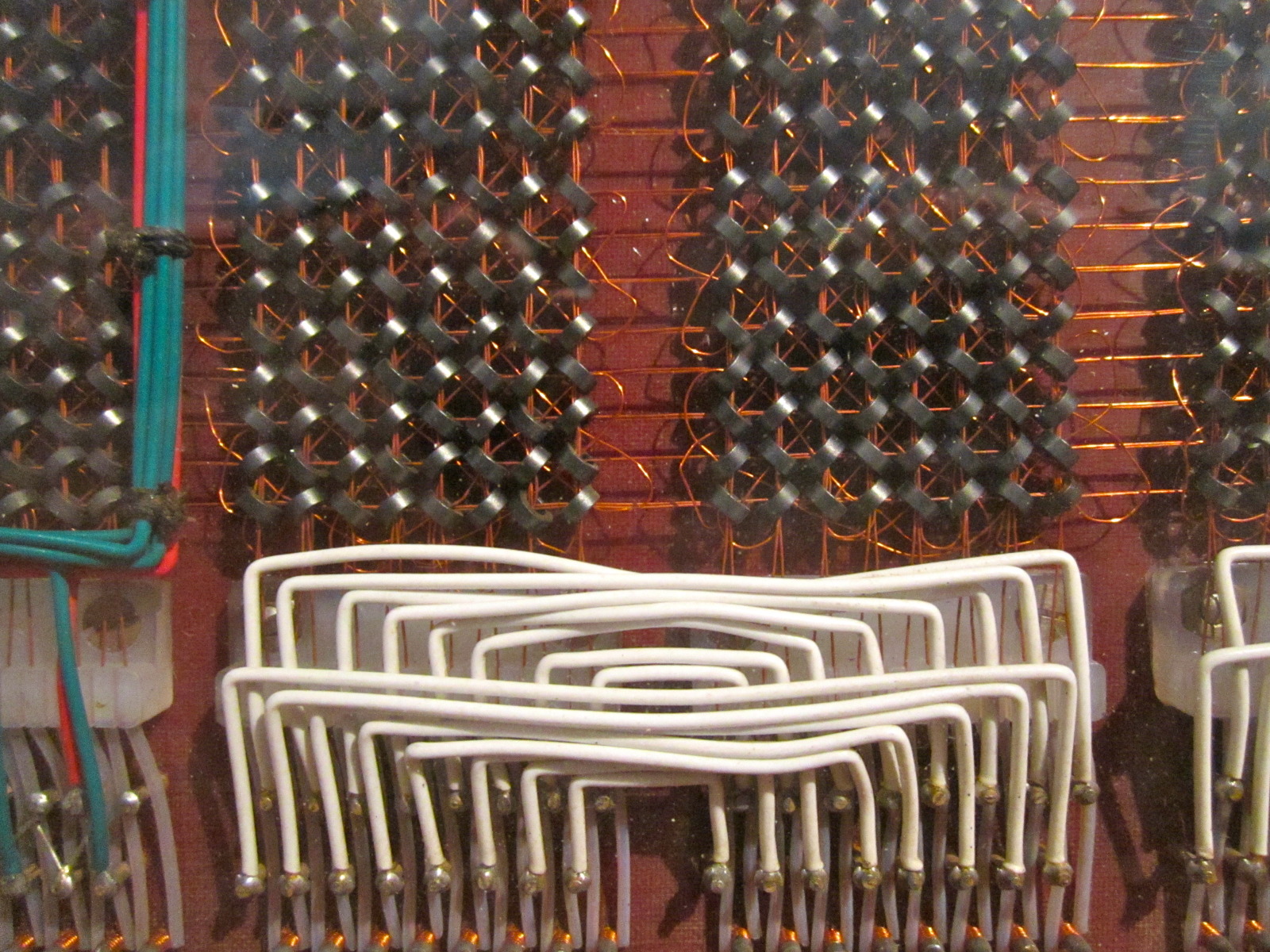

Traditionally, high-bandwidth memory (HBM) has been reserved for specialized workloads like AI training and graphics rendering. HBM4E could shift that dynamic, offering a performance boost that makes it viable for more mainstream applications, provided the cost doesn’t become prohibitive.

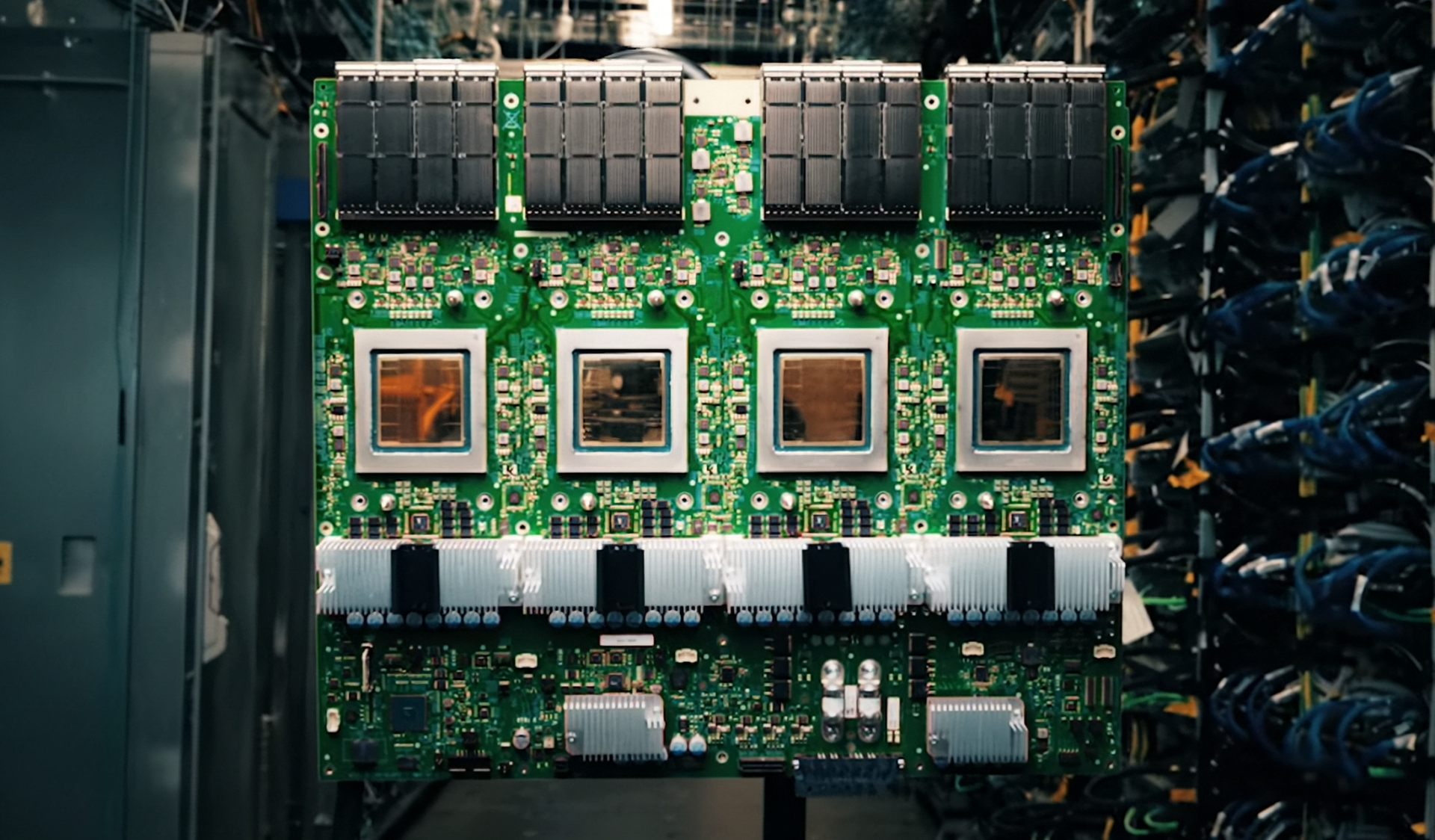

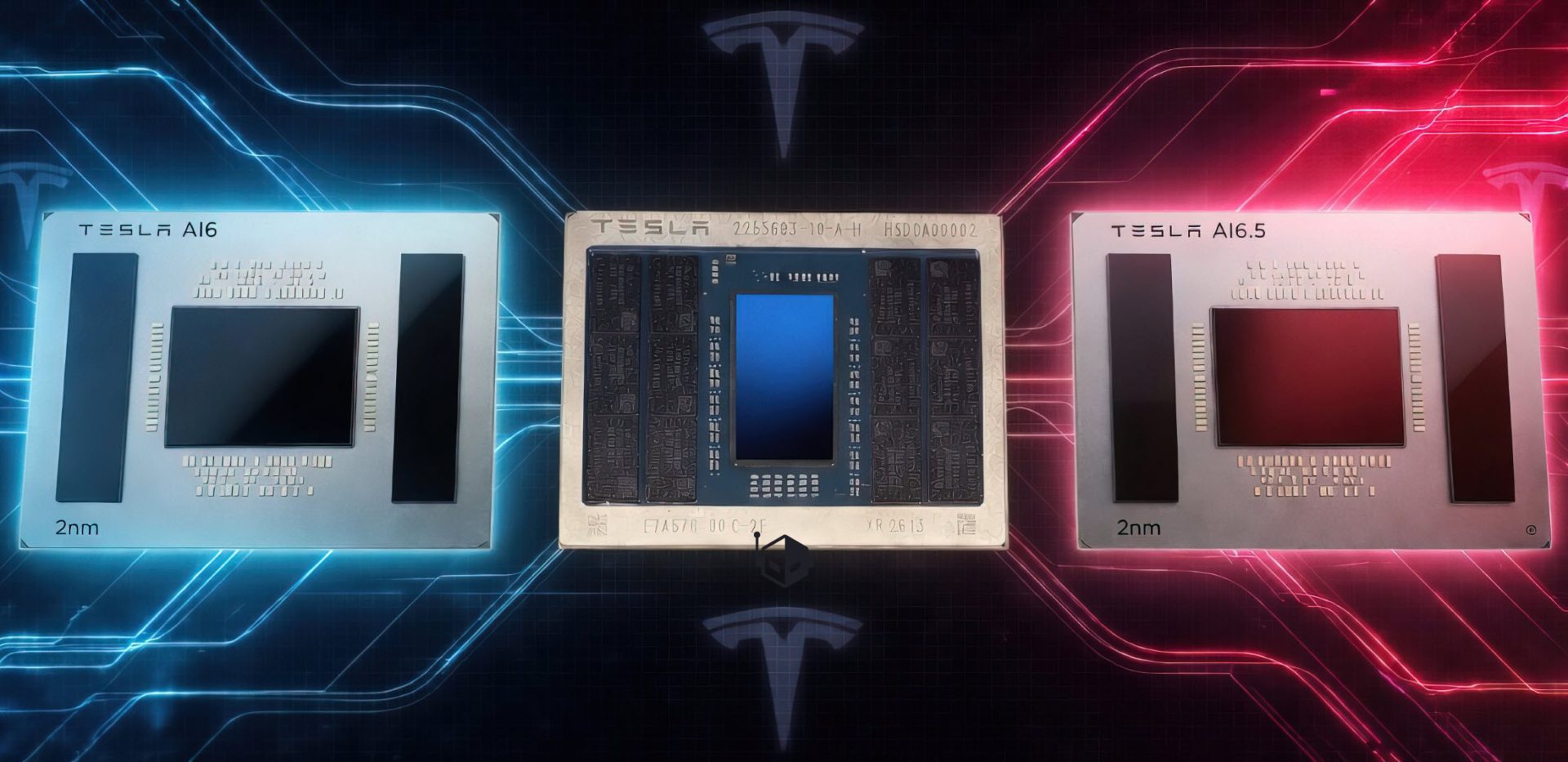

The new memory stack is built from vertically stacked DRAM dies (HBM is a DRAM technology, not NAND flash), allowing for capacities up to 48 GB per stack. This is a substantial increase over earlier generations such as HBM2E, which typically maxed out around 16 GB. The higher capacity, combined with the increased bandwidth, could make HBM4E a compelling option for data centers and high-performance computing environments where memory bottlenecks are a persistent issue.
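The headline figures are related by simple arithmetic: peak bandwidth is the per-pin data rate multiplied by the interface width. The sketch below is a rough back-of-envelope check, not a vendor formula. It assumes a 2048-bit interface per stack, as defined for HBM4; the announcement figures quoted here do not state the width. The function and constant names are illustrative only.

```python
def stack_bandwidth_gb_per_s(pin_rate_gbps: float, interface_width_bits: int) -> float:
    """Peak bandwidth of one stack in GB/s (decimal units).

    Each pin carries pin_rate_gbps gigabits per second; dividing the
    total by 8 converts bits to bytes.
    """
    return pin_rate_gbps * interface_width_bits / 8


# Assumption: 2048-bit interface per stack (the HBM4 width); not stated in the article.
INTERFACE_WIDTH_BITS = 2048
PIN_RATE_GBPS = 16.0  # per-pin data rate quoted in the article
CAPACITY_GB = 48.0    # per-stack capacity quoted in the article

bandwidth_gb_s = stack_bandwidth_gb_per_s(PIN_RATE_GBPS, INTERFACE_WIDTH_BITS)
print(f"Peak bandwidth: {bandwidth_gb_s / 1000:.3f} TB/s")  # 4.096 TB/s

# Time to read the whole stack once at peak rate, in milliseconds.
sweep_ms = CAPACITY_GB / bandwidth_gb_s * 1000
print(f"Full-capacity read: {sweep_ms:.2f} ms")  # 11.72 ms
```

Under that assumption, 16 Gbps per pin works out to roughly 4.1 TB/s, which matches the quoted "up to 4 TB/s". Real workloads will see less than this theoretical peak.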

Performance vs. Practicality

The 4 TB/s bandwidth per stack is a notable achievement, but it comes with trade-offs. The higher performance requires more power and cooling, which could increase operational costs for data centers. Additionally, the cost per GB is likely to be significantly higher than that of traditional DDR memory, making it a niche product for now.

- Up to 4 TB/s bandwidth per stack
- 16 Gbps per-pin data rate
- 48 GB capacity per stack
- Built from vertically stacked DRAM dies

That’s the upside. Here’s the catch: while the performance is impressive, the real-world impact will depend on how quickly software and hardware ecosystems can adapt to this new memory standard. For now, HBM4E is likely to remain a tool for specialized applications, but it has clear potential to reshape high-performance computing.

The timeline for widespread adoption is unclear, but Samsung’s push into higher bandwidth and capacity suggests it sees long-term value in this technology. Whether that value translates to cost-effective solutions for IT teams remains an open question. One thing is certain: the memory landscape just got a lot more complex.