NVIDIA is redefining the landscape of AI hardware by prioritizing cost efficiency while maintaining high performance. The company’s latest GPUs are designed to accelerate large-scale AI training, delivering faster token production at a significantly lower cost than traditional setups. This strategic move is part of NVIDIA’s broader initiative to democratize AI development, enabling smaller teams and organizations to compete with industry giants that have historically dominated the field due to vast computational resources.

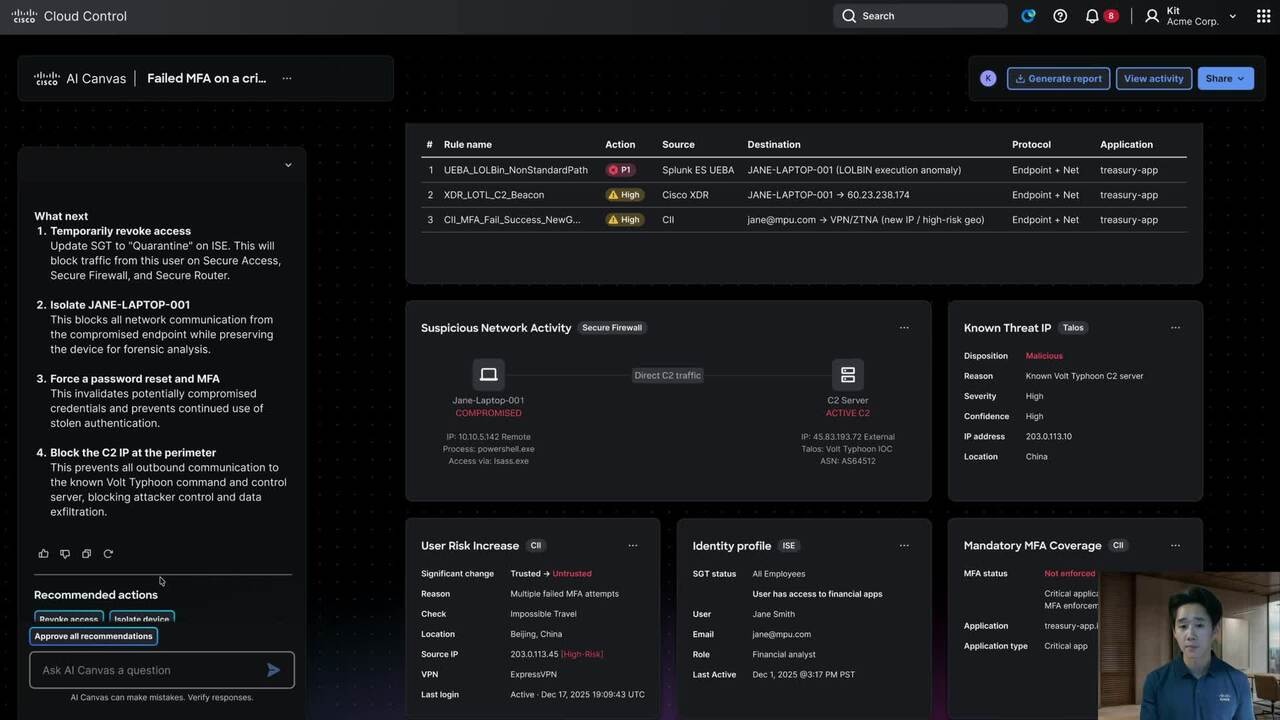

Seamless Integration Across Hardware and Software

The new hardware is designed to work with updated software tools, forming a unified ecosystem that simplifies AI workflows. The GPUs offer higher memory bandwidth and compute density, which translate into faster response times and lower latency during model deployment. Applications such as recommendation engines or chatbots could run more smoothly without requiring investment in large-scale data centers.

Performance Without Compromise

NVIDIA claims the hardware produces tokens at a lower cost than competing solutions while matching their performance. Exact benchmarks are still under review, but the company’s stated focus is on reducing computational overhead without giving up throughput. This approach could be particularly advantageous for smaller businesses or research teams seeking to scale their models without significant capital expenditure.
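Until benchmarks are published, teams can still frame the cost-per-token comparison themselves. The sketch below is one simple way to do that, assuming cost is dominated by an hourly hardware price and a sustained throughput. The function name `cost_per_million_tokens` and every number in it are hypothetical placeholders for illustration, not NVIDIA figures or measured results.

```python
def cost_per_million_tokens(hourly_cost_usd: float, tokens_per_second: float) -> float:
    """Return the USD cost of generating one million tokens.

    Assumes cost is driven only by an hourly hardware price and a
    sustained throughput; power, networking, and staffing are ignored.
    """
    if hourly_cost_usd < 0:
        raise ValueError("hourly_cost_usd must be non-negative")
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    tokens_per_hour = tokens_per_second * 3600
    return hourly_cost_usd / tokens_per_hour * 1_000_000


# Hypothetical placeholder inputs, NOT vendor benchmarks.
baseline = cost_per_million_tokens(hourly_cost_usd=4.0, tokens_per_second=2000.0)
candidate = cost_per_million_tokens(hourly_cost_usd=6.0, tokens_per_second=5000.0)

print(f"baseline:  ${baseline:.3f} per million tokens")
print(f"candidate: ${candidate:.3f} per million tokens")
```

With these placeholder inputs the pricier system still comes out cheaper per token because its throughput rises faster than its hourly price. That ratio is the one to check once real benchmark numbers are available.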

Long-Term Ecosystem Viability

The success of NVIDIA’s new hardware hinges on its ability to balance cost savings with ecosystem flexibility. While the hardware itself is powerful, its true value lies in how seamlessly it integrates with existing workflows and whether alternatives can keep pace. Developers will need to weigh short-term gains against long-term adaptability when evaluating this new stack.

NVIDIA’s push to make advanced AI more cost-effective could reshape the industry, but real-world performance metrics and adoption over time will determine its lasting impact. The company’s ability to deliver both affordability and ecosystem integration will be critical in achieving its goal of making AI accessible to a broader range of users.